## Screenshot: Label Studio Human Preference Annotation Interface

### Overview

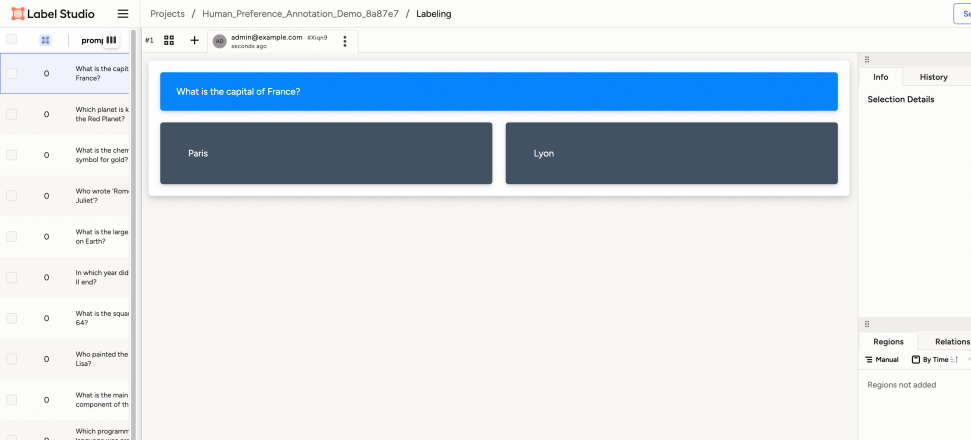

This image is a screenshot of a web-based data labeling application called "Label Studio." The interface is displaying a task for "Human Preference Annotation," where a user is presented with a question and two possible answers to choose from. The layout is divided into several functional panels: a header, a left sidebar with a task list, a central main content area for the active task, and a right sidebar for details and history.

### Components

The interface is structured into the following distinct regions:

1. **Header (Top Bar):**

* **Left:** Label Studio logo (red icon with text "Label Studio") and a hamburger menu icon (three horizontal lines).

* **Center:** Breadcrumb navigation path: `Projects / Human_Preference_Annotation_Demo_8a87e7 / Labeling`.

* **Right:** A user indicator showing "admin@example.com" with a timestamp "XX:XX:XX seconds ago," a vertical ellipsis (three dots) menu icon, and a partially visible blue button (likely "Submit" or "Save").

2. **Left Sidebar (Task List):**

* A vertical list of tasks, each represented by a row containing a checkbox, a number, and a truncated question text.

* The first task (numbered "1") is highlighted with a light blue background, indicating it is the currently active task.

* **Visible Task List Items (from top to bottom):**

    * `1` - `What is the capital of France?` (Active/Selected)

    * `2` - `Which planet is known as the Red Planet?`

    * `3` - `What is the chemical symbol for gold?`

    * `4` - `Who wrote Romeo and Juliet?`

    * `5` - `What is the largest ocean on Earth?`

    * `6` - `In which year did World War II end?`

    * `7` - `What is the square root of 64?`

    * `8` - `Who painted the Mona Lisa?`

    * `9` - `What is the main component of the Earth's atmosphere?`

    * `10` - `Which programming language is...` (Text is cut off)

3. **Main Content Area (Task Workspace):**

* This is the central panel where the active task is displayed.

* **Question Panel:** A large blue rectangle at the top containing the full question text: `What is the capital of France?`

* **Answer Options:** Two large, dark grey rectangular buttons below the question, presented side-by-side.

    * **Left Button:** Contains the text `Paris`.

    * **Right Button:** Contains the text `Lyon`.

4. **Right Sidebar (Details Panel):**

* Contains two tabs at the top: `Info` (active) and `History`.

* Below the tabs is a section titled `Selection Details`.

* Further down, there are two more tabs: `Regions` (active) and `Relations`.

* Under the `Regions` tab, there are two sub-options: `Manual` and `By Time` (with a dropdown arrow).

* At the bottom of this panel, the text `Regions not added` is displayed, indicating that no regions have been recorded for this task yet.

5. **Footer (Bottom Bar):**

* A thin, empty grey bar spans the bottom of the entire application window.

### Detailed Analysis

* **Task Flow:** The interface presents a clear workflow. The user selects a task from the list on the left. The central panel then displays that task's content (a question and two candidate answers). Clicking either "Paris" or "Lyon" would constitute the annotation, or label, for that data point.

* **UI State:** The application is in a "labeling" state, as indicated by the breadcrumb. The active task is #1. The right sidebar shows no existing annotations ("Regions not added") for the current task.

* **Text Transcription:** All visible text in the interface is in English. The text is presented clearly in a sans-serif font. Some text in the left sidebar is truncated with an ellipsis (`...`) due to space constraints.
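The task flow above implies that each task carries a question plus two candidate answers. A minimal sketch of what such a task and a matching Label Studio labeling configuration might look like follows. The field names `prompt`, `answer1`, and `answer2`, the helper `make_task`, and the configuration itself are assumptions for illustration; the screenshot does not reveal the project's actual schema or configuration. Only task 1 is included, because it is the only task whose answers are visible.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical labeling config using Label Studio's Pairwise tag.
# The real project's config is not visible in the screenshot.
LABEL_CONFIG = """
<View>
  <Header value="$prompt"/>
  <Pairwise name="preference" toName="answer1,answer2"/>
  <Text name="answer1" value="$answer1"/>
  <Text name="answer2" value="$answer2"/>
</View>
"""


def make_task(prompt: str, answer1: str, answer2: str) -> dict:
    """Wrap one question and its two candidate answers as an importable task."""
    return {"data": {"prompt": prompt, "answer1": answer1, "answer2": answer2}}


def config_fields(config_xml: str) -> set:
    """Collect the task-data fields ($name) that the config refers to."""
    root = ET.fromstring(config_xml)
    return {
        el.get("value")[1:]
        for el in root.iter()
        if el.get("value", "").startswith("$")
    }


# Task 1 as shown in the screenshot.
tasks = [make_task("What is the capital of France?", "Paris", "Lyon")]

# Check that every field the config references exists in each task.
missing = [config_fields(LABEL_CONFIG) - set(t["data"]) for t in tasks]

payload = json.dumps(tasks, indent=2)
print(payload)
```

Checking the configuration's `$field` references against the task data before import is a cheap way to catch a mismatch that would otherwise show up as an empty question or answer panel.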

### Key Observations

* **Purpose-Built Interface:** The layout is highly specialized for sequential data labeling, with a clear separation between task selection (left), task execution (center), and metadata/annotation details (right).

* **Minimalist Task Design:** The core task is extremely simple: a single question with two mutually exclusive answers. This suggests the demo project is designed for basic preference or knowledge testing.

* **No Annotations Present:** The "Regions not added" message in the right sidebar suggests that no label has been recorded yet for the active task (#1).

* **User Context:** The user is logged in as `admin@example.com`, suggesting this might be a setup or demonstration view.

### Interpretation

This screenshot captures a moment in a human-in-the-loop machine learning or data curation pipeline. The "Human_Preference_Annotation_Demo" project name implies the goal is to collect human judgments on pairs of answers (like "Paris" vs. "Lyon") to train or evaluate a model. The interface is designed for efficiency, allowing an annotator to quickly move through a list of simple questions.

The structure reveals a common pattern in annotation tools: a master list of items, a focused workspace for the current item, and a contextual panel for tools and history. The absence of any added regions suggests that annotation of this specific item has not begun. The tool appears ready for the user to click one of the two answer buttons, which would likely register as a label; whether it then advances automatically to the next task cannot be determined from the screenshot.
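To illustrate how a single judgment of this kind could feed model training or evaluation, the sketch below turns one left/right selection into a chosen/rejected pair. The `PreferencePair` type, the `to_preference_pair` helper, and the `"left"`/`"right"` encoding are assumptions made for this example, not the project's actual export format. The selection used here is also hypothetical, since the screenshot shows no answer selected.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PreferencePair:
    """One human judgment: the preferred answer and the one it beat."""
    prompt: str
    chosen: str
    rejected: str


def to_preference_pair(prompt: str, left: str, right: str, selected: str) -> PreferencePair:
    """Map a 'left' or 'right' selection onto a chosen/rejected pair."""
    if selected == "left":
        return PreferencePair(prompt, chosen=left, rejected=right)
    if selected == "right":
        return PreferencePair(prompt, chosen=right, rejected=left)
    raise ValueError(f"selected must be 'left' or 'right', got {selected!r}")


# Hypothetical selection: the annotator clicks the left button ("Paris").
pair = to_preference_pair(
    prompt="What is the capital of France?",
    left="Paris",
    right="Lyon",
    selected="left",
)
print(pair)
```

Rejecting any selection other than `"left"` or `"right"` keeps skipped or malformed annotations from silently producing a pair.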