## Diagram: High-Performance Computing System Architecture

### Overview

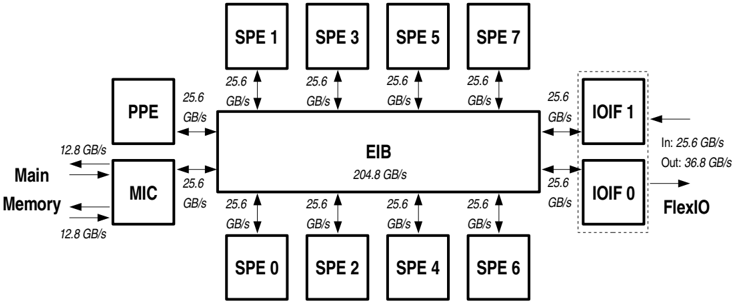

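The diagram shows the on-chip architecture of a high-performance processor: a set of processing elements, a memory controller, and I/O interfaces, all attached to a central interconnect bus. The component names (EIB, PPE, SPE, MIC, IOIF, FlexIO) match the Cell Broadband Engine (Cell BE). Each link is annotated with its bandwidth in GB/s, which makes the data-flow paths and their capacities easy to compare.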

### Components

- **Central Element**: EIB (Element Interconnect Bus) with a total bandwidth of **204.8 GB/s**.

- **Processing Units**:
  - **PPE** (Power Processing Element), connected to the EIB at **25.6 GB/s**.
  - 8 **SPEs** (Synergistic Processing Elements), labeled SPE 0–7, each connected to the EIB at **25.6 GB/s**.

- **Memory Subsystem**:
  - **MIC** (Memory Interface Controller), connected to the EIB at **25.6 GB/s**.
  - **Main Memory**, connected to the MIC at **12.8 GB/s** (bidirectional).

- **I/O Interfaces**:
  - **IOIF 0** and **IOIF 1** (I/O Interfaces), each connected to the EIB at **25.6 GB/s**.
  - **FlexIO**, connected to IOIF 0, with **36.8 GB/s** output and **25.6 GB/s** input.
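The component list can be recorded as a small link table so that later bandwidth arguments can be checked mechanically. The sketch below is a hypothetical encoding (the names `Link`, `LINKS`, `neighbors`, `rate`, and `eib_ports` are illustrative, not part of any Cell toolchain). Bandwidths are stored as integer MB/s to avoid floating-point rounding. The Main Memory link is assumed to carry 12.8 GB/s in each direction, which is one reading of the diagram's "bidirectional" label.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    a: str
    b: str
    a_to_b: int  # peak MB/s from a to b
    b_to_a: int  # peak MB/s from b to a


PORT = 25_600  # every EIB element's link: 25.6 GB/s, per the diagram

LINKS = (
    [Link(f"SPE {i}", "EIB", PORT, PORT) for i in range(8)]
    + [
        Link("PPE", "EIB", PORT, PORT),
        Link("MIC", "EIB", PORT, PORT),
        Link("IOIF 0", "EIB", PORT, PORT),
        Link("IOIF 1", "EIB", PORT, PORT),
        # Assumption: "bidirectional" read as 12.8 GB/s in each direction.
        Link("Main Memory", "MIC", 12_800, 12_800),
        # IOIF 0 -> FlexIO is the chip's output (36.8 GB/s); the reverse is input (25.6 GB/s).
        Link("IOIF 0", "FlexIO", 36_800, 25_600),
    ]
)


def neighbors(node: str) -> list[str]:
    """Return every component with a direct link to `node`, in table order."""
    out = []
    for link in LINKS:
        if link.a == node:
            out.append(link.b)
        elif link.b == node:
            out.append(link.a)
    return out


def rate(src: str, dst: str) -> int:
    """Peak MB/s from src to dst over a direct link."""
    for link in LINKS:
        if (link.a, link.b) == (src, dst):
            return link.a_to_b
        if (link.b, link.a) == (src, dst):
            return link.b_to_a
    raise KeyError(f"no direct link {src} -> {dst}")


def eib_ports() -> list[str]:
    """All elements attached directly to the EIB."""
    return sorted(neighbors("EIB"))


if __name__ == "__main__":
    print(len(eib_ports()))          # 12
    print(neighbors("MIC"))          # ['EIB', 'Main Memory']
    print(rate("IOIF 0", "FlexIO"))  # 36800
```

Keeping direction explicit in each `Link` matters only for FlexIO here, but it lets asymmetric links sit in the same table as symmetric ones.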

### Detailed Analysis

- **SPEs**: All eight SPEs (SPE 0–7) attach to the EIB at the same **25.6 GB/s**, so the interconnect favors no individual SPE.

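- **EIB Bandwidth**: The total EIB bandwidth (**204.8 GB/s**) equals the sum of the eight SPE links (8 × 25.6 GB/s). That match does not rule out contention, because the PPE, MIC, IOIF 0, and IOIF 1 share the same bus. Together, all twelve attached elements could request 12 × 25.6 = **307.2 GB/s**, which is 1.5 times what the EIB can carry.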

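- **Memory Hierarchy**:
  - The MIC attaches to the EIB at **25.6 GB/s**, the same rate as every other EIB element, including the PPE. The equal PPE and MIC figures therefore more likely reflect the uniform EIB port width than a deliberate balance of roles.
  - Main Memory's **12.8 GB/s** link to the MIC is half the MIC's own **25.6 GB/s** EIB port and 1/16 of the total EIB bandwidth. This makes it the narrowest link in the diagram and a likely bottleneck for memory-bound work.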

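- **I/O Paths**:
  - IOIF 0 and IOIF 1 each connect to the EIB at **25.6 GB/s**, yet FlexIO's output (**36.8 GB/s**) exceeds what a single IOIF port can supply. The extra capacity would be usable only if outbound traffic were aggregated across both IOIFs (51.2 GB/s combined), which the diagram does not show. Otherwise it simply reflects headroom in the external link.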
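The comparisons in this analysis reduce to a few ratios. A minimal sketch using only the figures printed on the diagram, stored as integer MB/s so that the ratios come out exact. The constant names are illustrative. The two-IOIF comparison assumes that outbound FlexIO traffic could be spread across both interfaces, which is an assumption rather than something the diagram states.

```python
# Figures from the diagram, in MB/s (integers keep the ratios exact).
EIB_PEAK = 204_800   # total EIB bandwidth
PORT = 25_600        # per-element EIB link (SPEs, PPE, MIC, IOIF 0, IOIF 1)
MEM_LINK = 12_800    # Main Memory <-> MIC
FLEXIO_OUT = 36_800  # FlexIO output

N_SPE = 8
N_ELEMENTS = N_SPE + 4  # plus PPE, MIC, IOIF 0, IOIF 1

# 1. The eight SPE links alone add up to the EIB total...
spe_sum = N_SPE * PORT
# ...but all twelve elements together could request more than the EIB carries.
oversubscription = N_ELEMENTS * PORT / EIB_PEAK

# 2. The memory link relative to the MIC's EIB port and to the whole EIB.
mem_vs_port = MEM_LINK / PORT
mem_vs_eib = MEM_LINK / EIB_PEAK

# 3. FlexIO output relative to one IOIF port, and (assumption) to both IOIFs combined.
flexio_vs_one_ioif = FLEXIO_OUT / PORT
flexio_vs_two_ioifs = FLEXIO_OUT / (2 * PORT)

print(spe_sum == EIB_PEAK)         # True
print(oversubscription)            # 1.5
print(mem_vs_port, mem_vs_eib)     # 0.5 0.0625
print(flexio_vs_one_ioif)          # 1.4375
print(flexio_vs_two_ioifs)         # 0.71875
```

A ratio above 1 means the source side can offer more traffic than the target can absorb. Here that is true for the EIB as a whole (1.5) and for FlexIO output fed by a single IOIF (1.4375).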

### Key Observations

1. **Symmetry in SPE Connections**: Uniform bandwidth to all SPEs suggests the design targets workloads that can be split evenly across the SPEs and run in parallel.

2. **Memory Bandwidth Limitation**: Main Memory's **12.8 GB/s** link to the MIC is half of a single SPE's **25.6 GB/s** EIB port. Main memory alone therefore cannot keep even one SPE streaming at its full port rate, let alone all eight.

3. **FlexIO Asymmetry**: FlexIO's output (**36.8 GB/s**) is about 44% higher than its input (**25.6 GB/s**). This suggests the external link is provisioned for output-heavy traffic. The link is asymmetric, not unidirectional.
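To make observations 2 and 3 concrete, one can compute how long a fixed transfer takes on each link at its peak rate. The 1 GB payload and the helper name `transfer_ms` are arbitrary assumptions for illustration. The results are idealized lower bounds that ignore protocol overhead, latency, and contention.

```python
# Peak link rates from the diagram, in MB/s.
RATES = {
    "Main Memory -> MIC": 12_800,
    "SPE <-> EIB (one port)": 25_600,
    "FlexIO output": 36_800,
    "FlexIO input": 25_600,
}

PAYLOAD_MB = 1_000  # hypothetical 1 GB transfer


def transfer_ms(payload_mb: int, rate_mb_s: int) -> float:
    """Idealized transfer time in milliseconds at the link's peak rate."""
    return payload_mb * 1000 / rate_mb_s


if __name__ == "__main__":
    for name, rate in RATES.items():
        print(f"{name:24s} {transfer_ms(PAYLOAD_MB, rate):8.2f} ms")
```

Because the memory link runs at half the SPE port rate, the same payload takes twice as long to arrive from main memory as it takes to cross one SPE's EIB port.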

### Interpretation

This architecture prioritizes parallelism: the PPE and eight SPEs share a high-bandwidth EIB, which acts as the central hub for SPE-to-SPE, processor-to-memory, and I/O traffic. The **12.8 GB/s** Main Memory–MIC link, however, is half of a single SPE's **25.6 GB/s** EIB port and 1/16 of the EIB total. Memory-intensive workloads are therefore likely to be limited by memory bandwidth rather than by the interconnect. FlexIO's asymmetric rates suggest the external link is provisioned for output-heavy traffic (e.g., streaming rendered or processed data off-chip). Overall, the design pairs abundant on-chip bandwidth with comparatively narrow off-chip links, so performance depends on keeping data local to the processing elements. Many accelerator-style designs make the same trade-off.
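The bottleneck argument above rests on a simple rule: a transfer through a chain of links can go no faster than its slowest link. A minimal sketch of that rule follows, assuming path throughput is just the minimum link rate. It ignores the EIB's 204.8 GB/s aggregate ceiling, contention from other traffic, and latency. The routes and the helper names `path_bandwidth` and `bottleneck` are hypothetical.

```python
# Directed peak link rates from the diagram, in MB/s (only the hops used below).
RATE = {
    ("Main Memory", "MIC"): 12_800,
    ("MIC", "EIB"): 25_600,
    ("EIB", "SPE 0"): 25_600,
    ("SPE 0", "EIB"): 25_600,
    ("EIB", "IOIF 0"): 25_600,
    ("IOIF 0", "FlexIO"): 36_800,  # FlexIO output direction
}


def path_bandwidth(path: list[str]) -> int:
    """Idealized peak throughput of a path: the rate of its slowest hop."""
    return min(RATE[hop] for hop in zip(path, path[1:]))


def bottleneck(path: list[str]) -> tuple[str, str]:
    """The first hop on the path that runs at the minimum rate."""
    return min(zip(path, path[1:]), key=lambda hop: RATE[hop])


mem_to_spe = ["Main Memory", "MIC", "EIB", "SPE 0"]
spe_to_io = ["SPE 0", "EIB", "IOIF 0", "FlexIO"]

if __name__ == "__main__":
    print(path_bandwidth(mem_to_spe), bottleneck(mem_to_spe))  # 12800 ('Main Memory', 'MIC')
    print(path_bandwidth(spe_to_io), bottleneck(spe_to_io))    # 25600 ('SPE 0', 'EIB')
```

Under this model, a memory-to-SPE stream is capped at 12.8 GB/s by the Main Memory link. An SPE-to-FlexIO stream through IOIF 0 is capped at 25.6 GB/s, below FlexIO's 36.8 GB/s output rate.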