


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


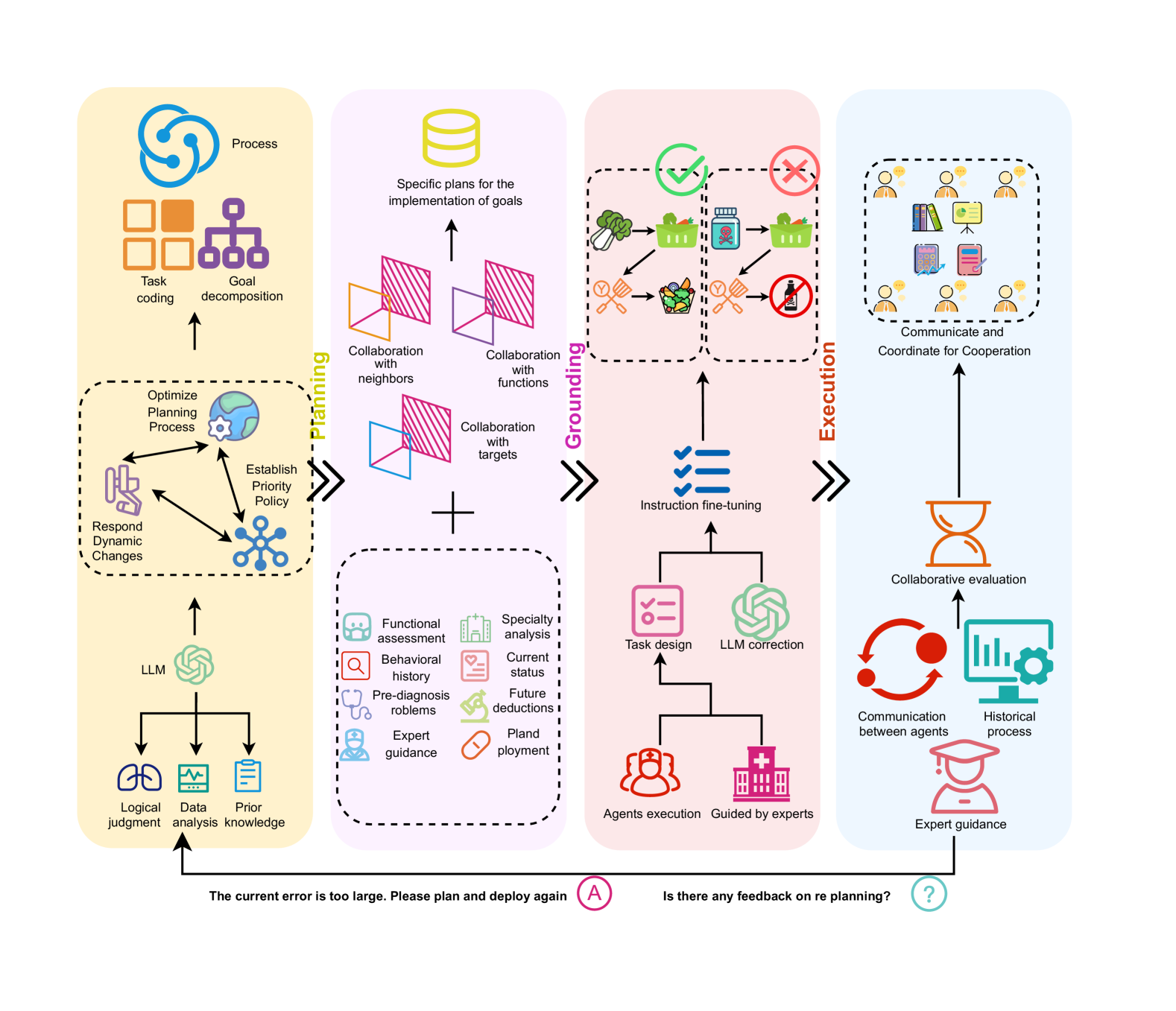

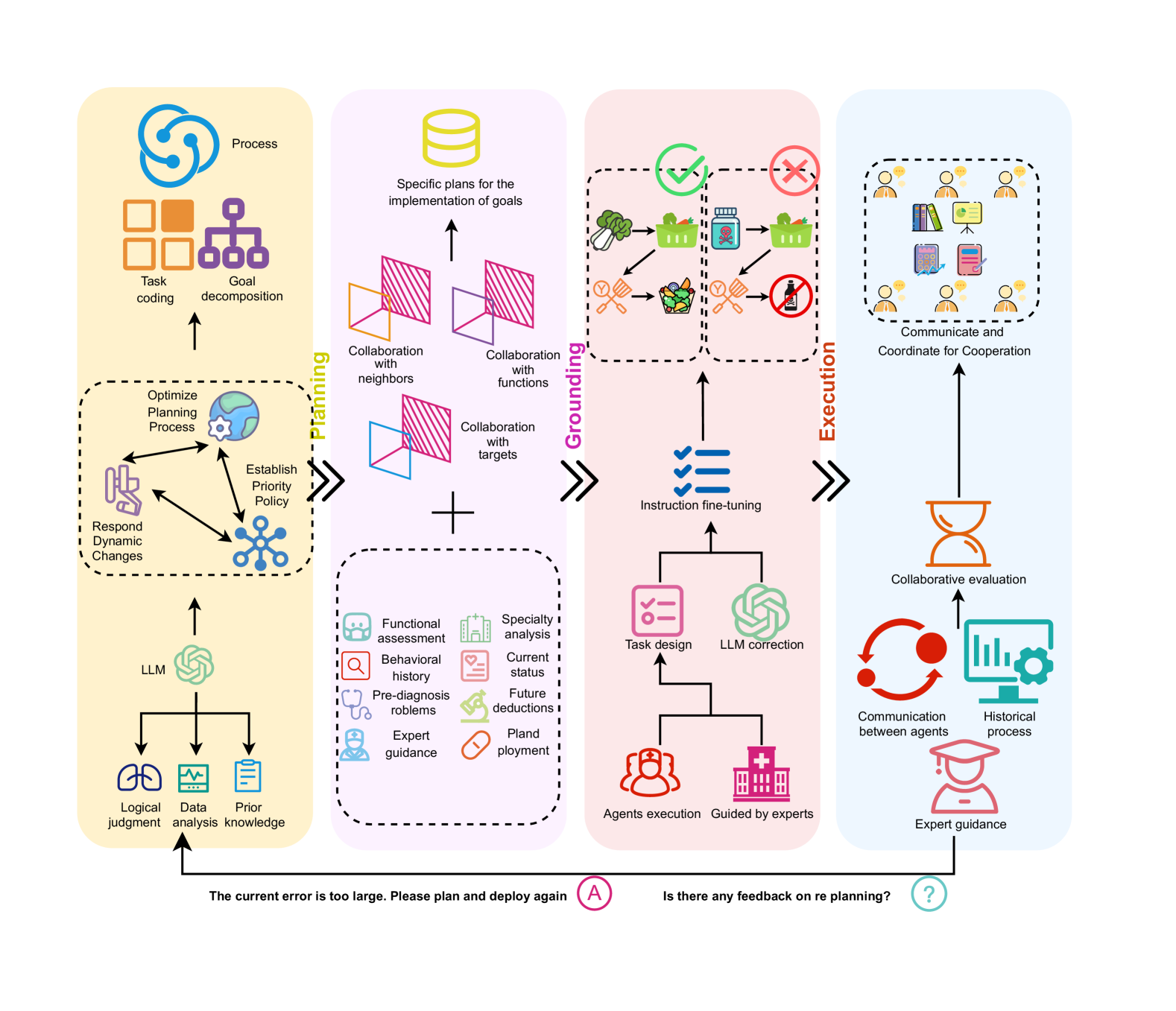

## Process Flow Diagram: Multi-Agent Collaborative Planning and Execution System

### Overview

The image is a detailed process flow diagram illustrating a four-stage, cyclical system for collaborative task planning, grounding, execution, and evaluation. The system appears designed for complex problem-solving, potentially in a medical or expert-guided domain, involving Large Language Models (LLMs), human experts, and multiple specialized agents. The flow moves from left to right through the stages, with a feedback loop at the bottom indicating iterative refinement.

### Components/Axes

The diagram is organized into four vertical, color-coded sections, each representing a major phase, plus a feedback loop that runs along the bottom. The flow between phases is indicated by large, black, right-pointing chevrons (`>>`).

1. **Phase 1: Planning (Yellow Background, Leftmost)**

* **Top Cluster:** Icons and labels for "Process," "Task coding," and "Goal decomposition."

* **Middle Dashed Box:** A sub-process labeled "Optimize Planning Process" containing three interconnected elements: "Respond Dynamic Changes," "Establish Priority Policy," and an icon of a globe with a gear.

* **Bottom Cluster:** An "LLM" icon (resembling the OpenAI logo) feeds into three outputs: "Logical judgment," "Data analysis," and "Prior knowledge."

2. **Phase 2: Grounding (Light Purple Background, Center-Left)**

* **Top:** A database icon labeled "Specific plans for the implementation of goals."

* **Middle:** Three collaboration models represented by overlapping colored shapes: "Collaboration with neighbors," "Collaboration with functions," and "Collaboration with targets."

* **Bottom Dashed Box:** A list of assessment and analysis types, each with an icon: "Functional assessment," "Behavioral history," "Pre-diagnosis problems," "Expert guidance," "Specialty analysis," "Current status," "Future deductions," and "Planned deployment."

3. **Phase 3: Execution (Light Pink Background, Center-Right)**

* **Top Dashed Box:** A decision-making flowchart with icons. A green checkmark leads to a path involving vegetables, a basket, and a pot. A red "X" leads to a path involving a medicine bottle and a "no" symbol over a bottle, suggesting validation and rejection of actions.

* **Middle:** An "Instruction fine-tuning" element (blue checklist icon) receives input from the decision flowchart.

* **Bottom:** A branching structure showing "Task design" and "LLM correction" feeding into "Agents execution" and "Guided by experts."

4. **Phase 4: Evaluation & Coordination (Light Blue Background, Rightmost)**

* **Top Dashed Box:** A group of people icons surrounding various documents and charts, labeled "Communicate and Coordinate for Cooperation."

* **Middle:** An hourglass icon labeled "Collaborative evaluation."

* **Bottom:** A cycle showing "Communication between agents" (red circular arrows) and "Historical process" (monitor with gear), both informed by "Expert guidance" (graduation cap icon).

5. **Feedback Loop (Bottom of Diagram):**

* A black arrow runs from the bottom of the Execution/Evaluation phases back to the start of the Planning phase.

* Two text annotations are placed along this arrow:

* Left side: "The current error is too large. Please plan and deploy again" next to a circled "A".

* Right side: "Is there any feedback on re-planning?" next to a circled question mark.

### Detailed Analysis

* **Process Flow:** The system initiates in **Planning**, where an LLM uses prior knowledge and data to decompose goals and create tasks. This plan is then **Grounded** through collaboration models and detailed assessments. The grounded plan moves to **Execution**, where instructions are fine-tuned, tasks are designed, and agents (potentially AI or human) carry out actions, sometimes with expert guidance. Finally, the process enters **Evaluation & Coordination**, where outcomes are assessed collaboratively, communication occurs, and historical data is logged.

* **Key Relationships:**

* The LLM is a core component in the Planning phase, providing foundational judgment and analysis.

* Expert guidance is a recurring input: it appears in the Grounding phase (assessment list) and the Execution phase ("Guided by experts"), and it also informs both bottom elements of the Evaluation phase.

* The "Instruction fine-tuning" step in Execution acts as a bridge between the high-level decision flowchart and the actual task execution.

* The feedback loop is critical, triggered by large errors or a need for re-planning, sending the process back to the initial Planning stage.
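The flow and feedback loop described above can be sketched as a small control loop. This is a minimal illustration under stated assumptions, not the system in the diagram: the names `run_cycle`, `CycleState`, `max_error`, and `max_rounds` are hypothetical, and the four stage functions are placeholders supplied by the caller.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Optional


@dataclass
class CycleState:
    """Hypothetical record of one run through the four-stage cycle."""
    goal: str
    plan: List[str] = field(default_factory=list)
    error: float = float("inf")
    history: List[float] = field(default_factory=list)  # error per round


def run_cycle(
    goal: str,
    plan_fn: Callable[[str, Optional[str]], List[str]],
    ground_fn: Callable[[List[str]], List[str]],
    execute_fn: Callable[[List[str]], Any],
    evaluate_fn: Callable[[str, Any], float],
    max_error: float = 0.1,   # assumed threshold for "error is too large"
    max_rounds: int = 5,      # assumed cap so the loop always terminates
) -> CycleState:
    state = CycleState(goal=goal)
    feedback: Optional[str] = None
    for _ in range(max_rounds):
        plan = plan_fn(goal, feedback)      # Phase 1: Planning
        grounded = ground_fn(plan)          # Phase 2: Grounding
        result = execute_fn(grounded)       # Phase 3: Execution
        error = evaluate_fn(goal, result)   # Phase 4: Evaluation
        state.plan, state.error = grounded, error
        state.history.append(error)
        if error <= max_error:
            break
        # Feedback loop: send the process back to Planning.
        feedback = f"error {error:.2f} is too large; plan and deploy again"
    return state


def demo() -> CycleState:
    """Toy stages: each re-plan adds a step, and the error halves per step."""
    attempts = {"n": 0}

    def plan_fn(goal: str, feedback: Optional[str]) -> List[str]:
        attempts["n"] += 1
        return [f"{goal}: step {i}" for i in range(attempts["n"])]

    def ground_fn(plan: List[str]) -> List[str]:
        return [step + " (grounded)" for step in plan]

    def execute_fn(grounded: List[str]) -> int:
        return len(grounded)

    def evaluate_fn(goal: str, result: int) -> float:
        return 1.0 / (2 ** result)

    return run_cycle("demo goal", plan_fn, ground_fn, execute_fn,
                     evaluate_fn, max_error=0.2)
```

In this toy run the loop re-plans twice before the error falls below the assumed threshold. A real system would replace the placeholder functions with LLM calls, agent actions, and collaborative evaluation.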

### Key Observations

* **Cyclical Nature:** The diagram is not linear but a continuous cycle, emphasizing iterative improvement based on evaluation and error feedback.

* **Hybrid Intelligence:** The system explicitly combines artificial intelligence (LLM, agents) with human intelligence (experts, collaboration, coordination).

* **Medical/Expert Context Clues:** Icons such as a medicine bottle, a stethoscope ("Pre-diagnosis problems"), and a hospital ("Guided by experts") suggest an application in healthcare or a similar expert-intensive field.

* **Structured Collaboration:** The Grounding phase breaks down collaboration into specific, structured models (with neighbors, functions, targets), indicating a sophisticated multi-agent framework.
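The hybrid-intelligence pattern noted above, combined with the accept/reject decision shown in the Execution phase, might look like the following gate. This is a hedged sketch only: `Action`, `risk`, `gate_actions`, and the expert callback are hypothetical names, and the diagram does not specify how validation or expert guidance is actually implemented.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class Action:
    name: str
    risk: float  # hypothetical risk score in [0, 1]


# An expert returns True/False to override, or None for "no opinion".
Expert = Callable[[Action], Optional[bool]]


def gate_actions(
    actions: Iterable[Action],
    auto_check: Callable[[Action], bool],
    expert: Optional[Expert] = None,
) -> Tuple[List[Action], List[Action]]:
    """Split actions into (approved, rejected).

    The automatic check decides first; an expert opinion, when one is
    given, takes precedence over it.
    """
    approved: List[Action] = []
    rejected: List[Action] = []
    for action in actions:
        verdict = auto_check(action)
        if expert is not None:
            opinion = expert(action)
            if opinion is not None:
                verdict = opinion
        (approved if verdict else rejected).append(action)
    return approved, rejected


actions = [
    Action("prepare meal", 0.1),
    Action("give medication", 0.9),
    Action("reorder supplies", 0.6),
]
auto = lambda a: a.risk < 0.5                                   # assumed rule
expert = lambda a: True if a.name == "reorder supplies" else None
approved, rejected = gate_actions(actions, auto, expert)
```

Here the expert overrides the automatic rejection of one action and stays silent on the others, so the automatic check decides those.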

### Interpretation

This diagram outlines a framework for deploying AI agents in complex, real-world tasks that require planning, validation, and expert oversight. It can be read as a **Peircean cycle of inquiry**:

1. **Abduction (Planning):** The LLM and planning process generate hypotheses (plans) based on available data and knowledge.

2. **Deduction (Grounding):** The plan is refined and tested against specific collaboration models and assessment criteria to predict outcomes.

3. **Induction (Execution):** Actions are taken in the real world (or a simulated environment), and results are observed.

4. **Evaluation & Feedback:** The outcomes are evaluated against the original goals. When they fail to match reality, the explicit feedback loop ("error is too large") sends the process back to abduction (which Peirce also called **retroduction**): the initial assumptions and plans are questioned, which drives the next cycle of inquiry.

The system's value lies in its structured approach to mitigating the risks of autonomous AI by embedding human expertise, rigorous grounding, and continuous evaluation. It is designed not just to execute tasks, but to learn and adapt through collaboration and error correction, making it suitable for high-stakes domains where precision and accountability are paramount.
