TECHNICAL ASSET FINGERPRINT

43a263068eb914857e538fe6

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

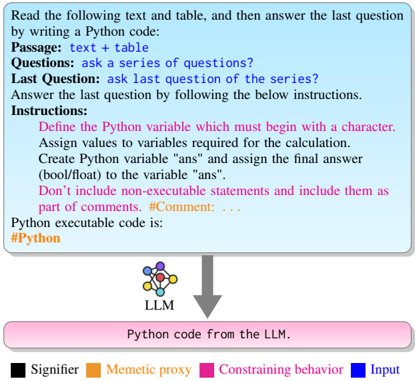

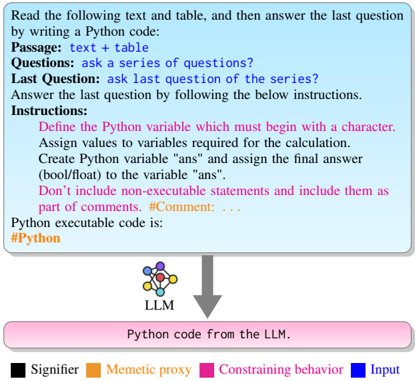

## Diagram: LLM Python Code Generation

### Overview

The image illustrates a prompt that asks for a text and a table to be read and the last of a series of questions to be answered by writing Python code, following a set of instructions for that code. The prompt is passed to an LLM (Large Language Model), which generates the Python code. The diagram includes a legend explaining the color-coding of different elements.

### Components/Axes

* **Top Section:** A light blue rounded rectangle containing instructions and context for the user.

* Text: "Read the following text and table, and then answer the last question by writing a Python code:"

* "Passage: text + table"

* "Questions: ask a series of questions?"

* "Last Question: ask last question of the series?"

* "Answer the last question by following the below instructions."

* "Instructions:"

* "Define the Python variable which must begin with a character."

* "Assign values to variables required for the calculation."

* "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"."

* "Don't include non-executable statements and include them as part of comments. #Comment: ..."

* "Python executable code is:"

* "#Python" (orange text)

* **Middle Section:**

* An arrow pointing downwards from the bottom of the top section to the top of the bottom section.

* An icon representing an LLM (Large Language Model) with interconnected nodes.

* Text: "LLM" below the icon.

* **Bottom Section:** A pink rounded rectangle representing the output of the LLM.

* Text: "Python code from the LLM."

* **Legend (Bottom):**

* Black square: "Signifier"

* Orange square: "Memetic proxy"

* Pink square: "Constraining behavior"

* Blue square: "Input"
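The prompt text transcribed above can be read as a fill-in template. The following is a minimal sketch of that idea, not the diagram authors' implementation: the names `PROMPT_TEMPLATE` and `build_prompt`, the line breaks, and the way the question series is joined are all assumptions made for illustration.

```python
from typing import Sequence

# Hypothetical template: the wording follows the transcription above,
# but the exact layout (line breaks, spacing) is an assumption.
PROMPT_TEMPLATE = """Read the following text and table, and then answer the last question by writing a Python code:
Passage: {passage}
Questions: {questions}
Last Question: {last_question}
Answer the last question by following the below instructions.
Instructions:
Define the Python variable which must begin with a character.
Assign values to variables required for the calculation.
Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans".
Don't include non-executable statements and include them as part of comments. #Comment: ...
Python executable code is:
#Python
"""


def build_prompt(passage: str, questions: Sequence[str], last_question: str) -> str:
    """Fill the three input slots of the template (hypothetical helper)."""
    return PROMPT_TEMPLATE.format(
        passage=passage,
        questions=" ".join(questions),
        last_question=last_question,
    )


if __name__ == "__main__":
    # Made-up passage and questions, used only to show the filled template.
    print(build_prompt(
        "Revenue was 10 in 2020.",
        ["What was revenue in 2020?"],
        "Did revenue exceed 5?",
    ))
```

Keeping the fixed wording in one constant means only the passage and the questions change from one request to the next.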

### Detailed Analysis

* **User Input:** The prompt instructs the reader to go through a text and a table, answer the last question using Python code, and follow specific instructions for writing the code.

* **LLM Processing:** The LLM takes the user's input and generates Python code.

* **Output:** The output is Python code generated by the LLM.

* **Color Coding:** The legend indicates that different elements in the process are color-coded to represent their function:

* Signifier (Black): Not explicitly shown in the diagram, but likely refers to elements that signify meaning or intent.

* Memetic Proxy (Orange): The "#Python" tag is colored orange, indicating it acts as a memetic proxy.

* Constraining Behavior (Pink): The bottom section "Python code from the LLM" is pink, indicating it represents a constraining behavior.

* Input (Blue): The top section containing the instructions is light blue, indicating it represents the input.

### Key Observations

* The diagram illustrates a workflow where user input is processed by an LLM to generate Python code.

* The color-coding provides additional information about the function of different elements in the process.

* The instructions emphasize the importance of defining variables correctly and including comments in the code.

### Interpretation

The diagram demonstrates the use of an LLM to generate Python code based on user instructions and input. The color-coding helps to clarify the roles of different elements in the process, such as the input, the LLM's processing, and the resulting code. The instructions provided to the user highlight the importance of clear and well-documented code, which is essential for effective collaboration and maintainability. The diagram suggests that the LLM is intended to assist users in writing Python code by automating the generation process based on specific instructions and input data.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

## Diagram: LLM Python Code Generation Flow with Prompt Categorization

### Overview

This image is a technical diagram illustrating the process of generating Python code using a Large Language Model (LLM) based on a structured prompt. It depicts an input prompt containing a problem description and specific instructions, which is then processed by an LLM to produce Python code. A legend categorizes different types of information within the prompt using color-coding.

### Components/Axes

The diagram is structured vertically into three main conceptual regions: Input Prompt, Processing Unit, and Output.

**1. Input Prompt (Top Section - Light Blue Rounded Rectangle):**

This section, positioned at the top of the image, contains the full problem statement and instructions for the LLM.

* **Main Header (Black text):** "Read the following text and table, and then answer the last question by writing a Python code:"

* **Problem Context (Black text):**

* "Passage: text + table"

* "Questions: ask a series of questions?"

* "Last Question: ask last question of the series?"

* "Answer the last question by following the below instructions."

* **Instructions Header (Black text):** "Instructions:"

* **Specific Coding Constraints (Magenta text, indented bullet points):**

* "Define the Python variable which must begin with a character."

* "Assign values to variables required for the calculation."

* "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"."

* "Don't include non-executable statements and include them as part of comments. #Comment: ..."

* **Code Type Indicator (Black text):** "Python executable code is:"

* **Python Tag (Orange text):** "#Python"

**2. Processing Unit (Middle Section - Centered):**

This section represents the computational model.

* **Icon:** A multi-colored network-like icon (resembling a neural network or graph) with nodes in purple, yellow, green, red, and blue, connected by lines. It is positioned centrally below the input prompt.

* **Label (Black text):** "LLM" (positioned directly below the icon).

* **Flow Indicator:** A thick gray arrow points downwards from the "LLM" and icon, indicating the direction of processing.

**3. Output (Bottom Section - Pink Rounded Rectangle):**

This section, positioned at the bottom of the image, represents the result of the LLM's processing.

* **Output Description (Black text):** "Python code from the LLM."

**4. Legend (Bottom-Left):**

A legend is located at the bottom-left of the image, defining the meaning of the colors used in the text.

* **Black square:** "Signifier"

* **Orange square:** "Memetic proxy"

* **Magenta square:** "Constraining behavior"

* **Blue square:** "Input"

### Detailed Analysis

The diagram illustrates a prompt engineering scenario for an LLM. The top light blue box serves as the comprehensive input.

* **Signifiers (Black text):** All general descriptive text, headers, and labels such as "Passage:", "Questions:", "Instructions:", "LLM", and "Python code from the LLM" are colored black, indicating they are "Signifiers" according to the legend. These elements define the context and components of the task.

* **Memetic proxy (Orange text):** The "#Python" tag within the input prompt is colored orange, identifying it as a "Memetic proxy". This likely acts as a specific keyword or token that guides the LLM towards generating Python code.

* **Constraining behavior (Magenta text):** The detailed instructions for writing the Python code (e.g., variable naming conventions, output variable "ans", comment rules) are colored magenta. These are explicitly labeled as "Constraining behavior", meaning they are rules or limitations that the LLM must adhere to when generating its output.

* **Input (Blue):** No textual elements in the main prompt or output are explicitly colored blue. However, the legend defines blue as "Input". The LLM icon itself contains blue nodes, suggesting that "Input" might refer to internal components or conceptual aspects of the LLM's processing rather than a direct label for the textual prompt itself. Conceptually, the entire top light blue box *is* the input to the LLM.

The gray arrow clearly shows a unidirectional flow from the structured input prompt, through the LLM, to the generated Python code.
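The legend categories described above can be represented as tagged prompt segments. The sketch below is one hypothetical way to do that; `Role`, `Segment`, `render`, and `by_role` are illustrative names, and tagging the passage and question slots as "Input" is an assumption, since this description reports no blue text in the prompt itself.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Role(Enum):
    """Legend categories, keyed by the colors reported in the description."""
    SIGNIFIER = "black"
    MEMETIC_PROXY = "orange"
    CONSTRAINING_BEHAVIOR = "magenta"
    INPUT = "blue"


@dataclass(frozen=True)
class Segment:
    role: Role
    text: str


SEGMENTS: List[Segment] = [
    Segment(Role.SIGNIFIER, "Read the following text and table, and then answer the last question by writing a Python code:"),
    # Assumption: the slots that receive the passage and questions count as Input.
    Segment(Role.INPUT, "Passage: text + table"),
    Segment(Role.INPUT, "Questions: ask a series of questions?"),
    Segment(Role.INPUT, "Last Question: ask last question of the series?"),
    Segment(Role.SIGNIFIER, "Answer the last question by following the below instructions."),
    Segment(Role.SIGNIFIER, "Instructions:"),
    Segment(Role.CONSTRAINING_BEHAVIOR, "Define the Python variable which must begin with a character."),
    Segment(Role.CONSTRAINING_BEHAVIOR, "Assign values to variables required for the calculation."),
    Segment(Role.CONSTRAINING_BEHAVIOR, 'Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans".'),
    Segment(Role.CONSTRAINING_BEHAVIOR, "Don't include non-executable statements and include them as part of comments. #Comment: ..."),
    Segment(Role.SIGNIFIER, "Python executable code is:"),
    Segment(Role.MEMETIC_PROXY, "#Python"),
]


def render(segments: List[Segment]) -> str:
    """Join the segments, in order, into the prompt text."""
    return "\n".join(segment.text for segment in segments)


def by_role(segments: List[Segment], role: Role) -> List[str]:
    """Return the text of every segment tagged with the given role."""
    return [segment.text for segment in segments if segment.role is role]
```

Tagging segments this way makes it straightforward to drop or swap one category, for example removing the constraints, and compare the resulting prompts.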

### Key Observations

* The diagram clearly segments the task into input, processing, and output stages.

* Color-coding is used effectively to categorize different types of information within the input prompt, providing a structured way to understand prompt components.

* The "Constraining behavior" (magenta) is crucial for guiding the LLM to produce code that meets specific requirements.

* The "Memetic proxy" (orange) suggests the use of specific tags or keywords to influence the LLM's output style or format.

### Interpretation

This diagram demonstrates a common pattern in interacting with Large Language Models for code generation. The "Signifiers" provide the general context and problem statement, setting the stage for the LLM. The "Memetic proxy" acts as a strong hint or directive, signaling the desired output format or domain (Python in this case). Most critically, the "Constraining behavior" elements are the explicit guardrails and requirements that ensure the generated code is not just functional but also adheres to specific structural or stylistic criteria. These constraints are vital for making LLM-generated code usable and consistent with predefined standards.

The LLM, represented by the network icon, takes this multi-faceted input and processes it to produce the desired "Python code from the LLM." The diagram highlights the importance of a well-structured and semantically rich prompt to effectively guide an LLM, moving beyond simple natural language requests to include explicit behavioral constraints and contextual cues. This structured prompting approach aims to improve the reliability and quality of LLM-generated outputs, especially in complex tasks like code generation.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: LLM Code Generation Process

### Overview

The image depicts a diagram illustrating the process of generating Python code using a Large Language Model (LLM) based on a given passage and table, along with a set of instructions. The diagram highlights the different components involved and their roles in the code generation process.

### Components/Axes

The diagram is segmented into several key areas:

* **Top Section (Input & Instructions):** Contains textual information describing the input (Passage, Questions, Last Question) and the instructions for generating the Python code.

* **Central Section (LLM):** Features a visual representation of the LLM, depicted as a network of interconnected nodes.

* **Bottom Section (Output):** Indicates the output of the LLM, which is Python code.

* **Legend:** Located at the bottom-right, defining the color-coding used in the diagram:

* Black: Signifier

* Orange: Memetic proxy

* Pink: Constraining behavior

* Blue: Input

### Detailed Analysis

**Top Section (Input & Instructions):**

* **Passage:** "text + table"

* **Questions:** "ask a series of questions?"

* **Last Question:** "ask last question of the series?"

* **Instructions:**

* "Define the Python variable which must begin with a character."

* "Assign values to variables required for the calculation."

* "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"."

* "Don't include non-executable statements and include them as part of comments. #Comment: ..."

* **Python executable code is:** "#Python" (This is a label, not code)
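For illustration, code that satisfies the four instructions listed above might look like the following. The figures and variable names are invented for this sketch; they do not come from the diagram, which shows no concrete passage or output.

```python
# Comment: hypothetical passage reports revenue of 120.0 in 2020 and 150.0 in 2021.
revenue_2020 = 120.0
revenue_2021 = 150.0
# Comment: the last question is assumed to ask for the percentage change in revenue.
ans = (revenue_2021 - revenue_2020) / revenue_2020 * 100
```

Each variable name begins with a letter, all explanatory text sits in `# Comment:` lines, and the final float is assigned to `ans`.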

**Central Section (LLM):**

* The LLM is represented by a complex network of interconnected nodes, colored in shades of blue and white.

* The label "LLM" is positioned below the network.

* An arrow points downwards from the LLM towards the bottom section.

**Bottom Section (Output):**

* A rectangular box labeled "Python code from the LLM." is colored in pink.

**Color Coding:**

* The "Passage", "Questions", and "Last Question" text are colored blue, indicating they are "Input".

* The "Instructions" text is colored pink, indicating "Constraining behavior".

* The "#Python" label is colored black, indicating "Signifier".

* The LLM network is colored with a mix of blue and white, with the blue components likely representing "Input" and the white components representing internal processing.

* The "Python code from the LLM." box is colored pink, indicating "Constraining behavior".

### Key Observations

* The diagram emphasizes the flow of information from input (text and questions) through the LLM to the output (Python code).

* The instructions are presented as constraints guiding the LLM's code generation process.

* The color-coding effectively highlights the different roles of each component in the process.

* The diagram is conceptual and does not contain specific data points or numerical values.

### Interpretation

The diagram illustrates a simplified model of how an LLM can be used to generate Python code based on a given input and a set of instructions. The "Passage" and "Questions" serve as the input to the LLM, which then processes this information and generates Python code as output. The "Instructions" act as constraints, guiding the LLM to produce code that meets specific requirements (e.g., variable naming, assignment of the final answer). The color-coding helps to visually differentiate between the input, the LLM's internal processing, and the output. The diagram suggests a process of transformation, where the LLM takes unstructured text and questions and converts them into structured Python code. The diagram is a high-level representation and does not delve into the technical details of how the LLM operates internally. It is a conceptual illustration of the code generation process.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: LLM-Based Python Code Generation Process

### Overview

The image is a flowchart diagram illustrating a process for using a Large Language Model (LLM) to generate Python code based on a given text passage and a series of questions. The diagram uses color-coded elements and directional arrows to show the flow from input instructions to the final code output.

### Components/Axes

The diagram consists of three main visual components arranged vertically:

1. **Top Component (Light Blue Box):** A large rectangular box containing the primary instructions and input structure.

2. **Middle Component (LLM Icon & Arrow):** A small icon representing an LLM (depicted as a network of connected nodes) with a downward-pointing arrow.

3. **Bottom Component (Pink Box):** A rectangular box representing the output.

4. **Legend (Bottom of Image):** A color-coded key explaining the meaning of specific colors used in the diagram.

### Detailed Analysis

#### Top Component: Instruction Box

* **Background Color:** Light blue.

* **Text Content (Transcribed):**

* **Header:** "Read the following text and table, and then answer the last question by writing a Python code:"

* **Structure Labels (in blue):**

* "Passage: text + table"

* "Questions: ask a series of questions?"

* "Last Question: ask last question of the series?"

* **Instruction Block:** "Answer the last question by following the below instructions."

* **Detailed Instructions (in pink):**

* "Define the Python variable which must begin with a character."

* "Assign values to variables required for the calculation."

* "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"."

* "Don't include non-executable statements and include them as part of comments. #Comment: . . ."

* **Final Line (in orange):** "Python executable code is: #Python"

#### Middle Component: Process Flow

* **LLM Icon:** A small graphic of interconnected circles (nodes) in blue, orange, and black, labeled "LLM" underneath.

* **Arrow:** A thick, gray, downward-pointing arrow connects the bottom of the instruction box to the top of the output box, indicating the direction of data flow.

#### Bottom Component: Output Box

* **Background Color:** Pink.

* **Text Content:** "Python code from the LLM."

#### Legend

* **Location:** Bottom of the image, below the main flowchart.

* **Color Key:**

* **Black Square:** "Signifier"

* **Orange Square:** "Memetic proxy"

* **Pink Square:** "Constraining behavior"

* **Blue Square:** "Input"

### Key Observations

1. **Color-Coding:** The diagram uses color intentionally. Blue is used for input labels ("Passage," "Questions," "Last Question") and the "Input" legend item. Pink is used for the constraining instructions and the output box, linking the rules to the final product. Orange highlights the final prompt for code ("#Python") and the "Memetic proxy" legend item.

2. **Process Flow:** The flow is strictly linear and top-down: Input Instructions -> LLM Processing -> Code Output.

3. **Instruction Specificity:** The instructions are highly specific, dictating variable naming conventions (must start with a character), the creation of a final answer variable (`ans`), and the handling of comments.

4. **Placeholder Text:** The "Passage," "Questions," and "Last Question" labels are placeholders, indicating where specific content would be inserted in a real application.
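The instruction-specificity point above suggests that generated code can be checked mechanically before it is used. Below is a minimal sketch using Python's standard `ast` module. The function name `check_constraints` is hypothetical, and reading "must begin with a character" as "must begin with a letter" is an assumption about the prompt's intent.

```python
import ast
from typing import List


def check_constraints(code: str) -> List[str]:
    """Return a list of constraint violations; an empty list means none found."""
    try:
        tree = ast.parse(code)
    except SyntaxError as exc:
        # Prose mixed into the output usually fails to parse at all.
        return [f"not executable Python: {exc.msg}"]

    # Collect every name that is assigned to anywhere in the code.
    assigned = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
            assigned.add(node.id)

    problems = []
    for name in sorted(assigned):
        # Assumption: "begin with a character" is read as "begin with a letter".
        if not name[0].isalpha():
            problems.append(f"variable {name!r} does not begin with a letter")
    if "ans" not in assigned:
        problems.append('no assignment to "ans"')
    return problems
```

A check like this only inspects structure. It does not run the code, so it cannot confirm that `ans` ends up as a bool or float.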

### Interpretation

This diagram outlines a structured **prompt engineering template** for an LLM tasked with code generation. It demonstrates a method to constrain and guide the LLM's output to ensure it produces valid, executable Python code that adheres to specific formatting rules.

* **Relationship Between Elements:** The "Constraining behavior" (pink) instructions are the core of the prompt, designed to override the LLM's default tendencies and force a predictable output format. The "Input" (blue) provides the raw data and query. The "Memetic proxy" (orange) likely refers to the LLM itself or the code-generation act as a proxy for solving the problem presented in the input.

* **Purpose:** The process aims to transform unstructured or semi-structured information (text + table + questions) into a precise, computational answer (`ans` variable) encapsulated in runnable code. This is valuable for automation, data analysis, and integrating LLM reasoning into larger software systems.

* **Notable Design Choice:** The separation of the "series of questions" from the "last question" suggests a chain-of-thought or multi-step reasoning process where the LLM must first understand the context before generating the final, code-based answer. The strict comment rule (`#Comment: . . .`) ensures that any explanatory text from the LLM does not break the code's executability.
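Because the prompt requires the result to be stored in `ans`, the answer can be recovered by executing the generated code and reading that variable. The sketch below assumes a hypothetical helper named `run_generated_code`. It uses the built-in `exec`, which is unsafe for untrusted model output, so a real system would need sandboxing that is not shown here.

```python
from typing import Union


def run_generated_code(code: str) -> Union[bool, float]:
    """Execute generated code and return the value it assigned to `ans`."""
    namespace: dict = {}
    # WARNING: exec runs arbitrary code; isolate it before using on model output.
    exec(code, namespace)
    ans = namespace.get("ans")
    if isinstance(ans, bool):  # check bool first: bool is a subclass of int
        return ans
    if isinstance(ans, (int, float)):
        # Assumption: an integer result is acceptable and is coerced to float.
        return float(ans)
    raise ValueError('generated code did not assign a bool or float to "ans"')


if __name__ == "__main__":
    # Made-up generated code, used only to show the call.
    sample = "# Comment: halve the total\ntotal = 3\nans = total / 2"
    print(run_generated_code(sample))
```

Comment lines are ignored by the interpreter, which is why the prompt's `#Comment:` rule keeps explanatory text from breaking execution.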

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Flowchart: Text-to-Python Code Generation Workflow

### Overview

The image depicts a structured workflow for generating Python code from a textual passage and table. It includes a blue box at the top (containing text, questions, and instructions) and a pink box at the bottom (containing Python code). An arrow connects the two boxes, indicating a directional flow. Color-coded elements (black, orange, pink, blue) highlight specific components like signifiers, memetic proxies, constraining behaviors, and inputs.

### Components/Axes

- **Blue Box (Top Section)**:

- **Passage**: Contains "text + table" (table content not visible).

- **Questions**: Lists "ask a series of questions?"

- **Last Question**: "ask last question of the series?"

- **Instructions**:

- Define Python variables starting with a character.

- Assign values to variables for calculations.

- Create the Python variable "ans" and assign the final answer (bool/float) to it.

- Avoid non-executable statements; put any such text in comments.

- **Pink Box (Bottom Section)**:

- **Python Code**: Contains executable code with comments (e.g., `#Python`, `#Comment`).

- **Arrow**: Gray, connecting the blue and pink boxes, indicating the flow from text to code.

- **Color Legend**:

- **Black**: Signifiers (e.g., "LLM").

- **Orange**: Memetic proxy (e.g., "LLM").

- **Pink**: Constraining behavior (e.g., "Python code from the LLM").

- **Blue**: Input (e.g., "Passage", "Questions").

### Detailed Analysis

- **Textual Content**:

- The blue box includes a hierarchical structure:

1. **Passage**: A textual input with an embedded table (content unspecified).

2. **Questions**: A prompt to generate a series of questions.

3. **Last Question**: A specific query about the final question in the series.

4. **Instructions**: Step-by-step guidelines for code generation, emphasizing variable naming, value assignment, and comment formatting.

- The pink box contains Python code with comments, though the exact code is not visible in the image.

- **Color Coding**:

- **Black (Signifiers)**: Used for labels like "LLM" (likely representing the language model).

- **Orange (Memetic Proxy)**: Highlights the LLM as a proxy for generating code.

- **Pink (Constraining Behavior)**: Indicates the Python code output, constrained by the instructions.

- **Blue (Input)**: Marks the textual passage and questions as input data.

### Key Observations

1. **Flow Direction**: The arrow explicitly links the textual input (blue box) to the Python code output (pink box), emphasizing a cause-effect relationship.

2. **Instructional Rigor**: The instructions enforce strict coding practices (e.g., variable naming, comment formatting), suggesting a focus on code readability and correctness.

3. **Missing Table Data**: The "text + table" in the passage lacks visible content, limiting analysis of how the table influences the code generation.

4. **Color Consistency**: The legend colors align with the elements they describe (e.g., "LLM" is both black and orange, possibly indicating dual roles).

### Interpretation

The diagram illustrates a **text-to-code pipeline** where a language model (LLM) processes a textual passage and table to generate Python code. The color coding and structured instructions suggest a systematic approach to ensure the generated code adheres to predefined constraints. The absence of the table’s content is a critical gap, as it could reveal how structured data influences the output. The use of "ans" as a variable implies the code’s purpose is to compute a final answer (e.g., a boolean or float value). The workflow emphasizes **input-output clarity** and **code quality**, with the LLM acting as both a signifier (black) and a memetic proxy (orange) for code generation.
