
## Diagram: Knowledge Graph Question Answering System

### Overview

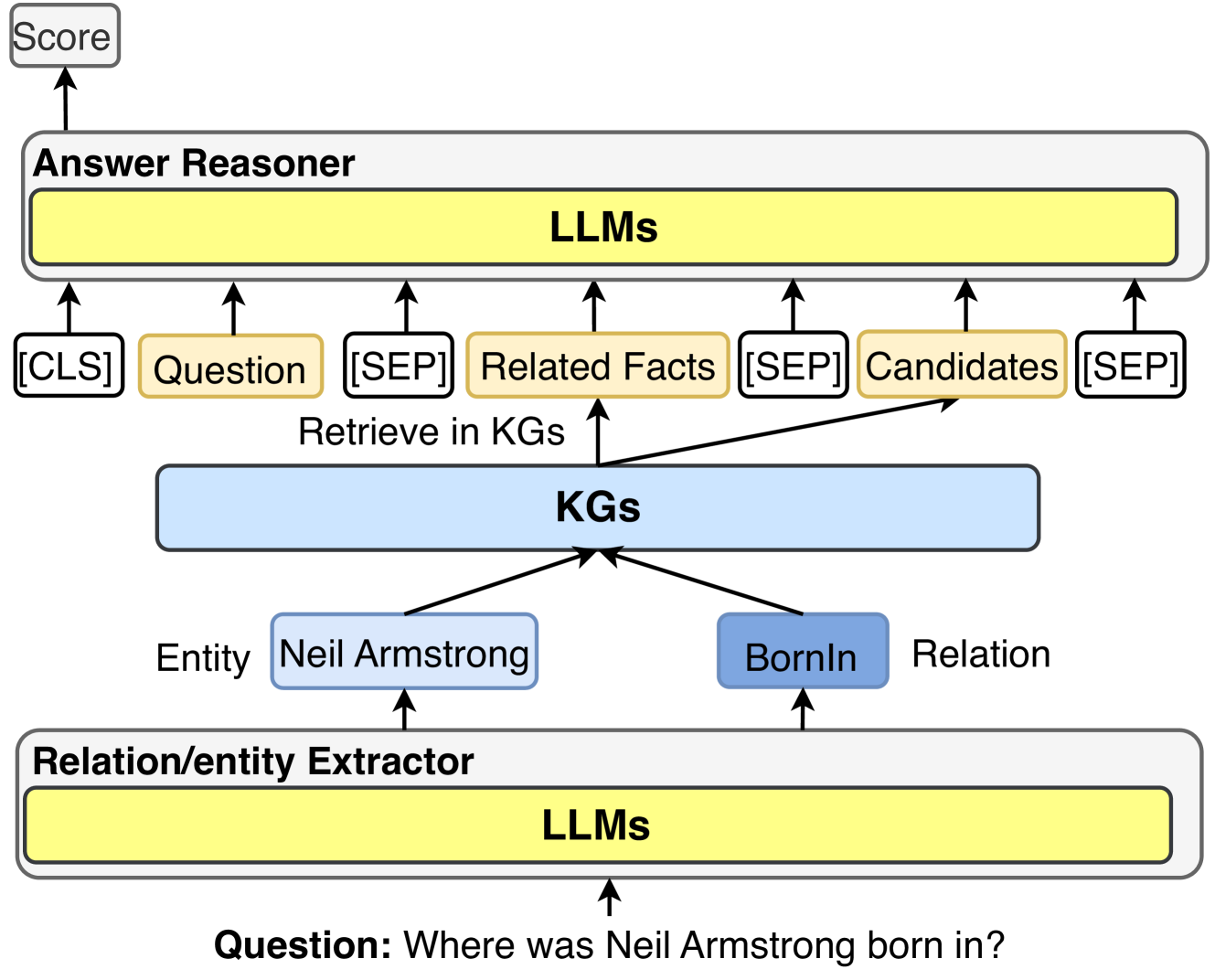

This diagram illustrates a knowledge graph (KG)-based question answering system. It traces the flow of information from an input question, through entity and relation extraction and knowledge retrieval, to an answer reasoner that produces a score. Large Language Models (LLMs) are used at two stages: extraction and answer reasoning.

### Components/Axes

The diagram consists of the following components:

* **Question:** "Where was Neil Armstrong born in?" (bottom)

* **Relation/entity Extractor:** A yellow rectangle labeled "LLMs" and a grey rectangle labeled "Relation/entity Extractor".

* **Entity:** "Neil Armstrong" (bottom-center)

* **Relation:** "BornIn" (bottom-center)

* **KGs:** A blue rectangle labeled "KGs" (center)

* **Retrieve in KGs:** A curved arrow indicating retrieval from the KGs.

* **Answer Reasoner:** A light-grey rectangle labeled "Answer Reasoner" (top)

* **LLMs:** A yellow rectangle labeled "LLMs" (top-center)

* **[CLS] Question [SEP] Related Facts [SEP] Candidates [SEP]:** Text blocks above the LLMs showing the format of the input sequence fed to the answer reasoner.

* **Score:** A light-grey rectangle labeled "Score" (top-left)

### Detailed Analysis or Content Details

The diagram shows a process flow:

1. A question ("Where was Neil Armstrong born in?") is input to a Relation/entity Extractor.

2. The Relation/entity Extractor, powered by LLMs, identifies the "Entity" as "Neil Armstrong" and the "Relation" as "BornIn".

3. The extracted entity and relation are used to query the knowledge graphs (the "Retrieve in KGs" step).

4. The retrieved facts and the candidate answers, together with the original question, are concatenated into the sequence "[CLS] Question [SEP] Related Facts [SEP] Candidates [SEP]" and fed to the "Answer Reasoner".

5. The Answer Reasoner, also powered by LLMs, processes this information and outputs a "Score".
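The five steps above can be sketched end to end in Python. This is a minimal illustration under stated assumptions, not the system's actual implementation: the KG is a hypothetical in-memory list of (head, relation, tail) triples, `extract` is a string-matching stand-in for the LLM extractor, retrieval is a simple one-hop lookup on the head entity, one input sequence is built per candidate, and `score` is a placeholder for the LLM answer reasoner. All function names are hypothetical.

```python
from typing import Dict, List, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)

# Hypothetical toy KG standing in for the "KGs" block.
TOY_KG: List[Triple] = [
    ("Neil Armstrong", "BornIn", "Wapakoneta"),
    ("Neil Armstrong", "Occupation", "Astronaut"),
    ("Wapakoneta", "LocatedIn", "Ohio"),
]


def extract(question: str, kg: List[Triple]) -> Tuple[str, str]:
    """Steps 1-2. Hypothetical stand-in for the LLM extractor:
    string-matches a known head entity and maps 'born' to BornIn."""
    entity = next(h for h, _, _ in kg if h in question)
    relation = "BornIn" if "born" in question.lower() else "Unknown"
    return entity, relation


def retrieve(entity: str, kg: List[Triple]) -> List[Triple]:
    """Step 3: one-hop retrieval of every fact whose head is the entity."""
    return [t for t in kg if t[0] == entity]


def format_input(question: str, facts: List[Triple], candidate: str) -> str:
    """Step 4: build a '[CLS] Question [SEP] Related Facts [SEP] Candidate [SEP]' string."""
    fact_text = "; ".join(" ".join(t) for t in facts)
    return f"[CLS] {question} [SEP] {fact_text} [SEP] {candidate} [SEP]"


def score(entity: str, relation: str, facts: List[Triple], candidate: str) -> float:
    """Step 5. Hypothetical placeholder for the LLM reasoner: 1.0 if a
    retrieved fact links entity --relation--> candidate, else 0.0."""
    return 1.0 if (entity, relation, candidate) in facts else 0.0


def answer(question: str, kg: List[Triple]) -> Tuple[str, Dict[str, float]]:
    entity, relation = extract(question, kg)
    facts = retrieve(entity, kg)
    candidates = [tail for _, _, tail in facts]
    scores: Dict[str, float] = {}
    for c in candidates:
        # A real reasoner would score this sequence; the placeholder ignores it.
        _sequence = format_input(question, facts, c)
        scores[c] = score(entity, relation, facts, c)
    return max(scores, key=scores.get), scores


best, scores = answer("Where was Neil Armstrong born in?", TOY_KG)
print(best, scores)  # Wapakoneta {'Wapakoneta': 1.0, 'Astronaut': 0.0}
```

Building one sequence per candidate mirrors how cross-encoder rerankers commonly score answers; the diagram does not show whether candidates are scored individually or jointly.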

Arrows show the direction of information flow: the curved arrow from the KGs to the LLMs represents retrieval, and the straight arrows carry data between the other components.

### Key Observations

The diagram highlights the use of LLMs in both the Relation/entity Extractor and the Answer Reasoner. The special tokens [CLS] and [SEP], the input conventions of BERT-style encoders, suggest that the reasoner is a transformer-based model. The diagram focuses on the process rather than on specific data or numerical values.
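To make the role of the special tokens concrete, the sketch below splits a formatted sequence at its [SEP] markers and scores it with a linear head over the [CLS] representation, the usual way BERT-style encoders produce a sequence-level score. It assumes whitespace tokenization (real models use subword tokenizers) and uses made-up values for the [CLS] hidden state `h_cls`, the weights `w`, and the bias `b`; BERT's own classification head also includes a pooling layer, omitted here as a simplification.

```python
import math
from typing import List


def split_segments(sequence: str) -> List[List[str]]:
    """Split a '[CLS] ... [SEP] ... [SEP]' string into its segments, using the
    special tokens as delimiters (whitespace tokenization, for illustration)."""
    tokens = sequence.split()
    if not tokens or tokens[0] != "[CLS]":
        raise ValueError("sequence must start with [CLS]")
    segments: List[List[str]] = []
    current: List[str] = []
    for tok in tokens[1:]:
        if tok == "[SEP]":
            segments.append(current)
            current = []
        else:
            current.append(tok)
    return segments


def cls_score(h_cls: List[float], w: List[float], b: float) -> float:
    """Linear layer + sigmoid over the [CLS] hidden state.
    h_cls, w and b are hypothetical values, not taken from a trained model."""
    logit = sum(hi * wi for hi, wi in zip(h_cls, w)) + b
    return 1.0 / (1.0 + math.exp(-logit))


seq = ("[CLS] Where was Neil Armstrong born in? [SEP] "
       "Neil Armstrong BornIn Wapakoneta [SEP] Wapakoneta [SEP]")
print(split_segments(seq))
print(cls_score([0.5, -1.0, 2.0], [1.0, 0.0, 0.5], -0.5))  # sigmoid(1.0), about 0.731
```

Because [CLS] always sits at position 0, the model has a fixed place to aggregate information from the question, facts, and candidate, which is why a single score can be read off that position.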

### Interpretation

This diagram illustrates a common architecture for knowledge graph-based question answering. It combines the strengths of knowledge graphs (structured, factual knowledge) and LLMs (natural language understanding and reasoning): the LLMs extract the relevant entity and relation from the question and then reason over the retrieved facts to rank candidate answers. The score most likely represents the model's confidence that a candidate is the correct answer. Transformer-based LLMs such as the one implied by the [CLS]/[SEP] input format are currently state-of-the-art in natural language processing. The design is modular, so each component could in principle be replaced or improved independently. Answers are grounded in the facts stored in the KGs. The diagram gives no information about the size or content of the KGs or about which LLMs are used.
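If the score is a confidence, one common way to obtain it is to normalize the reasoner's raw per-candidate scores with a softmax and report the probability of the top candidate. The sketch below assumes that interpretation; the diagram does not say how the score is computed, and the logits are invented for illustration.

```python
import math
from typing import Dict


def softmax_confidence(logits: Dict[str, float]) -> Dict[str, float]:
    """Turn raw per-candidate scores into probabilities.
    Subtracting the max logit keeps exp() numerically stable."""
    m = max(logits.values())
    exps = {c: math.exp(s - m) for c, s in logits.items()}
    total = sum(exps.values())
    return {c: e / total for c, e in exps.items()}


# Hypothetical raw scores an answer reasoner might emit for three candidates.
logits = {"Wapakoneta": 3.0, "Ohio": 1.0, "Astronaut": -2.0}
probs = softmax_confidence(logits)
best = max(probs, key=probs.get)
print(best, round(probs[best], 3))  # Wapakoneta 0.876
```

A softmax makes candidate confidences sum to one, so they compete with each other; an alternative is an independent sigmoid per candidate, which allows several answers to be judged correct at once.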