
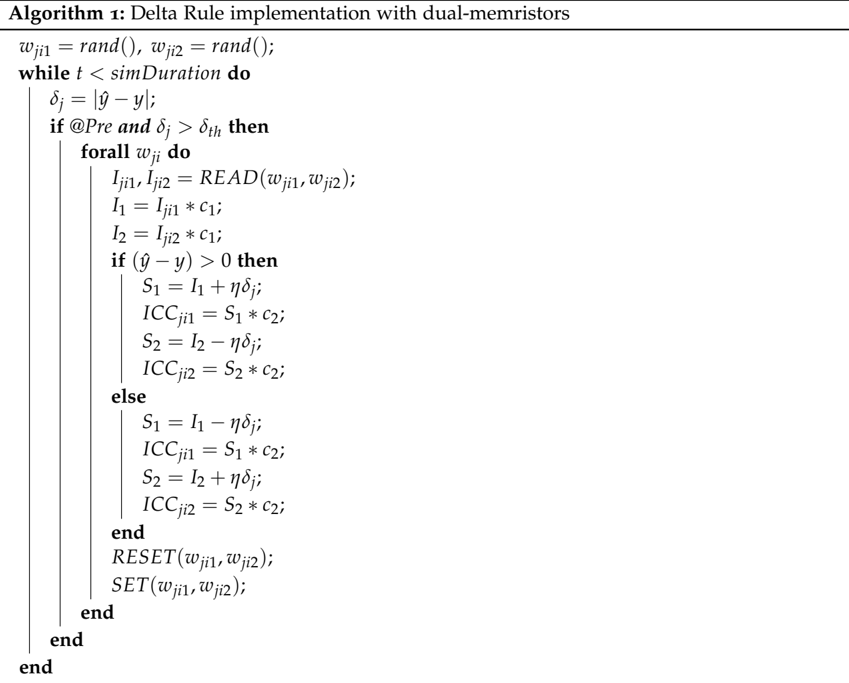

## Algorithm: Delta Rule Implementation with Dual-Memristors

### Overview

The image shows a pseudocode algorithm titled "Delta Rule Implementation with Dual-Memristors". It outlines a learning rule, likely for a neural network, in which each weight `wji` appears to be represented by two memristor devices (`wji1` and `wji2`). The algorithm iteratively adjusts these weights based on the difference between a target output and an actual output.

### Components

The content is structured as a pseudocode block with the following key elements:

* **Initialization:** `wji1 = rand(); wji2 = rand();` - Initializes weights `wji1` and `wji2` with random values.

* **Loop Condition:** `while t < simDuration do` - The algorithm iterates as long as the simulation time `t` is less than `simDuration`.

* **Error Calculation:** `δj = |ŷ - y|` - Calculates the error `δj` as the absolute difference between the target output `ŷ` and the actual output `y`.

* **Conditional Statement:** `if @Pre and δj > δth then` - Executes the following block only if the condition `@Pre` is true and the error `δj` is greater than a threshold `δth`.

* **Inner Loop:** `forall wji do` - Iterates through all weights `wji`.

* **Read Operation:** `Iji1, Iji2 = READ(wji1, wji2);` - Reads the memristors `wji1` and `wji2` and stores the resulting read currents in `Iji1` and `Iji2`.

* **Intermediate Calculations:** `I1 = Iji1 * c1; I2 = Iji2 * c1;` - Calculates intermediate values `I1` and `I2` by multiplying the read currents with a constant `c1`.

* **Conditional Update (Positive Error):** `if (ŷ - y) > 0 then` - Runs when the target output exceeds the actual output.

    * `S1 = I1 + ηδj; ICCji1 = S1 * c2;` - Adds `ηδj` to `I1` to form `S1`, then scales `S1` by `c2` to obtain `ICCji1`.

    * `S2 = I2 - ηδj; ICCji2 = S2 * c2;` - Subtracts `ηδj` from `I2` to form `S2`, then scales `S2` by `c2` to obtain `ICCji2`.

* **Conditional Update (Negative Error):** `else` - Runs when `ŷ - y` is not positive. Because this branch is only reached when `δj > δth`, the difference is strictly negative here as long as `δth ≥ 0`.

    * `S1 = I1 - ηδj; ICCji1 = S1 * c2;` - Subtracts `ηδj` from `I1` to form `S1`, then scales `S1` by `c2` to obtain `ICCji1`.

    * `S2 = I2 + ηδj; ICCji2 = S2 * c2;` - Adds `ηδj` to `I2` to form `S2`, then scales `S2` by `c2` to obtain `ICCji2`.

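* **Reset Operation:** `RESET(wji1, wji2);` - Resets both memristors, presumably returning them to a baseline state before reprogramming.

* **Set Operation:** `SET(wji1, wji2);` - Sets both memristors. The computed `ICCji1` and `ICCji2` are not passed explicitly, but they are presumably the currents that control this programming step.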

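* **End Statements:** `end`, `end` - Close the open blocks. Only two `end` keywords are listed here, although the pseudocode opens four blocks (`while`, the outer `if`, `forall`, and the inner `if`/`else`).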
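The per-weight update described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `read`, `delta_rule_step`, and `v_read` are hypothetical, each weight is treated as a conductance so that `READ` returns a current, and `RESET`/`SET` are not modeled because the pseudocode does not say how `ICCji1` and `ICCji2` map to a new device state.

```python
def read(w1, w2, v_read=0.1):
    """Hypothetical READ: treat each weight as a conductance, return I = G * V."""
    return w1 * v_read, w2 * v_read


def delta_rule_step(w1, w2, y_target, y_actual, pre_active,
                    eta=0.1, delta_th=0.05, c1=1.0, c2=1.0):
    """One pass of the outer if-block for a single dual-memristor weight.

    Returns (icc1, icc2), the values the pseudocode calls ICCji1 and ICCji2,
    or None when the update condition is not met.
    """
    # Error magnitude: delta_j = |y_hat - y|
    delta = abs(y_target - y_actual)

    # Update only when the @Pre condition holds and the error exceeds the threshold
    if not (pre_active and delta > delta_th):
        return None

    # READ both devices, then scale the read currents by c1
    i_raw1, i_raw2 = read(w1, w2)
    i1, i2 = i_raw1 * c1, i_raw2 * c1

    # The sign of the error selects which device is pushed up and which down
    if (y_target - y_actual) > 0:
        s1, s2 = i1 + eta * delta, i2 - eta * delta
    else:
        s1, s2 = i1 - eta * delta, i2 + eta * delta

    # Scale the sums by c2 to obtain ICCji1 and ICCji2
    return s1 * c2, s2 * c2


if __name__ == "__main__":
    # Positive error: the first value should rise, the second should fall
    print(delta_rule_step(5.0, 5.0, y_target=1.0, y_actual=0.0, pre_active=True))
    # Error below threshold: no update is triggered
    print(delta_rule_step(5.0, 5.0, y_target=0.52, y_actual=0.5, pre_active=True))
```

Returning the two currents rather than new weights keeps the sketch within what the pseudocode actually specifies; a device model for `SET` would be needed to close the loop.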

### Detailed Analysis or Content Details

The algorithm describes a learning process in which weights are adjusted based on an error signal. The `READ`, `RESET`, and `SET` operations suggest that it is designed for memristor-based hardware. The constants `c1` and `c2` are scaling factors: `c1` scales the read currents before the update term is added, and `c2` scales the resulting sums into `ICCji1` and `ICCji2`. They likely reflect device or circuit characteristics. The parameter `η` (eta) appears to be a learning rate. The `@Pre` condition is not defined in the pseudocode; it may denote a presynaptic event or another hardware-specific trigger.

### Key Observations

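The algorithm implements a delta rule, a classic supervised learning algorithm. The two memristors of each weight are always updated in opposite directions: when one receives `+ηδj`, the other receives `-ηδj`, and the sign of the error decides which is which. Storing the weights in memristors ties the update to device behavior, which is typically non-linear, and may offer energy savings, though the pseudocode quantifies neither. The `READ` operation shows that each memristor's state is read before the weights are updated, so every update starts from the current device state.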

### Interpretation

This algorithm demonstrates a potential implementation of a neural network learning rule using memristor devices. It relies on two properties of memristors, their ability to retain state and to perform analog computation, to implement weight storage and updates. The `READ`, `RESET`, and `SET` operations are the interface to the memristor hardware. Performance would depend on the scaling constants `c1` and `c2`, on the characteristics of the devices themselves, and on the learning rate `η`. The `@Pre` condition suggests that learning is gated by a hardware-level event.

The error calculation `δj = |ŷ - y|` discards the sign of the error, so the threshold test `δj > δth` depends only on its magnitude. The sign is reintroduced by the inner `if (ŷ - y) > 0` test, which selects the direction of the update. Combining the magnitude with the sign in this way reproduces the signed error term of the conventional delta rule.

The algorithm is a simplified model and may require further refinement for practical applications, such as handling multiple layers or more complex activation functions.
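As a compact restatement of the two branches, the update can be written with a sign function. The $\operatorname{sgn}$ notation and the index $k$ are introduced here for illustration and do not appear in the pseudocode:

$$
\begin{aligned}
S_1 &= c_1 I_{ji1} + \operatorname{sgn}(\hat{y} - y)\,\eta\,\delta_j, &
S_2 &= c_1 I_{ji2} - \operatorname{sgn}(\hat{y} - y)\,\eta\,\delta_j, \\
I_{CC,jik} &= c_2\, S_k, \quad k \in \{1, 2\}.
\end{aligned}
$$

Since $\delta_j = |\hat{y} - y|$, the product $\operatorname{sgn}(\hat{y} - y)\,\delta_j$ equals $\hat{y} - y$, so

$$
S_1 - S_2 = c_1\,(I_{ji1} - I_{ji2}) + 2\,\eta\,(\hat{y} - y).
$$

If the effective weight is taken to be proportional to the difference between the two devices (an assumption; the pseudocode does not state how the pair is combined), each update shifts that difference by an amount proportional to the signed error.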