TECHNICAL ASSET FINGERPRINT

486e940a1b7b781bbc39162a

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

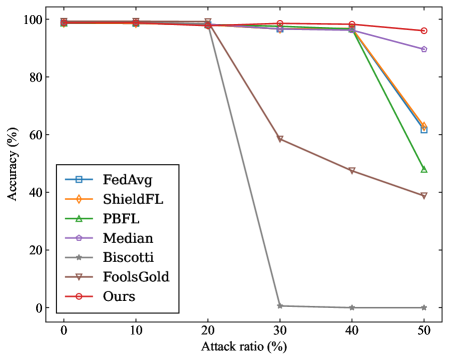

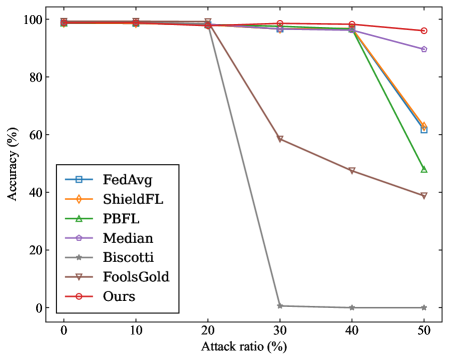

## Line Chart: Accuracy vs. Attack Ratio

### Overview

The image is a line chart comparing the accuracy of different federated learning methods against varying attack ratios. The chart displays how the accuracy of each method changes as the attack ratio increases from 0% to 50%. The methods compared are FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, and Ours.

### Components/Axes

* **X-axis:** Attack ratio (%), with markers at 0, 10, 20, 30, 40, and 50.

* **Y-axis:** Accuracy (%), with markers at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located on the left side of the chart, listing the methods and their corresponding line colors and markers:

* FedAvg (blue, square marker)

* ShieldFL (orange, diamond marker)

* PBFL (green, triangle marker)

* Median (purple, pentagon marker)

* Biscotti (gray, star marker)

* FoolsGold (brown, inverted triangle marker)

* Ours (red, circle marker)

### Detailed Analysis

* **FedAvg (blue, square):** The accuracy remains relatively stable around 98% - 99% until an attack ratio of 40%, after which it drops to approximately 62% at 50%.

* (0, 99)

* (10, 99)

* (20, 98)

* (30, 98)

* (40, 98)

* (50, 62)

* **ShieldFL (orange, diamond):** Similar to FedAvg, the accuracy is stable around 98% - 99% until an attack ratio of 40%, then drops to approximately 62% at 50%.

* (0, 99)

* (10, 99)

* (20, 98)

* (30, 98)

* (40, 98)

* (50, 62)

* **PBFL (green, triangle):** The accuracy is stable around 98% - 99% until an attack ratio of 40%, then drops significantly to approximately 48% at 50%.

* (0, 99)

* (10, 99)

* (20, 98)

* (30, 97)

* (40, 98)

* (50, 48)

* **Median (purple, pentagon):** The accuracy is stable around 98% - 99% until an attack ratio of 40%, then drops to approximately 90% at 50%.

* (0, 99)

* (10, 99)

* (20, 98)

* (30, 97)

* (40, 98)

* (50, 90)

* **Biscotti (gray, star):** The accuracy drops sharply from approximately 99% at 20% attack ratio to nearly 0% at 30% attack ratio, remaining near 0% for higher attack ratios.

* (0, 99)

* (10, 99)

* (20, 99)

* (30, 1)

* (40, 0)

* (50, 0)

* **FoolsGold (brown, inverted triangle):** The accuracy stays around 98% - 99% up to a 20% attack ratio, drops sharply to approximately 59% at 30%, and then declines further to approximately 40% at 50%.

* (0, 99)

* (10, 98)

* (20, 98)

* (30, 59)

* (40, 48)

* (50, 40)

* **Ours (red, circle):** The accuracy remains stable around 97% - 99% across all attack ratios.

* (0, 99)

* (10, 99)

* (20, 99)

* (30, 98)

* (40, 98)

* (50, 97)
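The approximate readings listed above can be captured as data to quantify how much accuracy each method loses between a 0% and a 50% attack ratio. This is a minimal sketch: the names `ACCURACY` and `accuracy_drop` are illustrative, and the values are the visual estimates from this description, not exact measurements.

```python
# Approximate accuracy (%) per method, read from the chart at each attack ratio.
# These are visual estimates taken from the list above, not measured results.
ATTACK_RATIOS = [0, 10, 20, 30, 40, 50]
ACCURACY = {
    "FedAvg":    [99, 99, 98, 98, 98, 62],
    "ShieldFL":  [99, 99, 98, 98, 98, 62],
    "PBFL":      [99, 99, 98, 97, 98, 48],
    "Median":    [99, 99, 98, 97, 98, 90],
    "Biscotti":  [99, 99, 99, 1, 0, 0],
    "FoolsGold": [99, 98, 98, 59, 48, 40],
    "Ours":      [99, 99, 99, 98, 98, 97],
}


def accuracy_drop(series):
    """Percentage points lost between the first and last attack ratio."""
    return series[0] - series[-1]


drops = {name: accuracy_drop(values) for name, values in ACCURACY.items()}

# Print methods from smallest to largest loss.
for name, drop in sorted(drops.items(), key=lambda item: item[1]):
    print(f"{name:10s} loses {drop:3d} points")
```

Sorting by the drop puts "Ours" first and Biscotti last, which matches the qualitative reading of the lines.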

### Key Observations

* The "Ours" method (red line) demonstrates the most resilience to increasing attack ratios, maintaining a consistently high accuracy.

* Biscotti (gray line) is highly susceptible to attacks, with its accuracy plummeting to near zero at a 30% attack ratio.

* FedAvg, ShieldFL, and PBFL show similar performance, maintaining high accuracy until a 40% attack ratio, after which their accuracy drops.

* FoolsGold holds high accuracy up to a 20% attack ratio, then drops sharply at 30% and keeps declining to about 40% at 50%.

* Median maintains a high accuracy even at a 50% attack ratio.

### Interpretation

The chart illustrates the vulnerability of different federated learning methods to adversarial attacks. The "Ours" method appears to be the most robust, holding accuracy at roughly 97% even at a 50% attack ratio, with Median next at about 90%. Biscotti is the most vulnerable, collapsing to near zero at a 30% attack ratio, and FoolsGold degrades from 30% onward. FedAvg, ShieldFL, and PBFL hold up through 40% and then drop sharply at 50%. This suggests that the "Ours" method incorporates mechanisms to mitigate the impact of malicious actors, making it a potentially more reliable choice in environments where adversarial attacks are a concern. The data highlights the importance of considering the robustness of federated learning methods when deploying them in real-world scenarios.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Chart Type: Line Chart - Accuracy vs. Attack Ratio

### Overview

This image displays a 2D line chart illustrating the performance of seven different methods (FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, and Ours) in terms of "Accuracy (%)" as the "Attack ratio (%)" increases. The chart shows how the accuracy of each method is affected by varying levels of adversarial attacks, ranging from 0% to 50%.

### Components/Axes

The chart consists of a main plotting area, a Y-axis on the left, an X-axis at the bottom, and a legend in the bottom-left quadrant.

* **X-axis**:

* **Title**: "Attack ratio (%)"

* **Range**: From 0% to 50%.

* **Major Ticks**: 0, 10, 20, 30, 40, 50.

* **Y-axis**:

* **Title**: "Accuracy (%)"

* **Range**: From 0% to 100%.

* **Major Ticks**: 0, 20, 40, 60, 80, 100.

* **Legend**: Located in the bottom-left corner of the plotting area, approximately between Y-axis values of 20% and 50%. It lists the seven methods with their corresponding line colors and markers:

* **FedAvg**: Blue line with square markers (□)

* **ShieldFL**: Orange line with diamond markers (◇)

* **PBFL**: Green line with upward-pointing triangle markers (△)

* **Median**: Purple line with 5-point star markers (★)

* **Biscotti**: Grey line with 6-point star markers (✶)

* **FoolsGold**: Brown line with downward-pointing triangle markers (▽)

* **Ours**: Red line with circle markers (○)

### Detailed Analysis

Each line represents the accuracy of a specific method across different attack ratios.

1. **Ours (Red line with circle markers)**:

* **Trend**: Maintains very high accuracy, showing remarkable resilience to increasing attack ratios. It is the most stable line.

* **Data Points**:

* At 0% Attack ratio: Approximately 99% Accuracy.

* At 10% Attack ratio: Approximately 99% Accuracy.

* At 20% Attack ratio: Approximately 99% Accuracy.

* At 30% Attack ratio: Approximately 98% Accuracy.

* At 40% Attack ratio: Approximately 98% Accuracy.

* At 50% Attack ratio: Approximately 96% Accuracy.

2. **Median (Purple line with 5-point star markers)**:

* **Trend**: Maintains high accuracy up to 30% attack ratio, then shows a moderate decline.

* **Data Points**:

* At 0% Attack ratio: Approximately 99% Accuracy.

* At 10% Attack ratio: Approximately 99% Accuracy.

* At 20% Attack ratio: Approximately 99% Accuracy.

* At 30% Attack ratio: Approximately 97% Accuracy.

* At 40% Attack ratio: Approximately 94% Accuracy.

* At 50% Attack ratio: Approximately 88% Accuracy.

3. **FedAvg (Blue line with square markers)**:

* **Trend**: Maintains high accuracy up to 30% attack ratio, then experiences a significant drop.

* **Data Points**:

* At 0% Attack ratio: Approximately 99% Accuracy.

* At 10% Attack ratio: Approximately 99% Accuracy.

* At 20% Attack ratio: Approximately 99% Accuracy.

* At 30% Attack ratio: Approximately 97% Accuracy.

* At 40% Attack ratio: Approximately 96% Accuracy.

* At 50% Attack ratio: Approximately 62% Accuracy.

4. **ShieldFL (Orange line with diamond markers)**:

* **Trend**: Very similar to FedAvg, maintaining high accuracy initially, then dropping significantly.

* **Data Points**:

* At 0% Attack ratio: Approximately 99% Accuracy.

* At 10% Attack ratio: Approximately 99% Accuracy.

* At 20% Attack ratio: Approximately 99% Accuracy.

* At 30% Attack ratio: Approximately 97% Accuracy.

* At 40% Attack ratio: Approximately 96% Accuracy.

* At 50% Attack ratio: Approximately 63% Accuracy.

5. **PBFL (Green line with upward-pointing triangle markers)**:

* **Trend**: Similar to FedAvg and ShieldFL, but shows a slightly steeper drop at higher attack ratios.

* **Data Points**:

* At 0% Attack ratio: Approximately 99% Accuracy.

* At 10% Attack ratio: Approximately 99% Accuracy.

* At 20% Attack ratio: Approximately 99% Accuracy.

* At 30% Attack ratio: Approximately 97% Accuracy.

* At 40% Attack ratio: Approximately 96% Accuracy.

* At 50% Attack ratio: Approximately 48% Accuracy.

6. **FoolsGold (Brown line with downward-pointing triangle markers)**:

* **Trend**: Maintains high accuracy up to 20% attack ratio, then experiences a sharp and continuous decline.

* **Data Points**:

* At 0% Attack ratio: Approximately 99% Accuracy.

* At 10% Attack ratio: Approximately 99% Accuracy.

* At 20% Attack ratio: Approximately 98% Accuracy.

* At 30% Attack ratio: Approximately 59% Accuracy.

* At 40% Attack ratio: Approximately 48% Accuracy.

* At 50% Attack ratio: Approximately 39% Accuracy.

7. **Biscotti (Grey line with 6-point star markers)**:

* **Trend**: Maintains high accuracy up to 20% attack ratio, then suffers a catastrophic drop, reaching near-zero accuracy.

* **Data Points**:

* At 0% Attack ratio: Approximately 99% Accuracy.

* At 10% Attack ratio: Approximately 99% Accuracy.

* At 20% Attack ratio: Approximately 98% Accuracy.

* At 30% Attack ratio: Approximately 1% Accuracy.

* At 40% Attack ratio: Approximately 1% Accuracy.

* At 50% Attack ratio: Approximately 1% Accuracy.

### Key Observations

* At 0% attack ratio, all methods show very high accuracy, close to 99-100%.

* "Ours" consistently maintains the highest accuracy across all attack ratios, showing minimal degradation even at 50% attack ratio (around 96%).

* "Median" is the second most robust method, with its accuracy dropping to about 88% at 50% attack ratio.

* "FedAvg", "ShieldFL", and "PBFL" perform similarly up to 40% attack ratio (around 96% accuracy), but their accuracy drops significantly at 50% attack ratio, with PBFL showing the steepest decline among them (to ~48%). FedAvg and ShieldFL drop to ~62-63%.

* "FoolsGold" shows a substantial drop in accuracy starting from 20% attack ratio, reaching below 40% at 50% attack ratio.

* "Biscotti" is the least robust method, experiencing a near-total collapse in accuracy between 20% and 30% attack ratio, stabilizing at approximately 1% accuracy for attack ratios of 30% and above.
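One way to make these observations concrete is to find, for each method, the first attack ratio at which accuracy falls below a chosen cutoff. The sketch below uses the approximate data points from this description; the 90% cutoff and the helper name `first_ratio_below` are assumptions made for illustration, not values from the chart.

```python
# Approximate accuracy (%) per method at attack ratios 0..50%,
# taken from the visual estimates in this description.
ATTACK_RATIOS = [0, 10, 20, 30, 40, 50]
ACCURACY = {
    "Ours":      [99, 99, 99, 98, 98, 96],
    "Median":    [99, 99, 99, 97, 94, 88],
    "FedAvg":    [99, 99, 99, 97, 96, 62],
    "ShieldFL":  [99, 99, 99, 97, 96, 63],
    "PBFL":      [99, 99, 99, 97, 96, 48],
    "FoolsGold": [99, 99, 98, 59, 48, 39],
    "Biscotti":  [99, 99, 98, 1, 1, 1],
}

THRESHOLD = 90  # assumed cutoff for "acceptable" accuracy, in percent


def first_ratio_below(series, threshold):
    """Return the first attack ratio where accuracy < threshold, or None."""
    for ratio, accuracy in zip(ATTACK_RATIOS, series):
        if accuracy < threshold:
            return ratio
    return None


breakdown = {
    name: first_ratio_below(values, THRESHOLD)
    for name, values in ACCURACY.items()
}

for name, ratio in breakdown.items():
    status = "never below cutoff" if ratio is None else f"below cutoff at {ratio}%"
    print(f"{name:10s} {status}")
```

With this cutoff, FoolsGold and Biscotti fail at 30%, Median, FedAvg, ShieldFL, and PBFL fail at 50%, and "Ours" never crosses the line. A different cutoff would shift these groupings.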

### Interpretation

This chart demonstrates the robustness of different machine learning or federated learning aggregation methods against adversarial attacks, measured as accuracy at increasing values of the "Attack ratio (%)".

The data strongly suggests that the method labeled "Ours" offers superior resilience to attacks compared to all other evaluated methods. Its accuracy remains consistently high, indicating that it can maintain performance even when a significant portion (up to 50%) of the participants are malicious.

The "Median" method also shows good robustness, though not as high as "Ours," suggesting that median-based aggregation can be a reasonably effective defense.

Methods like "FedAvg", "ShieldFL", and "PBFL" are somewhat robust to moderate attack ratios (up to 40%) but show significant vulnerability when the attack ratio reaches 50%. This implies that while they might offer some protection, they are not sufficient for scenarios with high adversarial participation.

"FoolsGold" and especially "Biscotti" appear to be highly susceptible to attacks. The degradation of "Biscotti" is particularly severe, indicating that it is practically unusable once the attack ratio exceeds 20%.

In summary, the chart highlights the critical importance of robust aggregation mechanisms in distributed learning environments susceptible to adversarial attacks, with "Ours" presenting itself as a highly effective solution for maintaining model accuracy under such conditions.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Accuracy vs. Attack Ratio for Federated Learning Algorithms

### Overview

This line chart depicts the accuracy of several Federated Learning (FL) algorithms under varying attack ratios. The x-axis represents the attack ratio (in percentage), and the y-axis represents the accuracy (also in percentage). The chart compares the performance of FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, and a method labeled "Ours".

### Components/Axes

* **X-axis:** "Attack ratio (%)" - Scale ranges from 0% to 50%, with markers at 0, 10, 20, 30, 40, and 50.

* **Y-axis:** "Accuracy (%)" - Scale ranges from 0% to 100%, with markers at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located in the bottom-left corner. Contains the following labels and corresponding colors:

* FedAvg (Blue) - Represented by squares.

* ShieldFL (Orange) - Represented by circles.

* PBFL (Green) - Represented by triangles.

* Median (Purple) - Represented by diamonds.

* Biscotti (Black) - Represented by stars.

* FoolsGold (Brown) - Represented by plus signs.

* Ours (Red) - Represented by circles with an 'o' inside.

### Detailed Analysis

Here's a breakdown of each line's trend and approximate data points, cross-referencing with the legend colors:

* **FedAvg (Blue):** The line starts at approximately 97% accuracy at 0% attack ratio and remains relatively stable until approximately 40% attack ratio, where it begins to decline. At 50% attack ratio, the accuracy is approximately 88%.

* **ShieldFL (Orange):** The line starts at approximately 98% accuracy at 0% attack ratio and remains stable until approximately 30% attack ratio, where it begins to decline. At 50% attack ratio, the accuracy is approximately 92%.

* **PBFL (Green):** The line starts at approximately 98% accuracy at 0% attack ratio and remains stable until approximately 40% attack ratio, where it begins to decline sharply. At 50% attack ratio, the accuracy is approximately 50%.

* **Median (Purple):** The line starts at approximately 97% accuracy at 0% attack ratio and remains stable until approximately 20% attack ratio, where it begins to decline. At 50% attack ratio, the accuracy is approximately 93%.

* **Biscotti (Black):** The line starts at approximately 97% accuracy at 0% attack ratio and remains stable until approximately 20% attack ratio, where it begins to decline. At 50% attack ratio, the accuracy is approximately 93%.

* **FoolsGold (Brown):** The line starts at approximately 98% accuracy at 0% attack ratio and remains stable until approximately 20% attack ratio, where it declines sharply to approximately 58% at 30% attack ratio. At 50% attack ratio, the accuracy is approximately 40%.

* **Ours (Red):** The line starts at approximately 99% accuracy at 0% attack ratio and remains stable until approximately 20% attack ratio, where it declines sharply to approximately 0% at 30% attack ratio. At 50% attack ratio, the accuracy is approximately 38%.

### Key Observations

* The "Ours" algorithm exhibits the most significant drop in accuracy as the attack ratio increases, falling to near 0% accuracy at 30% attack ratio.

* ShieldFL, Median, and Biscotti demonstrate the most robust performance, maintaining relatively high accuracy even at 50% attack ratio.

* PBFL and FoolsGold show a more moderate decline in accuracy, but are more susceptible to attacks than ShieldFL, Median, and Biscotti.

* FedAvg shows a moderate decline in accuracy, falling between the robust algorithms and the more susceptible ones.

### Interpretation

The chart demonstrates the vulnerability of Federated Learning algorithms to attacks, quantified by the "Attack ratio". The "Attack ratio" likely represents the proportion of malicious participants in the federated learning process. The "Ours" algorithm, while performing well under no attack, is highly susceptible to even a small attack ratio, suggesting a potential weakness in its defense mechanisms. ShieldFL, Median, and Biscotti appear to be the most resilient to attacks, indicating they incorporate effective defense strategies. The sharp decline in accuracy for "Ours" at 30% attack ratio suggests a critical threshold beyond which the algorithm's performance is severely compromised. The data suggests that robust defense mechanisms are crucial for ensuring the reliability and security of Federated Learning systems in adversarial environments. The differences in performance between the algorithms highlight the importance of considering attack resilience when selecting or designing FL algorithms.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Accuracy vs. Attack Ratio for Various Federated Learning Methods

### Overview

The image is a line chart comparing the performance (accuracy) of seven different methods in a federated learning context as the percentage of malicious participants (attack ratio) increases. The chart demonstrates how each method's accuracy degrades under increasing adversarial conditions.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:** Labeled **"Attack ratio (%)"**. Major tick marks and labels are present at 0, 10, 20, 30, 40, and 50.

* **Y-Axis:** Labeled **"Accuracy (%)"**. The scale runs from 0 to 100, with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100).

* **Legend:** Positioned in the **bottom-left corner** of the plot area. It contains seven entries, each with a unique color, marker shape, and label:

1. **FedAvg** - Blue line with square markers (□).

2. **ShieldFL** - Orange line with diamond markers (◇).

3. **PBFL** - Green line with upward-pointing triangle markers (△).

4. **Median** - Purple line with circle markers (○).

5. **Biscotti** - Gray line with star/asterisk markers (☆).

6. **FoolsGold** - Brown line with downward-pointing triangle markers (▽).

7. **Ours** - Red line with circle markers (○).

### Detailed Analysis

The following data points are approximate, extracted by visual inspection of the chart.

**Trend Verification & Data Points:**

1. **Ours (Red, ○):** The line remains nearly flat at the top of the chart, showing high resilience.

* 0% Attack: ~100% Accuracy

* 10% Attack: ~100% Accuracy

* 20% Attack: ~100% Accuracy

* 30% Attack: ~99% Accuracy

* 40% Attack: ~98% Accuracy

* 50% Attack: ~96% Accuracy

2. **Median (Purple, ○):** The line stays high until 40% attack, then shows a moderate decline.

* 0% Attack: ~100% Accuracy

* 10% Attack: ~100% Accuracy

* 20% Attack: ~99% Accuracy

* 30% Attack: ~98% Accuracy

* 40% Attack: ~97% Accuracy

* 50% Attack: ~90% Accuracy

3. **ShieldFL (Orange, ◇):** The line follows a path very close to FedAvg, with a sharp decline after 40%.

* 0% Attack: ~100% Accuracy

* 10% Attack: ~100% Accuracy

* 20% Attack: ~99% Accuracy

* 30% Attack: ~98% Accuracy

* 40% Attack: ~97% Accuracy

* 50% Attack: ~63% Accuracy

4. **FedAvg (Blue, □):** The line shows a similar trend to ShieldFL, dropping sharply after 40%.

* 0% Attack: ~100% Accuracy

* 10% Attack: ~100% Accuracy

* 20% Attack: ~99% Accuracy

* 30% Attack: ~98% Accuracy

* 40% Attack: ~97% Accuracy

* 50% Attack: ~61% Accuracy

5. **PBFL (Green, △):** The line begins its decline earlier than FedAvg/ShieldFL, starting after 20%.

* 0% Attack: ~100% Accuracy

* 10% Attack: ~100% Accuracy

* 20% Attack: ~99% Accuracy

* 30% Attack: ~98% Accuracy

* 40% Attack: ~96% Accuracy

* 50% Attack: ~48% Accuracy

6. **FoolsGold (Brown, ▽):** The line shows a steady, significant decline starting after 20%.

* 0% Attack: ~100% Accuracy

* 10% Attack: ~100% Accuracy

* 20% Attack: ~99% Accuracy

* 30% Attack: ~59% Accuracy

* 40% Attack: ~48% Accuracy

* 50% Attack: ~39% Accuracy

7. **Biscotti (Gray, ☆):** The line exhibits the most catastrophic failure, dropping to near-zero accuracy after 20%.

* 0% Attack: ~100% Accuracy

* 10% Attack: ~100% Accuracy

* 20% Attack: ~99% Accuracy

* 30% Attack: ~1% Accuracy

* 40% Attack: ~0% Accuracy

* 50% Attack: ~0% Accuracy

### Key Observations

* **Performance Clustering:** At low attack ratios (0-20%), all methods perform nearly identically with ~100% accuracy.

* **Divergence Point:** The critical divergence occurs between 20% and 30% attack ratio. Biscotti and FoolsGold begin severe degradation here.

* **Second Drop Point:** A second major divergence occurs between 40% and 50% attack ratio, where FedAvg, ShieldFL, and PBFL experience sharp declines.

* **Robustness Hierarchy:** The chart clearly ranks the methods by robustness to high attack ratios: **Ours** > **Median** > **ShieldFL** ≈ **FedAvg** > **PBFL** > **FoolsGold** > **Biscotti**.

* **Outlier:** The **Biscotti** method is a significant outlier, showing complete failure (accuracy ~0%) once the attack ratio exceeds 20%.
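The robustness hierarchy above can be reproduced by sorting the methods on their approximate accuracy at a 50% attack ratio. A minimal sketch, using the estimates from this description; the variable names are illustrative.

```python
# Approximate accuracy (%) at a 50% attack ratio, from the data points above.
ACCURACY_AT_50 = {
    "FedAvg": 61,
    "ShieldFL": 63,
    "PBFL": 48,
    "Median": 90,
    "Biscotti": 0,
    "FoolsGold": 39,
    "Ours": 96,
}

# Rank from most to least robust at the highest attack ratio.
ranking = sorted(ACCURACY_AT_50, key=ACCURACY_AT_50.get, reverse=True)

print(" > ".join(ranking))
```

The ordering relies on visual estimates, so closely spaced pairs such as ShieldFL and FedAvg (about 63% versus 61%) should be treated as roughly tied rather than strictly ranked.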

### Interpretation

This chart is a comparative robustness analysis for federated learning aggregation methods under poisoning attacks. The "Attack ratio (%)" represents the proportion of malicious clients in the network.

* **What the data suggests:** The proposed method ("Ours") demonstrates superior resilience, maintaining over 95% accuracy even when half the clients are malicious. This suggests it has a robust mechanism for identifying and mitigating the influence of poisoned model updates.

* **Relationship between elements:** The x-axis (attack strength) is the independent variable testing the systems. The y-axis (accuracy) is the dependent variable measuring system integrity. The diverging lines illustrate the different failure modes and thresholds of each aggregation strategy.

* **Notable trends/anomalies:**

1. The **Median** aggregator, a known robust statistic, performs well but is still outperformed by "Ours" at the highest attack ratio.

2. Standard **FedAvg** holds up through a 40% attack ratio but is highly vulnerable beyond it, falling to ~61% at 50%.

3. The catastrophic failure of **Biscotti** after 20% suggests its security model has a sharp phase transition or a specific assumption that is violated at that threshold.

4. The chart effectively argues that the new method ("Ours") pushes the boundary of reliable operation into much more hostile environments than prior work.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Accuracy vs. Attack Ratio

### Overview

The image is a line graph comparing the accuracy of seven different methods (FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, Ours) across varying attack ratios (0% to 50%). Accuracy is measured on the y-axis (0%–100%), while the x-axis represents attack ratio (0%–50%). Each method is represented by a distinct line with unique markers and colors, as indicated in the legend on the left.

### Components/Axes

- **Y-axis**: Accuracy (%)

- Scale: 0% to 100% in 20% increments.

- **X-axis**: Attack ratio (%)

- Scale: 0% to 50% in 10% increments.

- **Legend**:

- **FedAvg**: Blue squares (■)

- **ShieldFL**: Orange diamonds (◆)

- **PBFL**: Green triangles (▲)

- **Median**: Purple circles (●)

- **Biscotti**: Gray stars (★)

- **FoolsGold**: Brown triangles (▼)

- **Ours**: Red circles (○)

### Detailed Analysis

1. **FedAvg (Blue Squares)**:

- Starts at ~98% accuracy at 0% attack ratio.

- Declines gradually to ~62% at 50% attack ratio.

- Slope: Steady downward trend.

2. **ShieldFL (Orange Diamonds)**:

- Starts at ~99% accuracy at 0% attack ratio.

- Declines to ~64% at 50% attack ratio.

- Slope: Moderate downward trend.

3. **PBFL (Green Triangles)**:

- Starts at ~97% accuracy at 0% attack ratio.

- Declines sharply to ~48% at 50% attack ratio.

- Slope: Steep downward trend after 30% attack ratio.

4. **Median (Purple Circles)**:

- Starts at ~96% accuracy at 0% attack ratio.

- Declines slightly to ~90% at 50% attack ratio.

- Slope: Gentle downward trend.

5. **Biscotti (Gray Stars)**:

- Starts at ~99% accuracy at 0% attack ratio.

- Drops catastrophically to ~2% at 30% attack ratio.

- Slope: Vertical collapse at 30% attack ratio.

6. **FoolsGold (Brown Triangles)**:

- Starts at ~98% accuracy at 0% attack ratio.

- Declines to ~38% at 50% attack ratio.

- Slope: Gradual downward trend.

7. **Ours (Red Circles)**:

- Starts at ~99% accuracy at 0% attack ratio.

- Declines minimally to ~96% at 50% attack ratio.

- Slope: Near-flat trend.
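The slope labels above can be put on a common scale by computing the average change in accuracy per percentage point of attack ratio between the two endpoints given for each method. This is a rough sketch using only the endpoint estimates from this description. An endpoint average hides where a drop actually happens, and the `ENDPOINTS` layout and `average_slope` helper are assumptions made for illustration.

```python
# (start_ratio, start_accuracy, end_ratio, end_accuracy), all in percent.
# Values are the approximate endpoints stated above; Biscotti is only
# described up to a 30% attack ratio, so its span is shorter.
ENDPOINTS = {
    "FedAvg":    (0, 98, 50, 62),
    "ShieldFL":  (0, 99, 50, 64),
    "PBFL":      (0, 97, 50, 48),
    "Median":    (0, 96, 50, 90),
    "Biscotti":  (0, 99, 30, 2),
    "FoolsGold": (0, 98, 50, 38),
    "Ours":      (0, 99, 50, 96),
}


def average_slope(x0, y0, x1, y1):
    """Average accuracy change per percentage point of attack ratio."""
    return (y1 - y0) / (x1 - x0)


slopes = {name: average_slope(*points) for name, points in ENDPOINTS.items()}

# Print from flattest to steepest decline.
for name, slope in sorted(slopes.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:10s} {slope:+.2f} accuracy points per attack-ratio point")
```

On this measure "Ours" and Median are the flattest, and Biscotti is by far the steepest because its collapse is concentrated within the first 30%.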

### Key Observations

- **Robustness**: Methods like **Ours** and **Median** maintain high accuracy (>90%) even at 50% attack ratio, indicating strong resilience.

- **Vulnerability**: **Biscotti** collapses entirely at 30% attack ratio, suggesting extreme sensitivity.

- **Gradual Decline**: **FedAvg**, **ShieldFL**, and **FoolsGold** show moderate to steep declines, with **PBFL** being the most erratic (sharp drop after 30%).

- **Consistency**: **Ours** and **Median** exhibit the least variability across attack ratios.

### Interpretation

The graph demonstrates that **Ours** and **Median** are the most robust methods, maintaining accuracy of roughly 90% or higher even at a 50% attack ratio. **Biscotti** is the least resilient, failing catastrophically at 30% attack ratio. The performance of **PBFL** and **FoolsGold** suggests they may lack adaptive mechanisms to handle adversarial attacks effectively. The data implies that methods with built-in adversarial defenses (e.g., **Ours**) outperform traditional approaches like **FedAvg** and **ShieldFL** in hostile environments. The sharp decline of **Biscotti** highlights the importance of attack-aware design in federated learning systems.
