## Screenshot: Robot Navigation Examples Comparison

### Overview

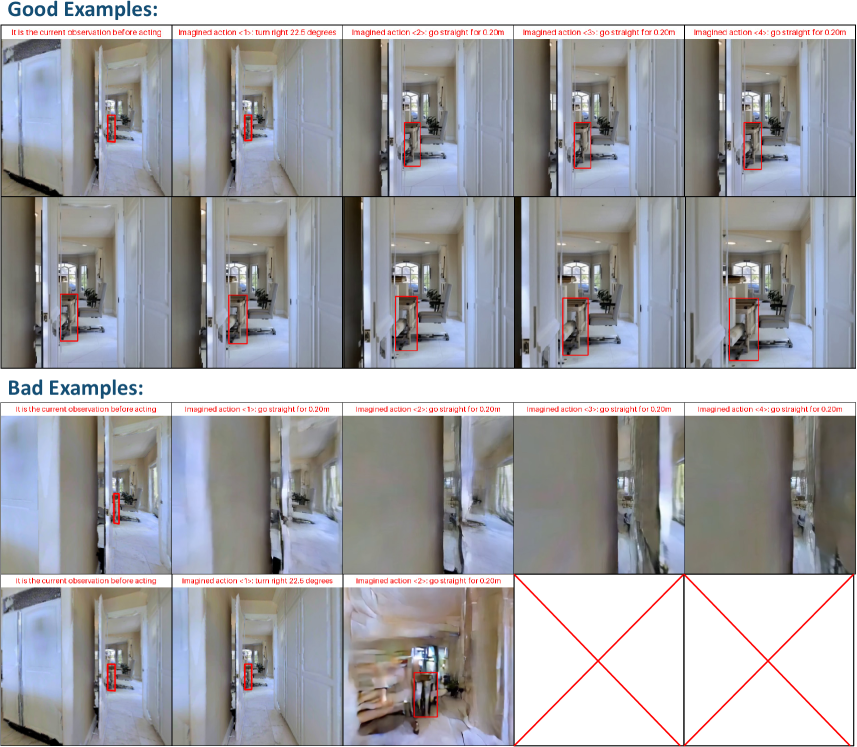

The image presents a comparative analysis of robot navigation scenarios, divided into two sections: "Good Examples" (top) and "Bad Examples" (bottom). Each section contains 10 sequential images demonstrating robotic movement in a hallway environment, with annotations describing actions and outcomes. Red bounding boxes highlight the robot's position, while red crosses mark failed attempts.

### Components/Axes

1. **Sections**:
   - **Good Examples** (Top 50%): 10 images showing successful navigation
   - **Bad Examples** (Bottom 50%): 10 images showing failed navigation attempts

2. **Image Structure**:
   - Each image contains:
     - **Top Text**: Describes current observation state
     - **Middle Text**: Specifies imagined action (e.g., "turn right 22.6 degrees")
     - **Bottom Text**: Repeats action description
   - **Annotations**:
     - Red bounding boxes (Good Examples)
     - Red crosses (Bad Examples)
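Every action caption quoted in this description follows one of two templates. As a minimal sketch, assuming the captions use exactly these wordings, they can be parsed into structured commands. The `Action` type and `parse_action` helper are hypothetical, and the `left` branch is an assumption, since only right turns appear in the figure.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str            # "turn" or "straight"
    value: float         # degrees for turns, metres for straight moves
    direction: str = ""  # "left"/"right" for turns, empty for straight moves


# Templates inferred from the captions quoted in this description (assumption).
_TURN = re.compile(r"turn (left|right) ([\d.]+) degrees")
_STRAIGHT = re.compile(r"go straight for ([\d.]+)m")


def parse_action(text: str) -> Action:
    """Parse a caption such as 'turn right 22.6 degrees' or 'go straight for 0.20m'."""
    text = text.strip().lower()
    if m := _TURN.fullmatch(text):
        return Action("turn", float(m.group(2)), m.group(1))
    if m := _STRAIGHT.fullmatch(text):
        return Action("straight", float(m.group(1)))
    raise ValueError(f"unrecognised action caption: {text!r}")


print(parse_action("turn right 22.6 degrees"))
print(parse_action("go straight for 0.20m"))
```

Failing loudly on an unrecognised caption, rather than skipping it, keeps a transcription error from silently shortening an action sequence.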

### Detailed Analysis

**Good Examples**:

1. **Image 1**:
   - Caption: "It is the current observation before acting"
   - Action: "turn right 22.6 degrees"
   - Robot position: Clear red bounding box

2. **Image 2**:
   - Action: "go straight for 0.20m"
   - Robot position: Maintained in red box

3. **Image 3**:
   - Action: "go straight for 0.20m"
   - Robot position: Consistent tracking

4. **Image 4**:
   - Action: "go straight for 0.20m"
   - Robot position: Stable trajectory

5. **Image 5**:
   - Action: "go straight for 0.20m"
   - Robot position: Final position in red box

6. **Image 6**:
   - Action: "turn right 22.6 degrees"
   - Robot position: Updated orientation

7. **Image 7**:
   - Action: "go straight for 0.20m"
   - Robot position: Maintained

8. **Image 8**:
   - Action: "go straight for 0.20m"
   - Robot position: Stable

9. **Image 9**:
   - Action: "go straight for 0.20m"
   - Robot position: Final position

10. **Image 10**:
    - Action: "go straight for 0.20m"
    - Robot position: Clear tracking
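To make the Good Examples command sequence concrete, the sketch below dead-reckons the pose it would produce. It assumes an idealized planar robot that executes each listed action once, in order, turning in place and moving exactly the commanded distance, starting at the origin facing +x, with right turns decreasing the heading. None of these conventions come from the figure, and `integrate` is a hypothetical helper.

```python
import math

# Good Examples command sequence, transcribed from the list above.
GOOD_ACTIONS = (
    [("turn_right", 22.6)] + [("straight", 0.20)] * 4
    + [("turn_right", 22.6)] + [("straight", 0.20)] * 4
)


def integrate(actions, x=0.0, y=0.0, yaw_deg=0.0):
    """Dead-reckon a planar pose from ideal in-place turns and straight moves.

    Assumed convention: yaw is measured counter-clockwise from +x,
    so a right turn decreases yaw and negative y lies to the right.
    """
    for kind, value in actions:
        if kind == "turn_right":
            yaw_deg -= value
        elif kind == "turn_left":
            yaw_deg += value
        elif kind == "straight":
            x += value * math.cos(math.radians(yaw_deg))
            y += value * math.sin(math.radians(yaw_deg))
        else:
            raise ValueError(f"unknown action kind: {kind}")
    return x, y, yaw_deg


x, y, yaw = integrate(GOOD_ACTIONS)
print(f"x={x:.3f} m, y={y:.3f} m, heading={yaw:.1f} deg")
```

Under these assumptions the ten commands cover 1.6 m of travel and end roughly 1.30 m ahead of and 0.88 m to the right of the start, heading 45.2 degrees right of the initial direction. A real robot would drift from this ideal pose, which is the kind of discrepancy the Bad Examples illustrate.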

**Bad Examples**:

1. **Image 1**:
   - Caption: "It is the current observation before acting"
   - Action: "go straight for 0.20m"
   - Issue: Motion blur

2. **Image 2**:
   - Action: "go straight for 0.20m"
   - Issue: Robot position misaligned

3. **Image 3**:
   - Action: "go straight for 0.20m"
   - Issue: Complete failure (red cross)

4. **Image 4**:
   - Action: "go straight for 0.20m"
   - Issue: Robot position off-frame

5. **Image 5**:
   - Action: "go straight for 0.20m"
   - Issue: Blurred trajectory

6. **Image 6**:
   - Action: "turn right 22.6 degrees"
   - Issue: Incorrect orientation

7. **Image 7**:
   - Action: "go straight for 0.20m"
   - Issue: Motion blur

8. **Image 8**:
   - Action: "go straight for 0.20m"
   - Issue: Robot position misaligned

9. **Image 9**:
   - Action: "go straight for 0.20m"
   - Issue: Complete failure (red cross)

10. **Image 10**:
    - Action: "go straight for 0.20m"
    - Issue: Robot position off-frame
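Writing the two per-image action lists out as sequences makes it easy to check where they diverge. The sketch below transcribes the captions from the Good and Bad Examples lists; `differing_images` is a hypothetical helper, not part of any tool described in the figure.

```python
# Action captions per image, transcribed from the two example lists.
TURN = "turn right 22.6 degrees"
STRAIGHT = "go straight for 0.20m"

good = [TURN] + [STRAIGHT] * 4 + [TURN] + [STRAIGHT] * 4
bad = [STRAIGHT] * 5 + [TURN] + [STRAIGHT] * 4


def differing_images(a, b):
    """Return the 1-based image indices at which two equal-length sequences disagree."""
    if len(a) != len(b):
        raise ValueError("sequences must have the same length")
    return [i for i, (p, q) in enumerate(zip(a, b), start=1) if p != q]


print(differing_images(good, bad))  # [1]
```

Only Image 1 differs: the Good Examples open with a turn, while the Bad Examples open with a straight move. Images 2 through 10 carry the same commands in both rows.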

### Key Observations

1. **Success Metrics**:
   - Good Examples maintain consistent red bounding box tracking
   - Bad Examples show motion blur, positional misalignment, off-frame positions, incorrect orientation, or complete failure

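2. **Action Consistency**:
   - Both sections use nearly identical action sequences: they differ only at Image 1 ("turn right 22.6 degrees" in the Good Examples vs. "go straight for 0.20m" in the Bad Examples)
   - Execution quality differs significantly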

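3. **Failure Patterns**:
   - 20% of Bad Examples (2/10, Images 3 and 9) show complete failure (red crosses)
   - The remaining 80% (8/10) show partial failures: blur (Images 1, 5, and 7), misalignment (Images 2 and 8), off-frame position (Images 4 and 10), or incorrect orientation (Image 6)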
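The failure breakdown can be recomputed directly from the Bad Examples list. This sketch transcribes the per-image issue labels and counts red-cross failures separately from everything else; treating every non-red-cross issue as a partial failure is an assumption about how the categories are meant to be grouped.

```python
from collections import Counter

# Issue label per Bad Example image, transcribed from the Detailed Analysis.
bad_issues = {
    1: "motion blur", 2: "misaligned", 3: "complete failure",
    4: "off-frame", 5: "blurred trajectory", 6: "incorrect orientation",
    7: "motion blur", 8: "misaligned", 9: "complete failure", 10: "off-frame",
}

counts = Counter(bad_issues.values())
total = len(bad_issues)
complete = counts["complete failure"]  # images marked with a red cross
partial = total - complete             # every other issue counted as partial

print(f"complete: {complete}/{total} ({complete / total:.0%})")
print(f"partial:  {partial}/{total} ({partial / total:.0%})")
```

This prints `complete: 2/10 (20%)` and `partial:  8/10 (80%)`. Keeping the raw labels in a dictionary, rather than hand-counting, also makes it easy to regroup categories (for example, merging "blurred trajectory" into "motion blur").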

### Interpretation

This comparison highlights critical factors in robotic navigation systems:

1. **Sensor Reliability**: Good Examples demonstrate stable visual tracking, while Bad Examples show image degradation (blur) that may affect localization

2. **Action Execution**: Nearly identical command sequences (differing only in the first action) yield different outcomes depending on system implementation

3. **Failure Modes**:
   - Motion blur suggests insufficient image stabilization
   - Positional misalignment may indicate odometry errors
   - Complete failures may result from collision detection issues

4. **Training Implications**: The dataset could be used to:
   - Train collision avoidance algorithms
   - Improve visual-inertial odometry systems
   - Develop motion prediction models

5. **Quality Control**: The red cross annotations provide a clear failure metric for automated evaluation systems
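Motion blur is the most frequently listed failure mode, and an automated evaluation pipeline would need some way to flag it. The figure does not say how blur is detected; one common heuristic, swapped in here purely for illustration, scores sharpness as the variance of the image's Laplacian, with low scores suggesting blur. The sketch below works on a grayscale image given as a 2-D list of intensities, and its `threshold` default is a hypothetical value that would need tuning for a real camera and scene.

```python
from statistics import pvariance


def laplacian_variance(img):
    """Sharpness score: variance of the 4-neighbour Laplacian over interior pixels.

    `img` is a 2-D list of grayscale intensities; low scores suggest blur.
    """
    h, w = len(img), len(img[0])
    if h < 3 or w < 3:
        raise ValueError("image must be at least 3x3")
    responses = [
        img[r - 1][c] + img[r + 1][c] + img[r][c - 1] + img[r][c + 1] - 4 * img[r][c]
        for r in range(1, h - 1)
        for c in range(1, w - 1)
    ]
    return pvariance(responses)


def is_blurry(img, threshold=100.0):
    # Hypothetical threshold: must be calibrated per camera and scene.
    return laplacian_variance(img) < threshold


checker = [[255 * ((r + c) % 2) for c in range(8)] for r in range(8)]  # sharp edges
flat = [[128] * 8 for _ in range(8)]                                   # no edges
print(laplacian_variance(checker), is_blurry(checker))
print(laplacian_variance(flat), is_blurry(flat))
```

A high-contrast checkerboard scores high, while a uniform gray image scores 0 and is flagged as blurry. A real pipeline would more likely use an image library's Laplacian filter, but the pure-Python version keeps the arithmetic visible.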

Documenting successful and failed navigation attempts side by side, under nearly the same command sequence, makes the figure useful for failure analysis and for recognizing the patterns that distinguish successful runs, both of which can inform improvements to autonomous navigation algorithms.