## Diagram: Federated Learning Process with Lower and Upper Models

### Overview

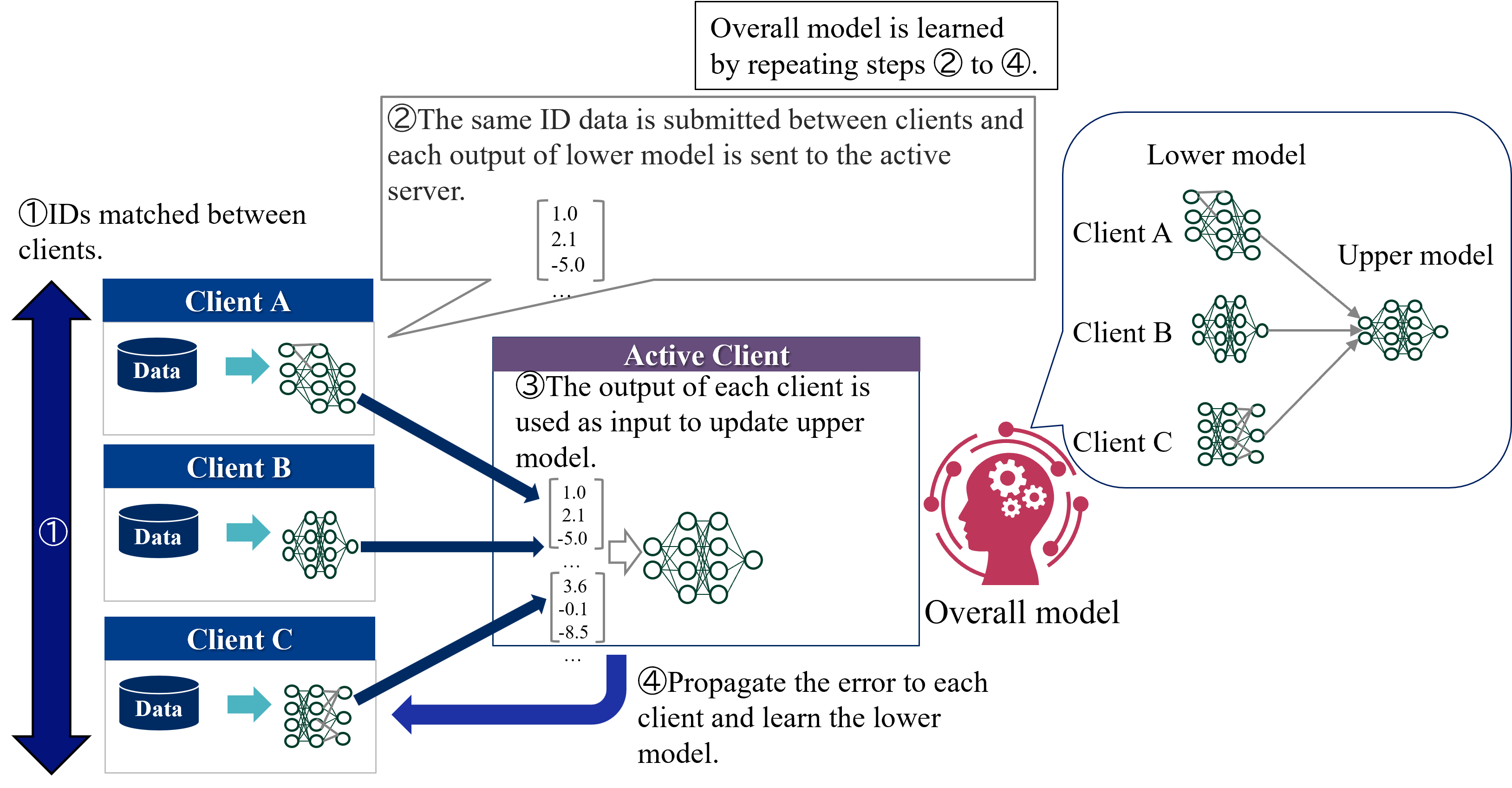

The diagram illustrates a four-step collaborative training process in the style of federated learning. Clients A, B, and C each hold local data and a "Lower model". They send lower-model outputs to an "Active Client", which trains an "Upper model", so that the combined "Overall model" is learned without centralizing raw data. The steps are: match IDs, share lower-model outputs, update the upper model, and propagate errors back to refine the lower models.

### Components/Axes

**Primary Components:**

1. **Clients (Left Column):** Three clients labeled "Client A", "Client B", and "Client C". Each is represented by a blue header box containing:

   * A cylinder icon labeled "Data".

   * A right-pointing arrow.

   * A neural network icon representing a "Lower model".

2. **Active Client (Center):** A purple-header box labeled "Active Client". It contains:

   * Text describing Step ③.

   * Two example numerical vectors (e.g., `[1.0, 2.1, -5.0]`).

   * A neural network icon representing the "Upper model".

3. **Overall Model (Right):** A red icon of a human head with gears, labeled "Overall model". A callout bubble connects it to a detailed view.

4. **Detailed Model View (Top Right):** A rounded rectangle showing the relationship between "Lower model" and "Upper model". It depicts three separate neural networks (for Clients A, B, C) feeding into a single, larger neural network.

5. **Process Flow Arrows:** Dark blue arrows indicate the direction of data and error flow between components.

6. **Numbered Steps:** Four circled numbers (①, ②, ③, ④) with accompanying descriptive text boxes.

**Textual Labels and Descriptions:**

* **Step ①:** "IDs matched between clients." (Positioned top-left, next to a vertical double-headed arrow spanning the three clients).

* **Step ②:** "The same ID data is submitted between clients and each output of lower model is sent to the active server." (Positioned top-center, with a speech bubble pointing to the output from Client A's model).

* **Step ③:** "The output of each client is used as input to update upper model." (Inside the "Active Client" box).

* **Step ④:** "Propagate the error to each client and learn the lower model." (Positioned bottom-center, with a curved arrow pointing back to the clients).

* **Top Summary Box:** "Overall model is learned by repeating steps ② to ④."

* **Model Labels:** "Lower model" and "Upper model" are explicitly labeled in the detailed view.
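The diagram's Step ① only states that IDs are matched; it does not say how. As a minimal sketch, the matching can be pictured as a plain set intersection over each client's record IDs. The function name `match_ids` and the sample IDs are hypothetical, and real deployments often use a privacy-preserving protocol such as private set intersection so that non-shared IDs are not revealed.

```python
def match_ids(*client_ids):
    """Return, in sorted order, the IDs that every client holds."""
    common = set(client_ids[0])
    for ids in client_ids[1:]:
        common &= set(ids)
    return sorted(common)


# Hypothetical record IDs held by each client.
ids_a = ["u1", "u2", "u3", "u5"]
ids_b = ["u2", "u3", "u4", "u5"]
ids_c = ["u3", "u5", "u6"]

shared = match_ids(ids_a, ids_b, ids_c)
print(shared)  # the rows every client will use in steps 2 to 4
```

Sorting the result gives every client the same row order, which is what lets the Active Client line up the lower-model outputs it receives in Step ②.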

### Detailed Analysis

**Process Flow & Spatial Grounding:**

1. **Step ① (Left Side):** The process begins with the three clients (A, B, C) vertically aligned on the left. A vertical double-headed arrow labeled ① indicates that "IDs [are] matched between clients," establishing a common reference for data samples across parties.

2. **Step ② (Data Flow to Center):** From each client's "Lower model" neural network, a dark blue arrow points rightward to the "Active Client" box. This represents the submission of model outputs. Example output vectors are shown:

   * From Client A: `[1.0, 2.1, -5.0, ...]`

   * From Client C: `[3.6, -0.1, -8.5, ...]`

   The text for Step ② clarifies this is done for "the same ID data."

3. **Step ③ (Central Update):** Inside the "Active Client" box, the collected outputs (represented by the vectors) are fed into the "Upper model" neural network. This step updates the central model.

4. **Step ④ (Error Propagation):** A large, curved dark blue arrow originates from the "Active Client" / "Upper model" and points back towards the clients on the left. This represents the backpropagation of error signals to update each client's local "Lower model."

5. **Overall Model & Iteration (Right & Top):** The "Overall model" icon on the right is connected to the detailed view showing the architecture: multiple "Lower models" (one per client) feeding into a single "Upper model." The top summary box states this entire cycle (Steps ② to ④) is repeated to learn the final overall model.
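Steps ② to ④ above can be sketched as a small NumPy training loop. This is an illustration under stated assumptions, not the method behind the diagram: the lower and upper models are single linear layers, the loss is mean squared error, the labels `y` are held by the Active Client, and the feature widths, learning rate, and function name `train` are all hypothetical.

```python
import numpy as np


def train(rounds=300, lr=0.02, emb=3, n=64, seed=0):
    """Toy run of steps 2 to 4; returns the loss recorded in each round."""
    rng = np.random.default_rng(seed)
    # Assumed setup: each client holds different feature columns for the
    # same n matched IDs, and the active client holds the labels y.
    x = {"A": rng.normal(size=(n, 4)),
         "B": rng.normal(size=(n, 2)),
         "C": rng.normal(size=(n, 5))}
    y = sum(xk @ rng.normal(size=(xk.shape[1], 1)) for xk in x.values())

    # Lower models (one linear layer per client) and the upper model.
    lower = {k: rng.normal(scale=0.1, size=(xk.shape[1], emb)) for k, xk in x.items()}
    upper = rng.normal(scale=0.1, size=(emb * len(x), 1))

    losses = []
    for _ in range(rounds):
        # Step 2: every client sends its lower-model output for the matched IDs.
        h = {k: x[k] @ lower[k] for k in x}
        # Step 3: the active client concatenates the outputs and updates the upper model.
        h_all = np.concatenate([h[k] for k in x], axis=1)
        err = h_all @ upper - y
        losses.append(float(np.mean(err ** 2)))
        grad_pred = 2.0 * err / n
        grad_h = grad_pred @ upper.T  # error with respect to each client's output
        upper -= lr * (h_all.T @ grad_pred)
        # Step 4: each client receives only its slice of the error and updates locally.
        for i, k in enumerate(x):
            lower[k] -= lr * (x[k].T @ grad_h[:, i * emb:(i + 1) * emb])
    return losses


losses = train()
print(f"first loss {losses[0]:.3f}, last loss {losses[-1]:.3f}")
```

In this sketch the only values that cross a client boundary are the lower-model outputs `h[k]` and the matching slices of `grad_h`; the feature matrices in `x` are used only by the client that owns them.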

**Key Observations**

* **Architecture:** The system uses a two-tier model structure. Each client maintains a private "Lower model" fed by its local data. A single "Upper model" at the Active Client combines the lower models' outputs. Together, the lower models and the upper model form the "Overall model", as shown in the detailed view.

* **Data Privacy:** As depicted, each "Data" cylinder feeds only its own client's lower model. Apart from whatever ID information Step ① exchanges, only lower-model outputs (numerical vectors) and error signals cross client boundaries. Keeping raw data local in this way is a hallmark of federated learning.

* **Central Coordination:** The "Active Client" plays the coordinating role: it receives the lower-model outputs, updates the upper model, and sends errors back to the clients. The Step ② text refers to it as the "active server".

* **Iterative Learning:** The process is explicitly iterative, as noted in the top summary box.

### Interpretation

This diagram explains a **collaborative, privacy-preserving machine learning framework**. The core idea is to train a shared "Overall model" (the clients' lower models plus the upper model) by leveraging data from multiple distributed sources (Clients A, B, C) without requiring them to share their sensitive raw data.

* **How it works:** Each client runs its local "Lower model" on its own data. For a given set of data points (matched by ID), these lower models generate outputs (embeddings or predictions). These outputs, not the raw data, are sent to the coordinator (the "Active Client"), which uses them as input to update the "Upper model." The error from the upper model is then sent back to each client, which uses it to update its lower model. Steps ② to ④ repeat until the overall model is learned.

* **Why it matters:** This approach is relevant in fields like healthcare, finance, or any domain with strict data sovereignty and privacy regulations (e.g., GDPR). It lets organizations collaboratively build a model that may be more accurate and generalizable than any of them could build alone, while keeping their raw data on their own servers.

* **Notable Design:** The separation into "Lower" and "Upper" models suggests a form of **representation learning**, and the layout resembles what is commonly called vertical federated learning or split learning. The lower models act as feature extractors tailored to each client's data, while the upper model learns to combine these representations for a shared task. The "ID matching" step is crucial because it ensures that the outputs the upper model combines belong to the same data points across clients.
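The error propagation in Step ④ can be written as a chain rule. The notation here is assumed for illustration and does not appear in the diagram: $f_{\theta_k}$ is client $k$'s lower model with parameters $\theta_k$, $g_\phi$ is the upper model with parameters $\phi$, $x_k$ is client $k$'s data for a matched ID, and $\ell$ is a loss computed at the Active Client.

$$
h_k = f_{\theta_k}(x_k), \qquad \hat{y} = g_\phi(h_A, h_B, h_C), \qquad k \in \{A, B, C\}
$$

$$
\frac{\partial \ell}{\partial \theta_k} = \frac{\partial \ell}{\partial h_k} \, \frac{\partial h_k}{\partial \theta_k}
$$

Under these assumptions, the Active Client can compute $\partial \ell / \partial h_k$ from the upper model alone and send it to client $k$, while $\partial h_k / \partial \theta_k$ depends only on client $k$'s own data and lower model. This is why neither raw data nor lower-model parameters need to leave a client.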