## System Architecture Diagram: Federated Learning Workflow

### Overview

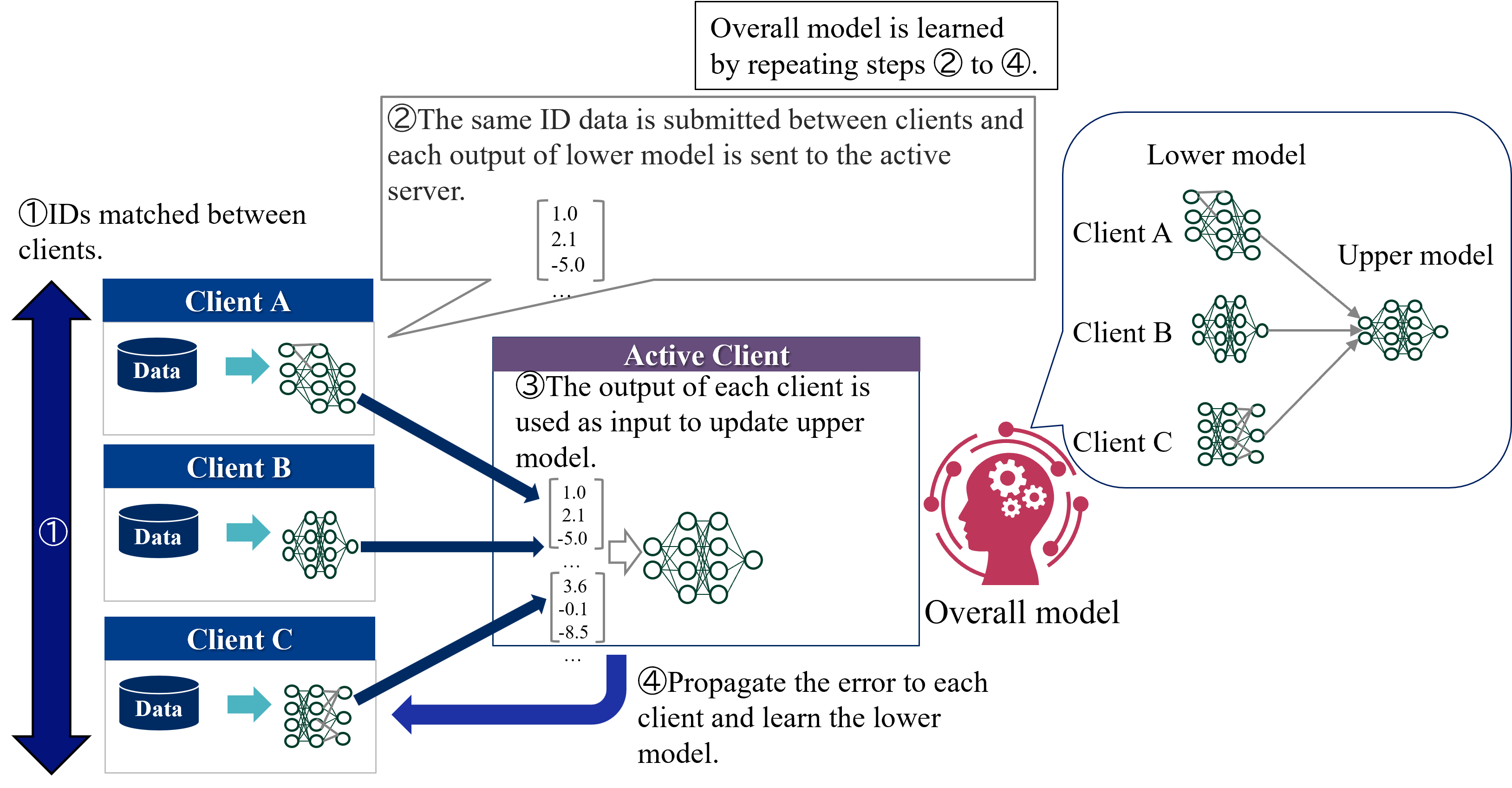

This diagram illustrates a federated learning system where multiple clients (A, B, C) collaboratively train a shared model while preserving data privacy. The workflow involves four key steps: data matching, output aggregation, active client training, and error propagation.
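The data matching step can be sketched as an intersection of the ID sets held by each client. This is a minimal illustration only: the function name `match_ids` and the sample IDs are hypothetical, and the diagram does not say how matching is actually performed. Real deployments typically use a privacy-preserving matching protocol so that non-overlapping IDs are not revealed, which this plain intersection does not do.

```python
def match_ids(*client_ids):
    """Return the sorted IDs present at every client (plain set intersection)."""
    common = set(client_ids[0])
    for ids in client_ids[1:]:
        common &= set(ids)
    return sorted(common)


# Hypothetical record IDs held by each client (not taken from the diagram).
ids_a = ["u1", "u2", "u3", "u5"]
ids_b = ["u2", "u3", "u4", "u5"]
ids_c = ["u3", "u5", "u6"]

# Only records known to all three clients can be used for joint training.
matched = match_ids(ids_a, ids_b, ids_c)
print(matched)
```

Sorting the result gives every client the same row order, so the lower-model outputs submitted later line up record by record.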

### Components

1. **Clients (A, B, C)**:

- Each client has a local "Data" repository and a "Lower model" (neural network).

- Positioned on the left side of the diagram.

2. **Server**:

- Central hub for aggregating outputs from lower models.

- Not explicitly labeled but implied by bidirectional arrows.

3. **Active Client**:

- Central node processing aggregated outputs to update the "Upper model."

- Contains a speech bubble with numerical outputs from lower models.

4. **Upper Model**:

- Global model updated by the active client.

- Connected to all clients via bidirectional arrows.

5. **Overall Model**:

- Learned iteratively by repeating steps 2–4.

- Represented by a brain icon with gears.

**Legend**:

- **Blue**: Data repositories.

- **Green**: Neural network layers (lower/upper models).

- **Purple**: Active client section.

- **Red**: Overall model (brain icon).

- **Gray**: Arrows indicating data/model flow.

### Detailed Analysis

1. **Step 1 (IDs Matched)**:

- Clients (A, B, C) share identifiers (IDs) to synchronize data.

- Arrows point downward from clients to the server.

2. **Step 2 (Output Submission)**:

- Lower models from clients A, B, and C send outputs to the server.

- Speech bubble in the active client shows example outputs:

```
[1.0, 2.1, -5.0, 3.6, -0.1, -8.5]
```

3. **Step 3 (Active Client Training)**:

- The active client uses aggregated outputs to update the upper model.

- Arrows flow from the server to the active client.

4. **Step 4 (Error Propagation)**:

- Errors from the upper model are propagated back to lower models.

- Arrows flow from the active client to all clients.
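Steps 2 to 4 can be sketched as a single training round. The sketch below rests on several assumptions that the diagram does not confirm: each lower model is a small linear layer, the upper model is a linear layer over the concatenated lower outputs, the loss is squared error, the active client holds the label, and updates use plain gradient descent. The names `lower_forward` and `train_round`, along with all weights and feature values, are hypothetical, and the server's relay role is folded into the function for brevity.

```python
def lower_forward(weights, x):
    """Linear lower model: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def train_round(lowers, upper_w, features, label, lr=0.01):
    """Run one round of Steps 2-4 on a single matched record."""
    # Step 2: each client computes its lower-model output locally and submits it.
    outputs = [lower_forward(W, x) for W, x in zip(lowers, features)]
    joined = [v for out in outputs for v in out]

    # Step 3: the active client predicts from the joined outputs and updates
    # the upper model (squared-error loss, assumed here).
    pred = sum(w * h for w, h in zip(upper_w, joined))
    err = pred - label
    grad_joined = [err * w for w in upper_w]  # dLoss/d(joined), pre-update weights
    new_upper = [w - lr * err * h for w, h in zip(upper_w, joined)]

    # Step 4: each client receives only its slice of the error signal and
    # updates its own lower model; raw features never leave the client.
    new_lowers, offset = [], 0
    for W, x in zip(lowers, features):
        g = grad_joined[offset:offset + len(W)]
        offset += len(W)
        new_lowers.append(
            [[w - lr * gj * xi for w, xi in zip(row, x)] for row, gj in zip(W, g)]
        )
    return new_lowers, new_upper, 0.5 * err ** 2


# Hypothetical setup: three clients, two features each, two outputs each.
lowers = [
    [[0.1, -0.2], [0.3, 0.1]],   # client A
    [[0.2, 0.1], [-0.1, 0.4]],   # client B
    [[0.05, 0.3], [0.2, -0.3]],  # client C
]
upper_w = [0.1, -0.1, 0.2, 0.1, -0.2, 0.3]
features = [[1.0, 2.0], [0.5, -1.0], [2.0, 0.0]]
label = 1.0

losses = []
for _ in range(50):  # repeat Steps 2-4
    lowers, upper_w, loss = train_round(lowers, upper_w, features, label)
    losses.append(loss)
print(losses[0], losses[-1])
```

With two outputs per client, the joined vector has six entries, the same length as the example in the speech bubble. That split is a choice made for this sketch, not something the diagram specifies.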

### Key Observations

- **Cyclic Workflow**: Steps 2–4 repeat iteratively to refine the overall model.

- **Data Privacy**: Clients retain raw data locally; only model outputs are shared.

- **Numerical Outputs**: The speech bubble shows six values (1.0, 2.1, -5.0, 3.6, -0.1, -8.5) standing in for the lower-model outputs. The diagram does not attribute individual values to specific clients, so any split among Clients A, B, and C is a guess.

- **Color Consistency**: Blue data repositories match the Client A/B/C labels, and green neural networks align with the lower/upper model layers.

### Interpretation

This diagram represents a **federated learning architecture** where:

1. **Decentralized Training**: Clients train local models on private data without sharing raw datasets.

2. **Aggregation**: The server collects the lower-model outputs (Step 2) and passes them to the active client, which uses them to update the upper model (Step 3).

3. **Error Feedback**: After updating the upper model, the active client propagates the resulting errors back to the clients so that each lower model can be updated (Step 4).

4. **Iterative Learning**: Repeating steps 2–4 enhances the overall model’s accuracy over time.
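One way to write down the computation implied by Steps 2 to 4 is shown below. All symbols are assumptions introduced for illustration and do not appear in the diagram: $x_k$ is client $k$'s local data for a matched record, $f_k$ with parameters $\theta_k$ is its lower model, $g$ with parameters $\phi$ is the upper model, $y$ is a label assumed to be held by the active client, $\ell$ is a loss function, and $\eta$ is a learning rate for plain gradient descent.

```latex
\begin{aligned}
h_k &= f_k(x_k;\theta_k), \qquad k \in \{A, B, C\}
  && \text{(Step 2: lower-model outputs)} \\
\hat{y} &= g\big([h_A, h_B, h_C];\phi\big), \qquad
  \mathcal{L} = \ell(\hat{y}, y)
  && \text{(Step 3: upper-model prediction and loss)} \\
\phi &\leftarrow \phi - \eta\,\frac{\partial \mathcal{L}}{\partial \phi}
  && \text{(Step 3: upper-model update)} \\
\theta_k &\leftarrow \theta_k - \eta
  \left(\frac{\partial h_k}{\partial \theta_k}\right)^{\!\top}
  \frac{\partial \mathcal{L}}{\partial h_k}
  && \text{(Step 4: error propagated to client } k\text{)}
\end{aligned}
```

Under these assumptions, the only quantities that cross a client boundary are $h_k$ on the way up and $\partial \mathcal{L} / \partial h_k$ on the way back; the raw data $x_k$ and the parameters $\theta_k$ stay with client $k$.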

**Notable Patterns**:

- The active client acts as a central coordinator, balancing contributions from all clients.

- Negative values in the speech bubble (-5.0, -8.5) are ordinary for real-valued model outputs; the diagram gives no indication that they represent outliers or adversarial data points.

- The brain icon symbolizes the "intelligence" of the overall model, which evolves through client collaboration.

**Why It Matters**:

This workflow supports machine learning across distributed data holders (e.g., healthcare, IoT) without pooling raw data in one place, which can help when working under data privacy regulations such as GDPR. The error propagation mechanism lets every lower model keep improving even though only model outputs and error signals, not the sensitive data itself, are exchanged.