
## Diagram: Multimodal Large Language Model Architectures

### Overview

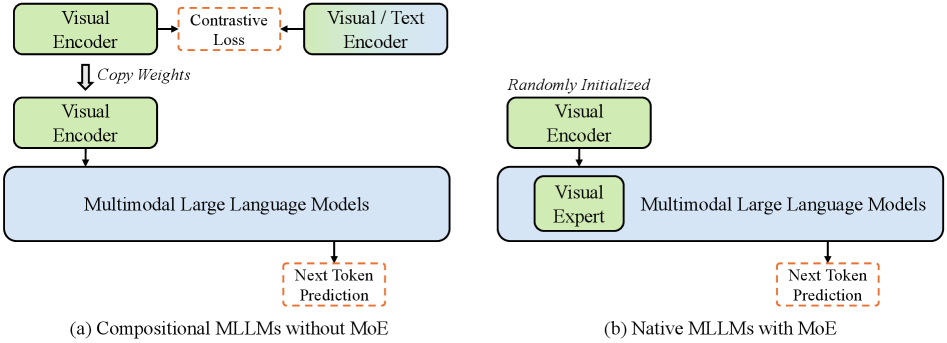

The image presents a diagram illustrating two different architectures for Multimodal Large Language Models (MLLMs): compositional MLLMs without Mixture of Experts (MoE), and native MLLMs with MoE. The diagram uses boxes and arrows to represent components and data flow.

### Components

The diagram consists of the following components:

* **Visual Encoder:** Represented by a yellow box.

* **Visual/Text Encoder:** Represented by a blue box.

* **Multimodal Large Language Models:** Represented by a light blue box.

* **Visual Expert:** Represented by a green box.

* **Arrows:** Represent data flow and transformations.

* **Labels:** "Contrastive Loss", "Copy Weights", "Randomly Initialized", "Next Token Prediction".

* **Sub-captions:** "(a) Compositional MLLMs without MoE", "(b) Native MLLMs with MoE".

* **Dashed Red Lines:** Carry the "Contrastive Loss" and "Next Token Prediction" labels, marking training objectives rather than the forward flow of data.

### Detailed Analysis or Content Details

**Diagram (a): Compositional MLLMs without MoE**

1. A "Visual Encoder" (yellow) is connected via a dashed red line labeled "Contrastive Loss" to a "Visual/Text Encoder" (blue).

2. An arrow labeled "Copy Weights" points downwards from the "Visual/Text Encoder" to another "Visual Encoder" (yellow).

3. The second "Visual Encoder" (yellow) is connected to "Multimodal Large Language Models" (light blue) via a solid arrow.

4. The "Multimodal Large Language Models" (light blue) has a dashed red line pointing downwards labeled "Next Token Prediction".
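The first two steps of diagram (a) can be sketched as follows. This is a minimal, hypothetical illustration that assumes a CLIP-style symmetric InfoNCE objective for "Contrastive Loss" and a plain parameter copy for "Copy Weights". The diagram specifies neither the exact loss, the temperature (0.07 is an assumed value), nor how weights are transferred. The names `contrastive_visual_encoder` and `mllm_visual_encoder` are illustrative, and embeddings are plain Python lists to keep the sketch dependency-free.

```python
import copy
import math


def cosine_logits(img_embs, txt_embs, temperature=0.07):
    """Pairwise cosine similarities divided by a temperature (assumed value)."""
    def normalize(v):
        norm = math.sqrt(sum(x * x for x in v))
        return [x / norm for x in v]

    imgs = [normalize(v) for v in img_embs]
    txts = [normalize(v) for v in txt_embs]
    return [[sum(a * b for a, b in zip(i, t)) / temperature for t in txts] for i in imgs]


def cross_entropy(row, target):
    """Softmax cross-entropy of one row of logits against index `target`."""
    m = max(row)
    log_sum = m + math.log(sum(math.exp(x - m) for x in row))
    return log_sum - row[target]


def contrastive_loss(img_embs, txt_embs, temperature=0.07):
    """Symmetric InfoNCE: the i-th image and the i-th text form a positive pair."""
    logits = cosine_logits(img_embs, txt_embs, temperature)
    n = len(logits)
    columns = [[logits[r][c] for r in range(n)] for c in range(n)]
    img_to_txt = sum(cross_entropy(logits[i], i) for i in range(n)) / n
    txt_to_img = sum(cross_entropy(columns[i], i) for i in range(n)) / n
    return (img_to_txt + txt_to_img) / 2


# Matched image/text pairs should score a much lower loss than swapped pairs.
aligned = contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
swapped = contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])

# Hypothetical weights of the encoder trained with the contrastive loss.
contrastive_visual_encoder = {"proj": [[0.1, 0.2], [0.3, 0.4]]}
# "Copy Weights": the MLLM's visual encoder starts from an independent copy,
# so later MLLM training does not modify the contrastively trained original.
mllm_visual_encoder = copy.deepcopy(contrastive_visual_encoder)
```

A deep copy is used so that the two encoders share initial values but not storage, which matches the idea of initializing one model from another rather than tying their weights.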

**Diagram (b): Native MLLMs with MoE**

1. A "Visual Encoder" (yellow) is labeled "Randomly Initialized".

2. The "Randomly Initialized Visual Encoder" is connected to a "Visual Expert" (green) via a solid arrow.

3. The "Visual Expert" (green) is connected to "Multimodal Large Language Models" (light blue) via a solid arrow.

4. The "Multimodal Large Language Models" (light blue) has a dashed red line pointing downwards labeled "Next Token Prediction".
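Diagram (b) can be sketched under one common reading of a "visual expert" in MoE-based native MLLMs: a modality-specific block that processes only image tokens, while text tokens go to a separate expert. The diagram does not show the routing rule, so hard routing by modality is an assumption here (a learned gating network is another option). `ModalityRoutedLayer`, the text expert, and the 0.02 initialization scale are all hypothetical.

```python
import random


def random_linear(dim_in, dim_out, rng):
    """A randomly initialized weight matrix (the "Randomly Initialized" encoder)."""
    return [[rng.gauss(0.0, 0.02) for _ in range(dim_in)] for _ in range(dim_out)]


def apply(weights, x):
    """Matrix-vector product: one output value per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


class ModalityRoutedLayer:
    """Hypothetical MoE-style layer: each token is sent to its modality's expert."""

    def __init__(self, dim, rng):
        self.experts = {
            "image": random_linear(dim, dim, rng),  # stands in for the "Visual Expert"
            "text": random_linear(dim, dim, rng),   # hypothetical text expert
        }
        self.calls = {"image": 0, "text": 0}  # how many tokens each expert handled

    def __call__(self, tokens):
        outputs = []
        for modality, vec in tokens:
            self.calls[modality] += 1
            outputs.append(apply(self.experts[modality], vec))
        return outputs


rng = random.Random(0)
dim = 4
# No contrastive pre-training and no weight copy: the encoder starts random.
visual_encoder = random_linear(dim, dim, rng)
patch_features = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]]
image_tokens = [("image", apply(visual_encoder, p)) for p in patch_features]
text_tokens = [("text", [0.5, 0.5, 0.0, 0.0])]

layer = ModalityRoutedLayer(dim, rng)
hidden = layer(image_tokens + text_tokens)  # two image tokens, one text token
```

Because every component starts from random values, all of them, including the visual encoder, would be learned jointly under the next-token objective.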

### Key Observations

* Diagram (a) initializes the MLLM's visual encoder through contrastive pre-training followed by a weight copy, while diagram (b) feeds a randomly initialized visual encoder directly into a visual expert.

* Both diagrams train the "Multimodal Large Language Models" block with the same objective: "Next Token Prediction".

* The dashed red lines mark training signals: "Contrastive Loss" pre-trains the encoders in (a), and "Next Token Prediction" supervises the language model in both designs. They are objectives applied during training, not auxiliary data pathways.

* The "Visual Expert" component appears only in the MoE architecture (b).

### Interpretation

The diagram illustrates two distinct approaches to building multimodal large language models. The compositional approach (a) pre-trains a visual encoder with a contrastive loss, copies its weights into the MLLM's visual encoder, and only then trains the combined model, which is a transfer-learning strategy. The native approach (b) starts from a randomly initialized visual encoder whose output passes through a "Visual Expert" before reaching the language model, so the visual pathway is learned end to end under the same training objective as the rest of the model.

In both designs, "Next Token Prediction" is the language model's training objective, reflecting its core function: generating text conditioned on multimodal input. The designs differ in how visual information is first processed and integrated. The compositional approach (a) relies on pre-trained weights and transfer learning, while the MoE architecture (b) can route visual information through a dedicated "Visual Expert" for more specialized processing. A plausible trade-off, which the diagram itself does not show, is that learning the visual pathway from random initialization offers more flexibility but requires more training data than starting from pre-trained weights.
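The shared "Next Token Prediction" objective can be sketched as a shifted cross-entropy: the logits at position t are scored against the token at position t + 1. This is a minimal illustration; excluding image positions from the loss through `loss_mask` is a common choice assumed here, not something the diagram states, and `next_token_loss` is a hypothetical helper name.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one vector of logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def next_token_loss(logits_per_position, token_ids, loss_mask):
    """Average cross-entropy of predicting token_ids[t + 1] from position t.

    loss_mask[t + 1] is False for targets that should not be scored
    (assumed here: image tokens) and True for text targets.
    """
    total, count = 0.0, 0
    for t in range(len(token_ids) - 1):
        if not loss_mask[t + 1]:
            continue
        total -= log_softmax(logits_per_position[t])[token_ids[t + 1]]
        count += 1
    return total / count if count else 0.0


vocab_size = 3
token_ids = [0, 1, 2]            # position 0 is assumed to be an image token
loss_mask = [False, True, True]  # only text targets contribute to the loss
uniform = [[0.0] * vocab_size for _ in token_ids]
loss = next_token_loss(uniform, token_ids, loss_mask)  # equals ln(3) for uniform logits

# Logits that favor the correct next token give a lower loss.
confident = [[0.0, 5.0, 0.0], [0.0, 0.0, 5.0], [0.0, 0.0, 0.0]]
better = next_token_loss(confident, token_ids, loss_mask)
```

The final position has no next token, so its logits are never scored; this is why the loop stops one step before the end of the sequence.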