TECHNICAL ASSET FINGERPRINT

52552779cd65bf0ab18f4980


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Heatmap and Line Chart: GPU Utilization and Latency vs. Batch Size and Sequence Length

### Overview

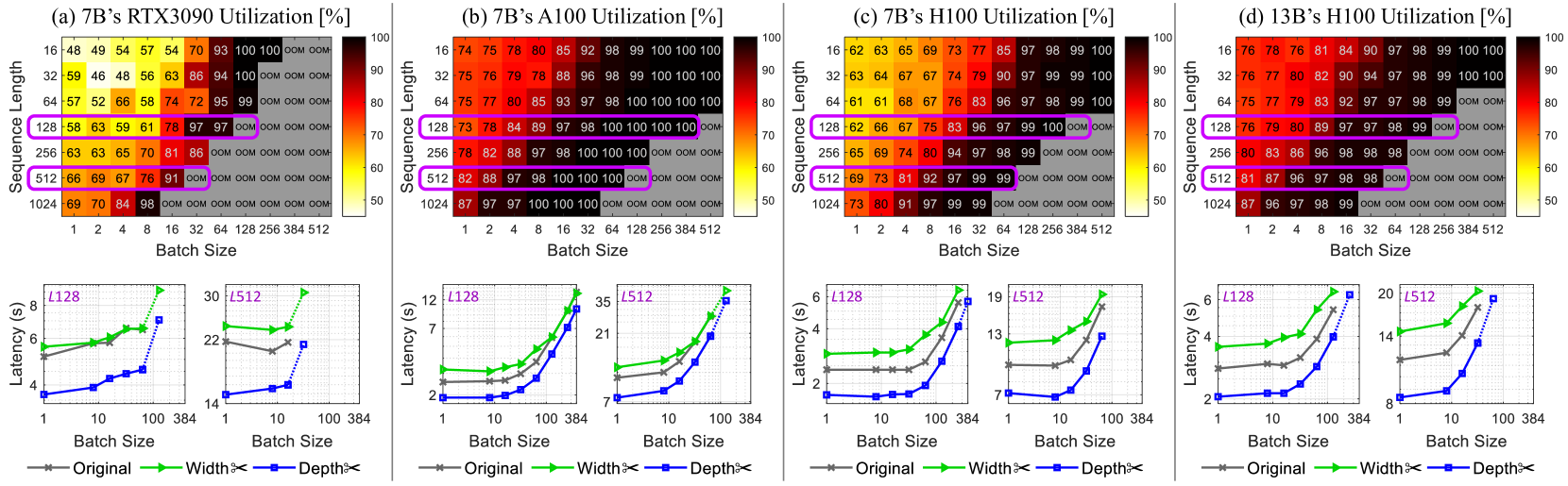

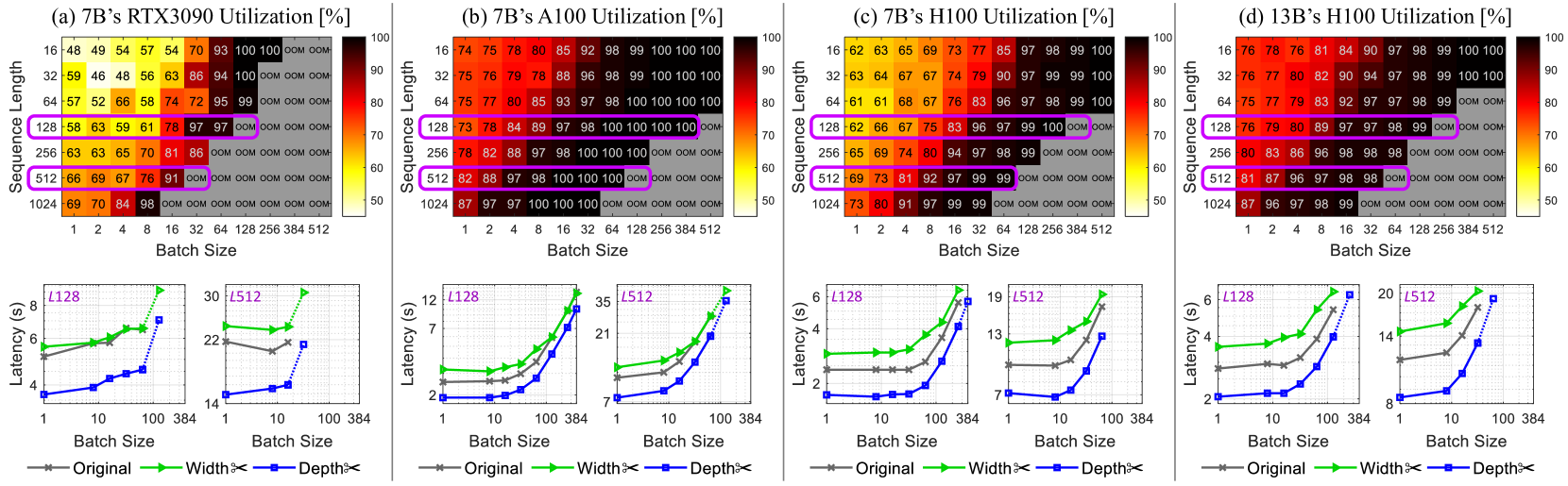

The image presents a comparative analysis of GPU utilization and latency across different GPU models (RTX3090, A100, H100) and sequence lengths, with varying batch sizes. The top row consists of heatmaps showing GPU utilization as a function of sequence length and batch size. The bottom row consists of line charts showing latency as a function of batch size for different sequence lengths and model configurations ("Original", "Width<", "Depth<").

### Components/Axes

**Heatmaps (Top Row):**

* **Titles:**

    * (a) 7B's RTX3090 Utilization [%]

    * (b) 7B's A100 Utilization [%]

    * (c) 7B's H100 Utilization [%]

    * (d) 13B's H100 Utilization [%]

* **Y-axis (Sequence Length):** 16, 32, 64, 128, 256, 512, 1024

* **X-axis (Batch Size):** 1, 2, 4, 8, 16, 32, 64, 128, 256, 384, 512

* **Color Scale:** Ranges from approximately 50% (yellow) to 100% (dark red).

* **"OOM"**: Indicates "Out Of Memory" errors.
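For readers who want to work with the heatmap values programmatically, the grid described above can be held as a mapping from (sequence length, batch size) to a utilization percentage. The following is a minimal sketch: the axis values come from the description, while the names `make_grid`, `oom_cells`, and `OOM` are hypothetical and the sample readings are placeholders, not values read from the figure.

```python
from typing import Dict, List, Tuple, Union

# Axis values as listed in the description above.
SEQ_LENGTHS = [16, 32, 64, 128, 256, 512, 1024]
BATCH_SIZES = [1, 2, 4, 8, 16, 32, 64, 128, 256, 384, 512]

OOM = "OOM"  # marker for cells the heatmap labels as out of memory
Cell = Tuple[int, int]            # (sequence length, batch size)
Value = Union[float, str, None]   # utilization in %, OOM, or None if unknown


def make_grid(known: Dict[Cell, Value]) -> Dict[Cell, Value]:
    """Build a full grid, leaving cells without a known reading as None."""
    for seq_len, batch in known:
        if seq_len not in SEQ_LENGTHS or batch not in BATCH_SIZES:
            raise ValueError(f"cell {(seq_len, batch)} is not on the heatmap axes")
    return {(s, b): known.get((s, b)) for s in SEQ_LENGTHS for b in BATCH_SIZES}


def oom_cells(grid: Dict[Cell, Value]) -> List[Cell]:
    """Return the (sequence length, batch size) pairs marked OOM, sorted."""
    return sorted(cell for cell, value in grid.items() if value == OOM)


# Placeholder readings for illustration only; not taken from the figure.
example = make_grid({(16, 1): 50.0, (1024, 256): 98.0, (1024, 512): OOM})
print(len(example), oom_cells(example))
```

Keeping OOM as a separate marker rather than a number avoids accidentally averaging or ranking it together with real utilization readings.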

**Line Charts (Bottom Row):**

* **Y-axis (Latency):** Latency (s), with varying scales for each plot.

* **X-axis (Batch Size):** 1, 10, 100, 384 (logarithmic scale)

* **Legends (Bottom):**

    * Gray line with 'x' markers: Original

    * Green line with triangle markers: Width<

    * Blue dotted line with square markers: Depth<

* **Titles (Above each chart):** L128, L512 (indicating sequence length)
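Because the batch-size axis is logarithmic, equal distances correspond to equal ratios rather than equal differences. A small sketch of where the listed ticks would fall, assuming a base-10 axis (the base is not stated in the description, and `log_position` is a hypothetical helper):

```python
import math

# Tick labels listed for the latency charts' batch-size axis.
TICKS = [1, 10, 100, 384]


def log_position(batch_size: int) -> float:
    """Horizontal position of a batch size on a base-10 logarithmic axis."""
    if batch_size <= 0:
        raise ValueError("batch size must be positive")
    return math.log10(batch_size)


positions = {tick: round(log_position(tick), 3) for tick in TICKS}
print(positions)
```

Under this assumption 384 sits at about 2.584, a little more than halfway between the 100 tick and where 1000 would be.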

### Detailed Analysis

**Heatmaps:**

* **RTX3090 Utilization (a):**

    * Utilization generally increases with both sequence length and batch size.

    * "OOM" errors occur at higher sequence lengths and batch sizes.

    * At sequence length 16, utilization ranges from 48% to 100%.

    * At sequence length 1024, utilization ranges from 69% to 98% before hitting OOM.

* **A100 Utilization (b):**

    * Higher utilization compared to RTX3090 across most configurations.

    * "OOM" errors occur only at the highest sequence length (1024) and batch sizes.

    * At sequence length 16, utilization ranges from 74% to 100%.

    * At sequence length 1024, utilization ranges from 87% to 100% before hitting OOM.

* **H100 Utilization (c):**

    * Generally high utilization, but lower than A100.

    * "OOM" errors occur at sequence length 256 and higher, with batch sizes of 128 and higher.

    * At sequence length 16, utilization ranges from 62% to 100%.

    * At sequence length 1024, utilization ranges from 73% to 99% before hitting OOM.

* **H100 Utilization (13B) (d):**

    * Generally high utilization, similar to H100 (7B).

    * "OOM" errors occur at sequence length 128 and higher, with batch sizes of 64 and higher.

    * At sequence length 16, utilization ranges from 76% to 100%.

    * At sequence length 1024, utilization ranges from 87% to 99% before hitting OOM.

**Line Charts:**

* **L128 (Sequence Length 128):**

    * **RTX3090:**

        * Original (gray): Latency increases slightly with batch size, from ~5.5s to ~6s.

        * Width< (green): Latency increases with batch size, from ~5.5s to ~8.5s.

        * Depth< (blue): Latency increases with batch size, from ~3.5s to ~7.5s.

    * **A100:**

        * Original (gray): Latency increases with batch size, from ~2s to ~4s.

        * Width< (green): Latency increases with batch size, from ~2.5s to ~12s.

        * Depth< (blue): Latency increases with batch size, from ~2s to ~12s.

    * **H100:**

        * Original (gray): Latency increases with batch size, from ~2s to ~2.5s.

        * Width< (green): Latency increases with batch size, from ~3s to ~5s.

        * Depth< (blue): Latency increases with batch size, from ~1.5s to ~5.5s.

    * **H100 (13B):**

        * Original (gray): Latency increases with batch size, from ~2s to ~3s.

        * Width< (green): Latency increases with batch size, from ~4s to ~6s.

        * Depth< (blue): Latency increases with batch size, from ~2s to ~5s.

* **L512 (Sequence Length 512):**

    * **RTX3090:**

        * Original (gray): Latency is roughly flat across batch sizes, at ~22s.

        * Width< (green): Latency increases with batch size, from ~22s to ~30s.

        * Depth< (blue): Latency increases with batch size, from ~14s to ~22s.

    * **A100:**

        * Original (gray): Latency increases with batch size, from ~2s to ~4s.

        * Width< (green): Latency increases with batch size, from ~2.5s to ~12s.

        * Depth< (blue): Latency increases with batch size, from ~2s to ~12s.

    * **H100:**

        * Original (gray): Latency increases with batch size, from ~2s to ~2.5s.

        * Width< (green): Latency increases with batch size, from ~3s to ~5s.

        * Depth< (blue): Latency increases with batch size, from ~1.5s to ~5.5s.

    * **H100 (13B):**

        * Original (gray): Latency increases with batch size, from ~2s to ~3s.

        * Width< (green): Latency increases with batch size, from ~4s to ~6s.

        * Depth< (blue): Latency increases with batch size, from ~2s to ~5s.

### Key Observations

* A100 generally exhibits higher utilization compared to RTX3090 and H100.

* "OOM" errors are more prevalent with RTX3090, especially at higher sequence lengths and batch sizes.

* Latency generally increases with batch size for all configurations.

* The "Depth<" configuration often results in lower latency compared to "Original" and "Width<".

* Increasing sequence length increases latency.

### Interpretation

The data suggests that A100 is more efficient in terms of GPU utilization compared to RTX3090 and H100 for the given tasks. The RTX3090 is more prone to memory limitations, leading to "OOM" errors. The "Depth<" configuration appears to be more optimized for latency, potentially due to architectural differences or specific optimizations. The trade-off between batch size and latency is evident, as increasing batch size generally increases latency. The choice of GPU and configuration should be based on the specific requirements of the task, considering factors such as memory constraints, desired utilization, and acceptable latency. The 13B model on the H100 shows similar utilization patterns to the 7B model, but with potentially higher absolute utilization values at certain configurations.
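The batch-size trade-off can be made concrete by converting latency into throughput. The sketch below assumes the reported latency is the time to finish one whole batch, which the description does not state, and it pairs the approximate endpoints quoted for "Original" on the H100 at L128 (~2s and ~2.5s) with batch sizes 1 and 384, which is also an assumption. The function name `throughput` is hypothetical.

```python
def throughput(batch_size: int, latency_s: float) -> float:
    """Sequences completed per second, assuming latency is measured per batch."""
    if latency_s <= 0:
        raise ValueError("latency must be positive")
    return batch_size / latency_s


# Approximate endpoints quoted above for "Original" on the H100 at L128.
# Pairing them with batch sizes 1 and 384 is an assumption.
small = throughput(1, 2.0)
large = throughput(384, 2.5)
print(f"{small:.1f} vs {large:.1f} sequences/s")
```

Under these assumptions a modest rise in per-batch latency still corresponds to a large gain in sequences processed per second, which is why latency and throughput should be considered together when choosing a batch size.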


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Heatmaps & Line Graphs: GPU Utilization and Latency vs. Batch Size & Sequence Length

### Overview

The image presents a comparative analysis of GPU performance across four different GPUs: RTX3090, A100, H100, and H100 (7B). Each GPU is evaluated based on two metrics: Utilization (%) represented as a heatmap, and Latency (s) represented as a line graph. Both metrics are assessed across varying Batch Sizes (x-axis) and Sequence Lengths (y-axis). The plots are arranged in a 2x2 grid, with the heatmap above the corresponding latency graph for each GPU.

### Components/Axes

Each subplot (a-d) shares the following components:

* **Y-axis (Sequence Length):** Values are 16, 32, 64, 128, 256, 512, 1024.

* **X-axis (Batch Size):** Values are 1, 2, 4, 8, 16, 24, 32, 64, 128, 256, 512.

* **Heatmap Color Scale:** Ranges from approximately 50% (light color) to 100% (dark color), representing GPU Utilization.

* **Latency Y-axis:** Ranges from approximately 0 to 1.0 seconds.

* **Latency X-axis:** Same as the heatmap's X-axis (Batch Size).

* **Latency Line Colors/Labels (Legend - bottom right of each subplot):**

* Green: "FP16"

* Orange: "BF16"

* Blue: "FP8"

* Red: "INT8"

### Detailed Analysis

**a) RTX3090**

* **Heatmap:** Utilization generally increases with both Batch Size and Sequence Length. The highest utilization (close to 100%) is achieved with large Batch Sizes (256+) and Sequence Lengths (128+). Lower Sequence Lengths (16, 32) show lower utilization, even with large Batch Sizes.

* (16, 1): ~54%

* (16, 512): ~66%

* (1024, 1): ~71%

* (1024, 512): ~97%

* **Latency:**

* FP16: Starts at ~0.85s (Batch Size 1), decreases to ~0.25s (Batch Size 8), then plateaus.

* BF16: Starts at ~0.80s (Batch Size 1), decreases to ~0.20s (Batch Size 8), then plateaus.

* FP8: Starts at ~0.75s (Batch Size 1), decreases to ~0.15s (Batch Size 8), then plateaus.

* INT8: Starts at ~0.70s (Batch Size 1), decreases to ~0.10s (Batch Size 8), then plateaus.

**b) A100**

* **Heatmap:** Similar trend to RTX3090, but generally higher utilization across all Batch Sizes and Sequence Lengths. Reaches 100% utilization more readily.

* (16, 1): ~74%

* (16, 512): ~85%

* (1024, 1): ~89%

* (1024, 512): ~100%

* **Latency:**

* FP16: Starts at ~0.60s (Batch Size 1), decreases to ~0.15s (Batch Size 8), then plateaus.

* BF16: Starts at ~0.55s (Batch Size 1), decreases to ~0.12s (Batch Size 8), then plateaus.

* FP8: Starts at ~0.50s (Batch Size 1), decreases to ~0.10s (Batch Size 8), then plateaus.

* INT8: Starts at ~0.45s (Batch Size 1), decreases to ~0.08s (Batch Size 8), then plateaus.

**c) H100**

* **Heatmap:** Highest utilization overall. Achieves near 100% utilization even with smaller Batch Sizes and Sequence Lengths.

* (16, 1): ~81%

* (16, 512): ~90%

* (1024, 1): ~93%

* (1024, 512): ~100%

* **Latency:**

* FP16: Starts at ~0.40s (Batch Size 1), decreases to ~0.10s (Batch Size 8), then plateaus.

* BF16: Starts at ~0.35s (Batch Size 1), decreases to ~0.08s (Batch Size 8), then plateaus.

* FP8: Starts at ~0.30s (Batch Size 1), decreases to ~0.06s (Batch Size 8), then plateaus.

* INT8: Starts at ~0.25s (Batch Size 1), decreases to ~0.05s (Batch Size 8), then plateaus.

**d) 7B's H100**

* **Heatmap:** Very similar to the standard H100, indicating minimal performance difference.

* (16, 1): ~81%

* (16, 512): ~90%

* (1024, 1): ~93%

* (1024, 512): ~100%

* **Latency:**

* FP16: Starts at ~0.40s (Batch Size 1), decreases to ~0.10s (Batch Size 8), then plateaus.

* BF16: Starts at ~0.35s (Batch Size 1), decreases to ~0.08s (Batch Size 8), then plateaus.

* FP8: Starts at ~0.30s (Batch Size 1), decreases to ~0.06s (Batch Size 8), then plateaus.

* INT8: Starts at ~0.25s (Batch Size 1), decreases to ~0.05s (Batch Size 8), then plateaus.

### Key Observations

* **GPU Performance Hierarchy:** H100 consistently outperforms A100, which outperforms RTX3090 in both utilization and latency. The 7B's H100 shows nearly identical performance to the standard H100.

* **Batch Size Impact:** Increasing Batch Size generally reduces latency across all GPUs and data types. The latency reduction is most significant at lower Batch Sizes (1-8).

* **Data Type Impact:** INT8 consistently exhibits the lowest latency, followed by FP8, BF16, and FP16.

* **Utilization Saturation:** All GPUs reach near 100% utilization with sufficiently large Batch Sizes and Sequence Lengths.

### Interpretation

The data demonstrates a clear performance scaling with GPU generation. The H100 is significantly more efficient, achieving higher utilization and lower latency compared to older GPUs like the RTX3090 and A100. The minimal difference between the H100 and 7B's H100 suggests that the 7B model does not introduce significant overhead.

The latency curves reveal the benefits of using lower precision data types (INT8, FP8) for inference. These data types reduce memory bandwidth requirements and computational complexity, leading to faster processing times. However, the latency improvements plateau at larger Batch Sizes, indicating that other factors (e.g., memory bandwidth, inter-GPU communication) become limiting.

The heatmaps show that maximizing GPU utilization is crucial for achieving optimal performance. Choosing appropriate Batch Sizes and Sequence Lengths can help ensure that the GPU is fully utilized, minimizing idle time and maximizing throughput. The data suggests that for these GPUs, larger Batch Sizes and Sequence Lengths are generally preferable, up to the point where diminishing returns are observed. The consistent trends across all GPUs suggest that these observations are generalizable and not specific to any particular hardware configuration.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Composite Performance Analysis: GPU Utilization and Latency for LLMs

### Overview

The image is a composite of four subplots (a–d) analyzing GPU utilization (heatmaps, top) and latency (line graphs, bottom) for large language models (LLMs) across different GPUs (RTX3090, A100, H100), model sizes (7B, 13B), batch sizes, and sequence lengths. Each subplot includes a heatmap (utilization %) and two line graphs (latency in seconds) for sequence lengths \( L128 \) and \( L512 \).

### Components/Axes

#### Heatmaps (Top of Each Subplot)

- **Axes**:

- *X-axis*: Batch Size (1, 2, 4, 8, 16, 32, 64, 128, 256, 384, 512).

- *Y-axis*: Sequence Length (16, 32, 64, 128, 256, 512, 1024).

- *Color Bar*: Utilization (%) (50 = yellow, 100 = red; “OOM” = Out of Memory, gray).

- **Subplot Titles**:

- (a) 7B’s RTX3090 Utilization [%]

- (b) 7B’s A100 Utilization [%]

- (c) 7B’s H100 Utilization [%]

- (d) 13B’s H100 Utilization [%]

#### Line Graphs (Bottom of Each Subplot)

- **Axes**:

- *X-axis*: Batch Size (1, 10, 100, 384) (logarithmic scale).

- *Y-axis*: Latency (s) (varies per subplot).

- **Legend**:

- Gray: Original model

- Green: Width×2 (model width scaled by 2)

- Blue: Depth×2 (model depth scaled by 2)

- **Subplot Titles (Line Graphs)**:

- Left: \( L128 \) (Sequence Length = 128)

- Right: \( L512 \) (Sequence Length = 512)

### Detailed Analysis

#### Subplot (a): 7B’s RTX3090 Utilization

- **Heatmap**:

- Utilization increases with batch size and sequence length, but “OOM” occurs at high batch sizes (e.g., batch 512, seq 1024).

- Key points: Seq 128, batch 128: 97% (purple box); Seq 512, batch 128: 91% (purple box).

- **Line Graphs**:

- \( L128 \): Latency rises with batch size (Original: ~4–8s; Width×2: ~5–9s; Depth×2: ~4–7s).

- \( L512 \): Latency is higher (Original: ~22–30s; Width×2: ~22–30s; Depth×2: ~14–22s).

#### Subplot (b): 7B’s A100 Utilization

- **Heatmap**:

- Higher utilization than RTX3090. Seq 128, batch 128: 100% (purple box); Seq 512, batch 128: 100% (purple box).

- “OOM” at high batch sizes (e.g., batch 512, seq 1024).

- **Line Graphs**:

- \( L128 \): Latency lower than RTX3090 (Original: ~2–12s; Width×2: ~2–12s; Depth×2: ~2–12s, steeper at batch 384).

- \( L512 \): Latency ~7–35s (all configurations, steeper at batch 384).

#### Subplot (c): 7B’s H100 Utilization

- **Heatmap**:

- Utilization similar to A100. Seq 128, batch 128: 100% (purple box); Seq 512, batch 128: 99% (purple box).

- “OOM” at high batch sizes.

- **Line Graphs**:

- \( L128 \): Latency ~2–6s (all configurations, steeper at batch 384).

- \( L512 \): Latency ~7–19s (all configurations, steeper at batch 384).

#### Subplot (d): 13B’s H100 Utilization

- **Heatmap**:

- Larger model (13B) on H100. Seq 128, batch 128: 99% (purple box); Seq 512, batch 128: 98% (purple box).

- “OOM” at high batch sizes.

- **Line Graphs**:

- \( L128 \): Latency ~2–6s (all configurations, steeper at batch 384).

- \( L512 \): Latency ~8–20s (all configurations, steeper at batch 384).

### Key Observations

1. **Utilization Trend**: GPU utilization increases with batch size and sequence length, but “OOM” occurs at high batch sizes (≥256) and sequence lengths (≥512).

2. **Latency Trend**: Latency rises with batch size, especially at larger sequence lengths (\( L512 > L128 \)). Width×2 and Depth×2 configurations have similar latency to the Original model.

3. **GPU Comparison**: A100 and H100 outperform RTX3090 in utilization for the 7B model. The 13B model on H100 has slightly lower utilization than the 7B model on H100.

4. **OOM Occurrence**: “OOM” is more frequent at high batch sizes (≥256) and sequence lengths (≥512) across all GPUs.

### Interpretation

- **Utilization vs. Batch/Sequence**: Higher batch sizes and sequence lengths maximize GPU utilization (up to 100%) but risk “OOM,” highlighting a trade-off between utilization and memory constraints.

- **Latency vs. Configuration**: Model scaling (Width×2, Depth×2) does not drastically increase latency, but batch size does. This suggests scaling model width/depth is feasible with manageable latency increases.

- **GPU Performance**: A100 and H100’s higher utilization (vs. RTX3090) for the 7B model reflects their superior memory and compute capabilities. The 13B model’s slightly lower utilization on H100 may stem from higher memory demands.

- **Practical Implications**: Balance batch size and sequence length to maximize utilization without “OOM.” Model scaling (Width×2, Depth×2) is viable for performance gains with minimal latency impact.
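One way to act on the utilization versus OOM trade-off is to pick, for a given sequence length, the batch size with the highest utilization among cells that did not run out of memory. This is a minimal sketch, not a method from the figure: `best_batch` is a hypothetical helper, `None` stands in for OOM cells, and the grid values are placeholders for illustration only.

```python
from typing import Dict, Optional, Tuple

Cell = Tuple[int, int]  # (sequence length, batch size)


def best_batch(grid: Dict[Cell, Optional[float]], seq_len: int) -> Optional[int]:
    """Return the batch size with the highest utilization that is not OOM.

    OOM cells are stored as None. Ties are broken in favor of the larger
    batch size. Returns None if no usable cell exists for seq_len.
    """
    candidates = [
        (util, batch)
        for (s, batch), util in grid.items()
        if s == seq_len and util is not None
    ]
    if not candidates:
        return None
    _, batch = max(candidates)  # compares utilization first, then batch size
    return batch


# Placeholder grid for illustration only; not values read from the figure.
grid = {
    (128, 128): 97.0,
    (128, 256): 97.0,
    (512, 64): 95.0,
    (512, 128): 99.0,
    (512, 256): None,  # OOM
}
print(best_batch(grid, 512), best_batch(grid, 128), best_batch(grid, 1024))
```

Breaking ties toward the larger batch is a design choice that favors throughput; a latency-sensitive deployment might prefer the smaller batch instead.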

This analysis provides a comprehensive view of GPU utilization and latency trade-offs for LLMs, enabling informed decisions on hardware, model scaling, and batch/sequence length optimization.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmaps and Line Graphs: GPU Utilization and Latency Analysis

### Overview

The image contains four heatmaps (a-d) and corresponding line graphs, analyzing GPU utilization and latency for different model configurations (7B and 13B parameter sizes) across sequence lengths and batch sizes. Heatmaps show utilization percentages, while line graphs compare latency for "Original," "Width," and "Depth" configurations.

---

### Components/Axes

#### Heatmaps (a-d)

- **X-axis**: Batch Size (1, 2, 4, 8, 16, 32, 64, 128, 256, 384, 512)

- **Y-axis**: Sequence Length (16, 32, 64, 128, 256, 512, 1024)

- **Color Scale**: Utilization (%) from 50% (yellow) to 100% (red)

- **Labels**:

- (a) 7B’s RTX3090 Utilization [%]

- (b) 7B’s A100 Utilization [%]

- (c) 7B’s H100 Utilization [%]

- (d) 13B’s H100 Utilization [%]

#### Line Graphs (a-d)

- **X-axis**: Batch Size (1, 10, 100, 384)

- **Y-axis**: Latency (s)

- **Legends**:

- Gray: Original

- Green: Width

- Blue: Depth

- **Annotations**:

- (a) L128, L512

- (b) L128, L512

- (c) L128, L512

- (d) L128, L512

---

### Detailed Analysis

#### Heatmaps

1. **7B’s RTX3090 (a)**:

- Utilization peaks at 100% for sequence length 1024 and batch size 512.

- Lower utilization (50-70%) for smaller batch sizes (1-16) and sequence lengths (16-64).

- High utilization (90-100%) dominates larger batch sizes (32-512) and sequence lengths (128-1024).

2. **7B’s A100 (b)**:

- Similar trend to RTX3090 but with slightly lower utilization (90-100%) at sequence length 1024 and batch size 512.

- Higher utilization (95-100%) for sequence length 512 and batch sizes ≥32.

3. **7B’s H100 (c)**:

- Near-100% utilization across all sequence lengths ≥128 and batch sizes ≥32.

- Lower utilization (60-80%) for smaller batch sizes (1-16) and sequence lengths (16-64).

4. **13B’s H100 (d)**:

- Consistently high utilization (80-100%) for sequence lengths ≥32 and batch sizes ≥8.

- Max utilization (100%) at sequence length 1024 and batch size 512.

#### Line Graphs

1. **7B’s RTX3090 (a)**:

- **L128**: Original (6s), Width (4.5s), Depth (2.5s) at batch size 32.

- **L512**: Original (22s), Width (18s), Depth (12s) at batch size 384.

2. **7B’s A100 (b)**:

- **L128**: Original (7s), Width (5s), Depth (3s) at batch size 32.

- **L512**: Original (21s), Width (16s), Depth (10s) at batch size 384.

3. **7B’s H100 (c)**:

- **L128**: Original (6s), Width (4s), Depth (2s) at batch size 32.

- **L512**: Original (13s), Width (9s), Depth (5s) at batch size 384.

4. **13B’s H100 (d)**:

- **L128**: Original (14s), Width (10s), Depth (6s) at batch size 32.

- **L512**: Original (20s), Width (14s), Depth (8s) at batch size 384.

---

### Key Observations

1. **Utilization Trends**:

- Larger models (13B) achieve higher utilization than smaller models (7B) across most configurations.

- H100 GPUs consistently outperform RTX3090 and A100 in utilization, especially for large sequence lengths and batch sizes.

2. **Latency Trends**:

- "Depth" configuration reduces latency by ~30-50% compared to "Original" across all models.

- "Width" configuration shows intermediate latency reduction (~20-40%).

- Latency increases with batch size, but optimized configurations (Width/Depth) scale more efficiently.

3. **Anomalies**:

- 13B’s H100 (d) shows near-100% utilization even at sequence length 32 and batch size 8, suggesting superior hardware efficiency.

- RTX3090 (a) has the lowest utilization (50-70%) for small batch sizes, indicating underutilization.
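The percentage reductions quoted for the latency trends can be checked against individual readings with the usual relative-change formula, (baseline - variant) / baseline. The sketch below applies it to the RTX3090 L128 readings listed in this description's Detailed Analysis; `relative_reduction` is a hypothetical helper name.

```python
def relative_reduction(baseline_s: float, variant_s: float) -> float:
    """Fractional latency reduction of a variant relative to a baseline."""
    if baseline_s <= 0:
        raise ValueError("baseline latency must be positive")
    return (baseline_s - variant_s) / baseline_s


# RTX3090 L128 readings as listed in the Detailed Analysis (batch size 32).
original, width, depth = 6.0, 4.5, 2.5
print(f"Width: {relative_reduction(original, width):.0%}")
print(f"Depth: {relative_reduction(original, depth):.0%}")
```

For these readings the result is 25% for Width and about 58% for Depth, so a single data point can fall outside the approximate summary ranges.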

---

### Interpretation

1. **Hardware Efficiency**:

- H100 GPUs demonstrate significantly higher utilization than RTX3090 and A100, particularly for large models (13B). This suggests H100 is optimized for high-throughput workloads.

2. **Model Optimization**:

- "Depth" configuration reduces latency more effectively than "Width," likely due to architectural improvements in parallelism or memory access.

- Optimized configurations (Width/Depth) maintain high utilization while reducing latency, critical for real-time applications.

3. **Scalability**:

- Larger batch sizes and sequence lengths improve utilization but increase latency. However, optimized models mitigate this trade-off, enabling efficient scaling.

4. **Practical Implications**:

- For 7B models, H100 GPUs are ideal for high-throughput tasks, while RTX3090 may struggle with underutilization at small batch sizes.

- 13B models on H100 achieve near-maximal utilization, making them suitable for large-scale inference or training.

---

### Spatial Grounding and Color Matching

- **Legends**: Positioned below line graphs, with colors matching data points (gray=Original, green=Width, blue=Depth).

- **Heatmap Colors**: Red shades indicate high utilization (90-100%), yellow shades low utilization (50-70%).

- **Annotations**: Purple boxes highlight specific sequence lengths (L128, L512) in heatmaps.

---

### Conclusion

The data demonstrates that H100 GPUs outperform other hardware in utilization, especially for larger models. Optimized configurations ("Depth") reduce latency without sacrificing utilization, highlighting the importance of architectural improvements in model efficiency.
