## Flowchart: Ouro Model Training Pipeline

### Overview

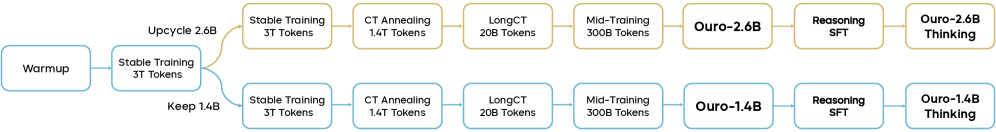

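The image depicts a bifurcated training pipeline for Ouro models. After a shared Warmup stage, the pipeline splits into two parallel paths, gold for the 2.6B model and blue for the 1.4B model, which pass through the same sequence of stages. Each training stage is labeled with its token budget; the branch labels ("Upcycle 2.6B", "Keep 1.4B") match the final model names and therefore read as model sizes, not token counts.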

### Components/Axes

- **Paths**:

- Gold path (top): "Upcycle 2.6B" → "Stable Training 3T Tokens" → "CT Annealing 1.4T Tokens" → "LongCT 20B Tokens" → "Mid-Training 300B Tokens" → "Ouro-2.6B" → "Reasoning SFT" → "Ouro-2.6B Thinking"

- Blue path (bottom): "Keep 1.4B" → "Stable Training 3T Tokens" → "CT Annealing 1.4T Tokens" → "LongCT 20B Tokens" → "Mid-Training 300B Tokens" → "Ouro-1.4B" → "Reasoning SFT" → "Ouro-1.4B Thinking"

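- **Arrows**: The two branches out of Warmup are labeled with the target model size ("Upcycle 2.6B", "Keep 1.4B"); token budgets appear in the stage labels (e.g., "Stable Training 3T Tokens")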

- **Stages**:

- Warmup → Stable Training → CT Annealing → LongCT → Mid-Training → Ouro → Reasoning SFT → Ouro Thinking

- **Color Coding**:

- Gold (#FFD700) for the 2.6B path

- Blue (#0000FF) for the 1.4B path
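
The component list above can be written down as data, which makes it easy to check where the two paths actually differ. The following is a minimal sketch, not code from the Ouro project: the `Stage` class, `build_path`, and `differing_steps` are hypothetical names introduced here, and the stage names and token budgets are copied from the labels listed above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Stage:
    """One box or branch label in the flowchart (hypothetical helper type)."""
    name: str
    tokens_b: Optional[int] = None  # token budget in billions; None if unlabeled


# Stages both paths go through after branching, with budgets from the diagram.
SHARED_STAGES = [
    Stage("Stable Training", 3000),  # 3T tokens
    Stage("CT Annealing", 1400),     # 1.4T tokens
    Stage("LongCT", 20),             # 20B tokens
    Stage("Mid-Training", 300),      # 300B tokens
]


def build_path(branch: str, model: str) -> List[Stage]:
    """Assemble one full path: Warmup, branch, shared stages, model, SFT."""
    return [
        Stage("Warmup"),
        Stage(branch),
        *SHARED_STAGES,
        Stage(model),
        Stage("Reasoning SFT"),
        Stage(f"{model} Thinking"),
    ]


def differing_steps(a: List[Stage], b: List[Stage]) -> List[int]:
    """Return the positions at which two equal-length paths differ."""
    return [i for i, (x, y) in enumerate(zip(a, b)) if x != y]


gold = build_path("Upcycle 2.6B", "Ouro-2.6B")
blue = build_path("Keep 1.4B", "Ouro-1.4B")

for i in differing_steps(gold, blue):
    print(i, gold[i].name, "|", blue[i].name)
```

Under these assumptions the paths differ only at the branch label and at the two model names; every token-budgeted stage is identical.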

### Detailed Analysis

1. **Initial Branching**:

- "Warmup" splits into two paths:

- Gold: "Upcycle 2.6B" (model upcycled to 2.6B parameters)

- Blue: "Keep 1.4B" (model kept at 1.4B parameters)

- Each path then enters its own "Stable Training 3T Tokens" stage

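2. **Token Budgets per Stage**:

- Both paths use the same budgets: 3T (Stable Training) → 1.4T (CT Annealing) → 20B (LongCT) → 300B (Mid-Training)

- These are the tokens consumed by each stage, not a running total that shrinks; 2.6B and 1.4B are model sizes and do not belong in this sequence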

3. **Final Stages**:

- Both paths undergo "Reasoning SFT" before producing:

- Ouro-2.6B Thinking (gold)

- Ouro-1.4B Thinking (blue)
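
To make the per-stage budgets concrete, the sketch below sums the labeled token counts for one path. It is illustrative only: `STAGE_TOKENS_B`, `total_tokens_b`, and `format_tokens` are hypothetical names, and Warmup and Reasoning SFT are left out because the description gives no token count for them.

```python
# Token budgets in billions, as labeled on the stages of either path.
STAGE_TOKENS_B = {
    "Stable Training": 3000,  # 3T
    "CT Annealing": 1400,     # 1.4T
    "LongCT": 20,             # 20B
    "Mid-Training": 300,      # 300B
}


def total_tokens_b(budgets: dict) -> int:
    """Sum the labeled per-stage budgets (in billions of tokens)."""
    return sum(budgets.values())


def format_tokens(billions: int) -> str:
    """Render a token count in billions as 'xB' or 'x.xxT'."""
    if billions >= 1000:
        return f"{billions / 1000:.2f}T"
    return f"{billions}B"


total = total_tokens_b(STAGE_TOKENS_B)
for name, budget in STAGE_TOKENS_B.items():
    share = 100 * budget / total
    print(f"{name:16s} {format_tokens(budget):>6s}  {share:5.1f}%")
print(f"{'Total':16s} {format_tokens(total):>6s}")
```

The labeled stages add up to 4.72T tokens per path, with Stable Training and CT Annealing accounting for most of it.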

### Key Observations

- **Token Scaling**: Both paths use the same token budget at every labeled stage (3T → 1.4T → 20B → 300B). The 2.6B and 1.4B figures are model sizes, so neither path "reduces" tokens from them.

- **Shared Stages**: After the initial branch, both paths pass through the same stage sequence ("Stable Training" onward), each on its own track.

- **Model Output**: The final models differ in size (Ouro-2.6B vs. Ouro-1.4B), which is set by the Upcycle/Keep branch after Warmup and not by the token budgets.

### Interpretation

This flowchart represents a multi-stage training pipeline that produces two model sizes from a common Warmup stage. The gold path upcycles the model to 2.6B parameters, while the blue path keeps it at 1.4B. After the split, both paths run the same stage sequence with the same token budgets (3T for Stable Training, 1.4T for CT Annealing, 20B for LongCT, 300B for Mid-Training), so as drawn the two models differ in parameter count and not in training data volume. The final "Reasoning SFT" stage turns each base model (Ouro-2.6B, Ouro-1.4B) into its "Thinking" variant. The diagram does not show how the upcycling step is performed or how many tokens Warmup and Reasoning SFT consume.