
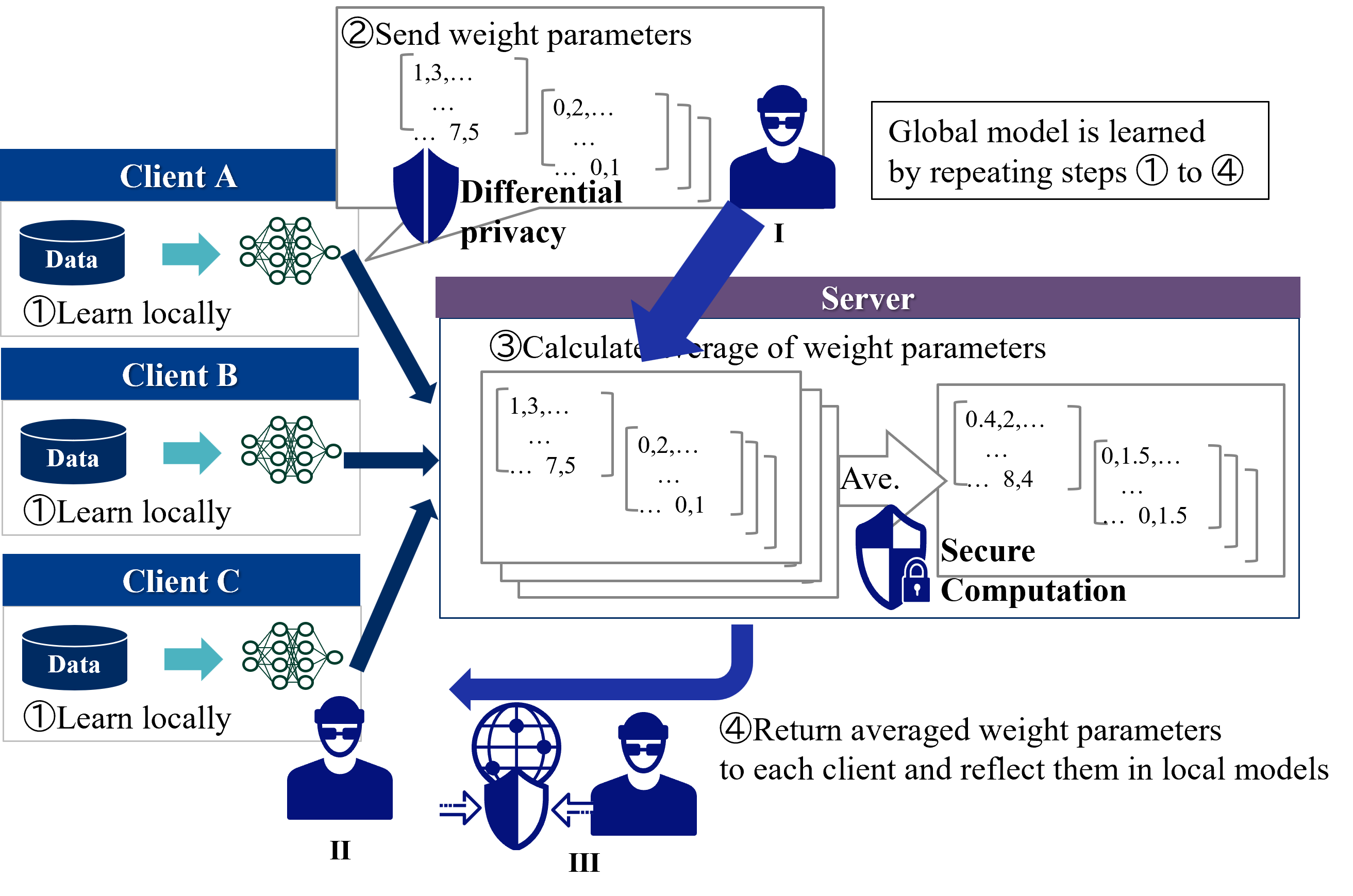

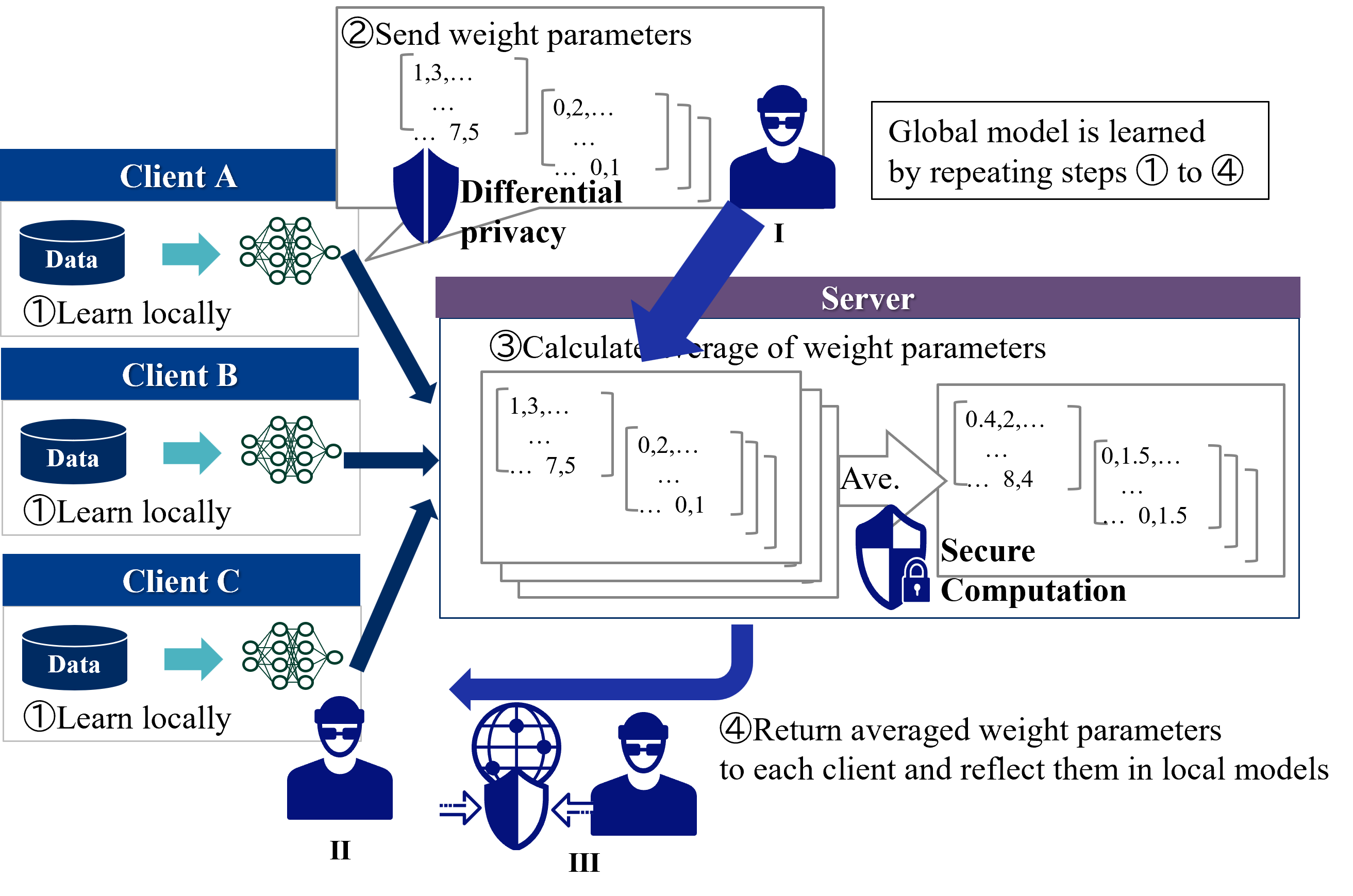

## Diagram: Federated Learning with Differential Privacy and Secure Computation

### Overview

The image is a technical flowchart illustrating a four-step federated learning process. It depicts multiple clients (A, B, C) collaboratively training a global machine learning model without sharing their raw local data. The process incorporates two key privacy/security mechanisms: **Differential Privacy** applied during parameter transmission and **Secure Computation** applied during server-side aggregation. The diagram uses icons, arrows, and text boxes to show the flow of data and operations.

### Components/Axes

The diagram is organized into three main spatial regions:

1. **Left Column (Clients):** Contains three vertically stacked client blocks (Client A, Client B, Client C). Each block contains:

* A blue cylinder icon labeled **"Data"**.

* A teal arrow pointing right.

* A green neural network icon.

* The text **"①Learn locally"**.

2. **Center/Right (Server & Process Flow):** Contains the main process flow, centered around a large purple-header box labeled **"Server"**.

3. **Top Right (Global Note):** A standalone text box states: **"Global model is learned by repeating steps ① to ④"**.

**Key Process Steps (Numbered Circles):**

* **① Learn locally:** Performed by each client on their local data.

* **② Send weight parameters:** Performed by clients, sending parameters to the server. This step is visually associated with a **"Differential privacy"** label and a shield icon.

* **③ Calculate average of weight parameters:** Performed by the server. This step is visually associated with a **"Secure Computation"** label and a shield-with-lock icon.

* **④ Return averaged weight parameters to each client and reflect them in local models:** Performed by the server, sending the aggregated model back to clients.
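
Read as a protocol, steps ① to ④ form one training round. The notation here is introduced for illustration and does not appear in the diagram: `K` clients, global parameters `w^(t)` at round `t`, a hypothetical `LocalTrain_k` for client `k`'s training on its own data, and `ξ_k^(t)` for the differential-privacy noise. The plain mean mirrors the diagram's "Ave." step.

```latex
\begin{aligned}
\text{(1)}\quad & w_k^{(t)} = \mathrm{LocalTrain}_k\big(w^{(t)}\big), \qquad k = 1, \dots, K \\
\text{(2)}\quad & \tilde{w}_k^{(t)} = w_k^{(t)} + \xi_k^{(t)} \quad \text{(sent to the server)} \\
\text{(3)}\quad & w^{(t+1)} = \frac{1}{K} \sum_{k=1}^{K} \tilde{w}_k^{(t)} \quad \text{(computed under secure computation)} \\
\text{(4)}\quad & \text{every client replaces its local model with } w^{(t+1)}
\end{aligned}
```

Repeating the round for `t = 0, 1, 2, ...` corresponds to the top-right note. Many deployments weight each client's term by its number of training samples instead of using the plain mean.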

**Icons and Symbols:**

* **Person Icons (I, II, III):** Represent actors in the process. Icon **I** is near the "Send weight parameters" step. Icons **II** and **III** are near the "Return" step, with a globe-and-shield icon between them.

* **Shield Icons:** Represent security/privacy measures. One shield is labeled **"Differential privacy"**. Another shield with a padlock is labeled **"Secure Computation"**.

* **Arrows:** Thick blue arrows indicate the direction of data/parameter flow between clients and the server.

### Detailed Analysis

**Step-by-Step Process Flow:**

1. **Step ① - Local Learning:**

* **Location:** Left column, within each Client block (A, B, C).

* **Action:** Each client uses its local **"Data"** (blue cylinder) to train a local model, represented by a green neural network icon. The text **"①Learn locally"** confirms this action.

2. **Step ② - Parameter Transmission with Differential Privacy:**

* **Location:** Top-center, originating from the clients and pointing to the server.

* **Action:** Clients send their locally updated model weight parameters to the server.

* **Data Transmitted:** Example weight parameter matrices are shown:

* `[1,3,... ... 7,5]`

* `[0,2,... ... 0,1]`

* **Privacy Mechanism:** A blue shield icon labeled **"Differential privacy"** is placed over the transmission path. This indicates that noise is added to the parameters before they are sent, which limits what can be inferred about individual training records.

3. **Step ③ - Secure Aggregation on Server:**

* **Location:** Inside the large "Server" box.

* **Action:** The server receives the differentially private weight parameters from all clients and calculates their average.

* **Input Data:** The same example matrices from Step ② are shown stacked, representing inputs from multiple clients.

* **Process:** An arrow labeled **"Ave."** points to the output.

* **Output Data:** The averaged result is shown as new matrices:

* `[0.4,2,... ... 8,4]`

* `[0,1.5,... ... 0,1.5]`

* **Security Mechanism:** A shield-with-padlock icon labeled **"Secure Computation"** is placed next to the averaging operation, indicating the aggregation is performed on encrypted or otherwise secured data.

4. **Step ④ - Global Model Distribution:**

* **Location:** Bottom-center, originating from the server and pointing back to the clients.

* **Action:** The server returns the averaged (global) weight parameters to each client.

* **Text:** **"④Return averaged weight parameters to each client and reflect them in local models"**.

* **Visual:** A thick blue arrow curves from the server output back towards the client side. Person icons **II** and **III** and a globe-shield icon are positioned along this return path.
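
Step ② can be sketched with the Gaussian mechanism that is commonly used in differentially private training: clip each client's parameter vector to a maximum L2 norm, then add Gaussian noise scaled to that bound. This is a minimal sketch under stated assumptions. The diagram does not name the mechanism, and `max_norm` and `noise_multiplier` are hypothetical parameters. Practical systems usually clip and noise the model *update* (the difference from the current global model) rather than the raw weights, and they track the cumulative privacy budget with an accountant. Both are omitted here.

```python
import math
import random


def clip_by_l2_norm(weights: list[float], max_norm: float) -> list[float]:
    """Scale the vector down, if needed, so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(w * w for w in weights))
    if norm <= max_norm:
        return list(weights)
    scale = max_norm / norm
    return [w * scale for w in weights]


def privatize(weights: list[float], max_norm: float, noise_multiplier: float,
              rng: random.Random) -> list[float]:
    """Clip, then add independent Gaussian noise with std = noise_multiplier * max_norm.

    Assumption: random.Random is used for reproducibility only; a real deployment
    needs a cryptographically secure noise source.
    """
    clipped = clip_by_l2_norm(weights, max_norm)
    sigma = noise_multiplier * max_norm
    return [w + rng.gauss(0.0, sigma) for w in clipped]


# Hypothetical client parameters, echoing the first example row in the diagram.
local_weights = [1.0, 3.0, 7.0, 5.0]
sent = privatize(local_weights, max_norm=1.0, noise_multiplier=0.5, rng=random.Random(0))
print(sent)  # a noisy version of the clipped vector; exact values depend on the seed
```

Clipping bounds how much any single contribution can move the parameters, and this is what lets the noise scale be fixed in advance.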

### Key Observations

* **Iterative Process:** The note in the top-right corner explicitly states the entire four-step cycle is repeated to train the global model.

* **Dual Privacy/Security Layers:** The diagram highlights two distinct protective measures: **Differential Privacy** (limiting what the shared parameters reveal about individual training records) and **Secure Computation** (protecting individual contributions while they are aggregated).

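* **Cyclical Data Flow:** Raw data never leaves the clients, and only model parameters move around the cycle. Clients train locally and send parameters to the server (Steps ① and ②), the server aggregates them (Step ③), and the server returns the result to the clients (Step ④).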

* **Example Data:** The numerical matrices (`[1,3,...]`, `[0.4,2,...]`, etc.) are illustrative placeholders to concretely show the transformation from client parameters to an averaged global parameter.
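
The repeated cycle can be simulated end to end. This sketch rests on several assumptions. Three hypothetical clients each hold two noiseless points from the line y = 2x + 1. A two-parameter linear model is trained locally with plain gradient descent. The server uses the unweighted average from step ③. The privacy and security layers are left out so that only the loop of steps ① to ④ remains.

```python
def local_train(weights: list[float], data: list[tuple[float, float]],
                lr: float = 0.1, epochs: int = 10) -> list[float]:
    """Step 1: full-batch gradient descent on mean squared error for y = w*x + b."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]


def average(param_lists: list[list[float]]) -> list[float]:
    """Step 3: element-wise unweighted mean, like the diagram's "Ave." arrow."""
    k = len(param_lists)
    return [sum(col) / k for col in zip(*param_lists)]


def federated_training(client_data: list[list[tuple[float, float]]],
                       rounds: int = 100) -> list[float]:
    global_weights = [0.0, 0.0]
    for _ in range(rounds):
        # Steps 1 and 2: each client starts from the global model, trains on its
        # own data, and sends back only its parameters (never the data itself).
        sent = [local_train(global_weights, data) for data in client_data]
        # Step 3: the server averages the received parameters.
        # Step 4: the average becomes every client's starting point next round.
        global_weights = average(sent)
    return global_weights


# Hypothetical clients A, B, C, each holding two points from y = 2x + 1.
clients = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(1.0, 3.0), (2.0, 5.0)],
    [(-1.0, -1.0), (0.0, 1.0)],
]
w, b = federated_training(clients)
print(f"w = {w:.3f}, b = {b:.3f}")  # prints: w = 2.000, b = 1.000
```

The unweighted mean matches the diagram. The widely used FedAvg algorithm instead weights each client by its number of training samples, which matters when clients hold very different amounts of data.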

### Interpretation

This diagram visually explains a **privacy-preserving federated learning architecture**. It demonstrates how multiple entities (clients) can jointly train a shared AI model while keeping their sensitive training data local and protected.

* **Core Problem Solved:** It addresses the fundamental tension in collaborative AI: needing to combine knowledge from diverse datasets without compromising the privacy or security of the underlying data.

* **Mechanism Relationship:** **Differential Privacy** acts at the *communication boundary*. It adds calibrated random noise that limits what the transmitted parameters reveal about the underlying training data. **Secure Computation** acts at the *aggregation point*. It ensures that the server cannot inspect individual client parameters during the averaging process. Together, they provide defense in depth.

* **Process Significance:** The cyclical nature (repeating steps ① to ④) is crucial. It shows that model improvement is incremental. Each cycle lets the global model benefit from patterns in all clients' data without any party directly accessing that data. This is foundational for applications in healthcare, finance, or any field where data cannot be centralized because of regulation, competition, or privacy concerns.

* **Notable Design Choice:** The use of clear, numbered steps and distinct icons for the security mechanisms makes a complex technical process accessible. The inclusion of concrete (though illustrative) numerical matrices helps ground the abstract concept of "weight parameters" in a tangible form.
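
The diagram does not say how "Secure Computation" is realized. Homomorphic encryption, general multi-party computation, and dedicated secure-aggregation protocols are all possibilities. One simple technique is pairwise additive masking. Each pair of clients shares a random mask that one client adds and the other subtracts. As a result, each masked upload looks random to the server, while the masks cancel in the sum. The sketch below makes several assumptions. It uses fixed-point encoding with a hypothetical `SCALE` and `MODULUS`, and it generates all masks in one place for simulation. Real protocols derive each pairwise mask from a key agreement between the two clients and must handle clients that drop out. This sketch omits both.

```python
import secrets

MODULUS = 2**32  # hypothetical ring size; all arithmetic is done mod MODULUS
SCALE = 1000     # hypothetical fixed-point scale (3 decimal places)


def encode(values: list[float]) -> list[int]:
    """Map floats to integers mod MODULUS (negatives wrap around)."""
    return [round(v * SCALE) % MODULUS for v in values]


def decode(values: list[int]) -> list[float]:
    """Inverse of encode: the upper half of the ring represents negative numbers."""
    out = []
    for v in values:
        if v >= MODULUS // 2:
            v -= MODULUS
        out.append(v / SCALE)
    return out


def pairwise_masks(num_clients: int, length: int) -> list[list[int]]:
    """For every pair i < j, client i adds a random vector and client j subtracts it."""
    masks = [[0] * length for _ in range(num_clients)]
    for i in range(num_clients):
        for j in range(i + 1, num_clients):
            r = [secrets.randbelow(MODULUS) for _ in range(length)]
            masks[i] = [(m + x) % MODULUS for m, x in zip(masks[i], r)]
            masks[j] = [(m - x) % MODULUS for m, x in zip(masks[j], r)]
    return masks


def masked_upload(weights: list[float], mask: list[int]) -> list[int]:
    """Client side: what the server receives instead of the raw weights."""
    return [(e + m) % MODULUS for e, m in zip(encode(weights), mask)]


def secure_average(uploads: list[list[int]]) -> list[float]:
    """Server side: the masks cancel in the sum, so only the aggregate is recovered."""
    total = [sum(col) % MODULUS for col in zip(*uploads)]
    return [v / len(uploads) for v in decode(total)]


client_weights = [[1.0, 3.0], [0.0, 2.0], [2.0, -1.0]]  # hypothetical values
masks = pairwise_masks(len(client_weights), 2)
uploads = [masked_upload(w, m) for w, m in zip(client_weights, masks)]
print(secure_average(uploads))  # [1.0, 1.333...], the same as a plain average
```

Precision is limited to `1/SCALE`, and the true sum must stay within half the ring for decoding to be correct.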
