## Flowchart: Federated Learning Process with Differential Privacy and Secure Computation

### Overview

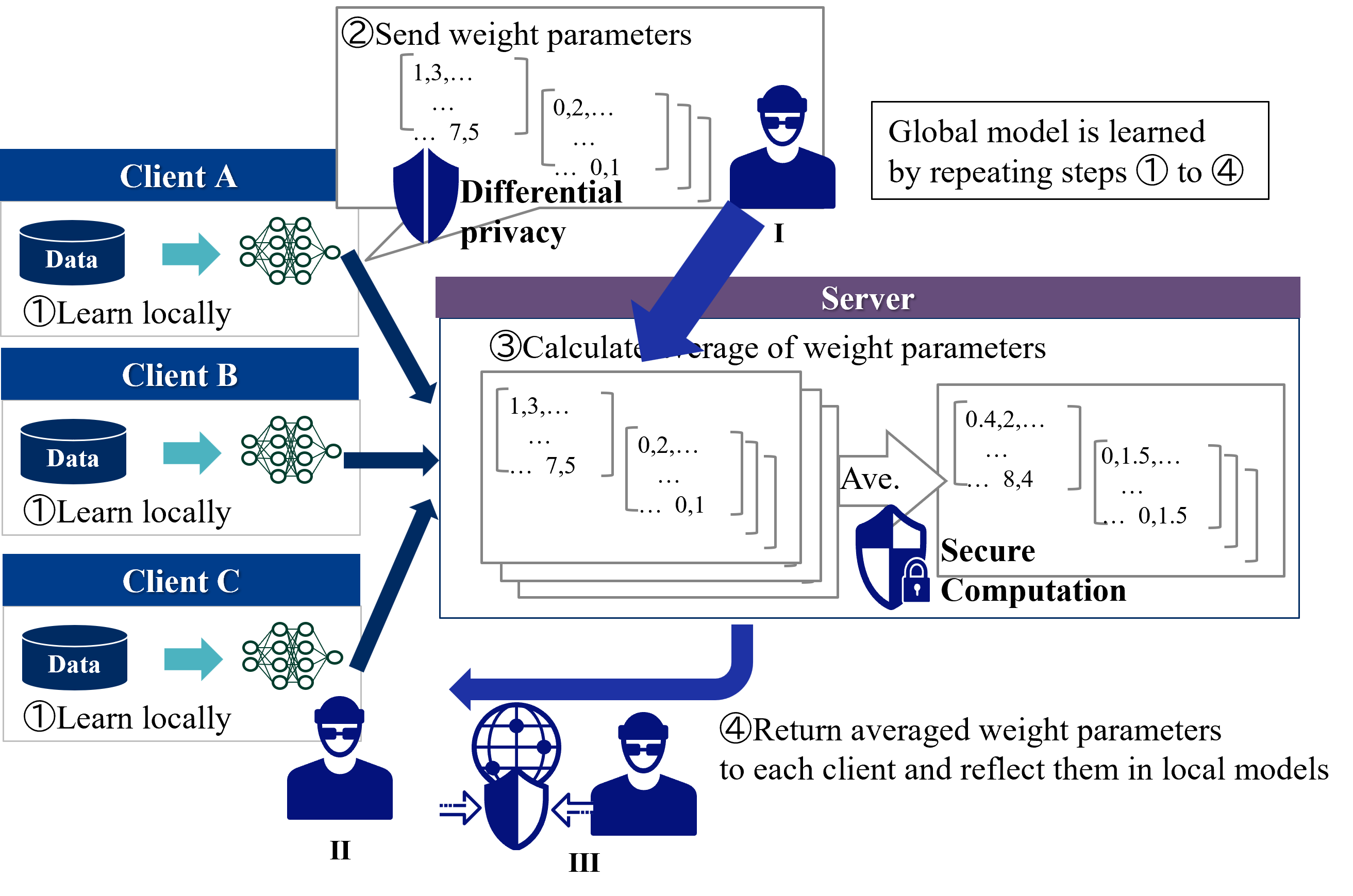

The diagram illustrates a federated learning workflow where multiple clients (A, B, C) collaboratively train a global model while preserving data privacy. The process involves four iterative steps: local learning, secure parameter transmission, server-side aggregation, and model update. Key elements include differential privacy mechanisms and secure computation protocols.

### Components

1. **Clients (A, B, C)**

- Each client has a "Data" repository and a neural network (represented by interconnected nodes).

- All clients perform identical local learning (Step 1).

2. **Server**

- Central node responsible for aggregating weight parameters from clients.

- Implements "Secure Computation" (lock icon) and "Differential Privacy" (shield icon).

3. **Steps**

- **Step 1**: "Learn locally" (circle icon)

- **Step 2**: "Send weight parameters" (arrow with shield)

- **Step 3**: "Calculate average of weight parameters" (server-side aggregation)

- **Step 4**: "Return averaged weight parameters" (arrow with globe icon)

4. **Textual Elements**

- "Global model is learned by repeating steps ① to ④" (top-right box).

- "Differential privacy" and "Secure Computation" labels with corresponding icons.
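The four steps listed above can be sketched as a plain federated-averaging loop. This is a minimal illustration, not the diagram's actual implementation: the linear model, the toy data, and the names `learn_locally`, `average_weights`, and `train_global_model` are all assumptions, and neither differential privacy nor secure computation is applied here.

```python
from typing import Dict, List, Sequence, Tuple

Weights = List[float]
Sample = Tuple[Sequence[float], float]  # (features, target)


def learn_locally(weights: Weights, data: List[Sample],
                  lr: float = 0.1, epochs: int = 5) -> Weights:
    """Step 1: gradient descent on one client's private data (linear model)."""
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for features, target in data:
            error = sum(wi * xi for wi, xi in zip(w, features)) - target
            for i, xi in enumerate(features):
                grad[i] += error * xi / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w


def average_weights(client_weights: List[Weights]) -> Weights:
    """Step 3: element-wise mean of the clients' weight vectors."""
    return [sum(column) / len(client_weights)
            for column in zip(*client_weights)]


def train_global_model(client_data: Dict[str, List[Sample]],
                       n_weights: int, rounds: int = 60) -> Weights:
    global_weights = [0.0] * n_weights
    for _ in range(rounds):
        # Steps 1-2: every client starts from the current global weights,
        # trains locally, and "sends" only the resulting weights.
        received = [learn_locally(global_weights, data)
                    for data in client_data.values()]
        # Steps 3-4: the server averages the weights and returns the
        # result, which becomes the starting point of the next round.
        global_weights = average_weights(received)
    return global_weights


# Hypothetical toy data generated from y = 2*x0 - 1*x1.
# Each client's samples are only ever passed to learn_locally.
client_data = {
    "A": [((1.0, 0.0), 2.0), ((0.0, 1.0), -1.0)],
    "B": [((1.0, 1.0), 1.0), ((1.0, -1.0), 3.0)],
    "C": [((2.0, 0.0), 4.0), ((0.0, 1.0), -1.0)],
}
global_weights = train_global_model(client_data, n_weights=2)
```

On this toy data the averaged weights approach the generating coefficients (2 and -1) because every client's data is consistent with the same model; with heterogeneous client data, plain averaging can behave less predictably.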

### Detailed Analysis

- **Client Workflow**:

- Each client trains a local neural network on its own data (Step 1).

- Weight parameters (e.g., `1,3,...,7.5`, `0.2,...,0.1`) are sent to the server with differential privacy (Step 2).

- **Server Workflow**:

- Receives encrypted weight parameters from all clients.

- Computes the average of these parameters using secure computation (Step 3).

- Returns the averaged parameters (e.g., `0.4,2,...,8.4`, `0.1.5,...,0.1.5`) to clients (Step 4).

- **Iterative Process**:

- The cycle repeats (①→④) to refine the global model.
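The client-side privacy step (Step 2) might look like the following sketch. The diagram does not say which differential privacy mechanism is used, so this assumes one common choice: clip the weight vector to a maximum L2 norm, then add Gaussian noise. The function names and the `max_norm` and `noise_std` parameters are hypothetical, and calibrating the noise to a specific privacy budget is outside the scope of the sketch.

```python
import math
import random
from typing import List


def clip_l2(vector: List[float], max_norm: float) -> List[float]:
    """Scale the vector down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(v * v for v in vector))
    if norm <= max_norm or norm == 0.0:
        return list(vector)
    scale = max_norm / norm
    return [v * scale for v in vector]


def privatize(weights: List[float], max_norm: float,
              noise_std: float, rng: random.Random) -> List[float]:
    """Clip, then add independent Gaussian noise to every coordinate.

    Assumption: noise_std is chosen elsewhere to match the desired
    privacy guarantee; this function only applies it.
    """
    clipped = clip_l2(weights, max_norm)
    return [w + rng.gauss(0.0, noise_std) for w in clipped]


# A client would call this just before sending its weights (Step 2).
rng = random.Random(0)  # seeded only to make the example repeatable
noisy_weights = privatize([3.0, 4.0], max_norm=1.0, noise_std=0.1, rng=rng)
```

Clipping bounds how much any one client can shift the average, and the independent noise partially cancels when the server averages many clients' weights, which is why a larger client population generally tolerates the same per-client noise better.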

### Key Observations

1. **Privacy Preservation**: Differential privacy (shield icon) is applied to the weight parameters before they are sent, limiting what those parameters can reveal about any individual training record.

2. **Security**: Secure computation (lock icon) lets the server compute the average without any single client's parameters being exposed in the clear.

3. **Decentralization**: No client shares raw data; only model weights are exchanged.

4. **Scalability**: The process is identical across clients (A, B, C), which implies a shared model architecture, since weights can only be averaged element-wise when every client trains the same network.
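The diagram does not name the secure computation protocol. One family of techniques that fits the description is additive masking, sketched below under that assumption: each pair of clients shares a random mask that one adds and the other subtracts, so the masks cancel in the sum and the server learns only the aggregate. All names, the fixed-point `SCALE`, and the `MODULUS` are hypothetical. A real protocol would derive the masks from pairwise key agreement and handle client dropouts, whereas here they are simulated in one process.

```python
import random
from typing import Dict, List

MODULUS = 2 ** 32   # all arithmetic is done modulo this value
SCALE = 10 ** 4     # fixed-point scaling: 4 decimal places


def encode(weights: List[float]) -> List[int]:
    """Map floats to fixed-point integers modulo MODULUS."""
    return [round(w * SCALE) % MODULUS for w in weights]


def pairwise_masks(client_ids: List[str], length: int,
                   rng: random.Random) -> Dict[str, List[int]]:
    """Build per-client masks that sum to zero (mod MODULUS) overall."""
    masks = {cid: [0] * length for cid in client_ids}
    for i, a in enumerate(client_ids):
        for b in client_ids[i + 1:]:
            shared = [rng.randrange(MODULUS) for _ in range(length)]
            masks[a] = [(m + s) % MODULUS for m, s in zip(masks[a], shared)]
            masks[b] = [(m - s) % MODULUS for m, s in zip(masks[b], shared)]
    return masks


def mask_weights(weights: List[float], mask: List[int]) -> List[int]:
    """Client side: what is actually sent to the server."""
    return [(e + m) % MODULUS for e, m in zip(encode(weights), mask)]


def secure_average(masked: List[List[int]]) -> List[float]:
    """Server side: sum the masked vectors, then decode the mean."""
    total = [sum(column) % MODULUS for column in zip(*masked)]
    # Values in the upper half of the range represent negative numbers.
    signed = [t - MODULUS if t >= MODULUS // 2 else t for t in total]
    return [s / SCALE / len(masked) for s in signed]


# Hypothetical weights for three clients.
weights = {"A": [1.0, 3.0], "B": [0.2, -0.1], "C": [0.0, 0.1]}
masks = pairwise_masks(list(weights), length=2, rng=random.Random(0))
masked = [mask_weights(weights[cid], masks[cid]) for cid in weights]
average = secure_average(masked)
```

Integer arithmetic modulo a fixed value is used instead of floats so that the masks cancel exactly; the price is the rounding introduced by the fixed-point encoding.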

### Interpretation

This diagram represents a **federated learning architecture** of the kind used in privacy-sensitive applications (e.g., healthcare, finance). By combining differential privacy and secure computation, it aims to balance model accuracy with data confidentiality; the noise added for differential privacy typically costs some accuracy. Repeating steps ① to ④ progressively refines the global model, although convergence is not guaranteed in general. Because every client follows the same workflow, further clients can be added without changing the protocol. The emphasis on secure parameter transmission is relevant to data-protection regulations such as GDPR, which makes the approach attractive for industries with strict data governance requirements, though these mechanisms alone do not establish compliance.