## Causal Diagram: Fairness Scenarios

### Overview

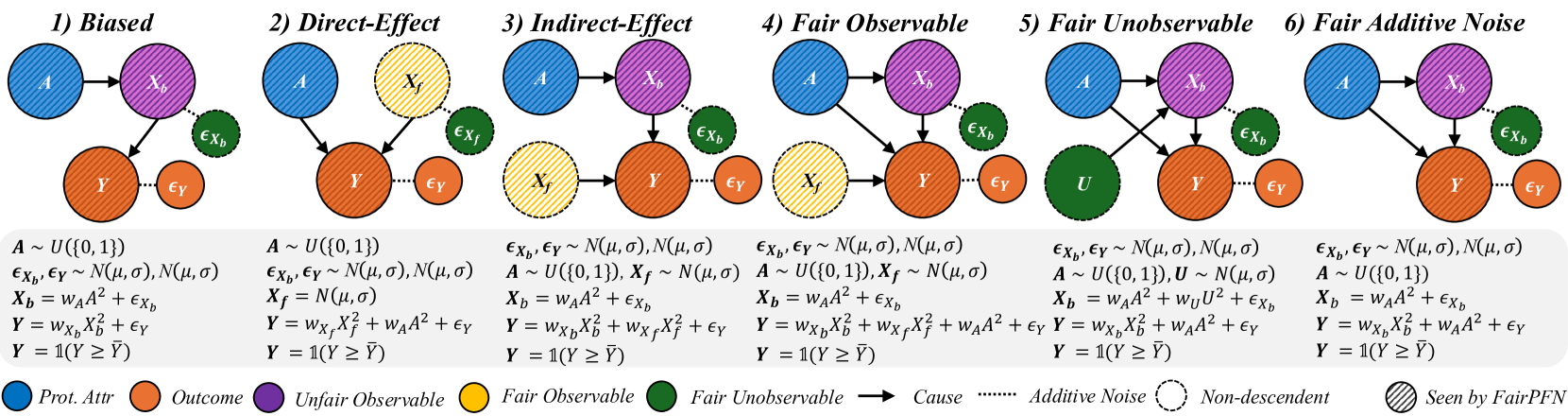

The image presents six causal diagrams illustrating different scenarios related to fairness and bias in machine learning models. Each diagram depicts relationships between protected attributes, outcomes, and other variables, highlighting potential sources of unfairness.

### Components

* **Nodes:** Represent variables.

* Blue, diagonally-striped circle: Prot. Attr (Protected Attribute)

* Orange, diagonally-striped circle: Outcome

* Yellow, dashed-outline circle: Fair Observable

* Purple, diagonally-striped circle: Unfair Observable

* Green, dashed-outline circle: Fair Unobservable

* **Edges:** Represent causal relationships.

* Solid arrow: Cause

* Dotted line: Additive Noise

* Dashed line: Non-descendent

* **Text:**

* Titles above each diagram: 1) Biased, 2) Direct-Effect, 3) Indirect-Effect, 4) Fair Observable, 5) Fair Unobservable, 6) Fair Additive Noise

* Equations and distributions below the diagrams defining the relationships between variables.

* **Legend:** Located at the bottom of the image, explaining the meaning of the node colors and edge types.

* "Prot. Attr": Blue, diagonally-striped circle

* "Outcome": Orange, diagonally-striped circle

* "Unfair Observable": Purple, diagonally-striped circle

* "Fair Observable": Yellow, dashed-outline circle

* "Fair Unobservable": Green, dashed-outline circle

* "Cause": Solid arrow

* "Additive Noise": Dotted line

* "Non-descendent": Dashed line

* "Seen by FairPFN": Diagonally-striped fill

### Detailed Analysis

Each diagram includes the following variables:

* A: Protected Attribute (blue, diagonally-striped)

* Y: Outcome (orange, diagonally-striped)

* Xb: Unfair Observable (purple, diagonally-striped)

* Xf: Fair Observable (yellow, dashed-outline)

* U: Fair Unobservable (green, dashed-outline)

* εXb, εXf, εY: Additive Noise (green, dashed-outline or orange, dashed-outline)

**1) Biased:**

* A -> Xb -> Y

* Y has additive noise εY

* Xb has additive noise εXb

* Equations:

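* $A \sim \mathcal{U}(\{0, 1\})$

* $\epsilon_{X_b} \sim \mathcal{N}(\mu, \sigma)$, $\epsilon_Y \sim \mathcal{N}(\mu, \sigma)$

* $X_b = w_A A^2 + \epsilon_{X_b}$

* $Y = w_{X_b} X_b^2 + \epsilon_Y$

* $Y = \mathbb{1}(Y \geq \bar{Y})$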
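The equations for the Biased scenario can be sampled directly. The following is a minimal sketch, not the figure authors' generator: the function name `sample_biased`, the default weights, μ = 0, σ = 1, and the choice of the sample mean as the threshold Ȳ are all assumptions made for illustration.

```python
import random
from statistics import mean

def sample_biased(n, w_a=1.0, w_xb=1.0, mu=0.0, sigma=1.0, seed=0):
    """Draw n samples from the 'Biased' scenario: A -> X_b -> Y."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        a = rng.randint(0, 1)              # A ~ U({0, 1})
        eps_xb = rng.gauss(mu, sigma)      # additive noise on X_b
        eps_y = rng.gauss(mu, sigma)       # additive noise on Y
        x_b = w_a * a**2 + eps_xb          # X_b = w_A A^2 + eps_Xb
        y_cont = w_xb * x_b**2 + eps_y     # Y = w_Xb X_b^2 + eps_Y
        rows.append((a, x_b, y_cont))
    # Assumption: the threshold Y-bar is the sample mean of the continuous Y.
    y_bar = mean(r[2] for r in rows)
    return [(a, x_b, int(y >= y_bar)) for a, x_b, y in rows]

data = sample_biased(5000)
# Positive-outcome rate per protected group.
rate = {g: mean(y for a, _, y in data if a == g) for g in (0, 1)}
print(rate)
```

With these assumed settings the positive rate differs between the two groups, even though A influences Y only through Xb.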

**2) Direct-Effect:**

* A -> Y

* Xf -> Y

* Y has additive noise εY

* Xf has additive noise εXf

* Equations:

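* $A \sim \mathcal{U}(\{0, 1\})$

* $\epsilon_{X_f} \sim \mathcal{N}(\mu, \sigma)$, $\epsilon_Y \sim \mathcal{N}(\mu, \sigma)$

* $X_f \sim \mathcal{N}(\mu, \sigma)$

* $Y = w_{X_f} X_f^2 + w_A A^2 + \epsilon_Y$

* $Y = \mathbb{1}(Y \geq \bar{Y})$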
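To see what a direct effect means in these equations, one can hold Xf and εY fixed and flip A. The sketch below does this for the continuous outcome (before thresholding); the helper `direct_effect_y` and the weight values are hypothetical.

```python
import random

def direct_effect_y(a, x_f, eps_y, w_a=1.0, w_xf=1.0):
    """Continuous outcome in the 'Direct-Effect' scenario."""
    return w_xf * x_f**2 + w_a * a**2 + eps_y

rng = random.Random(0)
x_f = rng.gauss(0.0, 1.0)     # fair observable, independent of A
eps_y = rng.gauss(0.0, 1.0)   # additive noise on Y

# Same individual, two values of the protected attribute.
y_a0 = direct_effect_y(0, x_f, eps_y)
y_a1 = direct_effect_y(1, x_f, eps_y)
print(y_a1 - y_a0)  # the gap is w_a, up to floating-point rounding
```

Because A is binary, the A² term is either 0 or 1, so the gap between the two outcomes is exactly the weight on A.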

**3) Indirect-Effect:**

* A -> Xb -> Y

* Xf -> Y

* Y has additive noise εY

* Xb has additive noise εXb

* Equations:

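* $\epsilon_{X_b} \sim \mathcal{N}(\mu, \sigma)$, $\epsilon_Y \sim \mathcal{N}(\mu, \sigma)$

* $A \sim \mathcal{U}(\{0, 1\})$, $X_f \sim \mathcal{N}(\mu, \sigma)$

* $X_b = w_A A^2 + \epsilon_{X_b}$

* $Y = w_{X_b} X_b^2 + w_{X_f} X_f^2 + \epsilon_Y$

* $Y = \mathbb{1}(Y \geq \bar{Y})$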
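As a worked step, substituting the listed equation for Xb into the equation for Y makes the indirect path explicit. The second line uses only the fact that A is binary, so that A² = A⁴ = A:

$$
\begin{aligned}
Y &= w_{X_b}\,\bigl(w_A A^2 + \epsilon_{X_b}\bigr)^2 + w_{X_f} X_f^2 + \epsilon_Y \\
  &= w_{X_b}\,\bigl(w_A^2 A + 2 w_A A\,\epsilon_{X_b} + \epsilon_{X_b}^2\bigr) + w_{X_f} X_f^2 + \epsilon_Y
\end{aligned}
$$

Every term involving A carries a factor of the weight on A in the Xb equation, so setting that weight to zero removes the dependence of Y on A in this scenario.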

**4) Fair Observable:**

* A -> Xb -> Y

* A -> Xf -> Y

* Y has additive noise εY

* Xb has additive noise εXb

* Equations:

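* $\epsilon_{X_b} \sim \mathcal{N}(\mu, \sigma)$, $\epsilon_Y \sim \mathcal{N}(\mu, \sigma)$

* $A \sim \mathcal{U}(\{0, 1\})$, $X_f \sim \mathcal{N}(\mu, \sigma)$

* $X_b = w_A A^2 + \epsilon_{X_b}$

* $Y = w_{X_b} X_b^2 + w_{X_f} X_f^2 + w_A A^2 + \epsilon_Y$

* $Y = \mathbb{1}(Y \geq \bar{Y})$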

**5) Fair Unobservable:**

* A -> Xb -> Y

* U -> Xb -> Y

* A -> Y

* U -> Y

* Y has additive noise εY

* Xb has additive noise εXb

* Equations:

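* $\epsilon_{X_b} \sim \mathcal{N}(\mu, \sigma)$, $\epsilon_Y \sim \mathcal{N}(\mu, \sigma)$

* $A \sim \mathcal{U}(\{0, 1\})$, $U \sim \mathcal{N}(\mu, \sigma)$

* $X_b = w_A A^2 + w_U U^2 + \epsilon_{X_b}$

* $Y = w_{X_b} X_b^2 + w_A A^2 + \epsilon_Y$

* $Y = \mathbb{1}(Y \geq \bar{Y})$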
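The distinction between observed and unobserved variables can be sketched by sampling U but never returning it. This follows the equations exactly as listed above and stops at the continuous outcome; the function name, the unit weights, and μ = 0, σ = 1 are assumptions for illustration.

```python
import random

def sample_fair_unobservable(n, w_a=1.0, w_u=1.0, w_xb=1.0, seed=0):
    """Sample the 'Fair Unobservable' scenario, exposing only observed columns."""
    rng = random.Random(seed)
    observed = []
    for _ in range(n):
        a = rng.randint(0, 1)                  # A ~ U({0, 1})
        u = rng.gauss(0.0, 1.0)                # U is sampled but never returned
        x_b = w_a * a**2 + w_u * u**2 + rng.gauss(0.0, 1.0)
        y = w_xb * x_b**2 + w_a * a**2 + rng.gauss(0.0, 1.0)
        observed.append({"A": a, "X_b": x_b, "Y": y})
    return observed

rows = sample_fair_unobservable(3)
print(sorted(rows[0]))  # column names available to a model
```

A model trained on `rows` has no access to U, which is what makes this scenario harder than the Fair Observable one.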

**6) Fair Additive Noise:**

* A -> Xb -> Y

* Y has additive noise εY

* Xb has additive noise εXb

* Equations:

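* $\epsilon_{X_b} \sim \mathcal{N}(\mu, \sigma)$, $\epsilon_Y \sim \mathcal{N}(\mu, \sigma)$

* $A \sim \mathcal{U}(\{0, 1\})$

* $X_b = w_A A^2 + \epsilon_{X_b}$

* $Y = w_{X_b} X_b^2 + w_A A^2 + \epsilon_Y$

* $Y = \mathbb{1}(Y \geq \bar{Y})$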
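One possible reading of this scenario is that the noise term εXb is the fair part of Xb. Under that reading, and because A is binary, the noise can be approximated by subtracting the per-group mean of Xb. The sketch below illustrates this idea only; it is not taken from the figure, and the weight `w_a = 2.0` and sample size are hypothetical.

```python
import random
from statistics import mean

rng = random.Random(0)
w_a = 2.0  # hypothetical weight on A
samples = []
for _ in range(2000):
    a = rng.randint(0, 1)
    eps_xb = rng.gauss(0.0, 1.0)
    x_b = w_a * a**2 + eps_xb          # X_b = w_A A^2 + eps_Xb
    samples.append((a, x_b, eps_xb))   # keep the true noise for comparison

# Estimate the A-dependent part of X_b by the mean of X_b within each group.
group_mean = {g: mean(x for a, x, _ in samples if a == g) for g in (0, 1)}
residuals = [x - group_mean[a] for a, x, _ in samples]

# Largest gap between the recovered residual and the true noise.
max_gap = max(abs(r - e) for r, (_, _, e) in zip(residuals, samples))
print(max_gap)
```

The residual differs from the true noise only by the sample mean of the noise within each group, which shrinks as the sample grows.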

### Key Observations

* The diagrams illustrate different ways in which a protected attribute (A) can influence an outcome (Y), either directly or indirectly through other variables.

* The presence of "fair" and "unfair" observables (Xf and Xb) highlights the potential for bias to be introduced or mitigated depending on which variables are used in a model.

* Additive noise (ε) is present in all diagrams, representing random variation or unobserved factors.

* The equations below each diagram are structural equations: each non-root variable is a weighted sum of its squared parents plus additive Gaussian noise.

* The final equation, $Y = \mathbb{1}(Y \geq \bar{Y})$, thresholds the continuous outcome at $\bar{Y}$, so the task is binary classification.
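Whether A can influence a variable at all is a question of graph reachability. As a small sketch, the edges of the Indirect-Effect scenario (as listed above, with noise terms omitted) can be stored in an adjacency dictionary and searched for descendants of A; the node names and the helper `descendants` are illustrative.

```python
# Edges of the 'Indirect-Effect' scenario; noise terms are omitted.
edges = {"A": ["X_b"], "X_b": ["Y"], "X_f": ["Y"], "Y": []}

def descendants(graph, node):
    """Return every node reachable from `node` along directed edges."""
    seen = set()
    stack = list(graph[node])
    while stack:
        cur = stack.pop()
        if cur not in seen:
            seen.add(cur)
            stack.extend(graph[cur])
    return seen

desc = descendants(edges, "A")
non_desc = set(edges) - desc - {"A"}
print(sorted(desc))      # ['X_b', 'Y']
print(sorted(non_desc))  # ['X_f']
```

Here Xf is a non-descendant of A, which matches its role as a fair observable in that scenario.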

### Interpretation

The six scenarios show how the causal path from the protected attribute to the outcome determines where bias enters: directly (A -> Y), indirectly through an unfair observable (A -> Xb -> Y), or alongside fair variables that are observed (Xf), unobserved (U), or present only as additive noise (ε). Identifying which structure applies is a prerequisite for deciding which variables a fair model may rely on. The unobservable and additive-noise scenarios underline how much harder fairness becomes when part of the relevant information is not directly available.