
## Diagram: Neural Network Architecture

### Overview

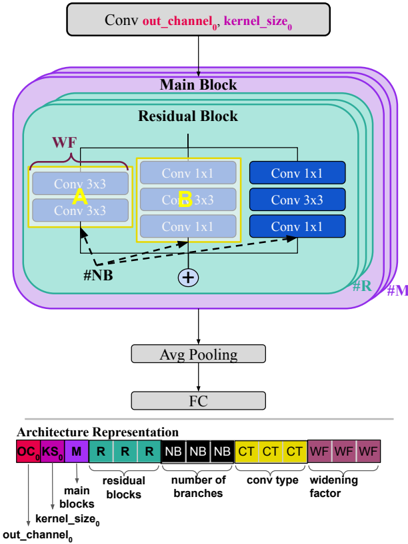

The image depicts a diagram of a neural network architecture, specifically a convolutional neural network (CNN) with residual blocks. The diagram illustrates the flow of data through the network, highlighting the main components and their connections. Below the diagram is a key representing the architecture's components.

### Components

The diagram consists of the following components:

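* **Conv out\_channel₀, kernel\_size₀**: Initial convolutional layer, parameterized by its output channel count and kernel size; it receives the network input.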

* **Main Block**: A larger block encompassing the residual blocks.

* **Residual Block**: A repeating unit within the Main Block.

* **Conv 3x3**: Convolutional layers with a 3x3 kernel.

* **Conv 1x1**: Convolutional layers with a 1x1 kernel.

* **Avg Pooling**: Average pooling layer.

* **FC**: Fully connected layer.

* **#NB**: Number of Branches.

* **#R**: Number of Residual Blocks.

* **#M**: Number of Main Blocks.

* **Architecture Representation**: A key at the bottom of the diagram.

The key at the bottom uses color-coded blocks to represent different architectural elements:

* **KS**: kernel\_size₀ (Red)

* **M**: Main Blocks (Red)

* **R**: Residual Blocks (Red)

* **NB**: Number of Branches (Green)

* **CT**: Conv Type (Blue)

* **WF**: Widening Factor (Brown)

### Detailed Analysis

The diagram shows a data flow starting from the "Conv out\_channel₀, kernel\_size₀" layer at the top. This layer feeds into the "Main Block". Within the "Main Block", there are multiple "Residual Blocks".

Each "Residual Block" contains:

* A "Conv 3x3" layer (yellow) with the label "WF".

* Three "Conv 1x1" layers (blue).

* A summation symbol (+) marking the element-wise addition that merges the residual connection with the block's convolutional output.

The output of the "Main Block" is then passed through an "Avg Pooling" layer, followed by an "FC" (Fully Connected) layer.
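The pooling-then-FC head described above can be sketched in plain Python. This is a minimal illustration, not the diagram's actual implementation: it assumes the pooling is global (each channel is averaged to a single value), which the diagram does not state, and the helper names `global_avg_pool` and `fully_connected` and all numeric values are hypothetical.

```python
from typing import List

# Feature maps laid out as [channels][height][width].
FeatureMaps = List[List[List[float]]]


def global_avg_pool(x: FeatureMaps) -> List[float]:
    """Average each channel's H x W map down to a single value."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in x]


def fully_connected(v: List[float],
                    weights: List[List[float]],
                    bias: List[float]) -> List[float]:
    """Dense layer: out[j] = dot(weights[j], v) + bias[j]."""
    return [sum(w_i * v_i for w_i, v_i in zip(w, v)) + b
            for w, b in zip(weights, bias)]


# Two channels of 2x2 feature maps (made-up values).
features = [
    [[1.0, 3.0], [5.0, 7.0]],  # channel 0, mean 4.0
    [[2.0, 2.0], [2.0, 2.0]],  # channel 1, mean 2.0
]

pooled = global_avg_pool(features)  # one value per channel
logits = fully_connected(pooled,
                         weights=[[1.0, -1.0], [0.5, 0.5]],
                         bias=[0.0, 1.0])
```

Because pooling collapses the spatial dimensions, the FC layer's input size depends only on the channel count, not on the resolution of the feature maps.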

The "Architecture Representation" key at the bottom shows a sequence of colored blocks:

* KS, M, R, R, R, NB, NB, NB, CT, CT, CT, WF, WF, WF.
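To make the layout of this sequence easier to inspect, the sketch below counts how many slots each field occupies. The `FIELD_NAMES` mapping follows the key's labels, while the function name `slot_layout` and the snake_case field names are hypothetical. One plausible reading, which the diagram does not confirm, is that the tripled slots (R, NB, CT, WF) hold one value per main block.

```python
ENCODING = "KS, M, R, R, R, NB, NB, NB, CT, CT, CT, WF, WF, WF"

# Abbreviations as listed in the diagram's key; the snake_case
# names on the right are illustrative, not taken from the source.
FIELD_NAMES = {
    "KS": "kernel_size_0",
    "M": "main_blocks",
    "R": "residual_blocks",
    "NB": "number_of_branches",
    "CT": "conv_type",
    "WF": "widening_factor",
}


def slot_layout(encoding: str) -> dict:
    """Count the slots each field occupies, preserving first-seen order."""
    layout = {}
    for token in (t.strip() for t in encoding.split(",")):
        if token not in FIELD_NAMES:
            raise ValueError(f"unknown field abbreviation: {token!r}")
        name = FIELD_NAMES[token]
        layout[name] = layout.get(name, 0) + 1
    return layout


layout = slot_layout(ENCODING)
total_slots = sum(layout.values())
```

Here `layout` records one slot each for KS and M and three slots each for R, NB, CT, and WF, for 14 slots in total.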

The diagram uses arrows to indicate the direction of data flow. A dashed arrow connects the input of the "Residual Block" to the summation symbol, representing the residual connection.
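The skip path drawn by the dashed arrow amounts to adding a block's input to the output of its convolutional path. The following is a minimal sketch of that idea on flat vectors, with the convolutions replaced by an arbitrary function; `residual` and `halve` are hypothetical names, and the stand-in branch is not meant to model the Conv 3x3 and Conv 1x1 layers in the diagram.

```python
from typing import Callable, List

Vector = List[float]


def residual(branch: Callable[[Vector], Vector]) -> Callable[[Vector], Vector]:
    """Wrap `branch` so its output is added element-wise to its input."""
    def block(x: Vector) -> Vector:
        fx = branch(x)
        if len(fx) != len(x):
            # The addition only works when both paths agree in shape.
            raise ValueError("skip connection needs matching shapes")
        return [a + b for a, b in zip(x, fx)]
    return block


def halve(x: Vector) -> Vector:
    """Stand-in for the convolutional path (hypothetical)."""
    return [0.5 * v for v in x]


block = residual(halve)
out = block([2.0, 4.0])             # x + 0.5 * x
stacked = block(block([2.0, 4.0]))  # two blocks in sequence
```

The shape check reflects a practical constraint: if the convolutional path changes the channel count or resolution, the skip path needs a matching projection before the addition.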

### Key Observations

* The architecture utilizes residual connections, which are common in deep neural networks to mitigate the vanishing gradient problem.

* The use of both 3x3 and 1x1 convolutional layers suggests a division of labor: 3x3 layers extract spatial features, while 1x1 layers mix or resize channels at low cost.

* The "Widening Factor" (WF) label on the 3x3 convolution suggests that the number of channels may be increased in this layer.

* The key at the bottom provides a symbolic representation of the network's structure, allowing for a concise description of the architecture.
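The observations about kernel sizes and the widening factor can be made concrete with a little arithmetic. The sketch below is illustrative only: `conv_params` and `widened` are hypothetical helpers, the base width of 16 and the factor of 4 are made-up values, and treating WF as a plain multiplier on the channel count is an assumption (borrowed from the wide-ResNet convention) that the diagram does not confirm.

```python
def conv_params(in_ch: int, out_ch: int, kernel: int, bias: bool = True) -> int:
    """Parameter count of a 2D convolution with a square kernel."""
    weights = kernel * kernel * in_ch * out_ch
    return weights + (out_ch if bias else 0)


def widened(base_channels: int, widening_factor: int) -> int:
    """Channel count after widening (assumed to be a simple multiple)."""
    return base_channels * widening_factor


base = 16  # hypothetical base width
wf = 4     # hypothetical widening factor
wide = widened(base, wf)

# Same channel counts, different kernel sizes, no bias terms.
p3 = conv_params(wide, wide, kernel=3, bias=False)
p1 = conv_params(wide, wide, kernel=1, bias=False)
ratio = p3 // p1
```

At equal channel counts, a 3x3 convolution carries nine times the weights of a 1x1 convolution, and widening both input and output channels by a factor WF scales the weight count by WF squared.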

### Interpretation

The diagram illustrates a CNN architecture, most likely intended for image recognition or similar tasks. The design is modular: residual blocks can be stacked to create a network of arbitrary depth, and their skip connections let gradients flow more easily, which makes deeper networks trainable.

Within each block, the combination of 3x3 and 1x1 convolutions balances spatial feature extraction against computational cost. The "Widening Factor" suggests that the network may increase the number of feature maps in certain layers to capture more complex patterns. Average pooling before the fully connected layer is a common way to reduce the spatial dimensions of the feature maps, and it can improve generalization by cutting the number of parameters in the final layer.

The architecture representation key gives a compact description of the network's structure, making it easier to understand and reproduce. The diagram is a high-level overview and does not specify the number of filters, activation functions, or other hyperparameters.
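The statement that residual connections ease gradient flow can be made precise with a short derivation. Writing a residual block as the sum of its input and a learned function $F$ (a generic formulation; the diagram does not give the exact form of $F$), the chain rule for a loss $L$ gives:

$$
y = x + F(x), \qquad
\frac{\partial L}{\partial x}
  = \frac{\partial L}{\partial y}\left(I + \frac{\partial F}{\partial x}\right)
$$

The identity term $I$ passes the upstream gradient through unchanged, so even when $\partial F / \partial x$ is small the gradient reaching earlier layers does not vanish through that block.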