
## Diagram: Knowledge Graph Querying Methods Comparison

### Overview

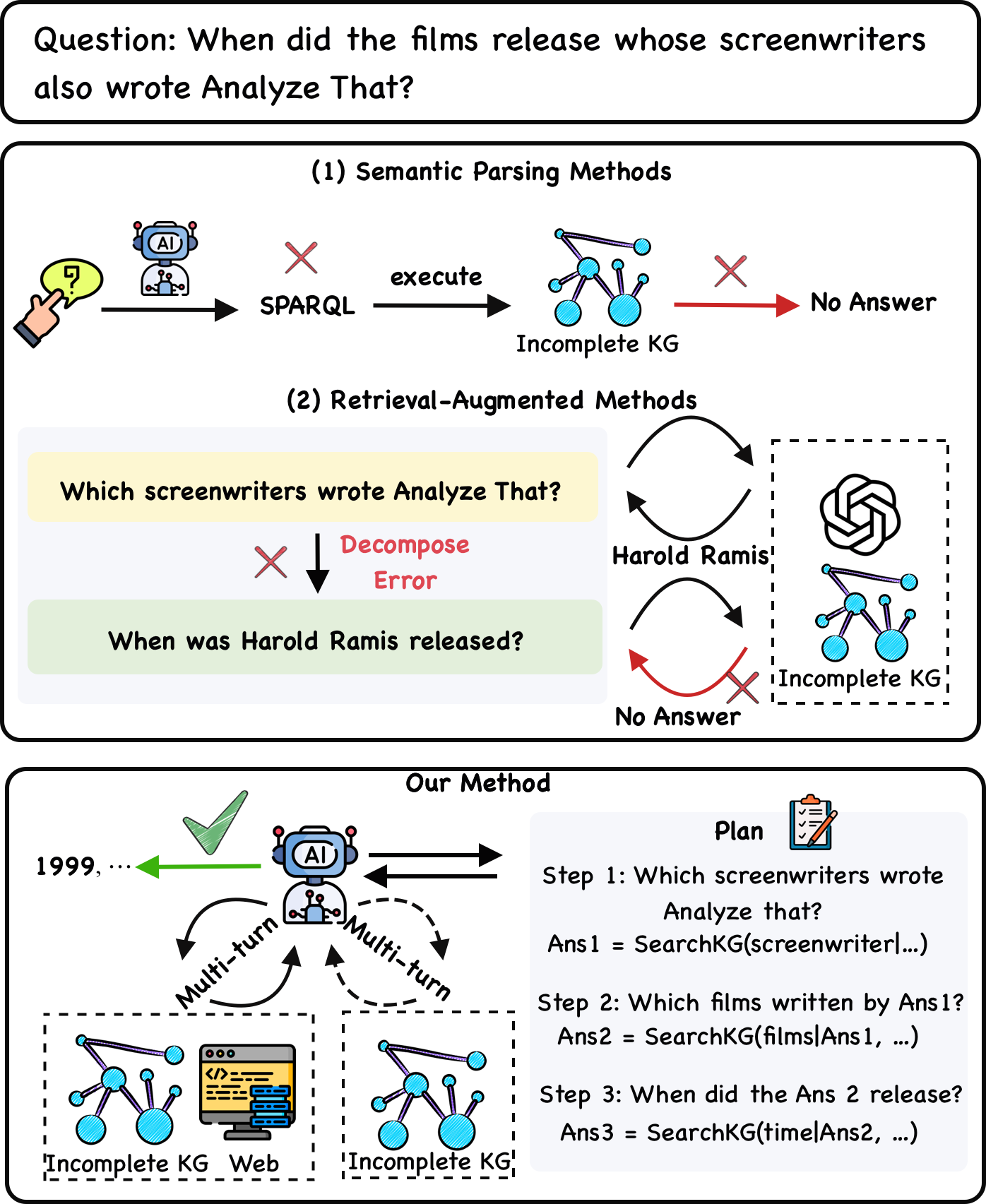

This diagram compares three methods for answering the question: "When did the films release whose screenwriters also wrote Analyze That?". The methods are Semantic Parsing, Retrieval-Augmented Methods, and the authors' proposed approach, labeled "Our Method". The diagram illustrates the flow of information and the failure points of each approach, highlighting the challenges posed by incomplete knowledge graphs (KGs).

### Components/Axes

The diagram is divided into three horizontal sections, each representing a different method. Each section contains visual elements representing the query process, including:

* **Question:** The initial query posed to the system.

* **AI/Agent:** Represented by a brain icon, symbolizing the AI component.

* **Knowledge Graph (KG):** Represented by interconnected nodes and edges.

* **Error/Failure:** Indicated by a red "X" symbol.

* **Output:** Either "No Answer" or an answer such as "1999, ...".

* **Web:** Represented by a browser icon.

* **Plan:** A list of steps to answer the question.

* **Harold Ramis:** A portrait of Harold Ramis.

### Detailed Analysis or Content Details

**Section 1: Semantic Parsing Methods**

* **Question:** "When did the films release whose screenwriters also wrote Analyze That?"

* The AI attempts to "execute" a SPARQL query on the KG.

* The KG is labeled "Incomplete KG".

* The process results in "No Answer", indicated by a red "X".
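To make this failure mode concrete, here is a minimal sketch, assuming a toy KG stored as a set of triples and a hand-written query standing in for the parsed SPARQL (the figure does not show the actual query). "Film A", "Film B" and all years are invented placeholders. Because one required triple is absent, the conjunctive match produces no bindings, mirroring "No Answer".

```python
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]
Binding = Dict[str, str]

# Toy, deliberately incomplete KG. "Film A", "Film B" and the years are
# invented placeholders; the triple linking Harold Ramis to Analyze That
# is intentionally left out to model the missing fact.
KG: Set[Triple] = {
    ("Harold Ramis", "wrote", "Film A"),
    ("Harold Ramis", "wrote", "Film B"),
    ("Film A", "release_year", "2001"),
    ("Film B", "release_year", "2003"),
}

# Hand-written stand-in for the parsed logical form, shaped like a SPARQL
# basic graph pattern. Terms starting with "?" are variables.
QUERY: List[Triple] = [
    ("?writer", "wrote", "Analyze That"),
    ("?writer", "wrote", "?film"),
    ("?film", "release_year", "?year"),
]


def match(pattern: Triple, kg: Set[Triple], binding: Binding) -> List[Binding]:
    """Return every extension of `binding` under which `pattern` matches a KG triple."""
    results: List[Binding] = []
    for triple in kg:
        candidate = dict(binding)
        for term, value in zip(pattern, triple):
            if term.startswith("?"):
                # Bind a fresh variable, or check consistency with an existing binding.
                if candidate.setdefault(term, value) != value:
                    break
            elif term != value:
                break
        else:  # no break: every term matched
            results.append(candidate)
    return results


def execute(query: List[Triple], kg: Set[Triple]) -> List[Binding]:
    """Join the patterns left to right, as a naive query engine would."""
    bindings: List[Binding] = [{}]
    for pattern in query:
        bindings = [b for partial in bindings for b in match(pattern, kg, partial)]
    return bindings


answers = execute(QUERY, KG)
print(answers if answers else "No Answer")  # the first pattern matches nothing
```

Adding the missing triple `("Harold Ramis", "wrote", "Analyze That")` to `KG` makes the same query return both placeholder years: the parsed query itself is fine, but it can only succeed if every fact it needs is present in the KG.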

**Section 2: Retrieval-Augmented Methods**

* **Question:** "When did the films release whose screenwriters also wrote Analyze That?"

* The method first decomposes the question into two sub-questions: "Which screenwriters wrote Analyze That?" and "When was Harold Ramis released?".

* The KG is labeled "Incomplete KG" within a dashed box.

* A portrait of Harold Ramis is shown.

* The process results in "No Answer", indicated by a red "X".
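The failure can be sketched as follows, assuming retrieval reduces to looking up (entity, relation) facts in a toy KG. Real retrieval-augmented systems typically retrieve triples or subgraphs and pass them to a language model, which this sketch does not model. `retrieve`, the relation names, "Film A" and its year are hypothetical placeholders.

```python
from typing import Dict, List

# Toy KG keyed by entity, then relation. "Film A" and its year are invented
# placeholders. The edge from Harold Ramis to the films he wrote is
# deliberately missing to model an incomplete KG.
KG: Dict[str, Dict[str, List[str]]] = {
    "Analyze That": {"screenwriter": ["Harold Ramis"]},
    "Film A": {"release_year": ["2001"]},
}


def retrieve(entity: str, relation: str) -> List[str]:
    """Hypothetical retriever: return the facts stored for (entity, relation)."""
    return KG.get(entity, {}).get(relation, [])


# Sub-question 1: "Which screenwriters wrote Analyze That?"
writers = retrieve("Analyze That", "screenwriter")

# Sub-question 2 as decomposed in the figure: "When was Harold Ramis released?"
# It asks for the release year of a person, so nothing can be retrieved.
as_decomposed = [y for w in writers for y in retrieve(w, "release_year")]

# Even the correct chain (writer -> films -> release year) stalls, because
# the KG lacks the "films_written" edge for Harold Ramis.
films = [f for w in writers for f in retrieve(w, "films_written")]
correct_chain = [y for f in films for y in retrieve(f, "release_year")]

print(as_decomposed or "No Answer")
print(correct_chain or "No Answer")
```

Both lines print "No Answer": the malformed sub-question fails regardless of the KG, and the well-formed chain fails because of the KG gap.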

**Section 3: Our Method**

* **Question:** "When did the films release whose screenwriters also wrote Analyze That?"

* The AI interacts with the web in a "Multi-turn" fashion.

* The KG is labeled "Incomplete KG" within a dashed box.

* The AI generates a "Plan" with three steps:

  * Step 1: "Which screenwriters wrote Analyze That?" `Ans1 = SearchKG(screenwriter(...))`

  * Step 2: "Which films written by Ans1?" `Ans2 = SearchKG(filmsAns1, ...)`

  * Step 3: "When did the Ans 2 release?" `Ans3 = SearchKG(timeAns2, ...)`

* The output is "1999, ...", i.e. at least one release year; the ellipsis presumably stands for further years that the figure omits.
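The figure does not specify how web results enter the plan. The sketch below assumes a simple policy: each plan step first calls a hypothetical `search_kg` (standing in for the figure's `SearchKG`) and falls back to a hypothetical `search_web` only when the KG returns nothing, with each step consuming the previous step's answers. The toy KG, the "web" dictionary, "Film A", "Film B" and all years are invented placeholders.

```python
from typing import Dict, List, Tuple

Key = Tuple[str, str]  # (entity, relation)
Trace = List[Tuple[str, str, str, List[str]]]

# Toy, incomplete KG; "Film A", "Film B" and the years are invented placeholders.
# The films Harold Ramis wrote are deliberately missing from the KG.
KG: Dict[Key, List[str]] = {
    ("Analyze That", "screenwriter"): ["Harold Ramis"],
    ("Film A", "release_year"): ["2001"],
    ("Film B", "release_year"): ["2003"],
}

# Hypothetical stand-in for a web-search tool; a real agent would call a search API.
WEB: Dict[Key, List[str]] = {
    ("Harold Ramis", "films_written"): ["Film A", "Film B"],
}


def search_kg(entity: str, relation: str) -> List[str]:
    """Hypothetical SearchKG: exact lookup in the toy KG."""
    return KG.get((entity, relation), [])


def search_web(entity: str, relation: str) -> List[str]:
    """Hypothetical web lookup, used only when the KG has no answer."""
    return WEB.get((entity, relation), [])


def search(entity: str, relation: str, trace: Trace) -> List[str]:
    """One turn: try the KG first, fall back to the web, and log the source."""
    hits, source = search_kg(entity, relation), "KG"
    if not hits:
        hits, source = search_web(entity, relation), "Web"
    trace.append((entity, relation, source, hits))
    return hits


def run_plan(topic: str) -> Tuple[List[str], Trace]:
    """Execute the three plan steps; each step consumes the previous answers."""
    trace: Trace = []
    ans1 = search(topic, "screenwriter", trace)                          # Step 1
    ans2 = [f for w in ans1 for f in search(w, "films_written", trace)]  # Step 2
    ans3 = [y for f in ans2 for y in search(f, "release_year", trace)]   # Step 3
    return ans3, trace


answers, trace = run_plan("Analyze That")
print(answers)  # ['2001', '2003']
for entity, relation, source, hits in trace:
    print(f"{source:>3}: {relation}({entity}) -> {hits}")
```

The trace shows Step 2 being answered from the web while Steps 1 and 3 come from the KG. A KG-only pipeline would stop at Step 2, which is the gap the web interaction is meant to close.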

### Key Observations

* Both Semantic Parsing and Retrieval-Augmented methods fail to provide an answer due to the "Incomplete KG".

* "Our Method" generates a plan, executes it, and returns an answer ("1999, ...").

* "Our Method" interacts with the web over multiple turns, which suggests it draws on external information to fill gaps in the KG.

* The Retrieval-Augmented decomposition contains an error: its second sub-question, "When was Harold Ramis released?", asks for the release date of a person and skips the intermediate step of finding the films he wrote.

### Interpretation

The diagram illustrates how hard it is to answer multi-hop questions when the KG is incomplete. Semantic Parsing needs the KG to contain every fact its query touches: if a single fact is missing, the executed query returns nothing. Retrieval-Augmented methods decompose the question into sub-questions but remain limited by what the KG contains, and the malformed sub-question about Harold Ramis points to a failure to identify the correct entities or relations.

"Our Method" is presented as more robust. It builds an explicit step-by-step plan and, through multi-turn interaction with the web, can draw on external information where the KG falls short, producing a plausible answer. The "1999, ..." output shows that it returns concrete results, although the figure does not show the full answer list. The dashed boxes around "Incomplete KG" in Sections 2 and 3 may signal that these methods treat the KG as only one, imperfect, source of evidence, though the figure does not state this explicitly. Overall, the diagram argues for combining KG querying with external knowledge sources to make question answering more reliable and complete.