## Diagram: Differentiable Meta-Level Reasoning and Object-Level Reasoning System

### Overview

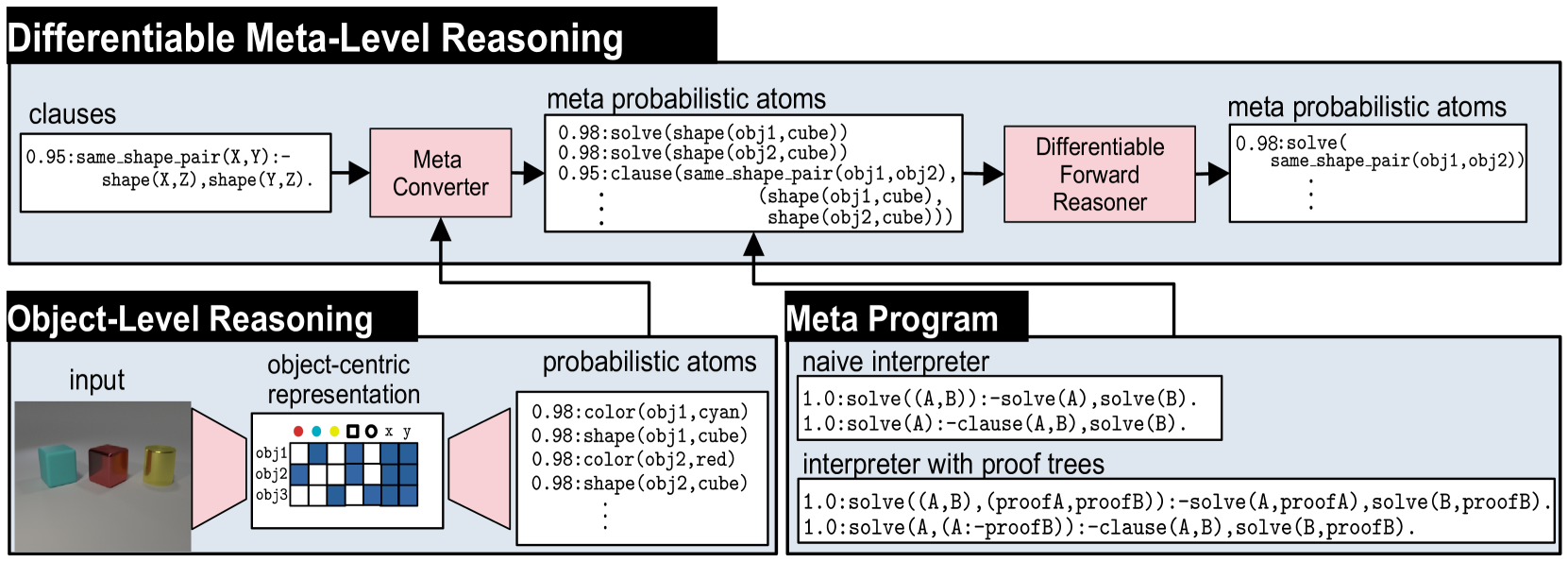

This diagram illustrates a two-tiered reasoning system that combines **Object-Level Reasoning** with **Differentiable Meta-Level Reasoning**. The object level converts an image of objects into probabilistic atoms, that is, symbolic facts with attached weights. The meta level rewrites these atoms, together with weighted clauses, as meta atoms and runs differentiable forward reasoning over them under a meta program.

### Components

The diagram is divided into three primary, interconnected blocks:

1. **Differentiable Meta-Level Reasoning (Top Block):**
   * **Input:** `clauses` (e.g., `0.95:same_shape_pair(X,Y):- shape(X,Z),shape(Y,Z).`)
   * **Component 1:** `Meta Converter` (pink box).
   * **Intermediate Output:** `meta probabilistic atoms` (e.g., `0.98:solve(shape(obj1,cube))`, `0.95:clause(same_shape_pair(obj1,obj2), (shape(obj1,cube), shape(obj2,cube)))`).
   * **Component 2:** `Differentiable Forward Reasoner` (pink box).
   * **Final Output:** `meta probabilistic atoms` (e.g., `0.98:solve(same_shape_pair(obj1,obj2))`).

2. **Object-Level Reasoning (Bottom-Left Block):**
   * **Input:** `input` - A photograph showing three 3D objects: a cyan cube, a red cube, and a yellow cylinder.
   * **Component 1:** `object-centric representation` - A schematic grid with rows labeled `obj1`, `obj2`, `obj3` and columns with icons for color (red, cyan, yellow dots), shape (square, circle), and spatial coordinates (`x`, `y`). The grid contains blue and white cells indicating feature presence/absence.
   * **Output:** `probabilistic atoms` (e.g., `0.98:color(obj1,cyan)`, `0.98:shape(obj1,cube)`, `0.98:color(obj2,red)`, `0.98:shape(obj2,cube)`).

3. **Meta Program (Bottom-Right Block):**
   * This block defines the logical rules for the reasoning system.
   * **Sub-component 1:** `naive interpreter`
     * Rule 1: `1.0:solve((A,B)):-solve(A),solve(B).`
     * Rule 2: `1.0:solve(A):-clause(A,B),solve(B).`
   * **Sub-component 2:** `interpreter with proof trees`
     * Rule 1: `1.0:solve((A,B),(proofA,proofB)):-solve(A,proofA),solve(B,proofB).`
     * Rule 2: `1.0:solve(A,(A:-proofB)):-clause(A,B),solve(B,proofB).`
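The two `naive interpreter` rules can be read as a recursive procedure. The Python sketch below is one such reading, restricted to ground atoms. The function name `solve`, the `facts` and `clauses` dictionaries, and the use of a product for conjunction and `max` over alternative derivations are all assumptions made for illustration; the diagram does not specify how weights are combined.

```python
# Ground atoms are plain strings; a conjunction (A,B) is a tuple of atoms.
# All names and the scoring scheme are illustrative assumptions.

def solve(goal, facts, clauses):
    """Return a score in [0, 1] for `goal` under the two naive-interpreter rules."""
    # Rule 1: solve((A,B)) :- solve(A), solve(B).
    if isinstance(goal, tuple):
        score = 1.0
        for sub in goal:
            score *= solve(sub, facts, clauses)  # assumed: product as conjunction
        return score
    # Base case: the goal is already available as a `solve` meta atom.
    best = facts.get(goal, 0.0)
    # Rule 2: solve(A) :- clause(A,B), solve(B).
    for weight, body in clauses.get(goal, []):
        best = max(best, weight * solve(body, facts, clauses))
    return best


# Values transcribed from the diagram's example atoms.
facts = {"shape(obj1,cube)": 0.98, "shape(obj2,cube)": 0.98}
clauses = {
    "same_shape_pair(obj1,obj2)": [
        (0.95, ("shape(obj1,cube)", "shape(obj2,cube)")),
    ],
}
score = solve("same_shape_pair(obj1,obj2)", facts, clauses)
```

Under these assumptions `score` is 0.98 × 0.98 × 0.95 ≈ 0.912. The figure shows 0.98 for the same atom, so this scoring scheme should not be taken as the one the diagram uses.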

**Flow and Connections:**

* The `probabilistic atoms` from the **Object-Level Reasoning** block feed into the `Meta Converter` in the **Differentiable Meta-Level Reasoning** block, alongside the weighted `clauses`.

* The `Meta Program` block supplies the interpreter rules over `solve` and `clause`, the same predicates that the `Meta Converter` emits and the `Differentiable Forward Reasoner` evaluates.

* The overall data flow is: **Input Image** -> **Object-Centric Representation** -> **Probabilistic Atoms** (plus **Clauses**) -> **Meta Converter** -> **Meta Probabilistic Atoms** -> **Differentiable Forward Reasoner** -> **Inferred Meta Probabilistic Atoms**.
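The `Meta Converter` step in this flow can be sketched as a plain rewriting function: each probabilistic atom is wrapped in `solve(...)`, and each clause becomes a `clause(head, (body))` atom that keeps its weight. The function name `to_meta_atoms` and the tuple-based input format are hypothetical, and the sketch assumes the clause has already been grounded (variables replaced by `obj1`, `obj2`, `cube`), which the diagram does not detail.

```python
def to_meta_atoms(atoms, ground_clauses):
    """Rewrite weighted atoms and ground clauses as weighted meta atoms.

    atoms:          list of (weight, atom_string)
    ground_clauses: list of (weight, head_string, [body_atom_strings])
    """
    meta = []
    for weight, atom in atoms:
        # Object-level fact -> solve(fact), weight unchanged.
        meta.append((weight, f"solve({atom})"))
    for weight, head, body in ground_clauses:
        # Ground clause -> clause(head, (body1, body2, ...)), weight unchanged.
        body_str = ", ".join(body)
        meta.append((weight, f"clause({head}, ({body_str}))"))
    return meta


# Example atoms and clause transcribed from the diagram.
atoms = [(0.98, "shape(obj1,cube)"), (0.98, "shape(obj2,cube)")]
ground_clauses = [
    (0.95, "same_shape_pair(obj1,obj2)", ["shape(obj1,cube)", "shape(obj2,cube)"]),
]
meta_atoms = to_meta_atoms(atoms, ground_clauses)
for weight, atom in meta_atoms:
    print(f"{weight}:{atom}")
```

The printed lines have the same textual form as the `meta probabilistic atoms` in the top block of the diagram.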

### Detailed Analysis

**Text Transcription and Structure:**

* **Title:** `Differentiable Meta-Level Reasoning` (Top-left, white text on black background).

* **Object-Level Reasoning Title:** `Object-Level Reasoning` (Bottom-left, white text on black background).

* **Meta Program Title:** `Meta Program` (Bottom-right, white text on black background).

* **Clause Example:** `0.95:same_shape_pair(X,Y):- shape(X,Z),shape(Y,Z).` This is a probabilistic logic rule with a confidence of 0.95.

* **Meta Probabilistic Atoms (Input to Reasoner):**
  * `0.98:solve(shape(obj1,cube))`
  * `0.98:solve(shape(obj2,cube))`
  * `0.95:clause(same_shape_pair(obj1,obj2), (shape(obj1,cube), shape(obj2,cube)))`

* **Meta Probabilistic Atoms (Output of Reasoner):**
  * `0.98:solve(same_shape_pair(obj1,obj2))`

* **Probabilistic Atoms (from Object-Level):**
  * `0.98:color(obj1,cyan)`
  * `0.98:shape(obj1,cube)`
  * `0.98:color(obj2,red)`
  * `0.98:shape(obj2,cube)`

* **Meta Program Rules:** (As transcribed in the Components section above).
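To make the step from the reasoner's input atoms to its output atom concrete, here is a minimal forward-chaining sketch over the transcribed meta atoms. It is not the diagram's reasoner: the names `forward_step` and `infer`, the product used for conjunction, and `max` as the merge operator are assumptions. `max` is only piecewise differentiable; a smooth OR could be substituted in a gradient-based implementation.

```python
def forward_step(valuation):
    """One forward-chaining step over ground meta atoms (dict: atom -> value)."""
    new = dict(valuation)
    for key in valuation:
        if key[0] != "clause":
            continue
        _, head, body = key
        # Rule 1 (weight 1.0): solve((A,B)) :- solve(A), solve(B).
        conj = 1.0
        for atom in body:
            conj *= valuation.get(("solve", atom), 0.0)
        new[("solve", body)] = max(new.get(("solve", body), 0.0), conj)
        # Rule 2 (weight 1.0): solve(A) :- clause(A,B), solve(B).
        derived = valuation[key] * valuation.get(("solve", body), 0.0)
        new[("solve", head)] = max(new.get(("solve", head), 0.0), derived)
    return new


def infer(clause_weight, steps=2):
    """Run a fixed number of steps on the diagram's example atoms."""
    body = ("shape(obj1,cube)", "shape(obj2,cube)")
    head = "same_shape_pair(obj1,obj2)"
    valuation = {
        ("solve", "shape(obj1,cube)"): 0.98,
        ("solve", "shape(obj2,cube)"): 0.98,
        ("clause", head, body): clause_weight,
    }
    for _ in range(steps):
        valuation = forward_step(valuation)
    return valuation[("solve", head)]


value = infer(0.95)
# Finite-difference slope of the output with respect to the clause weight.
eps = 1e-6
slope = (infer(0.95 + eps) - infer(0.95 - eps)) / (2 * eps)
```

Two steps are needed because Rule 1 first derives the conjunction and Rule 2 then uses it. With these assumed operators `value` is about 0.912 rather than the 0.98 shown in the figure, and `slope` is about 0.9604, which illustrates that the conclusion varies smoothly with the clause weight.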

### Key Observations

1. **Hierarchical Abstraction:** The system separates low-level perception (Object-Level) from high-level logical reasoning (Meta-Level).

2. **Probabilistic Foundation:** Every atom, clause, and meta-program rule carries a weight (e.g., 0.98, 0.95, 1.0), which indicates a probabilistic logic framework.

3. **Differentiable Pipeline:** The presence of a "Differentiable Forward Reasoner" suggests the entire reasoning chain is designed to be trained end-to-end using gradient-based methods.

4. **Symbolic Grounding:** The `object-centric representation` bridges the gap between continuous visual data and discrete symbolic atoms (`obj1`, `cube`, `cyan`).

5. **Meta-Interpretation:** The `Meta Program` contains interpreters that define how logical deduction (`solve`) operates, with the "proof trees" version providing a more detailed trace of the reasoning process.
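The symbolic grounding step in observation 4 can be illustrated with a small conversion from per-object feature scores to probabilistic atoms. This is a sketch under stated assumptions: the function name `to_probabilistic_atoms`, the nested-dictionary layout, the pick-the-top-value rule, and every score other than 0.98 are hypothetical stand-ins for the grid shown in the diagram.

```python
def to_probabilistic_atoms(objects, threshold=0.5):
    """Emit one (probability, atom) pair per object attribute.

    Assumption: for each attribute, only the highest-scoring value is kept,
    and only if its score reaches `threshold`.
    """
    atoms = []
    for name, features in objects.items():
        for predicate, scores in features.items():
            value, prob = max(scores.items(), key=lambda kv: kv[1])
            if prob >= threshold:
                atoms.append((prob, f"{predicate}({name},{value})"))
    return atoms


# Hypothetical feature scores for the first two rows of the grid.
objects = {
    "obj1": {
        "color": {"red": 0.01, "cyan": 0.98, "yellow": 0.01},
        "shape": {"cube": 0.98, "cylinder": 0.02},
    },
    "obj2": {
        "color": {"red": 0.98, "cyan": 0.01, "yellow": 0.01},
        "shape": {"cube": 0.98, "cylinder": 0.02},
    },
}
atoms = to_probabilistic_atoms(objects)
```

With these inputs `atoms` contains the four example atoms listed for the Object-Level block, in the same order.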

### Interpretation

This diagram represents a neuro-symbolic AI architecture. Its purpose appears to be **explainable, logical reasoning over perceptual data** in a form compatible with gradient-based deep learning.

* **What it demonstrates:** The system can take an image of objects, identify their properties (color, shape) with high confidence, and then use logical rules to infer higher-order relationships (e.g., "these two objects have the same shape"). The "differentiable" aspect means the system can potentially learn these rules or the perception module from data.

* **Relationships:** The Object-Level module acts as a **perceptual front-end**, grounding symbols in sensory input. The Meta-Level module acts as a **logical reasoning engine**, manipulating these symbols according to a programmable `Meta Program`. The `Meta Converter` is the crucial translator that formats grounded facts into a structure suitable for meta-reasoning.

* **Significance:** This architecture addresses a key challenge in AI: combining the robust pattern recognition of neural networks with the explicit, interpretable reasoning of symbolic logic. Weighted atoms and a differentiable reasoner are intended to make the system trainable and tolerant of perceptual uncertainty. The output (`solve(same_shape_pair(obj1,obj2))`) is a **logical conclusion** derived from weighted facts and rules, and the `interpreter with proof trees` variant can additionally record the derivation that produced it.
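The traceability mentioned above corresponds to the `interpreter with proof trees` rules, which carry a proof term as a second argument. A minimal Python reading of those two rules, for ground atoms only, might look as follows. The function name `solve_with_proof`, the product and best-derivation scoring, and the choice to use a fact itself as its own proof are assumptions; the diagram shows only the two rules.

```python
def solve_with_proof(goal, facts, clauses):
    """Return (score, proof) for `goal`, or (0.0, None) if it is not derivable."""
    # Rule 1: solve((A,B),(proofA,proofB)) :- solve(A,proofA), solve(B,proofB).
    if isinstance(goal, tuple):
        score, proofs = 1.0, []
        for sub in goal:
            sub_score, sub_proof = solve_with_proof(sub, facts, clauses)
            if sub_proof is None:
                return 0.0, None
            score *= sub_score
            proofs.append(sub_proof)
        return score, tuple(proofs)
    # Assumed base case: a known fact serves as its own proof.
    best = (facts[goal], goal) if goal in facts else (0.0, None)
    # Rule 2: solve(A,(A:-proofB)) :- clause(A,B), solve(B,proofB).
    for weight, body in clauses.get(goal, []):
        body_score, body_proof = solve_with_proof(body, facts, clauses)
        if body_proof is not None and weight * body_score > best[0]:
            best = (weight * body_score, (goal, ":-", body_proof))
    return best


# Values transcribed from the diagram's example atoms.
facts = {"shape(obj1,cube)": 0.98, "shape(obj2,cube)": 0.98}
clauses = {
    "same_shape_pair(obj1,obj2)": [
        (0.95, ("shape(obj1,cube)", "shape(obj2,cube)")),
    ],
}
score, proof = solve_with_proof("same_shape_pair(obj1,obj2)", facts, clauses)
```

Here `proof` is the nested tuple `("same_shape_pair(obj1,obj2)", ":-", ("shape(obj1,cube)", "shape(obj2,cube)"))`, mirroring the `(A:-proofB)` term in Rule 2.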