## Flowchart: Differentiable Meta-Level Reasoning System

### Overview

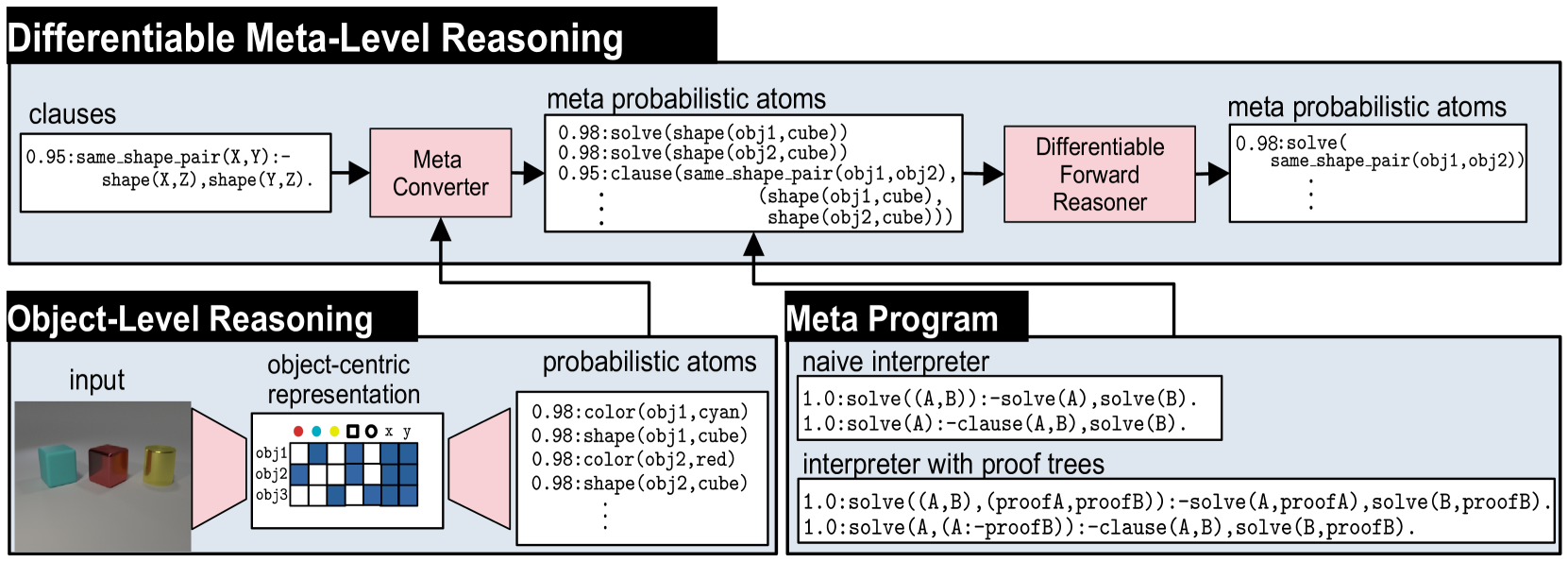

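The diagram illustrates a framework for differentiable meta-level reasoning that combines object-level perception with probabilistic logical reasoning. It has three regions: an object-level pipeline (bottom-left) that turns an image into probabilistic atoms, a meta-level pipeline (top) that converts clauses into meta probabilistic atoms and refines them with a differentiable forward reasoner, and a meta program (bottom-right) that lists the interpreter rules. The object-level and meta-level pipelines share the "meta probabilistic atoms" component.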

### Components

**Top Section (Differentiable Meta-Level Reasoning):**

1. **Input:** Clauses (e.g., `same_shape_pair(X,Y)` with 0.95 probability)

2. **Meta Converter:** Transforms clauses into meta probabilistic atoms

3. **Differentiable Forward Reasoner:** Processes meta probabilistic atoms iteratively

4. **Output:** Refined meta probabilistic atoms (e.g., `solve(shape(obj1,cube))` with 0.98 probability)
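
The conversion step above can be sketched in a few lines of Python. This is only an illustration of the idea, not the system's actual converter: the function name `to_meta_atoms`, the string encoding of atoms, and the body of the `same_shape_pair(X,Y)` clause are assumptions, since the diagram shows only the clause head and its 0.95 weight.

```python
def to_meta_atoms(prob_atoms, weighted_clauses):
    """Wrap object-level atoms and weighted clauses as meta-level atoms.

    Assumed encoding (hypothetical): an atom `a` with probability p becomes
    `solve(a)` with probability p, and a clause with weight w becomes
    `clause(head, body)` with probability w.
    """
    meta_atoms = {}
    for atom, prob in prob_atoms.items():
        meta_atoms[f"solve({atom})"] = prob
    for head, body, weight in weighted_clauses:
        body_term = ",".join(body)
        if len(body) > 1:
            # Parenthesise conjunctions so the body stays a single argument.
            body_term = f"({body_term})"
        meta_atoms[f"clause({head},{body_term})"] = weight
    return meta_atoms


# Probabilities taken from the diagram; the clause body is an assumed example.
prob_atoms = {"shape(obj1,cube)": 0.98, "shape(obj2,cube)": 0.98}
weighted_clauses = [("same_shape_pair(X,Y)", ["shape(X,Z)", "shape(Y,Z)"], 0.95)]

meta_atoms = to_meta_atoms(prob_atoms, weighted_clauses)
for atom, prob in meta_atoms.items():
    print(prob, atom)
```

Keeping clauses and facts in one dictionary of weighted atoms mirrors the diagram, where both end up as meta probabilistic atoms consumed by the same reasoner.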

**Bottom-Left Section (Object-Level Reasoning):**

1. **Input:** Image of three objects (cyan cube, red cube, yellow cylinder)

2. **Object-Centric Representation:**

- obj1: cyan cube

- obj2: red cube

- obj3: yellow cylinder

3. **Probabilistic Atoms:**

- `color(obj1,cyan)`: 0.98

- `shape(obj1,cube)`: 0.98

- `color(obj2,red)`: 0.98

- `shape(obj2,cube)`: 0.98

- `color(obj3,yellow)`: 0.98

- `shape(obj3,cylinder)`: 0.98
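
The step from the object-centric representation to probabilistic atoms can be sketched as follows. This is a minimal illustration rather than the system's perception code: the `objects` dictionary, the function name `to_prob_atoms`, and every probability other than the 0.98 values shown in the diagram are assumptions.

```python
# Hypothetical per-object attribute distributions, as a perception module
# might output them. Only the 0.98 values come from the diagram.
objects = {
    "obj1": {"color": {"cyan": 0.98, "red": 0.01, "yellow": 0.01},
             "shape": {"cube": 0.98, "cylinder": 0.02}},
    "obj2": {"color": {"cyan": 0.01, "red": 0.98, "yellow": 0.01},
             "shape": {"cube": 0.98, "cylinder": 0.02}},
    "obj3": {"color": {"cyan": 0.01, "red": 0.01, "yellow": 0.98},
             "shape": {"cube": 0.02, "cylinder": 0.98}},
}


def to_prob_atoms(objects):
    """Map every (attribute, object, value) triple to an atom string and its probability."""
    atoms = {}
    for obj, attributes in objects.items():
        for attr, distribution in attributes.items():
            for value, prob in distribution.items():
                atoms[f"{attr}({obj},{value})"] = prob
    return atoms


prob_atoms = to_prob_atoms(objects)
print(prob_atoms["color(obj1,cyan)"], prob_atoms["shape(obj3,cylinder)"])
```

The diagram lists only the most likely atom per attribute; the sketch keeps the low-probability alternatives as well, which is one possible design choice.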

**Bottom-Right Section (Meta Program):**

1. **Naive Interpreter Rules:**

- `solve(A,B) :- solve(A), solve(B)`

- `solve(A) :- clause(A,B), solve(B)`

2. **Interpreter with Proof Trees:**

- `solve(A,B,proofA,proofB) :- solve(A,proofA), solve(B,proofB)`

- `solve(A,proofA) :- clause(A,B), solve(B,proofB)`
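
To make the difference between the two interpreters concrete, here is a small Python sketch of a proof-tree interpreter. It is a simplification under stated assumptions: it handles ground atoms only (no variables or unification), does not guard against cyclic rules, and the `FACTS` and `RULES` tables are hypothetical examples rather than content from the diagram.

```python
# Hypothetical ground program: facts are atoms taken as true, and each rule
# head maps to a list of alternative bodies (each body is a conjunction).
FACTS = {"shape(obj1,cube)", "shape(obj2,cube)"}
RULES = {
    "same_shape_pair(obj1,obj2)": [["shape(obj1,cube)", "shape(obj2,cube)"]],
}


def solve(goal):
    """Return a proof tree for `goal`, or None if it cannot be proven.

    A proof tree is (goal, "fact") for a fact, or (goal, [subproofs])
    when the goal was derived through a rule.
    """
    if goal in FACTS:
        return (goal, "fact")
    for body in RULES.get(goal, []):
        # Prove every conjunct of the body, as in solve(A), solve(B).
        subproofs = [solve(subgoal) for subgoal in body]
        if all(proof is not None for proof in subproofs):
            return (goal, subproofs)
    return None


proof = solve("same_shape_pair(obj1,obj2)")
print(proof)
```

Dropping the returned tree and keeping only a true/false result gives the naive interpreter; the extra return value plays the role of the proof arguments in the second rule set.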

### Detailed Analysis

**Top Section Flow:**

- Clauses (0.95 confidence) → Meta Converter → Meta Probabilistic Atoms (0.98 confidence) → Differentiable Forward Reasoner → Refined Meta Probabilistic Atoms

**Object-Level Reasoning:**

- Image input → Object-Centric Representation (3 objects with color/shape attributes) → Probabilistic Atoms (0.98 confidence for each attribute)

**Meta Program Logic:**

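- Naive Interpreter: Proves a conjunction by proving each conjunct, and proves an atom by finding a clause whose head matches it and proving that clause's body

- Proof Tree Interpreter: The same rules with extra arguments that carry the proof of each goal, so every derivation can be traced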

### Key Observations

1. **Probabilistic Confidence:** All example probabilities are high: 0.95 for the clause and 0.98 for the color, shape, and `solve` atoms

2. **Differentiable Reasoning:** The forward reasoner operates on probabilistic atoms while maintaining differentiability

3. **Hierarchical Structure:** Object-level data feeds into meta-level reasoning through probabilistic representations

4. **Logical Inference:** The meta program is shown in two variants: a naive interpreter and an interpreter that also builds proof trees
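
The second observation can be illustrated with a short forward-reasoning sketch in plain Python. It is not the reasoner from the diagram: conjunction is modelled with a product, disjunction with a noisy-or (probabilistic sum), and the clause weight is multiplied into the body value. All three choices, along with the names `noisy_or` and `forward_reason` and the ground rule used below, are assumptions made for illustration. Because the update uses only multiplication and subtraction, the same arithmetic would stay differentiable if run on arrays from an automatic differentiation library.

```python
from math import prod


def noisy_or(a, b):
    """Smooth disjunction of two probabilities; stays within [0, 1]."""
    return 1.0 - (1.0 - a) * (1.0 - b)


def forward_reason(initial, weighted_rules, steps=2):
    """Apply weighted ground rules for a fixed number of steps.

    `initial` maps atoms to probabilities; each rule is (head, body, weight).
    """
    valuation = dict(initial)
    for _ in range(steps):
        derived = {}
        for head, body, weight in weighted_rules:
            # Soft conjunction of the body atoms, scaled by the clause weight.
            body_value = weight * prod(valuation.get(atom, 0.0) for atom in body)
            derived[head] = noisy_or(derived.get(head, 0.0), body_value)
        # Combine newly derived values with the initial facts on every step,
        # so repeated steps do not inflate already-derived atoms.
        valuation = {
            atom: noisy_or(initial.get(atom, 0.0), derived.get(atom, 0.0))
            for atom in set(initial) | set(derived)
        }
    return valuation


initial = {"shape(obj1,cube)": 0.98, "shape(obj2,cube)": 0.98}
rules = [("same_shape_pair(obj1,obj2)",
          ["shape(obj1,cube)", "shape(obj2,cube)"], 0.95)]

result = forward_reason(initial, rules)
print(result["same_shape_pair(obj1,obj2)"])
```

The derived probability is lower than that of either input atom, which shows how uncertainty in the facts and in the clause compounds through the reasoning step.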

### Interpretation

This system implements a neuro-symbolic architecture where:

1. **Perception Layer:** Converts visual input into probabilistic object representations

2. **Reasoning Layer:** Uses differentiable probabilistic logic to refine object relationships

3. **Meta-Programming:** Encodes the inference procedure itself as `solve` rules over meta probabilistic atoms, in a naive variant and a proof-tree variant

The example values (0.95 for the clause, 0.98 for the atoms) show that facts and clauses carry probabilities rather than hard truth values, so uncertainty can propagate through the differentiable operations. The proof tree extension indicates support for explainable AI through traceable logical deductions.

The architecture enables end-to-end differentiable training of both perception and reasoning components, potentially allowing learning of both visual features and logical rules simultaneously.