## Bar Charts: Model Performance Comparison

### Overview

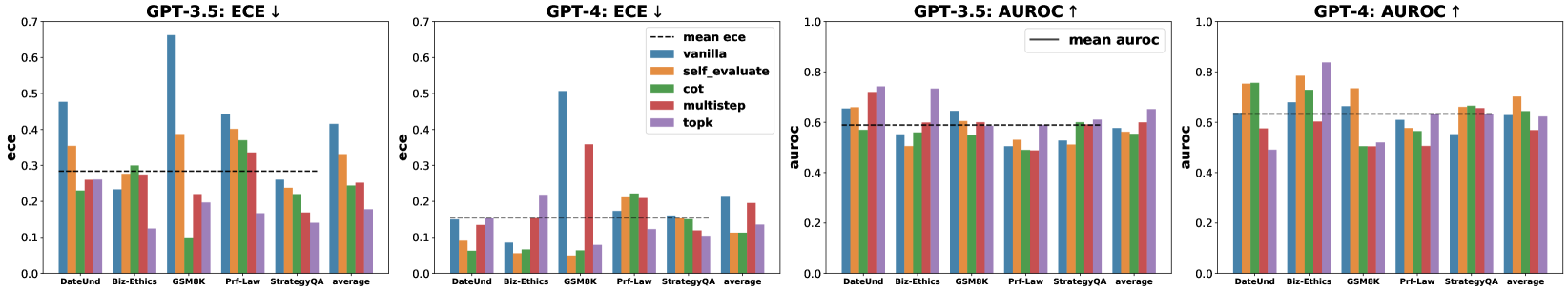

The image contains four bar charts comparing GPT-3.5 and GPT-4 across several tasks. The panels sit side by side in a single row: the left two display Expected Calibration Error (ECE, lower is better) and the right two display the Area Under the Receiver Operating Characteristic curve (AUROC, higher is better). Each chart compares five prompting strategies: vanilla, self_evaluate, cot (chain-of-thought), multistep, and topk. The x-axis lists five tasks (DateUnd, Biz-Ethics, GSM8K, Prf-Law, StrategyQA) plus their average.
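ECE is typically computed by grouping answers into bins by stated confidence, then averaging the gap between each bin's accuracy and its mean confidence, weighted by bin size. The sketch below assumes 10 equal-width bins and a binary correct/incorrect label per answer; the figure does not say which binning was used, and `expected_calibration_error` is a hypothetical helper name.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,
) -> float:
    """Equal-width binned ECE: size-weighted mean of |accuracy - confidence| per bin."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need equally sized, non-empty inputs")
    n = len(confidences)
    bins: list[list[int]] = [[] for _ in range(n_bins)]
    for i, c in enumerate(confidences):
        if not 0.0 <= c <= 1.0:
            raise ValueError("confidences must lie in [0, 1]")
        # Bin index in [0, n_bins - 1]; a confidence of exactly 1.0 goes to the last bin.
        idx = min(int(c * n_bins), n_bins - 1)
        bins[idx].append(i)
    ece = 0.0
    for members in bins:
        if not members:
            continue  # empty bins contribute nothing
        acc = sum(1 for i in members if correct[i]) / len(members)
        conf = sum(confidences[i] for i in members) / len(members)
        ece += len(members) / n * abs(acc - conf)
    return ece
```

For example, a model that always reports 0.9 confidence but is right on two of four answers gets an ECE of about 0.4, while one that reports 1.0 and is always right gets 0.0.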

### Components/Axes

* **Titles:**
  * Top-left: GPT-3.5: ECE ↓
  * Top-middle-left: GPT-4: ECE ↓
  * Top-middle-right: GPT-3.5: AUROC ↑
  * Top-right: GPT-4: AUROC ↑

* **Y-axes:**
  * Left two charts: "ece", scale from 0.0 to 0.7
  * Right two charts: "auroc", scale from 0.0 to 1.0

* **X-axes:**
  * All charts: categories DateUnd, Biz-Ethics, GSM8K, Prf-Law, StrategyQA, average

* **Legends:** (Located in the top-middle-left chart, applies to all charts)
  * `mean ece` (black dashed line in the ECE charts) or `mean auroc` (black solid line in the AUROC charts)
  * `vanilla` (blue)
  * `self_evaluate` (orange)
  * `cot` (green)
  * `multistep` (red)
  * `topk` (purple)

### Detailed Analysis

**Chart 1: GPT-3.5: ECE ↓**

* Trend: vanilla has the highest ECE on every task, peaking at ~0.65 on GSM8K; topk has the lowest average (~0.22).

* DateUnd: vanilla ~0.47, self_evaluate ~0.27, cot ~0.24, multistep ~0.26, topk ~0.25

* Biz-Ethics: vanilla ~0.30, self_evaluate ~0.28, cot ~0.29, multistep ~0.24, topk ~0.12

* GSM8K: vanilla ~0.65, self_evaluate ~0.38, cot ~0.10, multistep ~0.43, topk ~0.20

* Prf-Law: vanilla ~0.43, self_evaluate ~0.28, cot ~0.35, multistep ~0.27, topk ~0.40

* StrategyQA: vanilla ~0.35, self_evaluate ~0.30, cot ~0.25, multistep ~0.22, topk ~0.15

* average: vanilla ~0.42, self_evaluate ~0.30, cot ~0.25, multistep ~0.28, topk ~0.22

* Mean ECE (black dashed line): ~0.29
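The `average` bars and the dashed mean line can be cross-checked against the per-task readings above. This sketch assumes the average is an unweighted mean over the five tasks and that the dashed line is the mean of the five strategy averages; the figure states neither.

```python
from statistics import mean

# Approximate per-task ECE readings for GPT-3.5, copied from the list above
# (order: DateUnd, Biz-Ethics, GSM8K, Prf-Law, StrategyQA).
GPT35_ECE = {
    "vanilla": [0.47, 0.30, 0.65, 0.43, 0.35],
    "self_evaluate": [0.27, 0.28, 0.38, 0.28, 0.30],
    "cot": [0.24, 0.29, 0.10, 0.35, 0.25],
    "multistep": [0.26, 0.24, 0.43, 0.27, 0.22],
    "topk": [0.25, 0.12, 0.20, 0.40, 0.15],
}

# Assumption: the "average" bar is the unweighted mean over the five tasks.
task_means = {name: mean(vals) for name, vals in GPT35_ECE.items()}
# Assumption: the dashed "mean ece" line is the mean of the five strategy averages.
overall = mean(list(task_means.values()))

for name, m in task_means.items():
    print(f"{name:>13}: {m:.3f}")
print(f"    mean line: {overall:.3f}")
```

The recomputed averages are 0.440 (vanilla), 0.302 (self_evaluate), 0.246 (cot), 0.284 (multistep), and 0.224 (topk), with a mean of about 0.299. All but vanilla match the bar readings to within 0.01, as does the mean line; the vanilla gap (0.44 vs ~0.42) is plausibly chart-reading error.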

**Chart 2: GPT-4: ECE ↓**

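* Trend: ECE is lower than for GPT-3.5 on most task/strategy pairs, indicating better calibration. Biz-Ethics is the exception: vanilla (~0.40), self_evaluate (~0.35), multistep (~0.25), and topk (~0.20) all exceed their GPT-3.5 values.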

* DateUnd: vanilla ~0.15, self_evaluate ~0.09, cot ~0.07, multistep ~0.14, topk ~0.14

* Biz-Ethics: vanilla ~0.40, self_evaluate ~0.35, cot ~0.25, multistep ~0.25, topk ~0.20

* GSM8K: vanilla ~0.15, self_evaluate ~0.15, cot ~0.10, multistep ~0.22, topk ~0.05

* Prf-Law: vanilla ~0.18, self_evaluate ~0.16, cot ~0.17, multistep ~0.15, topk ~0.12

* StrategyQA: vanilla ~0.15, self_evaluate ~0.15, cot ~0.15, multistep ~0.15, topk ~0.10

* average: vanilla ~0.20, self_evaluate ~0.18, cot ~0.15, multistep ~0.18, topk ~0.12

* Mean ECE (black dashed line): ~0.15

**Chart 3: GPT-3.5: AUROC ↑**

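* Trend: most values fall between ~0.50 and ~0.62; the exceptions are topk on DateUnd (~0.73) and Prf-Law (~0.70) and multistep on DateUnd (~0.72). topk has the highest average (~0.64).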

* DateUnd: vanilla ~0.62, self_evaluate ~0.61, cot ~0.58, multistep ~0.72, topk ~0.73

* Biz-Ethics: vanilla ~0.60, self_evaluate ~0.60, cot ~0.57, multistep ~0.59, topk ~0.59

* GSM8K: vanilla ~0.52, self_evaluate ~0.50, cot ~0.53, multistep ~0.51, topk ~0.60

* Prf-Law: vanilla ~0.60, self_evaluate ~0.59, cot ~0.58, multistep ~0.59, topk ~0.70

* StrategyQA: vanilla ~0.60, self_evaluate ~0.59, cot ~0.57, multistep ~0.59, topk ~0.60

* average: vanilla ~0.58, self_evaluate ~0.57, cot ~0.57, multistep ~0.58, topk ~0.64

* Mean AUROC (black solid line): ~0.59

**Chart 4: GPT-4: AUROC ↑**

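* Trend: average AUROC is higher than for GPT-3.5 under vanilla, self_evaluate, and cot (~0.65 to 0.66, versus ~0.57 to 0.58), indicating better discrimination; multistep (~0.58) and topk (~0.64) average about the same as for GPT-3.5. Every strategy sits at ~0.50 on GSM8K.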

* DateUnd: vanilla ~0.72, self_evaluate ~0.73, cot ~0.72, multistep ~0.56, topk ~0.78

* Biz-Ethics: vanilla ~0.78, self_evaluate ~0.79, cot ~0.75, multistep ~0.57, topk ~0.65

* GSM8K: vanilla ~0.50, self_evaluate ~0.50, cot ~0.50, multistep ~0.50, topk ~0.50

* Prf-Law: vanilla ~0.65, self_evaluate ~0.64, cot ~0.63, multistep ~0.63, topk ~0.63

* StrategyQA: vanilla ~0.65, self_evaluate ~0.64, cot ~0.63, multistep ~0.63, topk ~0.63

* average: vanilla ~0.66, self_evaluate ~0.66, cot ~0.65, multistep ~0.58, topk ~0.64

* Mean AUROC (black solid line): ~0.63
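Whether GPT-4 improves AUROC can be checked strategy by strategy from the `average` bars of the two AUROC panels. The 0.01 tolerance is an assumed allowance for chart-reading error, not something the figure specifies.

```python
# "average" AUROC bars read off the two AUROC panels (approximate).
AVG_AUROC = {
    #                GPT-3.5, GPT-4
    "vanilla":       (0.58, 0.66),
    "self_evaluate": (0.57, 0.66),
    "cot":           (0.57, 0.65),
    "multistep":     (0.58, 0.58),
    "topk":          (0.64, 0.64),
}

# Assumed reading tolerance: smaller differences are treated as "about equal".
TOLERANCE = 0.01

verdicts = {}
for strategy, (gpt35, gpt4) in AVG_AUROC.items():
    delta = gpt4 - gpt35
    if delta > TOLERANCE:
        verdicts[strategy] = "GPT-4 higher"
    elif delta < -TOLERANCE:
        verdicts[strategy] = "GPT-3.5 higher"
    else:
        verdicts[strategy] = "about equal"
    print(f"{strategy:>13}: {delta:+.2f} ({verdicts[strategy]})")
```

With these readings, GPT-4's average is higher for vanilla (+0.08), self_evaluate (+0.09), and cot (+0.08), and about equal for multistep and topk.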

### Key Observations

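* GPT-4 is better calibrated than GPT-3.5 on average (mean ECE ~0.15 vs ~0.29) and discriminates better (mean AUROC ~0.63 vs ~0.59). The AUROC gain comes from vanilla, self_evaluate, and cot; multistep and topk average about the same for both models.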

* For GPT-4, vanilla prompting matches self_evaluate and slightly exceeds cot on average AUROC (~0.66 vs ~0.66 and ~0.65), but it has the highest average ECE (~0.20) of the five strategies.

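* topk has the lowest average ECE for both models (~0.22 for GPT-3.5, ~0.12 for GPT-4) and the highest average AUROC for GPT-3.5 (~0.64); for GPT-4, its average AUROC (~0.64) trails vanilla and self_evaluate.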

* GSM8K has the lowest AUROC of any task for every strategy except GPT-3.5 topk (~0.60). For GPT-4, every strategy scores ~0.50 on GSM8K, which is chance level.

* The largest ECE improvement from GPT-3.5 to GPT-4 is on GSM8K with vanilla prompting (~0.65 to ~0.15).
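How general is GPT-4's ECE advantage? The sketch below compares the two ECE panels task by task and strategy by strategy, using the approximate per-task readings from the Detailed Analysis; two identical readings count as a tie.

```python
TASKS = ["DateUnd", "Biz-Ethics", "GSM8K", "Prf-Law", "StrategyQA"]

# Approximate per-task ECE readings from the two ECE panels, in TASKS order.
ECE = {
    "GPT-3.5": {
        "vanilla": [0.47, 0.30, 0.65, 0.43, 0.35],
        "self_evaluate": [0.27, 0.28, 0.38, 0.28, 0.30],
        "cot": [0.24, 0.29, 0.10, 0.35, 0.25],
        "multistep": [0.26, 0.24, 0.43, 0.27, 0.22],
        "topk": [0.25, 0.12, 0.20, 0.40, 0.15],
    },
    "GPT-4": {
        "vanilla": [0.15, 0.40, 0.15, 0.18, 0.15],
        "self_evaluate": [0.09, 0.35, 0.15, 0.16, 0.15],
        "cot": [0.07, 0.25, 0.10, 0.17, 0.15],
        "multistep": [0.14, 0.25, 0.22, 0.15, 0.15],
        "topk": [0.14, 0.20, 0.05, 0.12, 0.10],
    },
}

lower, tied, higher = [], [], []
for strategy, gpt35_values in ECE["GPT-3.5"].items():
    gpt4_values = ECE["GPT-4"][strategy]
    for task, old, new in zip(TASKS, gpt35_values, gpt4_values):
        # Lower ECE is better, so landing in `lower` means GPT-4 is better calibrated.
        if new < old:
            lower.append((task, strategy))
        elif new > old:
            higher.append((task, strategy))
        else:
            tied.append((task, strategy))

print(f"GPT-4 lower: {len(lower)}, tied: {len(tied)}, higher: {len(higher)}")
print("GPT-4 higher on:", higher)
```

With these readings, GPT-4 has lower ECE in 20 of the 25 pairs, ties once (GSM8K with cot, ~0.10 each), and is higher in 4, all of them on Biz-Ethics.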

### Interpretation

The data suggest that GPT-4's confidence estimates are more reliable than GPT-3.5's across the tasks evaluated. Its lower ECE means its stated confidence is better aligned with its actual accuracy. Its higher AUROC means its confidence scores better separate the two classes being ranked; in a calibration study like this one, those are most plausibly correct versus incorrect answers, although the figure itself does not say so. AUROC measures this ranking ability, not accuracy.
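The figure does not say how its AUROC is computed. Assuming the common failure-prediction setup, where each answer's stated confidence is the score and "answer is correct" is the positive class, AUROC equals the probability that a randomly chosen correct answer receives higher confidence than a randomly chosen incorrect one, counting ties as half (the normalized Mann-Whitney U statistic). A direct, quadratic-time sketch, with `failure_prediction_auroc` as a hypothetical name:

```python
from typing import Sequence


def failure_prediction_auroc(confidences: Sequence[float], correct: Sequence[bool]) -> float:
    """Probability that a random correct answer outranks a random incorrect one
    by confidence, with ties counted as half a win."""
    pos = [c for c, ok in zip(confidences, correct) if ok]
    neg = [c for c, ok in zip(confidences, correct) if not ok]
    if not pos or not neg:
        raise ValueError("need at least one correct and one incorrect answer")
    wins = 0.0
    # Compare every (correct, incorrect) pair: O(len(pos) * len(neg)).
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))
```

For confidences `[0.9, 0.3, 0.8, 0.2]` on answers that are correct, correct, incorrect, incorrect, three of the four correct/incorrect pairs are ordered correctly, giving 0.75. For large evaluations, a sort-based implementation such as scikit-learn's `roc_auc_score` is the practical choice.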

The effectiveness of prompting strategies depends on both the model and the metric. Although strategies like self_evaluate and cot might be expected to help, for GPT-4 vanilla prompting matches them on AUROC while giving the highest average ECE. topk is the most consistent: it has the lowest average ECE for both models and the highest average AUROC for GPT-3.5. This could indicate that GPT-4 benefits less from elaborate prompting, at least for AUROC, but the figure alone cannot establish why.

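GSM8K's consistently low AUROC (chance level, ~0.50, for every GPT-4 strategy) should be read with care. AUROC measures how well confidence scores rank one class above the other (here, most plausibly, correct above incorrect answers), not how many answers are correct, so a low value does not by itself show that the task is hard. It may instead mean that the models' confidence barely varies across GSM8K questions, since a constant confidence score yields an AUROC of exactly 0.5; the nature of the task or the way its data are structured could also play a role.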
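Because AUROC scores ranking rather than accuracy, a chance-level AUROC is compatible with a model that is usually right. The hypothetical numbers below (ten answers, nine correct, a constant confidence of 0.9; none of it taken from the figure) give perfect calibration and an AUROC of exactly 0.5:

```python
# Hypothetical model: 10 answers, 9 correct, always reports 0.9 confidence.
confidences = [0.9] * 10
correct = [True] * 9 + [False]

accuracy = sum(correct) / len(correct)
# With a single distinct confidence value, any binning puts every answer in one
# bin, so ECE reduces to |accuracy - confidence|.
ece = abs(accuracy - confidences[0])

# Pairwise AUROC: every (correct, incorrect) pair is tied and counts as half a win.
pos = [c for c, ok in zip(confidences, correct) if ok]
neg = [c for c, ok in zip(confidences, correct) if not ok]
wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
auroc = wins / (len(pos) * len(neg))

print(f"accuracy={accuracy:.2f}  ece={ece:.2f}  auroc={auroc:.2f}")
```

It prints `accuracy=0.90  ece=0.00  auroc=0.50`: a well-calibrated, mostly correct model whose confidence carries no ranking information.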