TECHNICAL ASSET FINGERPRINT

5ae7acb399fdc3a7fcd55e37

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

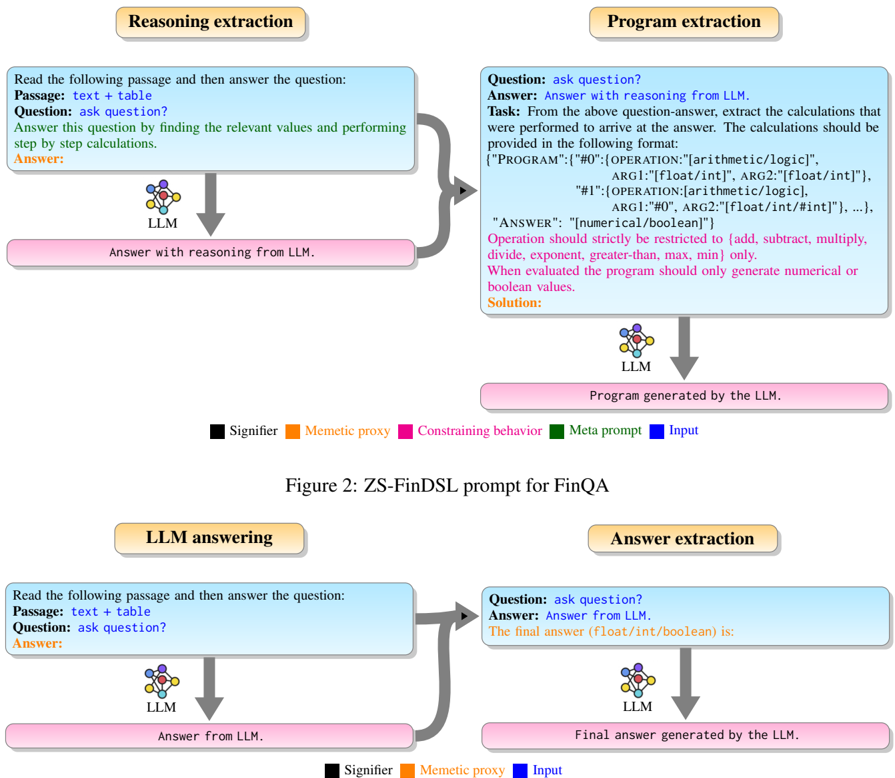

## Diagram: ZS-FinDSL prompt for FinQA

### Overview

The image is a diagram of the ZS-FinDSL prompt for FinQA. It shows four stages: Reasoning extraction, Program extraction, LLM answering, and Answer extraction. Each stage sends a prompt to a Large Language Model (LLM) and receives a response. A color legend labels five categories of prompt component: signifier, memetic proxy, constraining behavior, meta prompt, and input.

### Components/Axes

* **Titles:**

* Reasoning extraction (top-left)

* Program extraction (top-right)

* LLM answering (bottom-left)

* Answer extraction (bottom-right)

* **Elements:**

* **Input Box (Light Blue):** Contains the question-answering prompt.

* Passage: text + table

* Question: ask question?

* Answer:

* **LLM Icon:** Represents the Large Language Model.

* **Output Box (Pink):** Contains the LLM's response.

* **Arrows:** Indicate the flow of information.

* **Legend (Bottom Center):**

* Black square: Signifier

* Orange square: Memetic proxy

* Pink square: Constraining behavior

* Green square: Meta prompt

* Blue square: Input

* **Figure Caption:** Figure 2: ZS-FinDSL prompt for FinQA

### Detailed Analysis

**1. Reasoning Extraction (Top-Left)**

* **Input:**

* "Read the following passage and then answer the question:"

* "Passage: text + table"

* "Question: ask question?"

* "Answer this question by finding the relevant values and performing step by step calculations."

* "Answer:"

* **Process:** The input is fed into an LLM.

* **Output:** "Answer with reasoning from LLM."
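The reasoning-extraction prompt quoted above can be assembled from a passage and a question. The following is a minimal sketch: the function name `build_reasoning_prompt`, the newline separator, and the sample passage are assumptions for illustration, since the figure only shows the template text.

```python
def build_reasoning_prompt(passage: str, question: str) -> str:
    """Assemble the reasoning-extraction prompt from the lines quoted above."""
    return "\n".join([
        "Read the following passage and then answer the question:",
        f"Passage: {passage}",
        f"Question: {question}",
        # Instruction that asks the model to show its calculations.
        "Answer this question by finding the relevant values and "
        "performing step by step calculations.",
        # Trailing cue that the model is expected to continue.
        "Answer:",
    ])


# Hypothetical passage and question, for illustration only.
prompt = build_reasoning_prompt(
    passage="Revenue was 120 in 2020 and 100 in 2019.",
    question="What was the percentage change in revenue?",
)
print(prompt)
```

Dropping the "step by step calculations" line from the list gives the shorter template used in the LLM answering stage.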

**2. Program Extraction (Top-Right)**

* **Input:**

* "Question: ask question?"

* "Answer: Answer with reasoning from LLM."

* "Task: From the above question-answer, extract the calculations that were performed to arrive at the answer. The calculations should be provided in the following format:"

* `{"PROGRAM": {"#0":{OPERATION:"[arithmetic/logic]", ARG1:"[float/int]", ARG2:"[float/int]"}, "#1":{OPERATION: [arithmetic/logic], ARG1:"#0", ARG2:"[float/int/#int]"}, ...}, "ANSWER": "[numerical/boolean]"}`

* "Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only."

* "When evaluated the program should only generate numerical or boolean values."

* "Solution:"

* **Process:** The input is fed into an LLM.

* **Output:** "Program generated by the LLM."
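Because the extracted program is restricted to eight operations and to arguments that are either numbers or `#k` references to earlier steps, it can be evaluated with a few lines of code. The sketch below is an assumption about how such an evaluator might look, not the authors' implementation: it expects the `PROGRAM` part as a Python dict with quoted keys, and the example program is invented.

```python
import operator

# The eight operations named in the prompt's restriction.
OPERATIONS = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
    "exponent": operator.pow,
    "greater-than": operator.gt,
    "max": max,
    "min": min,
}


def resolve(arg, results):
    """Look up '#k' references in earlier results; treat anything else as a number."""
    if isinstance(arg, str) and arg.startswith("#"):
        return results[arg]
    return float(arg)


def evaluate_program(program: dict):
    """Run steps '#0', '#1', ... in order and return the last step's result."""
    results = {}
    for index in range(len(program)):
        key = f"#{index}"
        step = program[key]
        func = OPERATIONS[step["OPERATION"]]  # KeyError on a disallowed operation
        results[key] = func(resolve(step["ARG1"], results),
                            resolve(step["ARG2"], results))
    return results[f"#{len(program) - 1}"]


# Invented example: (120 - 100) / 100.
example = {
    "#0": {"OPERATION": "subtract", "ARG1": "120", "ARG2": "100"},
    "#1": {"OPERATION": "divide", "ARG1": "#0", "ARG2": "100"},
}
result = evaluate_program(example)
print(result)
```

An empty program raises `KeyError` in this sketch; a production evaluator would likely want explicit error handling.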

**3. LLM Answering (Bottom-Left)**

* **Input:**

* "Read the following passage and then answer the question:"

* "Passage: text + table"

* "Question: ask question?"

* "Answer:"

* **Process:** The input is fed into an LLM.

* **Output:** "Answer from LLM."

**4. Answer Extraction (Bottom-Right)**

* **Input:**

* "Question: ask question?"

* "Answer: Answer from LLM."

* "The final answer (float/int/boolean) is:"

* **Process:** The input is fed into an LLM.

* **Output:** "Final answer generated by the LLM."
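The final cue asks for a float, int, or boolean, so the reply from this stage can be converted to a typed value. A minimal sketch follows; the function name `parse_final_answer`, the treatment of "yes"/"no" as booleans, and the stripping of thousands separators are all assumptions rather than details from the figure.

```python
import re
from typing import Union


def parse_final_answer(text: str) -> Union[bool, int, float]:
    """Convert the answer-extraction reply to a bool, int, or float."""
    cleaned = text.strip().rstrip(".").strip().lower()
    if cleaned in {"true", "yes"}:
        return True
    if cleaned in {"false", "no"}:
        return False
    # First signed number in the reply, ignoring thousands separators.
    match = re.search(r"-?\d+(?:\.\d+)?", cleaned.replace(",", ""))
    if match is None:
        raise ValueError(f"no numeric or boolean answer in {text!r}")
    token = match.group()
    return float(token) if "." in token else int(token)
```

For example, `parse_final_answer(" 1,250 ")` yields the integer 1250 and `parse_final_answer("True.")` yields `True`.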

### Key Observations

* Each process starts with a question-answering prompt.

* The "Program extraction" process has a specific task to extract calculations and provide them in a structured format.

* The LLM is used in all four processes.

* The diagram uses color-coding to differentiate between different elements.

### Interpretation

The diagram illustrates the ZS-FinDSL prompt for FinQA as a multi-stage process: reasoning extraction, program extraction, LLM answering, and answer extraction. The "Program extraction" stage is particularly important because it recovers, in a structured form, the calculations the LLM performed to reach its answer, which makes the result easier to inspect and explain. Arrows show the flow of information between stages, and color-coding distinguishes the types of prompt component.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

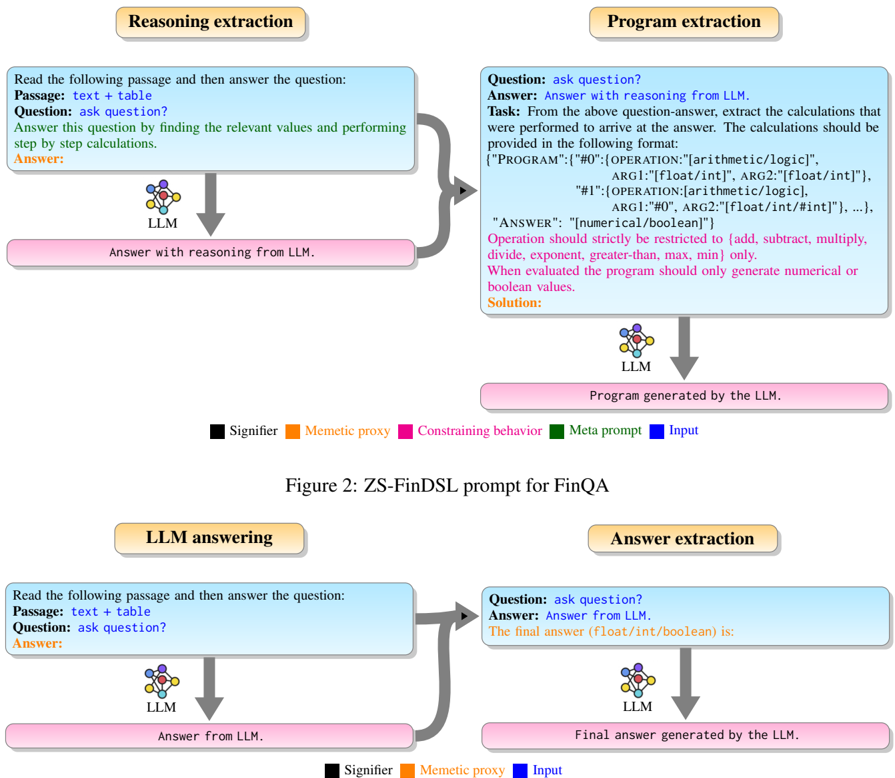

## Diagram: ZS-FinDSL prompt for FinQA Workflows

### Overview

This image is a process diagram illustrating two distinct workflows for interacting with a Large Language Model (LLM) in the context of a task called FinQA. The top workflow focuses on "Reasoning extraction" and "Program extraction," suggesting a multi-step process to derive a programmatic solution. The bottom workflow shows a more direct "LLM answering" and "Answer extraction" approach. Both workflows utilize an LLM and involve structured prompts and outputs, with specific parts of the prompt highlighted by color-coded categories defined in a central legend.

### Components/Axes

The diagram is structured into two main horizontal sections, each representing a workflow, separated by a central legend and figure title.

**Main Workflow Headers (Orange Ovals):**

* **Top-left:** "Reasoning extraction"

* **Top-right:** "Program extraction"

* **Bottom-left:** "LLM answering"

* **Bottom-right:** "Answer extraction"

**Input Prompt Boxes (Light Blue Rectangles):** These boxes contain the textual prompts provided to the LLM.

**LLM Icons:** A multi-colored, atom-like icon labeled "LLM" is positioned below each input prompt, representing the Large Language Model processing the input.

**Output Boxes (Pink Rounded Rectangles):** These boxes contain the expected or generated output from the LLM.

**Arrows (Dark Gray):** Indicate the flow of information between components.

**Curved Arrows (Dark Gray):** Indicate that the output of one LLM process serves as input for another.

**Legend (Centered horizontally, below the top workflow):**

* **Black square:** "Signifier"

* **Orange square:** "Memetic proxy"

* **Pink square:** "Constraining behavior"

* **Green square:** "Meta prompt"

* **Blue square:** "Input" (Note: The blue color is not explicitly used for text within the prompts, but the light blue background of the input boxes likely represents the overall "Input" concept.)

**Figure Title (Centered horizontally, below the legend):**

* "Figure 2: ZS-FinDSL prompt for FinQA"

### Detailed Analysis

The diagram presents two primary workflows, each composed of two stages involving an LLM.

**Top Workflow: Reasoning and Program Extraction**

1. **Reasoning extraction (Top-left):**

* **Input Prompt (light blue box):**

* "Read the following passage and then answer the question:" (Signifier - black text)

* **Passage:** "text + table" (Signifier - black text)

* **Question:** "ask question?" (Signifier - black text)

* "Answer this question by finding the relevant values and performing step by step calculations." (Meta prompt - green text)

* **Answer:** (Memetic proxy - orange text)

* **Flow:** An arrow points from this input prompt to the "LLM" icon.

* **Output (pink rounded rectangle):** "Answer with reasoning from LLM." (Signifier - black text)

2. **Program extraction (Top-right):**

* **Input Prompt (light blue box):**

* A curved arrow connects the output of "Reasoning extraction" ("Answer with reasoning from LLM.") to this input prompt, indicating the reasoning is fed as input.

* **Question:** "ask question?" (Signifier - black text)

* **Answer:** "Answer with reasoning from LLM." (Signifier - black text)

* **Task:** "From the above question-answer, extract the calculations that were performed to arrive at the answer. The calculations should be provided in the following format:" (Signifier - black text)

* `{"PROGRAM":{"#0":{OPERATION:"[arithmetic/logic]",` (Signifier - black text)

* `ARG1:"[float/int]", ARG2:"[float/int]"},` (Signifier - black text)

* `"#1":{OPERATION:[arithmetic/logic],` (Signifier - black text)

* `ARG1:"#0", ARG2:"[float/int/#int]"}, ...},` (Signifier - black text)

* `"ANSWER": "[numerical/boolean]"}` (Signifier - black text)

* "Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only." (Constraining behavior - pink text)

* "When evaluated the program should only generate numerical or boolean values." (Constraining behavior - pink text)

* **Solution:** (Memetic proxy - orange text)

* **Flow:** An arrow points from this input prompt to the "LLM" icon.

* **Output (pink rounded rectangle):** "Program generated by the LLM." (Signifier - black text)
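The program-extraction prompt embeds the first stage's output, so it can be built as a function of the question and the reasoning answer. This is a sketch under assumptions: `PROGRAM_FORMAT` is a cleaned-up reading of the schema transcribed in this document and may not match the figure character for character, and the function name and newline separator are hypothetical.

```python
# Assumed reading of the schema shown in the figure.
PROGRAM_FORMAT = (
    '{"PROGRAM":{"#0":{OPERATION:"[arithmetic/logic]", '
    'ARG1:"[float/int]", ARG2:"[float/int]"}, '
    '"#1":{OPERATION:[arithmetic/logic], '
    'ARG1:"#0", ARG2:"[float/int/#int]"}, ...}, '
    '"ANSWER": "[numerical/boolean]"}'
)


def build_program_extraction_prompt(question: str, reasoning_answer: str) -> str:
    """Wrap the stage-one answer in the program-extraction template."""
    return "\n".join([
        f"Question: {question}",
        f"Answer: {reasoning_answer}",
        "Task: From the above question-answer, extract the calculations that "
        "were performed to arrive at the answer. The calculations should be "
        "provided in the following format:",
        PROGRAM_FORMAT,
        # The two constraint sentences are plain strings, not f-strings,
        # so the literal braces need no escaping.
        "Operation should strictly be restricted to {add, subtract, multiply, "
        "divide, exponent, greater-than, max, min} only.",
        "When evaluated the program should only generate numerical or "
        "boolean values.",
        "Solution:",
    ])


# Hypothetical inputs, for illustration only.
extraction_prompt = build_program_extraction_prompt(
    "What was the percentage change in revenue?",
    "Revenue rose from 100 to 120, so the change is (120 - 100) / 100 = 0.2.",
)
```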

**Bottom Workflow: Direct LLM Answering and Answer Extraction**

1. **LLM answering (Bottom-left):**

* **Input Prompt (light blue box):**

* "Read the following passage and then answer the question:" (Signifier - black text)

* **Passage:** "text + table" (Signifier - black text)

* **Question:** "ask question?" (Signifier - black text)

* **Answer:** (Memetic proxy - orange text)

* **Flow:** An arrow points from this input prompt to the "LLM" icon.

* **Output (pink rounded rectangle):** "Answer from LLM." (Signifier - black text)

2. **Answer extraction (Bottom-right):**

* **Input Prompt (light blue box):**

* A curved arrow connects the output of "LLM answering" ("Answer from LLM.") to this input prompt, indicating the raw answer is fed as input.

* **Question:** "ask question?" (Signifier - black text)

* **Answer:** "Answer from LLM." (Signifier - black text)

* "The final answer (float/int/boolean) is:" (Memetic proxy - orange text)

* **Flow:** An arrow points from this input prompt to the "LLM" icon.

* **Output (pink rounded rectangle):** "Final answer generated by the LLM." (Signifier - black text)
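The chaining shown by the curved arrows can be sketched as two calls to the same model, with the first reply embedded in the second prompt. The example below covers the bottom workflow. The `LLM` callable type and the `fake_llm` stub are hypothetical stand-ins, since the figure does not name a model or an API.

```python
from typing import Callable, List

# Hypothetical interface: any function that maps a prompt to a completion.
LLM = Callable[[str], str]


def direct_answer_pipeline(llm: LLM, passage: str, question: str) -> str:
    """LLM answering followed by answer extraction (the bottom workflow)."""
    answering_prompt = "\n".join([
        "Read the following passage and then answer the question:",
        f"Passage: {passage}",
        f"Question: {question}",
        "Answer:",
    ])
    raw_answer = llm(answering_prompt)

    # The first call's output is embedded in the second call's prompt.
    extraction_prompt = "\n".join([
        f"Question: {question}",
        f"Answer: {raw_answer}",
        "The final answer (float/int/boolean) is:",
    ])
    return llm(extraction_prompt)


# Stub model for demonstration: records prompts and returns canned text.
calls: List[str] = []


def fake_llm(prompt: str) -> str:
    calls.append(prompt)
    return "Revenue rose by 0.2." if len(calls) == 1 else "0.2"


final = direct_answer_pipeline(fake_llm, "text + table", "ask question?")
```

The top workflow has the same shape, with the reasoning and program-extraction templates in place of the two prompts used here.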

### Key Observations

* **Two-Stage Processes:** Both workflows are two-stage, where the output of an initial LLM interaction becomes part of the input for a subsequent LLM interaction.

* **Prompt Engineering:** The diagram highlights different types of prompt components using color coding:

* **Signifiers (black text):** Provide basic instructions, context, and structure (e.g., "Question:", "Passage:", JSON format).

* **Meta prompts (green text):** Guide the LLM's internal process or strategy (e.g., "performing step by step calculations").

* **Constraining behavior (pink text):** Impose strict rules or limitations on the LLM's output (e.g., allowed operations, output data types).

* **Memetic proxy (orange text):** Acts as a placeholder or a cue for the LLM to fill in the desired output (e.g., "Answer:", "Solution:", "The final answer...").

* **Output Granularity:** The top workflow aims for a more structured, executable "Program," while the bottom workflow aims for a "Final answer" directly.

* **FinQA Context:** The figure title explicitly links these prompts to "ZS-FinDSL prompt for FinQA," suggesting these are zero-shot prompts for a financial question-answering task using a domain-specific language (FinDSL).
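One way to keep the color-coded roles alongside the prompt text is to store each prompt as a list of tagged segments. The sketch below is illustrative only: the `Role` and `Segment` types are hypothetical, and the role assigned to each line follows the labeling given in this description rather than a verified reading of the figure.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Role(Enum):
    """Prompt component categories from the legend, keyed by legend color."""
    SIGNIFIER = "black"
    MEMETIC_PROXY = "orange"
    CONSTRAINING_BEHAVIOR = "pink"
    META_PROMPT = "green"
    INPUT = "blue"


@dataclass(frozen=True)
class Segment:
    text: str
    role: Role


REASONING_PROMPT = [
    Segment("Read the following passage and then answer the question:", Role.SIGNIFIER),
    Segment("Passage: text + table", Role.SIGNIFIER),
    Segment("Question: ask question?", Role.SIGNIFIER),
    Segment("Answer this question by finding the relevant values and "
            "performing step by step calculations.", Role.META_PROMPT),
    Segment("Answer:", Role.MEMETIC_PROXY),
]


def render(segments: List[Segment]) -> str:
    """Join the segments into the prompt text sent to the model."""
    return "\n".join(segment.text for segment in segments)


def by_role(segments: List[Segment], role: Role) -> List[str]:
    """Return the text of every segment that carries the given role."""
    return [segment.text for segment in segments if segment.role is role]
```

Calling `by_role(REASONING_PROMPT, Role.META_PROMPT)` returns only the step-by-step instruction, which makes it easy to ablate one category of component at a time.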

### Interpretation

This diagram illustrates two distinct strategies for leveraging LLMs for complex tasks like FinQA, emphasizing the role of prompt engineering in guiding the LLM's behavior and output format.

The **top workflow (Reasoning and Program Extraction)** represents a more robust and verifiable approach.

1. **Reasoning extraction** first prompts the LLM to generate an answer *with step-by-step calculations*. This "meta prompt" encourages the LLM to show its work, making its reasoning transparent. The "Memetic proxy" "Answer:" cues the LLM to provide this detailed response.

2. The output of this reasoning is then fed into the **Program extraction** stage. Here, the LLM is tasked not just with answering, but with *extracting the underlying calculations into a structured program format*. This stage includes "constraining behavior" prompts (pink text) that strictly define the allowed operations and output types, ensuring the generated program is valid and executable. This approach suggests a desire for not just an answer, but a verifiable, reproducible, and potentially executable solution, which is crucial in domains like finance where accuracy and auditability are paramount.
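A prompt constraint does not guarantee that the model obeys it, so a generated program can be checked before it is trusted. The following sketch makes assumptions: the program is already parsed into a dict keyed `"#0"`, `"#1"`, and so on, the function name `validate_program` is hypothetical, and only two rules are checked (allowed operations, and references that point to earlier steps).

```python
from typing import List

ALLOWED_OPERATIONS = frozenset({
    "add", "subtract", "multiply", "divide",
    "exponent", "greater-than", "max", "min",
})


def validate_program(program: dict) -> List[str]:
    """Return a list of problems; an empty list means both checks passed."""
    problems: List[str] = []
    for index in range(len(program)):
        key = f"#{index}"
        step = program.get(key)
        if step is None:
            problems.append(f"missing step {key}")
            continue
        operation = step.get("OPERATION")
        if operation not in ALLOWED_OPERATIONS:
            problems.append(f"{key}: operation {operation!r} is not allowed")
        for name in ("ARG1", "ARG2"):
            arg = step.get(name)
            if isinstance(arg, str) and arg.startswith("#"):
                ref = arg[1:]
                # A reference is valid only if it names an earlier step.
                if not ref.isdigit() or int(ref) >= index:
                    problems.append(f"{key}: {name} must reference an earlier step")
    return problems


good = {
    "#0": {"OPERATION": "subtract", "ARG1": "120", "ARG2": "100"},
    "#1": {"OPERATION": "divide", "ARG1": "#0", "ARG2": "100"},
}
bad = {"#0": {"OPERATION": "modulo", "ARG1": "#1", "ARG2": "3"}}
```

Here `validate_program(good)` returns an empty list, while `bad` is flagged both for the unsupported `modulo` operation and for the forward reference to `#1`.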

The **bottom workflow (LLM Answering and Answer Extraction)** represents a more direct, but potentially less transparent, approach.

1. **LLM answering** directly asks the LLM for an "Answer:" based on the passage and question. This is a standard direct question-answering prompt.

2. The raw "Answer from LLM." is then passed to the **Answer extraction** stage. This stage's purpose is to extract *only the final numerical or boolean value* from the LLM's potentially verbose answer. The "Memetic proxy" "The final answer (float/int/boolean) is:" guides the LLM to isolate and present the ultimate result in a specific format. This workflow prioritizes getting a concise final answer quickly, potentially sacrificing the transparency of the reasoning process.

In essence, the diagram contrasts a "programmatic reasoning" approach (top) with a "direct answer" approach (bottom). The top workflow aims to make the LLM's internal "thought process" explicit and convertible into an executable program, offering greater control, verifiability, and potentially better performance for complex multi-step reasoning tasks. The bottom workflow is simpler, focusing on direct answer generation and subsequent extraction, which might be suitable for less complex questions or when the intermediate steps are not critical. The use of "ZS-FinDSL" implies that these prompts are designed for zero-shot learning within a financial domain, potentially leveraging a domain-specific language for better performance and adherence to financial logic.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: ZS-FinDSL Prompt for FinQA

### Overview

This diagram illustrates the process of using a ZS-FinDSL (Zero-Shot Financial Domain-Specific Language) prompt for Financial Question Answering (FinQA). It depicts four stages (reasoning extraction, program extraction, LLM answering, and answer extraction), each with an LLM (Large Language Model) at its core. The diagram highlights the input, processing steps, and output for each stage.

### Components/Axes

The diagram is segmented into four main sections, each representing a stage in the process. These are:

1. **Reasoning Extraction (Top-Left):** Input: Passage + Table, Question. Output: Answer with reasoning from LLM.

2. **Program Extraction (Top-Right):** Input: Question, Answer with reasoning from LLM. Output: Program generated by the LLM.

3. **LLM Reasoning and Answering (Bottom-Left):** Input: Passage + Table, Question. Output: Answer from LLM.

4. **Answer Extraction (Bottom-Right):** Input: Question, Answer from LLM. Output: Final answer generated by the LLM.

A legend at the bottom of the diagram defines the color coding:

* **Signifier** (Black)

* **Memetic proxy** (Orange)

* **Constraining behavior** (Pink)

* **Meta prompt** (Green)

* **Input** (Blue)

The diagram also includes a title: "Figure 2: ZS-FinDSL prompt for FinQA".

### Detailed Analysis or Content Details

**Reasoning Extraction:**

* Input is labeled "Passage: text + table" and "Question: ask question?".

* The LLM is depicted as a rounded rectangle labeled "LLM".

* Output is labeled "Answer with reasoning from LLM."

* The flow is from Input -> LLM -> Output.

**Program Extraction:**

* Input is labeled "Question: ask question?".

* The task description within the input box states: "Answer: Answer with reasoning from LLM. Task: From the above question-answer, extract the calculations that were performed to arrive at the answer. The calculations should be provided in the following format: {"PROGRAM": "#0": OPERATION: [arithmetic/logic], ARG1: [float/int], ARG2: [float/int"], "#1": OPERATION: [arithmetic/logic], ARG1: [float/int], ARG2: [float/int"]...}. Operation should strictly be restricted to [add, subtract, multiply, divide, exponent, greater-than, max, min] only. When evaluated the program should only generate numerical or boolean values. Solution:".

* The LLM is depicted as a rounded rectangle labeled "LLM".

* Output is labeled "Program generated by the LLM."

* The flow is from Input -> LLM -> Output.

**LLM Reasoning and Answering:**

* Input is labeled "Passage: text + table" and "Question: ask question?".

* The LLM is depicted as a rounded rectangle labeled "LLM".

* Output is labeled "Answer from LLM."

* The flow is from Input -> LLM -> Output.

**Answer Extraction:**

* Input is labeled "Question: ask question?".

* The task description within the input box states: "Answer: Answer from LLM. The final answer (float/int/boolean) is:".

* The LLM is depicted as a rounded rectangle labeled "LLM".

* Output is labeled "Final answer generated by the LLM."

* The flow is from Input -> LLM -> Output.

The color coding is applied to the text inside the prompt boxes, indicating the role that each part of the prompt plays.

### Key Observations

The diagram shows a two-pronged approach to FinQA: one prong extracts reasoning and then a program, and the other obtains a direct answer and then extracts its final value. Every stage uses an LLM as the central processing unit. The diagram emphasizes structured input and output formats, particularly in the Program Extraction stage. The legend provides a visual key to the different types of prompt component.

### Interpretation

The diagram illustrates a sophisticated approach to FinQA that leverages the capabilities of LLMs in two distinct ways. The Reasoning Extraction workflow aims to provide a human-understandable explanation for the answer, while the Program Extraction workflow focuses on identifying the underlying calculations or logic used to arrive at the answer. This dual approach allows for both interpretability and verifiability of the LLM's responses. The use of a specific program format suggests a desire to create a system that can be easily evaluated and debugged. The color coding helps to visualize the different aspects of the process, such as the input data, the LLM's reasoning, and the constraints imposed on the output. The diagram suggests a system designed for both accuracy and transparency in financial question answering. The separation of reasoning and program extraction indicates a modular design, potentially allowing for independent improvement of each component.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Process Diagram: LLM Prompting Methods for Financial Question Answering

### Overview

The image displays a technical flowchart comparing two distinct methodologies for prompting a Large Language Model (LLM) to answer financial questions, specifically within the FinQA context. The diagram is split into two primary sections: a top section illustrating the "ZS-FinDSL prompt" method and a bottom section showing a more direct "LLM answering" method. Each section uses color-coded boxes and arrows to denote different types of components and the flow of information.

### Components/Axes

The diagram is not a data chart with axes but a process flowchart. Its components are defined by labeled boxes, connecting arrows, and a color-coded legend.

**Legend (Present in both sections, positioned at the bottom):**

* **Black Box:** Signifier

* **Orange Box:** Memetic proxy

* **Pink Box:** Constraining behavior

* **Green Box:** Meta prompt

* **Blue Box:** Input

**Top Section: Figure 2: ZS-FinDSL prompt for FinQA**

This section details a two-stage process: "Reasoning extraction" followed by "Program extraction."

1. **Reasoning extraction (Left Column):**

* **Input Box (Blue - "Input"):** Contains the prompt template.

* Text: "Read the following passage and then answer the question:"

* Text: "Passage: text + table"

* Text: "Question: ask question?"

* Text (in green): "Answer this question by finding the relevant values and performing step by step calculations."

* Text (in orange): "Answer:"

* **Process Icon:** An icon labeled "LLM" with a downward arrow.

* **Output Box (Pink - "Constraining behavior"):** "Answer with reasoning from LLM."

2. **Program extraction (Right Column):**

* **Input Box (Blue - "Input"):** Contains a more complex prompt template.

* Text: "Question: ask question?"

* Text: "Answer: Answer with reasoning from LLM."

* Text (in green): "Task: From the above question-answer, extract the calculations that were performed to arrive at the answer. The calculations should be provided in the following format:"

* Text (code block): `{"PROGRAM":{"#0":{"OPERATION":"[arithmetic/logic]", ARG1:"[float/int]", ARG2:"[float/int]"}, "#1":{"OPERATION:[arithmetic/logic]", ARG1:"#0", ARG2:"[float/int/#int]"}, ...}, "ANSWER": "[numerical/boolean]"}`

* Text (in pink): "Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only. When evaluated the program should only generate numerical or boolean values."

* Text (in orange): "Solution:"

* **Process Icon:** An icon labeled "LLM" with a downward arrow.

* **Output Box (Pink - "Constraining behavior"):** "Program generated by the LLM."

**Bottom Section: LLM answering and Answer extraction**

This section illustrates a simpler two-stage process.

1. **LLM answering (Left Column):**

* **Input Box (Blue - "Input"):** Same as the input box in the top section's "Reasoning extraction," but without the green step-by-step instruction.

* Text: "Read the following passage and then answer the question:"

* Text: "Passage: text + table"

* Text: "Question: ask question?"

* Text (in orange): "Answer:"

* **Process Icon:** An icon labeled "LLM" with a downward arrow.

* **Output Box (Pink - "Constraining behavior"):** "Answer from LLM."

2. **Answer extraction (Right Column):**

* **Input Box (Blue - "Input"):**

* Text: "Question: ask question?"

* Text: "Answer: Answer from LLM."

* Text (in green): "The final answer (float/int/boolean) is:"

* **Process Icon:** An icon labeled "LLM" with a downward arrow.

* **Output Box (Pink - "Constraining behavior"):** "Final answer generated by the LLM."

### Detailed Analysis

The diagram meticulously outlines the structure of prompts and expected outputs for two different approaches.

* **ZS-FinDSL Method (Top):** This is a structured, two-step method.

1. **Step 1 (Reasoning):** The LLM is first prompted to generate a natural language, step-by-step reasoning answer to a financial question based on a passage containing text and a table.

2. **Step 2 (Program Synthesis):** The LLM is then prompted again, using its own reasoning answer from Step 1 as input. Its new task is to *extract* the underlying calculations and represent them as a formal program in a specific JSON-like schema (`{"PROGRAM":{...}, "ANSWER":...}`). The operations are strictly limited to a defined set (add, subtract, etc.). This step is heavily constrained (pink box) to produce a structured, executable output.

* **Direct Answer Method (Bottom):** This is a simpler, more direct method.

1. **Step 1 (Answering):** The LLM is prompted to directly answer the question, presumably with reasoning, but without the explicit instruction for step-by-step calculation found in the ZS-FinDSL method.

2. **Step 2 (Extraction):** A second prompt asks the LLM to extract just the final numerical or boolean answer from its previous response.
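In practice the model's reply to the program-extraction prompt may wrap the JSON-like object in extra prose, so the object has to be located before it can be used. The sketch below uses the standard library's `json.JSONDecoder.raw_decode` to pull out the first parseable object. It assumes the model emits strict JSON with quoted keys, which the schema in the figure does not guarantee, and the function name is hypothetical.

```python
import json


def extract_json_object(reply: str) -> dict:
    """Return the first JSON object embedded in an LLM reply."""
    decoder = json.JSONDecoder()
    start = reply.find("{")
    while start != -1:
        try:
            # raw_decode parses one value starting at `start` and
            # ignores any trailing text after it.
            value, _ = decoder.raw_decode(reply, start)
            return value
        except json.JSONDecodeError:
            # Not valid JSON from this brace; try the next one.
            start = reply.find("{", start + 1)
    raise ValueError("no JSON object found in reply")


# Invented reply, for illustration only.
reply = (
    'Here is the program: {"PROGRAM": {"#0": {"OPERATION": "add", '
    '"ARG1": "1", "ARG2": "2"}}, "ANSWER": "3"} Hope this helps.'
)
parsed = extract_json_object(reply)
```

If the reply uses unquoted keys such as `OPERATION:`, this function raises `ValueError`, and a repair step or a more lenient parser would be needed.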

### Key Observations

1. **Structural Complexity:** The ZS-FinDSL method is significantly more complex, involving a transformation from natural language reasoning to a formal program representation. The direct method seeks only to isolate the final answer.

2. **Prompt Engineering:** The green text ("Meta prompt") in each input box defines the specific task for that stage. The ZS-FinDSL program extraction prompt is highly detailed, specifying the exact output format and allowed operations.

3. **Constraining Behavior:** The pink output boxes represent the constrained output from the LLM at each stage. In ZS-FinDSL, the constraint is a structured program; in the direct method, it's the final answer value.

4. **Flow:** Both methods use a two-stage pipeline where the output of the first LLM call becomes part of the input for the second LLM call.

### Interpretation

This diagram illustrates a research or engineering approach to improving the reliability and interpretability of LLMs on financial reasoning tasks (FinQA).

* **The ZS-FinDSL method** aims to force the LLM to externalize its reasoning process into a verifiable, executable program. This "program extraction" acts as a form of **chain-of-thought verification**. By requiring the model to output a structured program, developers can potentially audit the exact calculations performed, debug errors, and ensure the final answer is derived through a logical sequence of arithmetic operations. This addresses the "black box" problem of LLM reasoning.

* **The direct answer method** represents a more conventional approach but may be less transparent. The second "answer extraction" step suggests an attempt to parse the final answer from a potentially verbose response, which can be error-prone.

* **The comparison** highlights a trade-off: the ZS-FinDSL method imposes a higher upfront cost in prompt design and complexity but yields a more structured, auditable, and potentially more reliable output. The direct method is simpler but may lack verifiability. The diagram serves as a blueprint for implementing the more rigorous ZS-FinDSL prompting strategy.
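The auditability argument can be made concrete: once the extracted program has been executed, its result can be compared with the `ANSWER` field the model reported. The following is a sketch of such a comparison only. The function names and the relative tolerance of `1e-4` are assumptions, and the computed value is taken as given rather than derived here.

```python
import math


def to_value(raw):
    """Normalize a reported answer: 'true'/'false' become bools, other strings floats."""
    if isinstance(raw, str):
        lowered = raw.strip().lower()
        if lowered in ("true", "false"):
            return lowered == "true"
        return float(lowered)
    return raw


def answers_match(computed, reported, rel_tol: float = 1e-4) -> bool:
    """Check a recomputed result against the answer the model reported."""
    a, b = to_value(computed), to_value(reported)
    # Booleans only match booleans; never treat True as the number 1.
    if isinstance(a, bool) or isinstance(b, bool):
        return a is b
    return math.isclose(a, b, rel_tol=rel_tol)
```

A mismatch does not say which side is wrong, only that the stated answer is not what the listed calculations produce, which is a useful signal for manual review.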

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: ZS-FinDSL Prompt for FinQA

### Overview

The diagram illustrates a structured workflow in which a Large Language Model (LLM) handles financial question-answering (FinQA) tasks. It breaks the process into four stages: **Reasoning extraction**, **Program extraction**, **LLM answering**, and **Answer extraction**. Color-coded elements (Signifier, Memetic proxy, Constraining behavior, Meta prompt, Input) define the components and their relationships.

---

### Components/Axes

- **Legend**:

- Black: Signifier (labels for components)

- Orange: Memetic proxy (cue that prompts the LLM to produce the desired output)

- Pink: Constraining behavior (rules for operations)

- Green: Meta prompt (instructions for the LLM)

- Blue: Input (data or questions)

- **Key Elements**:

- **Reasoning extraction**:

- Input: "Read the following passage and then answer the question."

- Output: "Answer with reasoning from LLM."

- **Program extraction**:

- Input: Question-answer pair.

- Output: Program generated by the LLM (e.g., arithmetic/logic operations).

- **LLM answering**:

- Input: Passage + question.

- Output: Answer from LLM.

- **Answer extraction**:

- Input: Question + LLM answer.

- Output: Final answer (e.g., numerical/boolean value).

---

### Detailed Analysis

1. **Reasoning extraction**:

- The LLM reads a passage (text + table) and answers a question by identifying relevant values and performing step-by-step calculations.

- Example: "Answer this question by finding the relevant values and performing step by step calculations."

2. **Program extraction**:

- The LLM generates a program based on the question-answer pair. The program must:

- Use operations restricted to: `arithmetic` (add, subtract, multiply, divide, exponent) and `logic` (greater-than, max, min).

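- Follow a strict format: `{"PROGRAM": {"#0": {"OPERATION": "[arithmetic/logic]", "ARG1": "[float/int]", "ARG2": "[float/int]"}, "#1": {"OPERATION": "[arithmetic/logic]", "ARG1": "#0", "ARG2": "[float/int/#int]"}, ...}, "ANSWER": "[numerical/boolean]"}`.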

- Example program:
