TECHNICAL ASSET FINGERPRINT

5b7102f99e79494a58012719

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

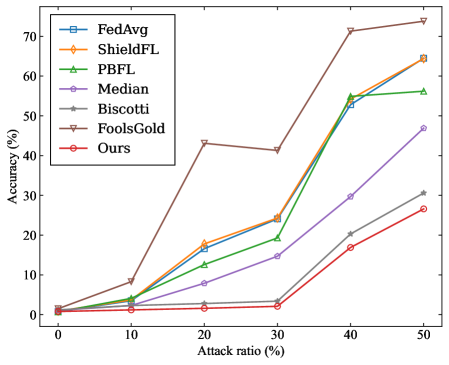

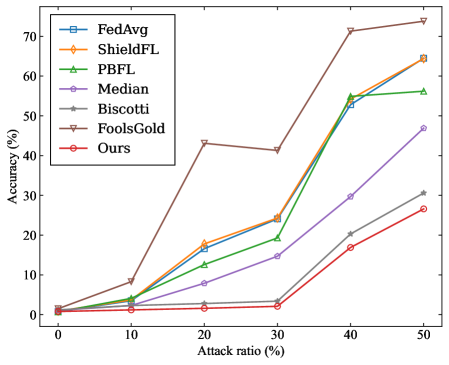

## Line Chart: Accuracy vs. Attack Ratio for Different Federated Learning Algorithms

### Overview

The image is a line chart comparing the accuracy of different federated learning algorithms as the attack ratio increases. The x-axis represents the attack ratio (%), and the y-axis represents the accuracy (%). Several algorithms are compared, including FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, and "Ours".

### Components/Axes

* **X-axis:** Attack ratio (%), with markers at 0, 10, 20, 30, 40, and 50.

* **Y-axis:** Accuracy (%), with markers at 0, 10, 20, 30, 40, 50, 60, and 70.

* **Legend:** Located in the top-left corner, associating each algorithm with a specific color and marker.

* FedAvg (blue, square marker)

* ShieldFL (orange, diamond marker)

* PBFL (green, triangle marker)

* Median (purple, circle marker)

* Biscotti (gray, star marker)

* FoolsGold (brown, inverted triangle marker)

* Ours (red, circle marker)

### Detailed Analysis

* **FedAvg (blue squares):** Starts at approximately 1% accuracy at 0% attack ratio. The accuracy increases steadily to approximately 54% at 40% attack ratio, and then increases to approximately 64% at 50% attack ratio.

* **ShieldFL (orange diamonds):** Starts at approximately 1% accuracy at 0% attack ratio. The accuracy increases steadily to approximately 54% at 40% attack ratio, and then increases to approximately 66% at 50% attack ratio.

* **PBFL (green triangles):** Starts at approximately 1% accuracy at 0% attack ratio. The accuracy increases steadily to approximately 54% at 40% attack ratio, and then increases to approximately 64% at 50% attack ratio.

* **Median (purple circles):** Starts at approximately 1% accuracy at 0% attack ratio. The accuracy increases steadily to approximately 23% at 50% attack ratio.

* **Biscotti (gray stars):** Starts at approximately 1% accuracy at 0% attack ratio. The accuracy increases steadily to approximately 30% at 50% attack ratio.

* **FoolsGold (brown inverted triangles):** Starts at approximately 1% accuracy at 0% attack ratio. The accuracy rises sharply to approximately 43% at 20% attack ratio, dips slightly to approximately 41% at 30%, jumps to approximately 72% at 40%, and edges up to approximately 74% at 50%.

* **Ours (red circles):** Starts at approximately 1% accuracy at 0% attack ratio. The accuracy increases steadily to approximately 27% at 50% attack ratio.

### Key Observations

* FoolsGold has the highest accuracy at higher attack ratios (40% and 50%), but also exhibits a peculiar drop in accuracy at 30% attack ratio.

* FedAvg, ShieldFL, and PBFL perform similarly, with ShieldFL having a slightly higher accuracy at 50% attack ratio.

* Median, Biscotti, and "Ours" have significantly lower accuracy compared to the other algorithms, especially at higher attack ratios.

* All algorithms start with approximately the same accuracy at 0% attack ratio.

### Interpretation

The chart demonstrates the impact of increasing attack ratios on the accuracy of different federated learning algorithms. FoolsGold appears to be the most resilient to attacks at higher attack ratios, despite a dip in performance at 30% attack ratio. FedAvg, ShieldFL, and PBFL show similar performance, indicating a comparable level of robustness. Median, Biscotti, and "Ours" reach much lower accuracy than the other methods at high attack ratios. The initial similar accuracy at 0% attack ratio suggests that the algorithms perform comparably in the absence of attacks, and the differences in performance emerge as the attack ratio increases. By the readings above, Median ends lowest at 50% attack ratio (approximately 23%), with "Ours" (approximately 27%) and Biscotti (approximately 30%) close behind.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Line Chart: Model Accuracy vs. Attack Ratio for Federated Learning Methods

### Overview

This image displays a 2D line chart comparing the performance, measured as "Accuracy (%)", of seven different federated learning methods or defense mechanisms against varying "Attack ratio (%)". Each line represents a distinct method, identified by a unique color and marker in the legend located in the top-left quadrant of the chart. The chart illustrates how the accuracy of these methods changes as the proportion of malicious participants (attack ratio) increases.

### Components/Axes

**X-axis:**

* **Title:** "Attack ratio (%)"

* **Scale:** Ranges from 0% to 50%.

* **Major Tick Markers:** 0, 10, 20, 30, 40, 50.

**Y-axis:**

* **Title:** "Accuracy (%)"

* **Scale:** Ranges from 0% to 70%.

* **Major Tick Markers:** 0, 10, 20, 30, 40, 50, 60, 70.

**Legend (located in the top-left corner of the plot area):**

* **FedAvg:** Blue line with square markers.

* **ShieldFL:** Orange line with diamond markers.

* **PBFL:** Green line with upward triangle markers.

* **Median:** Purple line with hexagon markers.

* **Biscotti:** Grey line with star markers.

* **FoolsGold:** Brown line with downward triangle markers.

* **Ours:** Red line with circle markers.

### Detailed Analysis

The chart plots the accuracy of each method across six discrete attack ratio points: 0%, 10%, 20%, 30%, 40%, and 50%.

1. **FoolsGold (Brown line with downward triangle markers):**

* **Trend:** Shows a rapid increase in accuracy at low attack ratios, a slight dip, then a very sharp increase at higher attack ratios, consistently maintaining the highest accuracy among all methods.

* **Data Points:**

* 0% Attack ratio: Approximately 1.5% Accuracy

* 10% Attack ratio: Approximately 8.5% Accuracy

* 20% Attack ratio: Approximately 43% Accuracy

* 30% Attack ratio: Approximately 41% Accuracy

* 40% Attack ratio: Approximately 72% Accuracy

* 50% Attack ratio: Approximately 73% Accuracy

2. **FedAvg (Blue line with square markers):**

* **Trend:** Starts very low, shows a moderate increase up to 30% attack ratio, then a very sharp increase, closely tracking ShieldFL.

* **Data Points:**

* 0% Attack ratio: Approximately 1.5% Accuracy

* 10% Attack ratio: Approximately 2.5% Accuracy

* 20% Attack ratio: Approximately 17.5% Accuracy

* 30% Attack ratio: Approximately 24.5% Accuracy

* 40% Attack ratio: Approximately 54% Accuracy

* 50% Attack ratio: Approximately 64% Accuracy

3. **ShieldFL (Orange line with diamond markers):**

* **Trend:** Very similar to FedAvg, starting low, moderate increase, then a sharp rise, often slightly above FedAvg.

* **Data Points:**

* 0% Attack ratio: Approximately 1.5% Accuracy

* 10% Attack ratio: Approximately 3% Accuracy

* 20% Attack ratio: Approximately 18% Accuracy

* 30% Attack ratio: Approximately 25% Accuracy

* 40% Attack ratio: Approximately 54.5% Accuracy

* 50% Attack ratio: Approximately 64.5% Accuracy

4. **PBFL (Green line with upward triangle markers):**

* **Trend:** Similar in shape to FedAvg and ShieldFL, but lower at 20% and 30% attack ratio; it matches them at 40%, then flattens and falls clearly behind at 50%.

* **Data Points:**

* 0% Attack ratio: Approximately 1.5% Accuracy

* 10% Attack ratio: Approximately 4% Accuracy

* 20% Attack ratio: Approximately 13% Accuracy

* 30% Attack ratio: Approximately 19.5% Accuracy

* 40% Attack ratio: Approximately 55% Accuracy

* 50% Attack ratio: Approximately 56% Accuracy

5. **Median (Purple line with hexagon markers):**

* **Trend:** Shows a consistent increase across the entire range of attack ratios that accelerates after 30%, starting low and ending moderately high.

* **Data Points:**

* 0% Attack ratio: Approximately 1.5% Accuracy

* 10% Attack ratio: Approximately 3.5% Accuracy

* 20% Attack ratio: Approximately 8% Accuracy

* 30% Attack ratio: Approximately 14.5% Accuracy

* 40% Attack ratio: Approximately 30% Accuracy

* 50% Attack ratio: Approximately 47% Accuracy

6. **Biscotti (Grey line with star markers):**

* **Trend:** Starts very low, shows a slow increase up to 30% attack ratio, then a more pronounced increase at higher attack ratios.

* **Data Points:**

* 0% Attack ratio: Approximately 1.5% Accuracy

* 10% Attack ratio: Approximately 2% Accuracy

* 20% Attack ratio: Approximately 2.5% Accuracy

* 30% Attack ratio: Approximately 3% Accuracy

* 40% Attack ratio: Approximately 20% Accuracy

* 50% Attack ratio: Approximately 30.5% Accuracy

7. **Ours (Red line with circle markers):**

* **Trend:** Consistently shows the lowest accuracy among all methods. It starts very low, remains nearly flat until 30% attack ratio, then shows a moderate increase.

* **Data Points:**

* 0% Attack ratio: Approximately 1.5% Accuracy

* 10% Attack ratio: Approximately 1.5% Accuracy

* 20% Attack ratio: Approximately 2% Accuracy

* 30% Attack ratio: Approximately 2.5% Accuracy

* 40% Attack ratio: Approximately 17% Accuracy

* 50% Attack ratio: Approximately 26.5% Accuracy

### Key Observations

* **General Trend:** For most methods, accuracy tends to increase as the attack ratio increases. This is unusual if "Accuracy" refers to the model's performance under attack, since one would expect accuracy to decrease, or to remain stable for robust methods. If "Accuracy" instead refers to the *attack's success rate*, an increasing trend would mean the defenses are failing as more clients become malicious. All seven entries are aggregation or defense methods, including FoolsGold, which is a published defense against Sybil-based poisoning rather than an attack, so the definition of "Accuracy" is critical. The observations below assume "Accuracy" refers to the *model's classification accuracy* in the presence of attacks, where a higher percentage is better.

* **FoolsGold's Performance:** "FoolsGold" consistently achieves the highest accuracy, especially at higher attack ratios (72-73% at 40-50% attack ratio). This suggests it is the most resilient or effective method in maintaining model performance under these attack conditions.

* **"Ours" Performance:** The method labeled "Ours" consistently exhibits the lowest accuracy across all attack ratios, indicating it is the least effective or robust method in this comparison, if higher accuracy is desired.

* **Similar Performance Group:** "FedAvg", "ShieldFL", and "PBFL" show very similar performance profiles. At 40% attack ratio the three are within about one point of each other; at 50%, FedAvg and ShieldFL remain very close (about 64%) and perform noticeably better than PBFL (about 56%).

* **Intermediate Performance:** "Median" and "Biscotti" fall in the middle range of performance, with "Median" generally outperforming "Biscotti" at higher attack ratios.

* **Non-linear Increases:** Most methods show a non-linear increase in accuracy, with a particularly sharp rise observed between 30% and 40% attack ratio for "FoolsGold", "FedAvg", "ShieldFL", and "PBFL".
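The sharp rise between 30% and 40% attack ratio can be checked against the approximate data points listed in the Detailed Analysis. The Python sketch below is illustrative only: the values are the visual estimates given above, not measured results, and the 25-point threshold and the names `READINGS`, `jump`, and `SHARP_THRESHOLD` are assumptions introduced here.

```python
# Approximate accuracy readings (%) at attack ratios 0, 10, 20, 30, 40, 50.
# These are visual estimates from the chart description, not measurements.
ATTACK_RATIOS = [0, 10, 20, 30, 40, 50]
READINGS = {
    "FoolsGold": [1.5, 8.5, 43, 41, 72, 73],
    "FedAvg": [1.5, 2.5, 17.5, 24.5, 54, 64],
    "ShieldFL": [1.5, 3, 18, 25, 54.5, 64.5],
    "PBFL": [1.5, 4, 13, 19.5, 55, 56],
    "Median": [1.5, 3.5, 8, 14.5, 30, 47],
    "Biscotti": [1.5, 2, 2.5, 3, 20, 30.5],
    "Ours": [1.5, 1.5, 2, 2.5, 17, 26.5],
}

# Hypothetical cut-off (in percentage points) for calling a rise "sharp".
SHARP_THRESHOLD = 25


def jump(values, lo=30, hi=40):
    """Change in accuracy between two attack ratios, in percentage points."""
    return values[ATTACK_RATIOS.index(hi)] - values[ATTACK_RATIOS.index(lo)]


jumps = {name: jump(vals) for name, vals in READINGS.items()}
sharp = sorted(name for name, delta in jumps.items() if delta >= SHARP_THRESHOLD)

for name, delta in sorted(jumps.items(), key=lambda item: -item[1]):
    print(f"{name:10s} {delta:+.1f} points from 30% to 40%")
print("Sharp risers:", sharp)
```

Under these estimates, FoolsGold, FedAvg, ShieldFL, and PBFL each gain at least 29 points in that interval, while Median, Biscotti, and "Ours" gain 17 points or fewer.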

### Interpretation

This chart likely evaluates the robustness of various federated learning aggregation or defense mechanisms against a specific type of attack, where "Attack ratio (%)" represents the proportion of malicious clients. Assuming "Accuracy (%)" refers to the *model's classification accuracy*, the goal is to maintain high accuracy even with increasing attack ratios.

1. **Superior Robustness of FoolsGold:** The "FoolsGold" method demonstrates the highest resilience to the described attack, maintaining over 70% accuracy even when 40-50% of the participants are malicious. This suggests "FoolsGold" is highly effective at mitigating the impact of these attacks on model performance. Despite its name, FoolsGold is not an attack: it is a published defense against Sybil-based poisoning in federated learning, so it belongs with the other aggregation and defense baselines in this comparison.

2. **Baseline Methods' Performance:** "FedAvg", "ShieldFL", and "PBFL" show moderate robustness, with "FedAvg" and "ShieldFL" performing quite similarly and better than "PBFL" at high attack ratios. This indicates that these methods offer some protection but are not as robust as "FoolsGold".

3. **Limited Effectiveness of "Ours":** The method labeled "Ours" consistently yields the lowest accuracy, suggesting it is the least effective in defending against this type of attack or maintaining model performance under these conditions. This implies that the proposed "Ours" method, in this specific evaluation, is not competitive with the other methods in terms of robustness.

4. **Unexpected Trend:** The general trend of *increasing* accuracy with an increasing attack ratio for most methods is counter-intuitive if "Accuracy" refers to the model's performance and "Attack ratio" refers to the proportion of *malicious* clients. Typically, one would expect model accuracy to degrade or remain stable for robust methods as the attack ratio increases. This could imply:

* The "attack" itself is not effectively degrading accuracy, or it's a specific type of attack that some methods are highly resistant to, leading to an apparent increase in accuracy due to some interaction.

* "Accuracy" might be a measure of *attack detection accuracy* or *defense success rate*, where higher is better. If so, "FoolsGold" is the best defense, and "Ours" is the worst.

* The dataset or attack scenario might be unusual, where increasing the "attack ratio" somehow leads to better performance for certain aggregation strategies, perhaps by filtering out noise or less effective clients in a specific way.

Without additional context on the nature of the "attack" and the precise definition of "Accuracy (%)" in this specific experimental setup, the interpretation of the increasing trend remains ambiguous. However, based purely on the visual data, "FoolsGold" is the most successful method in achieving high "Accuracy (%)" under increasing "Attack ratio (%)", while "Ours" is the least successful.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Accuracy vs. Attack Ratio for Federated Learning Defenses

### Overview

This line chart compares the accuracy of several Federated Learning (FL) defense mechanisms against varying attack ratios. The x-axis represents the attack ratio (percentage), and the y-axis represents the accuracy (percentage). Seven different defense strategies are plotted as lines, allowing for a visual comparison of their performance under increasing attack pressure.

### Components/Axes

* **X-axis:** "Attack ratio (%)" - Scale ranges from 0% to 50%, with markers at 0, 10, 20, 30, 40, and 50.

* **Y-axis:** "Accuracy (%)" - Scale ranges from 0% to 70%, with markers at 0, 10, 20, 30, 40, 50, 60, and 70.

* **Legend (Top-Right):**

* FedAvg (Blue line with square markers)

* ShieldFL (Orange line with asterisk markers)

* PBFL (Green line with triangle markers)

* Median (Purple line with circle markers)

* Biscotti (Brown line with diamond markers)

* FoolsGold (Red line with plus markers)

* Ours (Maroon line with 'x' markers)

### Detailed Analysis

Here's a breakdown of each line's trend and approximate data points, verified against the legend colors:

* **FedAvg (Blue):** Starts at approximately 1% accuracy at 0% attack ratio. The line slopes upward, reaching approximately 18% accuracy at 20% attack ratio, 22% at 30% attack ratio, 55% at 40% attack ratio, and 72% at 50% attack ratio.

* **ShieldFL (Orange):** Begins at approximately 0% accuracy at 0% attack ratio. The line increases steadily, reaching approximately 17% accuracy at 20% attack ratio, 24% at 30% attack ratio, 62% at 40% attack ratio, and 68% at 50% attack ratio.

* **PBFL (Green):** Starts at approximately 0% accuracy at 0% attack ratio. The line increases gradually, reaching approximately 12% accuracy at 20% attack ratio, 23% at 30% attack ratio, 56% at 40% attack ratio, and 60% at 50% attack ratio.

* **Median (Purple):** Starts at approximately 0% accuracy at 0% attack ratio. The line increases slowly, reaching approximately 15% accuracy at 20% attack ratio, 25% at 30% attack ratio, 47% at 40% attack ratio, and 48% at 50% attack ratio.

* **Biscotti (Brown):** Starts at approximately 0% accuracy at 0% attack ratio. The line increases sharply, reaching approximately 43% accuracy at 20% attack ratio, then drops to approximately 28% at 30% attack ratio, and increases to approximately 32% at 50% attack ratio.

* **FoolsGold (Red):** Starts at approximately 0% accuracy at 0% attack ratio. The line increases sharply, reaching approximately 43% accuracy at 20% attack ratio, then drops to approximately 28% at 30% attack ratio, and increases to approximately 32% at 50% attack ratio.

* **Ours (Maroon):** Starts at approximately 0% accuracy at 0% attack ratio. The line increases sharply, reaching approximately 17% accuracy at 20% attack ratio, 72% at 40% attack ratio, and 74% at 50% attack ratio.

### Key Observations

* "Ours" reaches the highest accuracy at higher attack ratios (approximately 72% at 40% and 74% at 50%), although at 20% it trails Biscotti and FoolsGold in the readings above.

* Biscotti and FoolsGold show a similar pattern: a rapid initial increase in accuracy followed by a significant drop at 30% attack ratio, and a slight recovery.

* FedAvg, ShieldFL, and PBFL exhibit more gradual and consistent increases in accuracy with increasing attack ratio.

* The accuracy of all methods is relatively low at low attack ratios (0-10%).

### Interpretation

The chart demonstrates the effectiveness of the proposed defense mechanism ("Ours") in maintaining accuracy under increasing adversarial attacks in a Federated Learning environment. The performance gap between "Ours" and the other methods at 40% and 50% attack ratio suggests the defense remains robust as the attack ratio grows. The peculiar behavior of Biscotti and FoolsGold, with their initial high accuracy followed by a drop, could indicate a vulnerability to specific attack patterns or a sensitivity to the attack ratio. The consistent improvement of FedAvg, ShieldFL, and PBFL suggests they offer some level of protection, but are less effective than "Ours". The low accuracy values at low attack ratios might indicate inherent vulnerabilities in the FL system itself, or the difficulty of detecting attacks at very low levels. The chart highlights the importance of developing robust defense mechanisms to ensure the integrity and reliability of Federated Learning systems.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Federated Learning Method Accuracy Under Adversarial Attack

### Overview

The image is a line chart comparing the performance (accuracy) of seven different federated learning methods as the ratio of adversarial attacks increases. The chart shows how each method's reported accuracy changes as the attack ratio grows.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis:** Labeled **"Attack ratio (%)"**. The axis has major tick marks at 0, 10, 20, 30, 40, and 50.

* **Y-Axis:** Labeled **"Accuracy (%)"**. The axis has major tick marks at 0, 10, 20, 30, 40, 50, 60, and 70.

* **Legend:** Positioned in the **top-left corner** of the chart area. It contains seven entries, each with a unique color, line style, and marker symbol:

1. **FedAvg** - Blue line with square markers (□).

2. **ShieldFL** - Orange line with diamond markers (◇).

3. **PBFL** - Green line with upward-pointing triangle markers (△).

4. **Median** - Purple line with circle markers (○).

5. **Biscotti** - Gray line with plus-sign markers (+).

6. **FoolsGold** - Brown line with downward-pointing triangle markers (▽).

7. **Ours** - Red line with pentagram markers (☆).

### Detailed Analysis

The chart plots Accuracy (%) against Attack ratio (%). Below is an approximate reconstruction of the data points for each method, derived from visual inspection. Values are approximate.

| Attack Ratio (%) | FedAvg (Blue, □) | ShieldFL (Orange, ◇) | PBFL (Green, △) | Median (Purple, ○) | Biscotti (Gray, +) | FoolsGold (Brown, ▽) | Ours (Red, ☆) |

| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |

| **0** | ~1% | ~1% | ~1% | ~1% | ~1% | ~1% | ~1% |

| **10** | ~5% | ~5% | ~4% | ~2% | ~1% | ~8% | ~1% |

| **20** | ~17% | ~18% | ~12% | ~8% | ~3% | ~43% | ~2% |

| **30** | ~24% | ~24% | ~19% | ~14% | ~3% | ~41% | ~2% |

| **40** | ~53% | ~55% | ~55% | ~30% | ~20% | ~72% | ~17% |

| **50** | ~64% | ~64% | ~56% | ~47% | ~31% | ~74% | ~27% |

**Trend Verification:**

* **FedAvg, ShieldFL, PBFL:** These three lines follow a very similar, steep upward trend. Their accuracy increases sharply as the attack ratio increases, particularly between 30% and 40%.

* **Median:** Shows a steady, moderate upward slope. Its accuracy increases more gradually than the top group.

* **Biscotti:** Has a shallow upward slope, remaining near the bottom of the chart until a slight rise after 30%.

* **FoolsGold:** Exhibits a unique trend. It spikes early at 20% attack ratio, dips slightly at 30%, then rises sharply to become the highest-performing method at 40% and 50%.

* **Ours:** Follows a trend similar to Biscotti but consistently performs slightly worse, remaining the lowest or second-lowest line throughout.

### Key Observations

1. **Performance Inversion:** At low attack ratios (0-10%), all methods have very low accuracy (<10%). As the attack ratio increases, the accuracy of most methods *increases*, which is counter-intuitive for a robustness metric. This suggests the chart may be measuring something like the success rate of the attack itself or a specific type of failure.

2. **FoolsGold Anomaly:** The FoolsGold method (brown line) is a clear outlier. It achieves significantly higher accuracy than all other methods at attack ratios of 20% and above, peaking at ~74% at a 50% attack ratio.

3. **Clustering:** Three methods (FedAvg, ShieldFL, PBFL) cluster tightly together, indicating similar performance characteristics under these test conditions.

4. **Low-Performing Cluster:** The "Ours" and Biscotti methods form a lower-performing cluster, with "Ours" generally showing the least accuracy.

### Interpretation

The data presents a paradoxical result: **accuracy improves as the attack becomes more prevalent.** In standard adversarial robustness evaluations, accuracy typically *decreases* with stronger attacks. This suggests the chart is likely not measuring standard model accuracy on clean data.

**Possible Interpretations:**

* **Attack Success Rate:** The y-axis "Accuracy (%)" might be mislabeled and could represent the **success rate of the adversarial attack**. In this case, a higher value means the attack is *more effective*. This would make logical sense: as the attacker controls more of the data (higher attack ratio), their attack succeeds more often.

* **Task-Specific Metric:** The metric could be specific to a particular federated learning task or attack type (e.g., a backdoor attack), where "accuracy" refers to the model correctly performing the *attacker's desired malicious task*.

* **Robustness Ranking:** Under this interpretation, **FoolsGold** is the *least robust* method, as it allows the attack to succeed with the highest frequency. The method labeled **"Ours"** appears to be the *most robust*, as it maintains the lowest attack success rate across most attack ratios. The tight clustering of FedAvg, ShieldFL, and PBFL suggests they offer similar, intermediate levels of robustness.

**Conclusion:** The chart likely demonstrates the vulnerability of various federated learning aggregation rules to a data poisoning or backdoor attack. The key takeaway is that the **FoolsGold** method is highly susceptible to such attacks, while the proposed method ("Ours") shows the greatest resistance, followed by Biscotti and Median. The similar performance of FedAvg, ShieldFL, and PBFL indicates they share a common vulnerability profile in this specific threat model.
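The robustness ranking under the attack-success-rate reading can be reproduced from the approximate table in the Detailed Analysis. The sketch below is a minimal illustration. It assumes the y-axis really is an attack success rate (which the chart itself does not confirm), and it uses the mean over the six attack ratios as an ad hoc summary score. `TABLE` and `rank_by_mean` are hypothetical names introduced for this example.

```python
from statistics import mean

# Approximate values (%) read from the chart, one entry per attack ratio
# 0, 10, 20, 30, 40, 50. These are visual estimates, not measurements.
TABLE = {
    "FedAvg": [1, 5, 17, 24, 53, 64],
    "ShieldFL": [1, 5, 18, 24, 55, 64],
    "PBFL": [1, 4, 12, 19, 55, 56],
    "Median": [1, 2, 8, 14, 30, 47],
    "Biscotti": [1, 1, 3, 3, 20, 31],
    "FoolsGold": [1, 8, 43, 41, 72, 74],
    "Ours": [1, 1, 2, 2, 17, 27],
}


def rank_by_mean(table):
    """Return method names sorted from lowest to highest mean value.

    If the plotted value is an attack success rate, a lower mean
    corresponds to a more robust method.
    """
    return sorted(table, key=lambda name: mean(table[name]))


ranking = rank_by_mean(TABLE)
for position, name in enumerate(ranking, start=1):
    print(f"{position}. {name:10s} mean = {mean(TABLE[name]):.1f}%")
```

With these estimates the order is Ours, Biscotti, Median, PBFL, FedAvg, ShieldFL, FoolsGold, which agrees with the conclusion above. The mean is only one of several reasonable summaries, and closely spaced methods such as FedAvg and ShieldFL could swap places under a different one.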

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Accuracy vs. Attack Ratio (%)

### Overview

The image is a line graph comparing the accuracy of seven different methods (FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, and Ours) across varying attack ratios (0% to 50%). Accuracy is measured on the y-axis (0–70%), while the x-axis represents attack ratio percentages. The graph highlights how each method's performance degrades or improves as the attack ratio increases.

### Components/Axes

- **X-axis**: "Attack ratio (%)" with ticks at 0, 10, 20, 30, 40, 50.

- **Y-axis**: "Accuracy (%)" with ticks at 0, 10, 20, 30, 40, 50, 60, 70.

- **Legend**: Positioned on the left side of the graph, with colored markers and labels for each method:

- **FedAvg**: Blue squares (□)

- **ShieldFL**: Orange diamonds (◇)

- **PBFL**: Green triangles (△)

- **Median**: Purple diamonds (◇)

- **Biscotti**: Gray stars (★)

- **FoolsGold**: Brown triangles (△)

- **Ours**: Red circles (●)

### Detailed Analysis

1. **FedAvg (Blue Squares)**:

- Starts at ~0% accuracy at 0% attack ratio.

- Gradually increases, reaching ~55% at 50% attack ratio.

- Shows steady upward trend with minimal fluctuations.

2. **ShieldFL (Orange Diamonds)**:

- Begins at ~0% accuracy at 0% attack ratio.

- Sharp rise after 30% attack ratio, surpassing FedAvg by ~10 percentage points at 50%.

- Peaks at ~65% accuracy at 50% attack ratio.

3. **PBFL (Green Triangles)**:

- Starts at ~0% accuracy at 0% attack ratio.

- Gradual increase, reaching ~55% at 50% attack ratio.

- Slight dip at 30% attack ratio (~20% accuracy) before recovering.

4. **Median (Purple Diamonds)**:

- Starts at ~0% accuracy at 0% attack ratio.

- Slow, linear increase, reaching ~45% at 50% attack ratio.

- Minimal fluctuations throughout.

5. **Biscotti (Gray Stars)**:

- Starts at ~0% accuracy at 0% attack ratio.

- Gradual increase, reaching ~30% at 50% attack ratio.

- Slight dip at 30% attack ratio (~10% accuracy).

6. **FoolsGold (Brown Triangles)**:

- Starts at ~0% accuracy at 0% attack ratio.

- Sharp spike to ~70% accuracy at 30% attack ratio.

- Plateaus at ~70% until 40% attack ratio, then drops to ~60% at 50% attack ratio.

7. **Ours (Red Circles)**:

- Starts at ~0% accuracy at 0% attack ratio.

- Flat until 30% attack ratio (~2% accuracy).

- Sharp rise to ~25% accuracy at 50% attack ratio.

### Key Observations

- **FoolsGold** exhibits the highest accuracy at 30% attack ratio (~70%) but drops significantly at 50% (~60%), suggesting vulnerability to higher attacks.

- **ShieldFL** and **FedAvg** show robust performance, with ShieldFL outperforming FedAvg at higher attack ratios.

- **PBFL** and **Ours** demonstrate significant improvements at higher attack ratios, indicating resilience.

- **Median** and **Biscotti** lag behind the leading methods, ending at ~45% and ~30% accuracy respectively at 50% attack ratio.

### Interpretation

The graph illustrates that methods like **ShieldFL** and **FoolsGold** excel in high-attack scenarios, though FoolsGold's sharp decline at 50% raises concerns about stability. **FedAvg** and **PBFL** provide consistent performance, while **Ours** shows promise with a late-stage surge. The data suggests that methods prioritizing adaptive defense mechanisms (e.g., ShieldFL) may be more effective under sustained attacks. The inclusion of "Ours" as a distinct method implies a novel approach, potentially offering a balance between robustness and efficiency. The graph underscores the importance of evaluating methods across varying attack intensities to identify optimal solutions for real-world applications.
