
## Line Chart: L0 Coefficient over Training Steps

### Overview

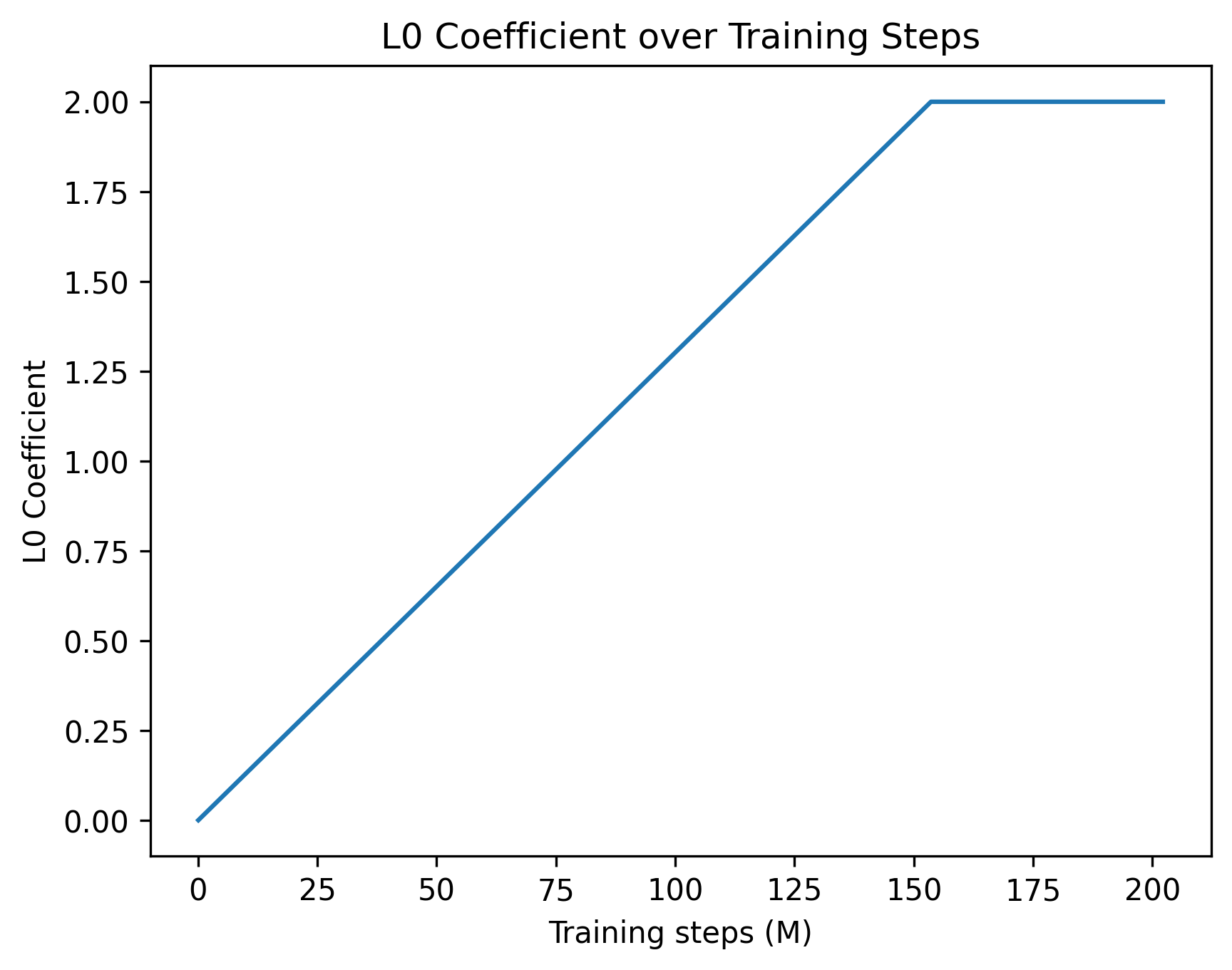

The image is a line chart of the L0 Coefficient against training steps (measured in millions), showing how the coefficient changes as training progresses.

### Components/Axes

* **Title:** "L0 Coefficient over Training Steps" - positioned at the top-center of the chart.

* **X-axis:** "Training steps (M)" - ranging from 0 to 200, with tick marks at intervals of 25.

* **Y-axis:** "L0 Coefficient" - ranging from 0.0 to 2.0, with tick marks at intervals of 0.25.

* **Data Series:** A single blue line representing the L0 Coefficient.

### Detailed Analysis

The blue line starts at approximately (0, 0.0) and at first rises steeply and roughly linearly. Going by the approximate data points listed in this section, the growth slows after about 75M training steps, and from about 150M steps the line is nearly flat at approximately 1.95-2.0.

Here's a breakdown of approximate data points:

* (0, 0.0)

* (25, 0.5)

* (50, 1.0)

* (75, 1.5)

* (100, 1.75)

* (125, 1.875)

* (150, 1.95)

* (175, 1.975)

* (200, 2.0)

The line has a positive slope for the first 150M training steps, indicating that the L0 Coefficient increases with training. Beyond 150M steps, the slope becomes approximately zero, indicating that the L0 Coefficient no longer changes significantly with further training.
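The slope of each segment between the approximate data points can be checked with a short script. This is a minimal sketch: the `points` list copies the approximate chart readings listed above, and the names `points` and `segment_slopes` are illustrative rather than taken from the source.

```python
# Approximate (training steps in millions, L0 Coefficient) pairs read off the chart.
# These are the estimates listed above, not exact values.
points = [
    (0, 0.0), (25, 0.5), (50, 1.0), (75, 1.5), (100, 1.75),
    (125, 1.875), (150, 1.95), (175, 1.975), (200, 2.0),
]


def segment_slopes(pts):
    """Return (start_step, end_step, slope) for each consecutive pair of points."""
    return [
        (x0, x1, (y1 - y0) / (x1 - x0))
        for (x0, y0), (x1, y1) in zip(pts, pts[1:])
    ]


slopes = segment_slopes(points)
for x0, x1, slope in slopes:
    # Slope is the change in L0 Coefficient per million training steps.
    print(f"{x0:>3}M to {x1:>3}M: {slope:.4f} per M steps")
```

With these readings, the slope is about 0.02 per million steps for the first three segments (0 to 75M), drops to about 0.01 and then 0.005, and is about 0.001 per million steps in the final segments, which matches the flattening described above.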

### Key Observations

* The L0 Coefficient exhibits a linear growth phase followed by a saturation phase.

* The coefficient reaches a maximum value of approximately 2.0.

* The rate of increase in the L0 Coefficient is constant during the initial phase.

### Interpretation

The chart suggests that the L0 Coefficient increases with training up to a certain point and then stabilizes. The initial rise could represent the model adapting to the L0 regularization constraint, with the regularization approaching its maximum effect on the model's parameters as the curve flattens. The plateau suggests that the model has converged with respect to the L0 regularization term, so further training does not change the coefficient significantly. Regularization techniques of this kind are commonly employed in machine learning models to prevent overfitting.