EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

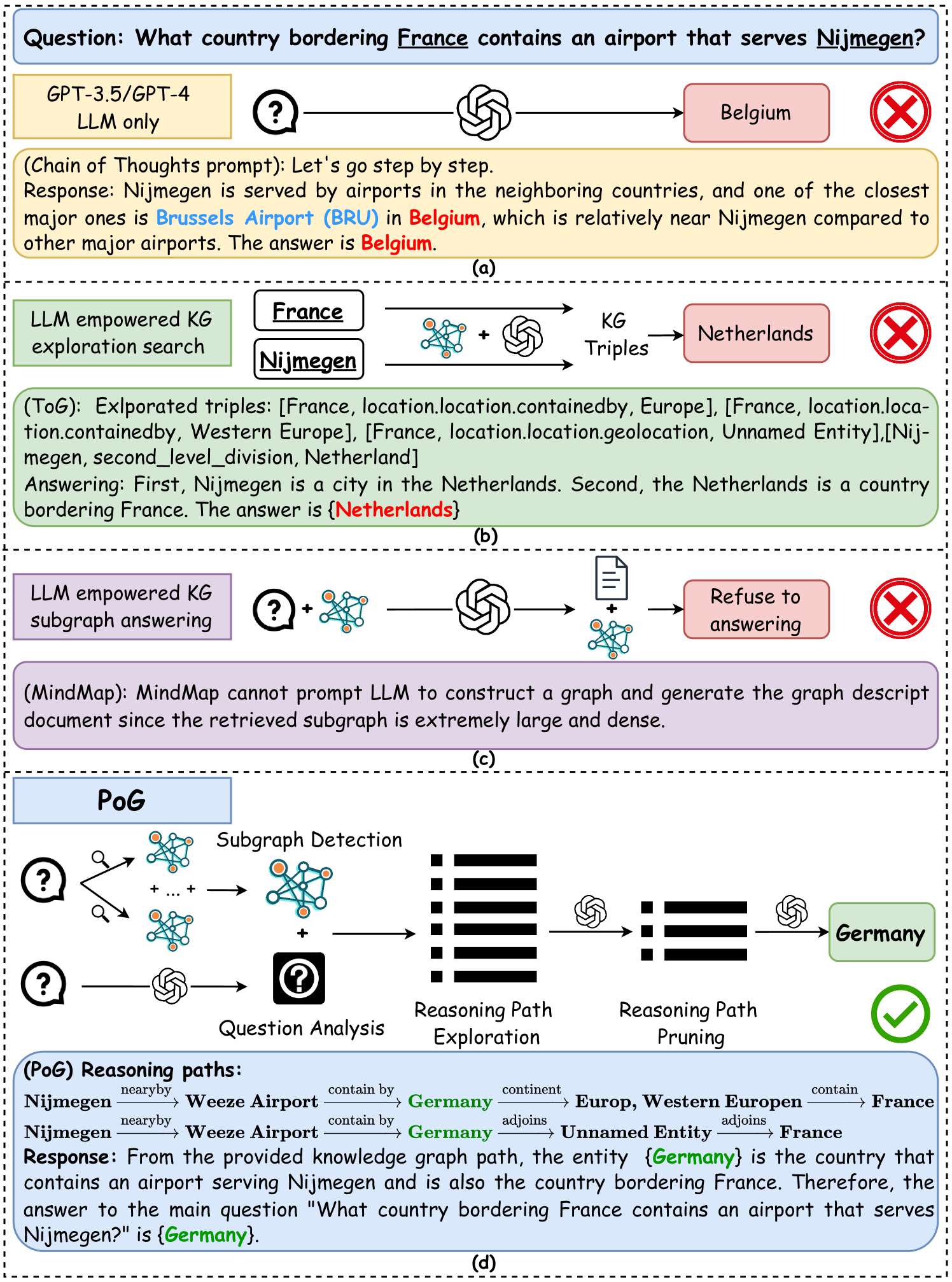

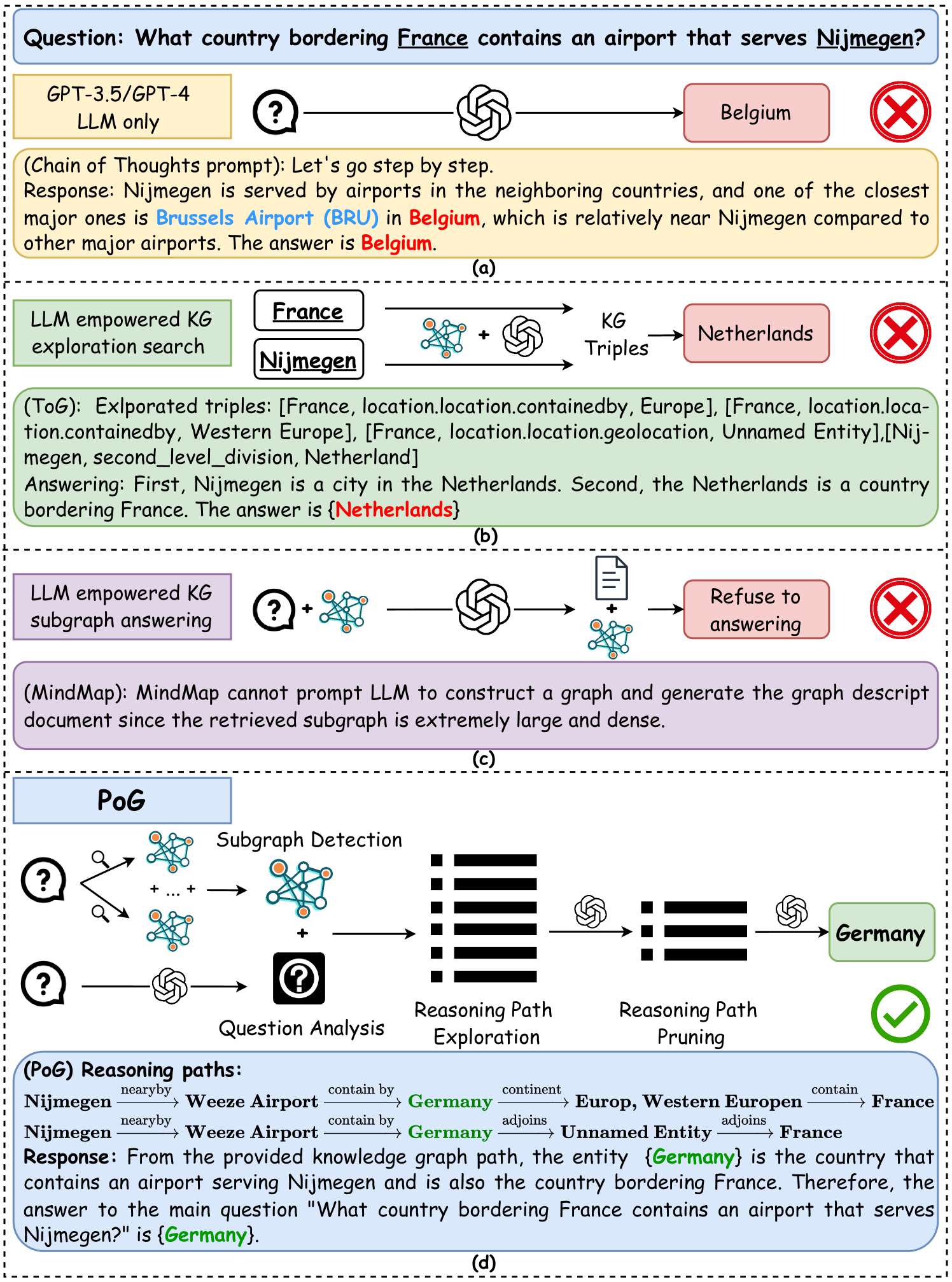

## Diagram: Comparison of AI Reasoning Methods for a Multi-Hop Question

### Overview

The image is a technical diagram comparing four different artificial intelligence approaches to answering a complex, multi-hop reasoning question. The central question posed is: **"What country bordering France contains an airport that serves Nijmegen?"** The diagram is divided into four horizontal panels, labeled (a) through (d), each illustrating a different method's process and final answer. Three methods fail (marked with a red "X"), while one succeeds (marked with a green checkmark).

### Components/Axes

The diagram is structured as a vertical sequence of four panels. Each panel contains:

1. **A method label** (e.g., "GPT-3.5/GPT-4 LLM only").

2. **A flowchart** using icons (question mark, brain/LLM icon, network/KG icon, document icon) and arrows to represent the process flow.

3. **A text box** explaining the method's reasoning or output.

4. **A final answer box** (colored red for incorrect, green for correct) and a status icon (red "X" or green checkmark).

### Detailed Analysis

#### **Panel (a): GPT-3.5/GPT-4 LLM only**

* **Process Flow:** A question mark icon points directly to an LLM (brain) icon, which outputs the answer "Belgium".

* **Reasoning Text (Chain of Thoughts prompt):** "Let's go step by step. Response: Nijmegen is served by airports in the neighboring countries, and one of the closest major ones is **Brussels Airport (BRU)** in **Belgium**, which is relatively near Nijmegen compared to other major airports. The answer is **Belgium**."

* **Final Answer:** "Belgium" (in a red box).

* **Status:** Incorrect (Red "X").

#### **Panel (b): LLM empowered KG exploration search**

* **Process Flow:** The entities "France" and "Nijmegen" are input. They are processed by a combination of a Knowledge Graph (KG) icon and an LLM icon, generating "KG Triples". This leads to the answer "Netherlands".

* **Reasoning Text (KG triples):** "[France, location.location.containedby, Europe], [France, location.location.containedby, Western Europe], [France, location.location.geolocation, Unnamed Entity], [Nijmegen, second_level_division, Netherland] Answering: First, Nijmegen is a city in the Netherlands. Second, the Netherlands is a country bordering France. The answer is **{Netherlands}**."

* **Final Answer:** "Netherlands" (in a red box).

* **Status:** Incorrect (Red "X").
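
A minimal sketch of why the triples in panel (b) cannot support the stated answer, assuming the triples are stored as plain `(head, relation, tail)` tuples. The helper `has_relation` and the keywords `adjoins` and `airport` are hypothetical and serve only as illustration; they are not taken from the method shown in the diagram.

```python
# Triples transcribed from panel (b); each tuple is (head, relation, tail).
triples = [
    ("France", "location.location.containedby", "Europe"),
    ("France", "location.location.containedby", "Western Europe"),
    ("France", "location.location.geolocation", "Unnamed Entity"),
    ("Nijmegen", "second_level_division", "Netherland"),
]


def has_relation(triples, keyword):
    """Return True if any triple's relation name contains `keyword`."""
    return any(keyword in rel for _, rel, _ in triples)


# Hypothetical keywords for the two kinds of evidence the question needs.
needs = {"border": "adjoins", "airport": "airport"}
support = {name: has_relation(triples, kw) for name, kw in needs.items()}
print(support)  # {'border': False, 'airport': False}
```

Neither constraint of the question is backed by a retrieved triple, so the "bordering France" step in the answer comes from the LLM alone.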

#### **Panel (c): LLM empowered KG subgraph answering**

* **Process Flow:** A question mark and a KG icon are input to an LLM. The output is a document icon combined with a KG icon, leading to the result "Refuse to answering".

* **Reasoning Text (MindMap):** "MindMap cannot prompt LLM to construct a graph and generate the graph descript document since the retrieved subgraph is extremely large and dense."

* **Final Answer:** "Refuse to answering" (in a red box).

* **Status:** Incorrect (Red "X").
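
The diagram does not say how "extremely large and dense" is measured. As a rough illustration only, the sketch below computes the size and directed density of a set of triples and applies a made-up triple budget. The functions `graph_stats` and `too_large` and the `max_triples` threshold are assumptions, not part of MindMap.

```python
def graph_stats(triples):
    """Count distinct entities and edges, and compute directed density."""
    nodes = {h for h, _, _ in triples} | {t for _, _, t in triples}
    edges = {(h, t) for h, _, t in triples if h != t}
    n = len(nodes)
    # Directed density: edges present divided by edges possible.
    density = len(edges) / (n * (n - 1)) if n > 1 else 0.0
    return n, len(edges), density


def too_large(triples, max_triples=50):
    """Hypothetical guard: flag subgraphs that exceed a triple budget."""
    return len(triples) > max_triples


# Toy dense subgraph: every entity is linked to every other entity.
entities = [f"e{i}" for i in range(10)]
dense = [(a, "related_to", b) for a in entities for b in entities if a != b]

print(graph_stats(dense))  # (10, 90, 1.0)
print(too_large(dense))    # True
```

Even ten fully connected entities produce 90 triples, which suggests how quickly a dense subgraph can outgrow what a single prompt can describe.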

#### **Panel (d): PoG (Path of Graph)**

* **Process Flow:** This is the most complex flowchart.

1. **Subgraph Detection:** Multiple question marks and magnifying glasses point to multiple KG icons, which are combined into a single, denser KG icon.

2. **Question Analysis:** A separate question mark goes through an LLM icon to a "Question Analysis" box (a question mark inside a square).

3. The outputs of Subgraph Detection and Question Analysis are combined.

4. **Reasoning Path Exploration:** The combined data is processed, represented by a series of horizontal black bars (suggesting multiple paths).

5. **Reasoning Path Pruning:** An LLM icon acts on the paths, reducing them to a shorter set of bars.

6. A final LLM icon processes the pruned paths to output the answer "Germany".

* **Reasoning Text (PoG Reasoning paths):**

* **Path 1:** `Nijmegen --(nearby)--> Weeze Airport --(contain by)--> Germany --(continent)--> Europe, Western Europe --(contain)--> France`

* **Path 2:** `Nijmegen --(nearby)--> Weeze Airport --(contain by)--> Germany --(adjoins)--> Unnamed Entity --(adjoins)--> France`

* **Response:** "From the provided knowledge graph path, the entity **{Germany}** is the country that contains an airport serving Nijmegen and is also the country bordering France. Therefore, the answer to the main question 'What country bordering France contains an airport that serves Nijmegen?' is **{Germany}**."

* **Final Answer:** "Germany" (in a green box).

* **Status:** Correct (Green checkmark).
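
The exploration step in panel (d) can be approximated by enumerating paths between the two question entities. The sketch below is a plain breadth-first search over a toy edge list transcribed from the two paths above. It is an assumption-laden illustration, not the PoG algorithm itself: the function `find_paths`, the `max_hops` limit, and the single distractor edge are all hypothetical.

```python
from collections import deque

# Toy edges transcribed from the two paths in panel (d), plus one distractor.
# The continent hop is simplified to a single "Europe" node.
edges = [
    ("Nijmegen", "nearby", "Weeze Airport"),
    ("Weeze Airport", "contain by", "Germany"),
    ("Germany", "adjoins", "Unnamed Entity"),
    ("Unnamed Entity", "adjoins", "France"),
    ("Germany", "continent", "Europe"),
    ("Europe", "contain", "France"),
    ("Nijmegen", "second_level_division", "Netherland"),  # dead end here
]


def find_paths(edges, start, goal, max_hops=4):
    """Breadth-first enumeration of simple paths from start to goal."""
    out = {}
    for h, r, t in edges:
        out.setdefault(h, []).append((r, t))
    paths, queue = [], deque([(start, [])])
    while queue:
        node, path = queue.popleft()
        if node == goal:
            paths.append(path)
            continue
        if len(path) == max_hops:
            continue  # hop budget exhausted
        visited = {start} | {t for _, _, t in path}
        for r, t in out.get(node, []):
            if t not in visited:
                queue.append((t, path + [(node, r, t)]))
    return paths


for p in find_paths(edges, "Nijmegen", "France"):
    print(" -> ".join([p[0][0]] + [f"({r}) {t}" for _, r, t in p]))
```

On this toy graph the search returns two four-hop paths, both passing through Weeze Airport and Germany, while the `Netherland` branch is dropped because it never reaches France.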

### Key Observations

1. **Question Complexity:** The question requires connecting three entities (Nijmegen, an airport, a country) and verifying a geographical relationship (bordering France). This makes it a challenging multi-hop reasoning task.

2. **Method Failure Modes:**

* **LLM-only (a):** Fails due to a plausible but incorrect assumption (that Brussels is the closest major airport). It lacks factual grounding.

* **KG Exploration (b):** Fails because the retrieved triples are correct but incomplete: none of them mentions an airport or a border relation. The method correctly places Nijmegen in the Netherlands, then concludes without supporting evidence that the Netherlands borders France.

* **KG Subgraph (c):** Fails due to computational or representational overload; the retrieved knowledge subgraph is too large and dense for the method to process effectively.

3. **PoG Success (d):** The PoG method succeeds by explicitly modeling and pruning reasoning paths. It finds the correct chain: Nijmegen -> Weeze Airport (in Germany) -> Germany (borders France). The "Pruning" step is critical for filtering out irrelevant or incorrect paths.

### Interpretation

This diagram serves as a comparative analysis of AI reasoning architectures, highlighting the limitations of pure LLMs and basic knowledge graph integration for complex, multi-constraint questions. It argues for the superiority of a structured, path-based approach (PoG) that combines subgraph detection, explicit question analysis, and iterative reasoning path exploration and pruning.

The core insight is that answering such questions isn't just about retrieving facts (like "Nijmegen is in the Netherlands") but about constructing and validating a *chain of relationships* that satisfies all constraints of the query. The PoG method's success demonstrates the importance of:

1. **Structured Exploration:** Systematically generating potential reasoning paths from a knowledge graph.

2. **Constraint Verification:** Explicitly checking each path against all conditions in the question (e.g., "contains an airport that serves Nijmegen" AND "borders France").

3. **Pruning:** Eliminating paths that are incomplete, irrelevant, or lead to contradictions.
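
Constraint verification and pruning can be sketched as simple rule checks over candidate paths. In the diagram the pruning is done by an LLM, so the rule-based version below is a stand-in under stated assumptions: the helpers `serves_airport`, `borders`, and `verify` are hypothetical, and a chain of `adjoins` hops through an unnamed entity is treated as border evidence, as in Path 2.

```python
# Candidate reasoning paths as lists of (head, relation, tail) hops.
# Hops are transcribed from panels (b) and (d), with names as shown there.
candidates = {
    "Netherland": [
        [("Nijmegen", "second_level_division", "Netherland")],
    ],
    "Germany": [
        [("Nijmegen", "nearby", "Weeze Airport"),
         ("Weeze Airport", "contain by", "Germany"),
         ("Germany", "adjoins", "Unnamed Entity"),
         ("Unnamed Entity", "adjoins", "France")],
    ],
}


def serves_airport(path, city, country):
    """City links to an airport, and that airport links to the country."""
    airports = {t for h, _, t in path if h == city and "Airport" in t}
    return any(h in airports and t == country for h, _, t in path)


def borders(path, country, other):
    """The path's 'adjoins' hops start at `country` and end at `other`."""
    chain = [hop for hop in path if hop[1] == "adjoins"]
    return bool(chain) and chain[0][0] == country and chain[-1][2] == other


def verify(country, paths):
    """Keep only the paths that satisfy both constraints of the question."""
    return [p for p in paths
            if serves_airport(p, "Nijmegen", country)
            and borders(p, country, "France")]


answers = [c for c, paths in candidates.items() if verify(c, paths)]
print(answers)  # ['Germany']
```

The `Netherland` candidate is pruned because its only path carries neither an airport hop nor border evidence, while the `Germany` path satisfies both checks.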

The incorrect answer "Netherlands" in panel (b) is particularly instructive: it shows how a system can be partially correct yet ultimately wrong. The method locates Nijmegen correctly but asserts the border with France without any supporting triple and never addresses the airport constraint. The diagram implicitly critiques methods that perform shallow retrieval or lack mechanisms for holistic path validation.
