
## Diagram: Agent Architecture with Self-Reflection

### Overview

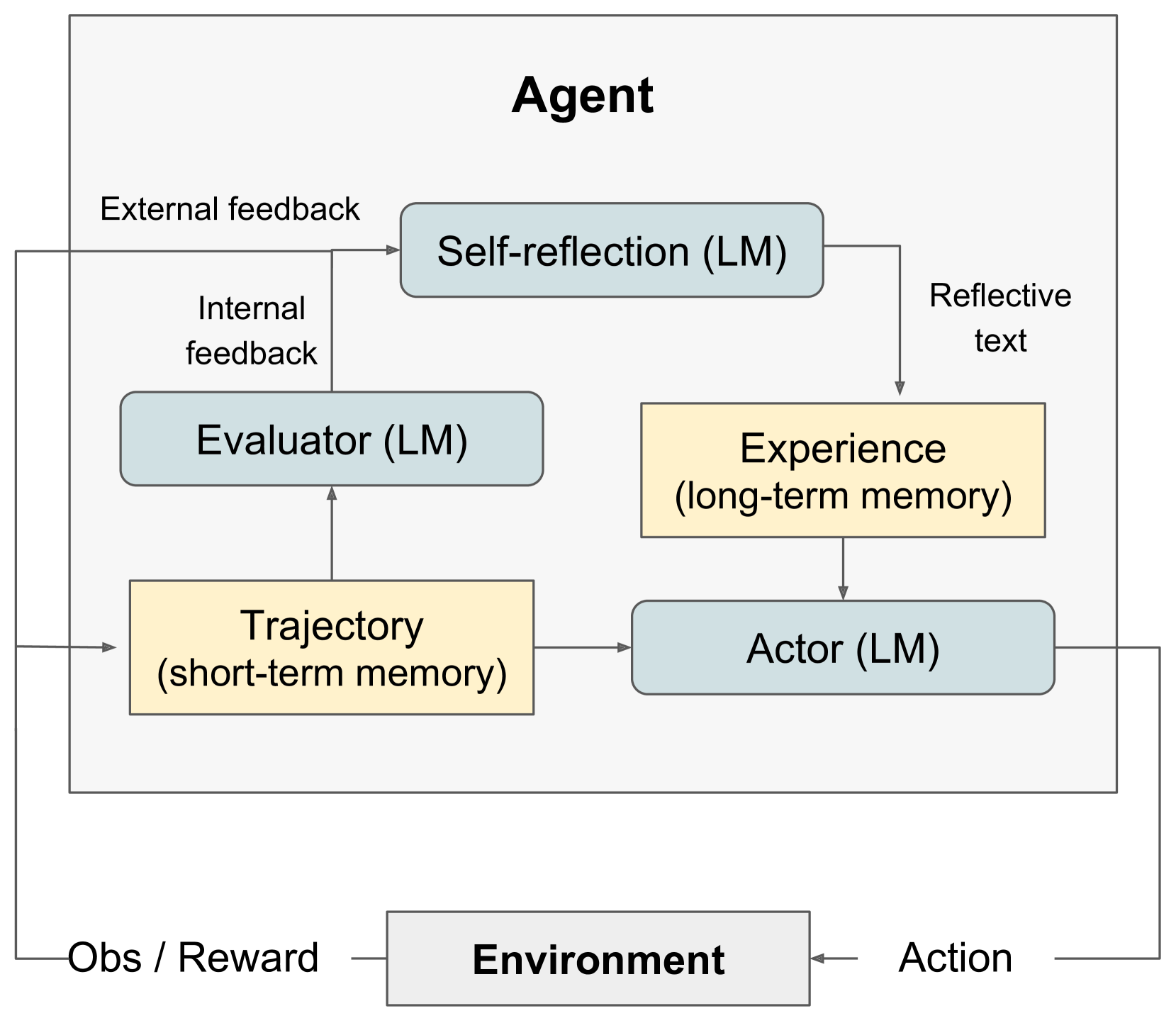

The diagram shows an agent architecture with self-reflection in which three modules (Self-reflection, Evaluator, and Actor) are language models (LMs). The agent interacts with an "Environment" and contains internal modules for evaluation, action, and memory. Two feedback signals are labeled: "Internal feedback" and "External feedback".

### Components

The diagram consists of the following components:

* **Agent:** The overarching system.

* **Environment:** The external world the agent interacts with.

* **Self-reflection (LM):** Receives "External feedback" and generates "Reflective text".

* **Evaluator (LM):** Receives "Internal feedback" and provides input to "Trajectory".

* **Trajectory (short-term memory):** Receives input from "Evaluator" and provides input to "Actor".

* **Actor (LM):** Receives input from "Trajectory" and generates "Action".

* **Experience (long-term memory):** Receives "Reflective text" from "Self-reflection" and provides input to "Actor".

The following inputs/outputs are labeled:

* **Obs / Reward:** Input from the "Environment" to "Evaluator" and "Trajectory".

* **Action:** Output from "Actor" to the "Environment".

* **External feedback:** Input to "Self-reflection".

* **Internal feedback:** Input to "Evaluator".

* **Reflective text:** Output from "Self-reflection" to "Experience".
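The components and labeled connections listed above can be written down as a small edge list, which makes it easy to check what feeds each module. This is only a sketch of the description as given: the names `EDGES` and `inputs_of` are illustrative, and `None` marks a source or label that the description does not state.

```python
# (source, target, label); None marks a source or label left unstated
# in the description of the diagram.
EDGES = [
    ("Actor", "Environment", "Action"),
    ("Environment", "Evaluator", "Obs / Reward"),
    ("Environment", "Trajectory", "Obs / Reward"),
    ("Evaluator", "Trajectory", None),
    ("Trajectory", "Actor", None),
    (None, "Self-reflection", "External feedback"),
    (None, "Evaluator", "Internal feedback"),
    ("Self-reflection", "Experience", "Reflective text"),
    ("Experience", "Actor", None),
]


def inputs_of(node):
    """Return the known sources feeding `node`, sorted for stable output."""
    return sorted(src for src, dst, _ in EDGES if dst == node and src is not None)


print(inputs_of("Actor"))  # ['Experience', 'Trajectory']
```

Listing the edges this way also makes the gaps visible: the description names the two feedback signals but not where they originate.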

### Detailed Analysis

The diagram shows a cyclical flow of information.

1. The "Actor" generates an "Action" which is sent to the "Environment".

2. The "Environment" returns "Obs / Reward" to both the "Evaluator" and "Trajectory".

3. The "Evaluator" receives "Internal feedback" and provides input to the "Trajectory".

4. The "Trajectory" (short-term memory) passes information to the "Actor".

5. The "Self-reflection" module receives "External feedback" and generates "Reflective text" which is stored in the "Experience" (long-term memory).

6. The "Experience" module then provides input to the "Actor".

The "(LM)" label marks a module that uses a language model. Arrows indicate the direction of information flow. A horizontal line visually separates the "Agent" from the "Environment". The "Trajectory" and "Actor" sit inside a light gray rectangle that groups them.
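The numbered flow above can be sketched as a single loop iteration. This is a minimal sketch under stated assumptions, not the implementation behind the diagram: the class `Agent`, its `step` method, and `toy_env` are hypothetical names, the three LM modules are replaced by plain callables, and both feedback signals are passed in as arguments because the description does not say where they come from.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Agent:
    # Hypothetical stand-ins for the three "(LM)" modules.
    actor: Callable[[List[str], List[str]], str]   # (trajectory, experience) -> action
    evaluator: Callable[[str, float, str], str]    # (obs, reward, internal feedback) -> note
    reflect: Callable[[str], str]                  # external feedback -> reflective text
    trajectory: List[str] = field(default_factory=list)  # short-term memory
    experience: List[str] = field(default_factory=list)  # long-term memory

    def step(self, env_step, internal_feedback: str, external_feedback: str) -> str:
        # Steps 4 and 6: Trajectory and Experience both feed the Actor.
        action = self.actor(self.trajectory, self.experience)
        # Steps 1 and 2: the action goes out, Obs / Reward comes back.
        obs, reward = env_step(action)
        self.trajectory.append(f"obs={obs} reward={reward}")
        # Step 3: the Evaluator's output is added to the Trajectory.
        self.trajectory.append(self.evaluator(obs, reward, internal_feedback))
        # Step 5: reflective text is stored in Experience.
        self.experience.append(self.reflect(external_feedback))
        return action


def toy_env(action: str) -> Tuple[str, float]:
    """Assumed toy environment: only the action "go" earns a reward."""
    return f"saw:{action}", 1.0 if action == "go" else 0.0


agent = Agent(
    actor=lambda traj, exp: "go" if exp else "wait",
    evaluator=lambda obs, reward, fb: f"eval(reward={reward}, feedback={fb})",
    reflect=lambda fb: f"lesson: {fb}",
)
first = agent.step(toy_env, "none", "waiting earned no reward")
second = agent.step(toy_env, "none", "going earned a reward")
print(first, second)  # wait go
```

In this toy run the actor changes its action only because long-term memory is no longer empty on the second step, which is the role the diagram assigns to "Experience".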

### Key Observations

The diagram highlights the importance of feedback loops in the agent's learning process. The inclusion of "Self-reflection" and "Experience" suggests a focus on meta-cognition and long-term learning. The use of Language Models (LMs) in multiple components indicates a reliance on natural language processing capabilities. The separation of short-term ("Trajectory") and long-term ("Experience") memory is a key architectural feature.

### Interpretation

This diagram illustrates an agent architecture designed for continuous learning and improvement. The self-reflection component, coupled with long-term memory, lets the agent analyze past experience and refine its behavior. Because several modules are language models, the agent can process and generate natural language, which may enable more complex reasoning and communication. The cyclical flow of information emphasizes the role of feedback in learning, and the design appears to draw on cognitive architectures that aim to mimic aspects of human learning and decision-making. The diagram is a conceptual representation of the agent's internal workings; it provides no quantitative data or performance metrics.