## Diagram: Knowledge Distillation Strategies

### Overview

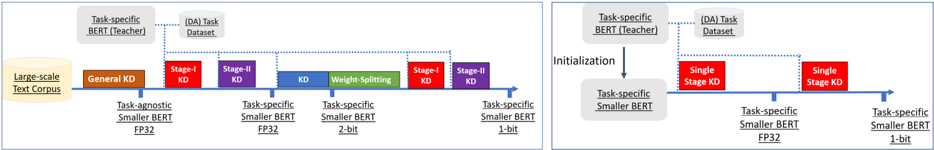

The image presents two diagrams illustrating different knowledge distillation strategies for BERT models. The left diagram shows a multi-stage knowledge distillation process, while the right diagram depicts a single-stage approach. Both diagrams highlight the transfer of knowledge from a larger "teacher" BERT model to a smaller "student" BERT model.

### Components/Axes

**Left Diagram:**

* **Horizontal Axis:** Represents the progression of knowledge distillation stages.

* **Labels along the axis:**
    * "Task-agnostic Smaller BERT FP32"
    * "Task-specific Smaller BERT FP32"
    * "Task-specific Smaller BERT 2-bit"
    * "Task-specific Smaller BERT 1-bit"

* **Data Source:** "Large-scale Text Corpus" (represented as a cylinder on the left)

* **Teacher Model:** "Task-specific BERT (Teacher)" (top-left, in a rounded rectangle)

* **Task Dataset:** "(DA) Task Dataset" (top-center, in a rounded rectangle)

* **Knowledge Distillation Stages (represented as colored rectangles):**
    * "General KD" (brown)
    * "Stage-I KD" (red)
    * "Stage-II KD" (purple)
    * "KD Weight-Splitting" (green)
    * "Stage-I KD" (red)
    * "Stage-II KD" (purple)

**Right Diagram:**

* **Vertical Arrow:** "Initialization" (indicating that the student model is initialized from the teacher model)

* **Teacher Model:** "Task-specific BERT (Teacher)" (top-left, in a rounded rectangle)

* **Student Model:** "Task-specific Smaller BERT" (below the teacher model, in a rounded rectangle)

* **Horizontal Axis:** Represents the progression of knowledge distillation stages.

* **Labels along the axis:**
    * "Task-specific Smaller BERT FP32"
    * "Task-specific Smaller BERT 1-bit"

* **Knowledge Distillation Stages (represented as colored rectangles):**
    * "Single Stage KD" (red)
    * "Single Stage KD" (red)

### Detailed Analysis

**Left Diagram:**

1. **Data Source:** The process begins with a "Large-scale Text Corpus."

2. **General KD:** The first stage involves "General KD," resulting in a "Task-agnostic Smaller BERT FP32."

3. **Multi-Stage KD:** Subsequent stages involve "Stage-I KD," "Stage-II KD," "KD Weight-Splitting," "Stage-I KD," and "Stage-II KD," leading to increasingly compressed models: "Task-specific Smaller BERT FP32," "Task-specific Smaller BERT 2-bit," and finally "Task-specific Smaller BERT 1-bit."

4. **Teacher and Dataset:** The "Task-specific BERT (Teacher)" and "(DA) Task Dataset" are connected to the KD stages via dotted lines, indicating their role in guiding the distillation process.
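
The 1-bit end point of this pipeline can be illustrated with a minimal sketch. The diagram does not say how weights are quantized, so the scheme below is an assumption: each weight is replaced by its sign times a single scaling factor, here the mean absolute value of the weights. The helper name `binarize` is hypothetical.

```python
def binarize(weights):
    """Map FP32 weights to two values, -alpha and +alpha (1 bit per weight).

    Assumed scheme, not taken from the diagram: alpha is the mean absolute
    value of the weights, and each weight keeps only its sign.
    """
    alpha = sum(abs(w) for w in weights) / len(weights)
    quantized = [alpha if w >= 0 else -alpha for w in weights]
    return quantized, alpha


fp32_weights = [0.5, -1.5, 1.0, -1.0]
binary_weights, scale = binarize(fp32_weights)
print(binary_weights, scale)
```

After this step each weight needs only one bit for its sign, plus one shared FP32 scale per weight group, which is why a 1-bit student is so much smaller than its FP32 counterpart.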

**Right Diagram:**

1. **Initialization:** The "Task-specific Smaller BERT" is initialized from the "Task-specific BERT (Teacher)."

2. **Single-Stage KD:** Two "Single Stage KD" steps are shown, resulting in "Task-specific Smaller BERT FP32" and "Task-specific Smaller BERT 1-bit."

3. **Teacher and Dataset:** The "Task-specific BERT (Teacher)" and "(DA) Task Dataset" are connected to the KD stages via dotted lines, indicating their role in guiding the distillation process.

### Key Observations

* The left diagram illustrates a more complex, multi-stage knowledge distillation process, while the right diagram shows a simpler, single-stage approach.

* Both diagrams aim to compress a larger "teacher" BERT model into a smaller "student" BERT model, and both reduce the student's numerical precision from FP32 to 1-bit.

* The "Task-specific BERT (Teacher)" and "(DA) Task Dataset" feed into the KD stages in both distillation strategies.

### Interpretation

The diagrams contrast two strategies for knowledge distillation, a technique that transfers knowledge from a large, complex model (the teacher) to a smaller, more efficient model (the student). The multi-stage approach (left) lowers precision gradually, passing through FP32 and 2-bit intermediate models before reaching 1-bit. This gives finer-grained control over the distillation process and may lead to better performance in some cases. The single-stage approach (right) uses fewer steps and has no 2-bit intermediate model, so it is simpler to run and may be more suitable when computational resources for training are limited. The progression from FP32 to 1-bit models indicates a focus on extreme model compression, likely for deployment on resource-constrained devices. Because both pipelines draw on a "Task-specific BERT (Teacher)" and a "(DA) Task Dataset", the distillation is tailored to a specific task, which can improve the smaller model's performance on that task.
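
The diagrams do not specify the distillation objective used in any KD stage. As a hedged illustration of how a teacher can guide a student, the sketch below uses one common soft-label formulation: the KL divergence between temperature-softened teacher and student output distributions, scaled by the squared temperature. The function names, the example logits, and the default temperature are all assumptions made for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(teacher_logits, student_logits, temperature=2.0):
    """Soft-label distillation loss: T^2 * KL(teacher || student).

    Assumed objective for illustration; the diagram does not state
    which loss its KD stages use.
    """
    p = softmax(teacher_logits, temperature)  # teacher distribution
    q = softmax(student_logits, temperature)  # student distribution
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [2.0, 0.5, -1.0]          # hypothetical teacher logits
close_student = [1.8, 0.6, -0.9]    # student that roughly agrees
far_student = [-1.0, 0.5, 2.0]      # student that disagrees
print(kd_loss(teacher, close_student), kd_loss(teacher, far_student))
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge, so minimizing it pulls the student's predictions toward the teacher's.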