## Scatter Plot: OlymMATH EN Accuracy vs. AIME24 Accuracy

### Overview

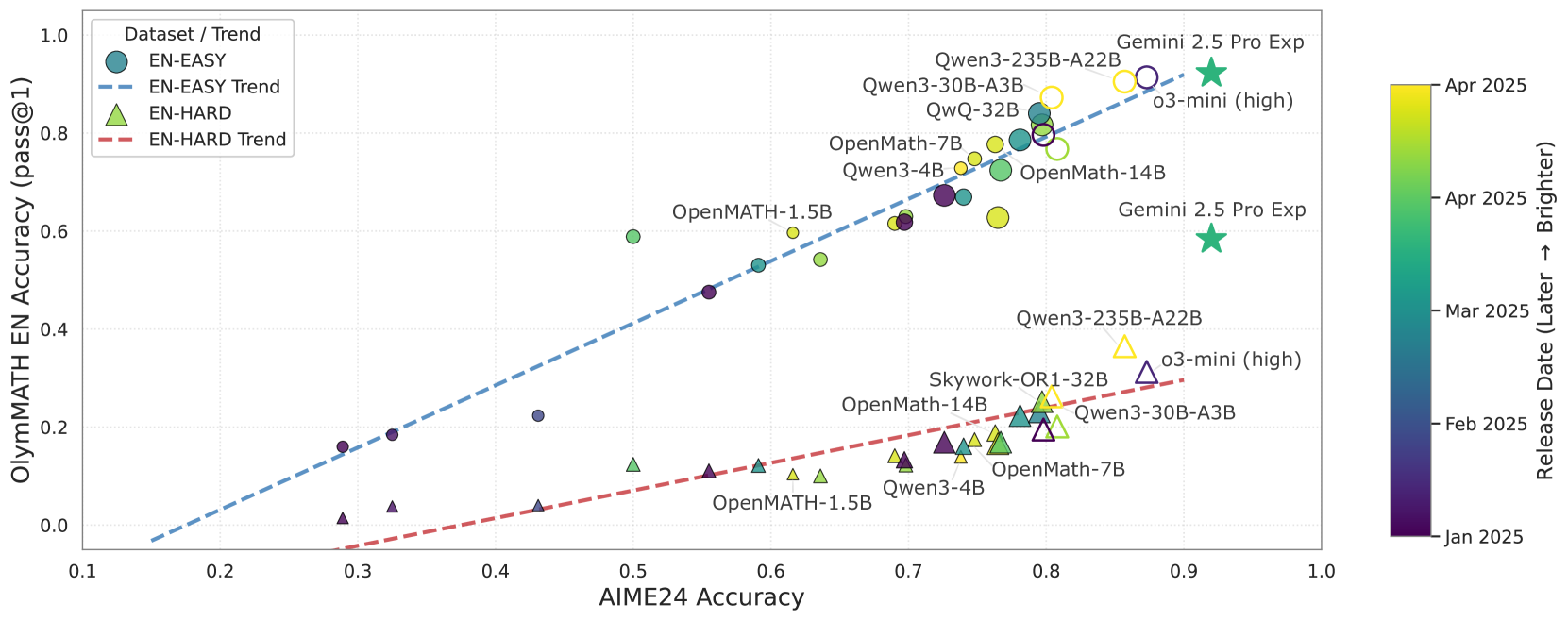

The image is a scatter plot comparing the accuracy of various language models on two benchmarks: OlymMATH EN (English) and AIME24. Each data point is colored by the model's release date (January 2025 to April 2025), and dashed trend lines are drawn for the "EN-EASY" and "EN-HARD" series.

### Components/Axes

* **X-axis:** AIME24 Accuracy, ranging from 0.1 to 1.0 in increments of 0.1.

* **Y-axis:** OlymMATH EN Accuracy (pass@1), ranging from 0.0 to 1.0 in increments of 0.2.

* **Legend (Top-Left):**

  * EN-EASY (Blue Circle)

  * EN-EASY Trend (Blue Dashed Line)

  * EN-HARD (Green Triangle)

  * EN-HARD Trend (Red Dashed Line)

* **Color Bar (Right):** Represents the release date, ranging from Jan 2025 (dark purple) to Apr 2025 (yellow). The color bar is labeled "Release Date (Later -> Brighter)".

* **Data Points:** Each data point represents a language model and is labeled with its name (e.g., "Qwen3-235B-A22B", "OpenMath-7B"). The plot does not explain what, if anything, marker size encodes.
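As a rough illustration of how a release date could map onto the color bar, the sketch below normalizes a date to a position between 0 (darkest) and 1 (brightest). It assumes a linear scale and assumes the endpoints are 1 January 2025 and 30 April 2025; the plot labels the ends only as "Jan 2025" and "Apr 2025", so the exact endpoints and the helper name `colorbar_position` are hypothetical.

```python
from datetime import date

# Assumed endpoints: the plot labels only "Jan 2025" and "Apr 2025".
START = date(2025, 1, 1)
END = date(2025, 4, 30)


def colorbar_position(release: date) -> float:
    """Linearly map a release date to [0, 1]; later dates give larger (brighter) values."""
    span_days = (END - START).days
    fraction = (release - START).days / span_days
    # Clamp dates that fall outside the assumed range.
    return min(1.0, max(0.0, fraction))


# Example: a model released on 1 March 2025 lands near the middle of the bar.
print(colorbar_position(date(2025, 3, 1)))
```

Under these assumptions, a mid-range value corresponds to the greenish middle of a purple-to-yellow color bar, while values near 1 correspond to yellow.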

### Detailed Analysis or Content Details

**EN-EASY Dataset (Blue Circles and Dashed Line):**

* Trend: The EN-EASY trend line slopes upward, indicating a positive correlation between AIME24 accuracy and OlymMATH EN accuracy.

* Data Points:

  * At AIME24 Accuracy ~0.3, OlymMATH EN Accuracy is ~0.2

  * At AIME24 Accuracy ~0.6, OlymMATH EN Accuracy is ~0.6

  * At AIME24 Accuracy ~0.8, OlymMATH EN Accuracy is ~0.8

  * At AIME24 Accuracy ~0.9, OlymMATH EN Accuracy is ~0.9

**EN-HARD Dataset (Green Triangles and Red Dashed Line):**

* Trend: The EN-HARD trend line also slopes upward, but at a shallower angle than the EN-EASY trend line.

* Data Points:

  * At AIME24 Accuracy ~0.3, OlymMATH EN Accuracy is ~0.0

  * At AIME24 Accuracy ~0.6, OlymMATH EN Accuracy is ~0.1

  * At AIME24 Accuracy ~0.8, OlymMATH EN Accuracy is ~0.2
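The approximate readings above can be turned into rough slope estimates with an ordinary least-squares fit. This is a minimal sketch, assuming the eyeballed coordinates listed above stand in for the real data; they are read off the plot, not taken from the underlying results, and `fit_line` is a hypothetical helper name. The fitted lines are therefore not the trend lines drawn in the figure.

```python
def fit_line(points):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Approximate (AIME24 accuracy, OlymMATH EN accuracy) readings from the lists above.
EN_EASY = [(0.3, 0.2), (0.6, 0.6), (0.8, 0.8), (0.9, 0.9)]
EN_HARD = [(0.3, 0.0), (0.6, 0.1), (0.8, 0.2)]

easy_slope, easy_intercept = fit_line(EN_EASY)
hard_slope, hard_intercept = fit_line(EN_HARD)

print(f"EN-EASY slope: {easy_slope:.2f}")
print(f"EN-HARD slope: {hard_slope:.2f}")
```

On these readings the EN-EASY slope comes out near 1.17 and the EN-HARD slope near 0.39, which is consistent with the shallower EN-HARD trend described above.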

**Specific Data Points (Examples):**

* "Gemini 2.5 Pro Exp" (star marker) has an AIME24 accuracy of approximately 0.92 and an OlymMATH EN accuracy of approximately 0.73. Its fill color is yellow, indicating a release date of approximately April 2025.

* "OpenMath-1.5B" (circle marker) has an AIME24 accuracy of approximately 0.55 and an OlymMATH EN accuracy of approximately 0.6. Its fill color is green, indicating a release date of approximately March 2025.

* "Qwen3-4B" (triangle marker) has an AIME24 accuracy of approximately 0.7 and an OlymMATH EN accuracy of approximately 0.05. Its fill color is dark purple, indicating a release date of approximately January 2025.

**Other Models:**

* Qwen3-235B-A22B

* Qwen3-30B-A3B

* QwQ-32B

* OpenMath-7B

* OpenMath-14B

* Skywork-OR1-32B

* o3-mini (high)

### Key Observations

* There is a positive correlation between AIME24 accuracy and OlymMATH EN accuracy for both EN-EASY and EN-HARD datasets.

* The EN-EASY dataset generally shows higher OlymMATH EN accuracy for a given AIME24 accuracy compared to the EN-HARD dataset.

* Models released later (closer to April 2025) tend to have higher accuracy on both benchmarks.

* The "Gemini 2.5 Pro Exp" model appears to be an outlier, with high accuracy on both benchmarks.
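The gap between the two series can be made concrete from the approximate readings in the Detailed Analysis section. The sketch below is illustrative only: it uses the eyeballed values at the three AIME24 levels reported for both series, and the dictionary names are hypothetical.

```python
# Approximate OlymMATH EN accuracy at shared AIME24 accuracy levels,
# as read from the plot (not the underlying data).
en_easy = {0.3: 0.2, 0.6: 0.6, 0.8: 0.8}
en_hard = {0.3: 0.0, 0.6: 0.1, 0.8: 0.2}

# Difference (EN-EASY minus EN-HARD) at each shared AIME24 level.
gaps = {aime: round(en_easy[aime] - en_hard[aime], 2) for aime in en_easy}

for aime, gap in gaps.items():
    print(f"AIME24 ~{aime}: EN-EASY exceeds EN-HARD by ~{gap}")
```

On these readings the gap grows from about 0.2 at an AIME24 accuracy of 0.3 to about 0.6 at 0.8, so the two series diverge as AIME24 accuracy rises.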

### Interpretation

The scatter plot suggests that accuracy on AIME24 and on OlymMATH EN rises together, and the color gradient indicates that later-released models tend to score higher on both. The gap between the EN-EASY and EN-HARD series shows that problem difficulty strongly affects accuracy: by the approximate readings above, a model near 0.8 on AIME24 scores about 0.8 on EN-EASY but only about 0.2 on EN-HARD. "Gemini 2.5 Pro Exp" stands apart from most other models, potentially because of architectural or training differences, although the plot itself gives no evidence about the cause. Marker size is not explained and could encode another variable.