## Screenshot: FlowForge Interface for Peer-Review Simulation

### Overview

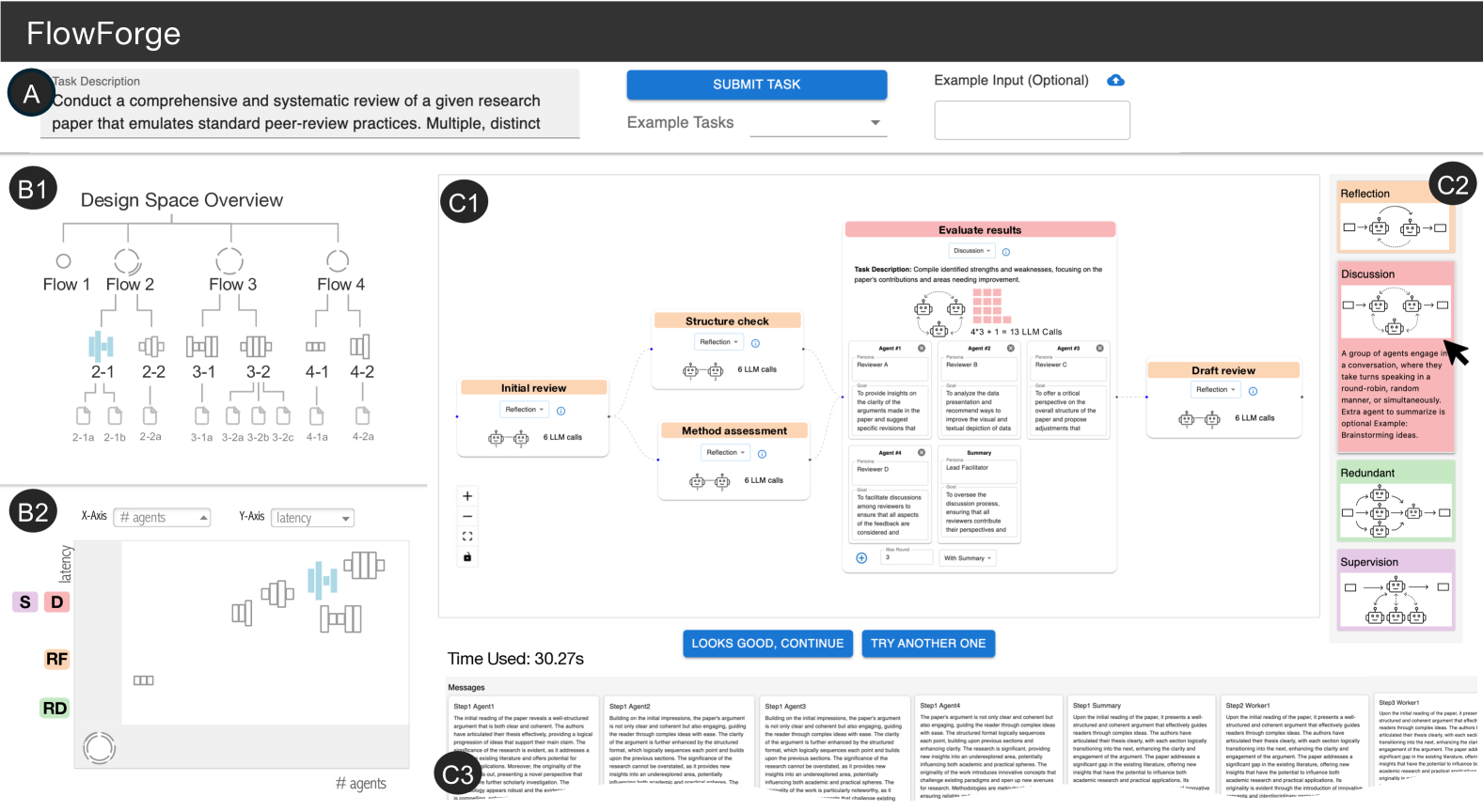

This image depicts a web-based interface for "FlowForge," a tool designed to simulate multi-agent peer-review processes for academic research papers. Its main elements are a task description field, a design space overview with a flow diagram, a latency/agent chart, a workflow diagram, and an agent interaction log.

### Components/Axes

1. **Task Configuration Section**

   - **Task Description**: "Conduct a comprehensive and systematic review of a given research paper that emulates standard peer-review practices. Multiple, distinct [...]" (the remainder of the field is not recoverable from the transcription)

   - **Buttons**:

     - Blue "SUBMIT TASK" button

     - "Example Tasks" dropdown menu

     - Cloud icon with "+" for optional input

2. **Design Space Overview (B1)**

   - **Flow Diagram**:

     - Four main flows (Flow 1-4) with hierarchical sub-flows (e.g., Flow 2 → 2-1, 2-2)

     - Visual connections between flows using lines and arrows

     - Color-coded components (blue, gray, black)

3. **Latency/Agent Graph (B2)**

   - **Axes**:

     - X-axis: "# agents" (dropdown)

     - Y-axis: "latency" (dropdown)

   - **Legend**:

     - Colors: S (purple), D (red), RF (orange), RD (green)

   - **Data**: Bar chart with multiple colored bars (exact values unclear)

4. **Workflow Diagram (C1)**

   - **Steps**:

     1. Initial review → Structure check → Method assessment

     2. Evaluate results → Draft review

   - **Components**:

     - "6 LLM calls" annotations

     - "Reflection" and "Discussion" nodes

     - "Summary" and "Facilitator" roles

   - **Time Metric**: "Time Used: 30.27s"

5. **Agent Interaction Log (C3)**

   - **Messages**:

     - Step1 Agent1: "The initial reading of the paper reveals a well-structured argument..."

     - Step1 Agent2: "Building on the initial impressions, the paper's argument..."

     - Step1 Agent3: "Building on the initial impressions, the paper's argument..."

     - Step1 Agent4: "Building on the initial impressions, the paper's argument..."

     - Step1 Summary: "Upon the initial reading of the paper, it presents a well-structured and coherent argument..."

     - Step2 Worker1: "Upon the initial reading of the paper, it presents a well-structured and coherent argument..."

     - Step3 Worker1: "Upon the initial reading of the paper, it presents a well-structured and coherent argument..."
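The entry labels in the C3 log appear to follow a "Step, role, index" pattern. As a minimal sketch, assuming that pattern holds for every entry, the labels can be parsed and tallied per step. The regular expression, the function names, and the label grammar are illustrative assumptions, not part of FlowForge.

```python
import re
from collections import Counter

# Entry labels transcribed from the visible C3 log (message bodies omitted).
LOG_LABELS = [
    "Step1 Agent1",
    "Step1 Agent2",
    "Step1 Agent3",
    "Step1 Agent4",
    "Step1 Summary",
    "Step2 Worker1",
    "Step3 Worker1",
]

# Assumed label grammar: "Step<N> <Role>[<index>]" (hypothetical, inferred
# from the seven visible labels only).
LABEL_RE = re.compile(r"^Step(\d+) ([A-Za-z]+?)(\d*)$")


def parse_label(label):
    """Split a log label into (step number, role name, agent index or None)."""
    match = LABEL_RE.match(label)
    if match is None:
        raise ValueError(f"unrecognized log label: {label!r}")
    step, role, index = match.groups()
    return int(step), role, int(index) if index else None


def entries_per_step(labels):
    """Count how many log entries each step produced."""
    return Counter(parse_label(label)[0] for label in labels)


if __name__ == "__main__":
    for step, count in sorted(entries_per_step(LOG_LABELS).items()):
        print(f"Step {step}: {count} entries")
```

The tally counts visible log entries only. Whether each entry corresponds to one LLM call, and how the counts relate to the "6 LLM calls" annotations, cannot be determined from the screenshot.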

### Detailed Analysis

- **Design Space**: The flow diagram shows a hierarchical structure with four primary flows (1-4) branching into sub-flows (e.g., 2-1, 2-2). This suggests modular review components that can be combined or isolated.

- **Latency Graph**: The bar values are not legible, but the chart plots latency against the number of agents, with bars colored by the four legend categories (S, D, RF, RD). Both axes are dropdowns, which suggests that the plotted metrics can be changed.

- **Workflow Simulation**: The multi-step process includes reflection points and collaborative discussion, mirroring real-world peer-review cycles. The "6 LLM calls" annotations suggest that the workflow's cost is tracked in LLM invocations.

- **Agent Logs**: Truncated messages reveal iterative analysis of paper structure, coherence, and argument quality, with agents building on prior reviews.
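The hierarchical design space described above can be illustrated with a small tree built from the flow IDs. This is a sketch under assumptions: it treats the hyphen in an ID such as "2-1" as the parent/child separator, and it includes only the flows named in this description. The function names are hypothetical and do not reflect how FlowForge stores its flows.

```python
from collections import defaultdict

# Flow IDs named in the description. Only Flow 2's sub-flows are given;
# the other flows may have sub-flows that are not listed here.
FLOW_IDS = ["1", "2", "2-1", "2-2", "3", "4"]


def parent_of(flow_id):
    """Return the parent ID ("2" for "2-1"), or None for a top-level flow."""
    head, sep, _tail = flow_id.rpartition("-")
    return head if sep else None


def build_children(flow_ids):
    """Map each flow ID (None = root) to the list of its direct sub-flows."""
    children = defaultdict(list)
    for flow_id in flow_ids:
        children[parent_of(flow_id)].append(flow_id)
    return dict(children)


def render(children, parent=None, depth=0):
    """Return the hierarchy as indented lines, one flow per line."""
    lines = []
    for flow_id in children.get(parent, []):
        lines.append("  " * depth + f"Flow {flow_id}")
        lines.extend(render(children, flow_id, depth + 1))
    return lines


if __name__ == "__main__":
    print("\n".join(render(build_children(FLOW_IDS))))
```

Keeping the parent relation implicit in the ID keeps the sketch short; a real tool would more likely store explicit parent links.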

### Key Observations

1. The interface emphasizes iterative refinement through "Reflection" and "Discussion" nodes.

2. The latency chart distinguishes four legend categories (S, D, RF, RD), but the bar values cannot be read from the screenshot.

3. The "Time Used: 30.27s" readout shows that the interface reports elapsed time for a simulated run.

4. The agent messages are truncated, so only their opening phrases are visible. Several begin identically, with later agents building on the initial impressions.

### Interpretation

FlowForge appears to model collaborative academic review through:

1. **Modular Design**: The flow diagram's hierarchical structure allows customization of review components.

2. **Agent Collaboration**: Multiple agents contribute to a step, and the "Reflection" and "Discussion" nodes, together with the "Summary" role, appear to consolidate their output.

3. **Automated Evaluation**: The "6 LLM calls" annotations imply that agent steps are carried out by large language model calls and that the call count is surfaced to the user.

4. **Temporal Constraints**: The "Time Used: 30.27s" readout indicates that elapsed time is measured for each simulated run, complementing the latency axis of the B2 chart.

The tool appears to pair human-like peer review with tracking of computational cost. Because the bar values in the latency chart are not legible, the screenshot alone does not show how latency changes with the number of agents. The truncated messages likewise show only the opening of each agent's output, so assessing the quality of the generated reviews would require the complete logs.