## Bar Chart: Computation FLOPs Comparison for Federated Learning Methods

### Overview

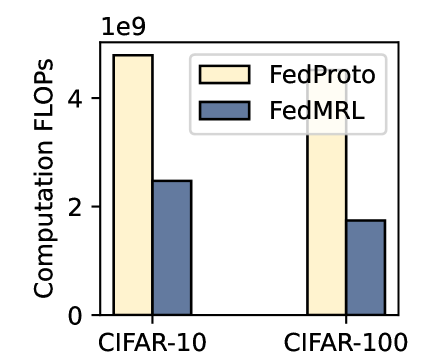

The image is a vertical bar chart comparing the computational cost, measured in FLOPs (Floating Point Operations), of two federated learning methods—FedProto and FedMRL—across two standard image classification datasets: CIFAR-10 and CIFAR-100. The chart visually demonstrates that FedMRL requires significantly fewer computations than FedProto for both datasets.

### Components/Axes

* **Y-Axis:** Labeled "Computation FLOPs". The scale is linear and marked with major ticks at 0, 2, and 4. A multiplier of `1e9` (1 billion) is indicated at the top of the axis, meaning the values represent billions of FLOPs.

* **X-Axis:** Represents the datasets. Two categorical groups are present: "CIFAR-10" on the left and "CIFAR-100" on the right.

* **Legend:** Positioned in the top-right corner of the chart area. It contains two entries:

  * A light yellow (cream) rectangle labeled "FedProto".

  * A blue-gray rectangle labeled "FedMRL".

* **Data Series:** Two bars are plotted for each dataset category, corresponding to the two methods in the legend.
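
The `1e9` multiplier on the y-axis means each tick label must be scaled to recover the absolute value. A minimal sketch of that conversion follows; the helper name `tick_to_flops` and the constant `AXIS_MULTIPLIER` are illustrative assumptions, not part of the chart or its source.

```python
# Hypothetical helper: convert a tick label read off the y-axis into absolute FLOPs.
AXIS_MULTIPLIER = 1e9  # the "1e9" multiplier shown at the top of the axis


def tick_to_flops(tick_label: float, multiplier: float = AXIS_MULTIPLIER) -> float:
    """Scale a tick label by the axis multiplier to get absolute FLOPs."""
    return tick_label * multiplier


# Major ticks as described for this chart.
major_ticks = [0, 2, 4]
for tick in major_ticks:
    print(f"tick {tick} -> {tick_to_flops(tick):.1e} FLOPs")
```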

### Detailed Analysis

**Bar Positions and Values:**

For each dataset group on the x-axis, the FedProto bar (light yellow) is on the left, and the FedMRL bar (blue-gray) is on the right.

1. **CIFAR-10 Group (Left):**

   * **FedProto (Yellow, Left Bar):** The bar reaches approximately **4.5e9 FLOPs** (4.5 billion), the tallest bar on the chart.

   * **FedMRL (Blue, Right Bar):** The bar is substantially shorter, reaching approximately **2.5e9 FLOPs** (2.5 billion), a clear reduction in computation compared to FedProto.

2. **CIFAR-100 Group (Right):**

   * **FedProto (Yellow, Left Bar):** The bar reaches approximately **3.5e9 FLOPs** (3.5 billion). This is lower than its cost on CIFAR-10.

   * **FedMRL (Blue, Right Bar):** The bar is the shortest on the chart, at approximately **1.8e9 FLOPs** (1.8 billion). This represents the lowest computational cost shown.

**Data Table Reconstruction (Approximate Values):**

| Dataset   | Method   | Computation FLOPs (Approx.) |
| :-------- | :------- | :-------------------------- |
| CIFAR-10  | FedProto | 4.5 x 10^9                  |
| CIFAR-10  | FedMRL   | 2.5 x 10^9                  |
| CIFAR-100 | FedProto | 3.5 x 10^9                  |
| CIFAR-100 | FedMRL   | 1.8 x 10^9                  |
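
For further analysis, the reconstructed table can be held as plain records and grouped by dataset. The sketch below assumes the approximate values above, which are visual estimates rather than exact measurements; the names `ROWS`, `pivot_by_dataset`, and `cheaper_method` are hypothetical.

```python
from collections import defaultdict

# Approximate values read from the chart (visual estimates, not exact measurements).
ROWS = [
    ("CIFAR-10", "FedProto", 4.5e9),
    ("CIFAR-10", "FedMRL", 2.5e9),
    ("CIFAR-100", "FedProto", 3.5e9),
    ("CIFAR-100", "FedMRL", 1.8e9),
]


def pivot_by_dataset(rows):
    """Group (dataset, method, flops) rows into {dataset: {method: flops}}."""
    table = defaultdict(dict)
    for dataset, method, flops in rows:
        table[dataset][method] = flops
    return dict(table)


def cheaper_method(per_method):
    """Return the name of the method with the fewest FLOPs."""
    return min(per_method, key=per_method.get)


pivot = pivot_by_dataset(ROWS)
for dataset, per_method in pivot.items():
    print(f"{dataset}: lowest FLOPs -> {cheaper_method(per_method)}")
```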

### Key Observations

1. **Consistent Efficiency Advantage:** FedMRL demonstrates a consistent and substantial reduction in computational FLOPs compared to FedProto across both datasets.

2. **Dataset Impact:** For both methods, the computational cost is higher on the CIFAR-10 dataset than on the CIFAR-100 dataset. This is counterintuitive, as CIFAR-100 is a more complex classification task (100 classes vs. 10 classes). One possible explanation, which the chart alone cannot confirm, is that the measured FLOPs depend more on the specific model architecture or training protocol used for each dataset benchmark than on task complexity alone.

3. **Magnitude of Difference:** The absolute computational saving of FedMRL over FedProto is larger on CIFAR-10 (a reduction of ~2.0e9 FLOPs, or ~44%) than on CIFAR-100 (a reduction of ~1.7e9 FLOPs), but the relative saving is slightly larger on CIFAR-100 (~49%).
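
The absolute and relative savings can be recomputed from the approximate bar heights. This is a minimal sketch under the assumption that the values read from the chart are accurate to about one decimal place; the names `FLOPS` and `savings` are illustrative.

```python
# Approximate bar heights read from the chart (visual estimates).
FLOPS = {
    "CIFAR-10": {"FedProto": 4.5e9, "FedMRL": 2.5e9},
    "CIFAR-100": {"FedProto": 3.5e9, "FedMRL": 1.8e9},
}


def savings(baseline: float, candidate: float) -> tuple[float, float]:
    """Return (absolute saving, saving as a fraction of the baseline)."""
    absolute = baseline - candidate
    relative = absolute / baseline
    return absolute, relative


for dataset, values in FLOPS.items():
    absolute, relative = savings(values["FedProto"], values["FedMRL"])
    print(f"{dataset}: saves {absolute:.1e} FLOPs ({relative:.0%})")
# CIFAR-10: saves 2.0e+09 FLOPs (44%)
# CIFAR-100: saves 1.7e+09 FLOPs (49%)
```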

### Interpretation

This chart reports one metric, computational cost in FLOPs, for evaluating federated learning algorithms. The data indicate that **FedMRL is substantially more computationally efficient than FedProto** in both settings shown. In the context of federated learning, where client devices (like mobile phones or IoT devices) often have limited processing power and battery life, reducing FLOPs is an important advantage. Fewer FLOPs can translate into faster training rounds, lower energy consumption, and broader feasibility for deployment on resource-constrained edge devices.

In Peircean terms, the chart can be read as an **index** of a causal relationship: the algorithmic design of FedMRL (the cause) leads to a measurable reduction in computational load (the effect). The consistent pattern across two different datasets (CIFAR-10 and CIFAR-100) supports the claim that this efficiency is a property of the FedMRL method rather than an artifact of a single test scenario, although two datasets are limited evidence. The unexpected result that both methods show lower FLOPs on the more complex CIFAR-100 dataset invites further investigation into the experimental setup; factors such as model size, number of local epochs, or communication rounds might differ between the two dataset benchmarks.