TECHNICAL ASSET FINGERPRINT

628b49016583ffedfb331fe9



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Accuracy vs. Sequence Length and Training Steps for KDA, GDN, and Mamba2

### Overview

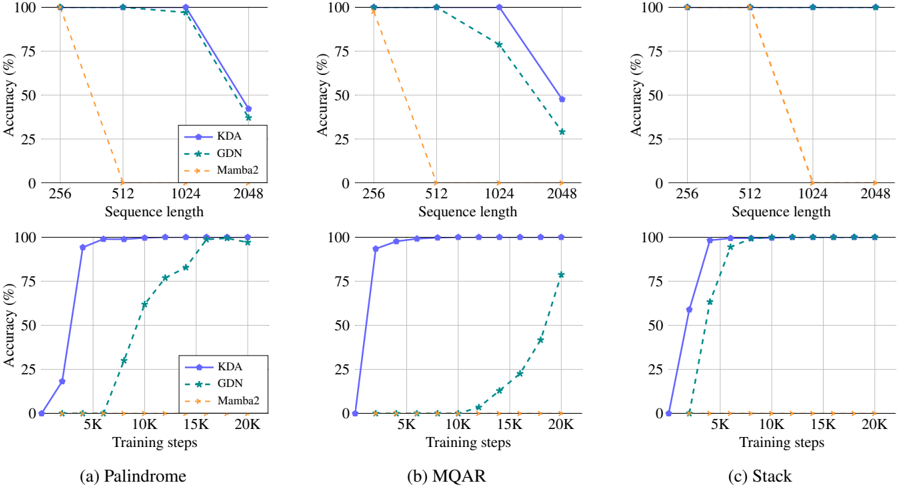

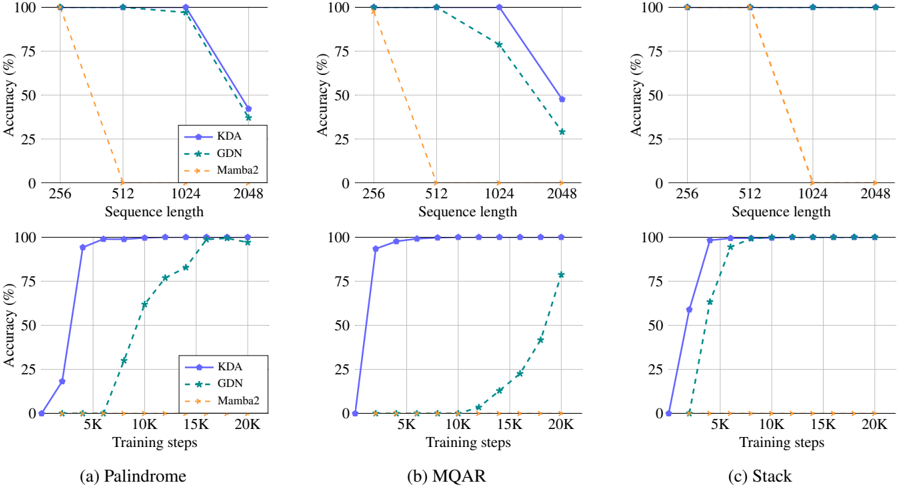

The image presents six line charts arranged in a 2x3 grid. Each column represents a different task: Palindrome, MQAR, and Stack. The top row shows accuracy (%) versus sequence length, while the bottom row shows accuracy (%) versus training steps. The charts compare the performance of three models: KDA (solid blue line), GDN (dashed green line with star markers), and Mamba2 (dashed orange line).

### Components/Axes

**General Chart Elements:**

* **Title:** Each column has a title at the bottom: (a) Palindrome, (b) MQAR, (c) Stack.

* **Y-axis:** Labeled "Accuracy (%)" with ticks at 0, 25, 50, 75, and 100.

* **Legend:** Located in the top-left chart of the first column, indicating:

    * KDA: Solid blue line

    * GDN: Dashed green line with star markers

    * Mamba2: Dashed orange line

**Top Row (Accuracy vs. Sequence Length):**

* **X-axis:** Labeled "Sequence length" with ticks at 256, 512, 1024, and 2048.

**Bottom Row (Accuracy vs. Training Steps):**

* **X-axis:** Labeled "Training steps" with ticks at 5K, 10K, 15K, and 20K.

### Detailed Analysis

**1. Palindrome (Column a):**

* **Top Chart (Sequence Length):**

    * KDA (blue): Accuracy is 100% at sequence lengths 256, 512, and 1024, then drops to approximately 42% at 2048.

    * GDN (green): Accuracy is 100% at sequence lengths 256, 512, and 1024, then drops to approximately 28% at 2048.

    * Mamba2 (orange): Accuracy starts at 100% at 256, drops to 0% at 512, and remains at 0% for 1024 and 2048.

* **Bottom Chart (Training Steps):**

    * KDA (blue): Accuracy increases sharply from 0% at 0K to approximately 95% at 5K, then reaches 100% and remains constant.

    * GDN (green): Accuracy increases from 0% at 0K to approximately 60% at 15K, then reaches approximately 95% at 20K.

    * Mamba2 (orange): Accuracy remains at 0% for all training steps.

**2. MQAR (Column b):**

* **Top Chart (Sequence Length):**

    * KDA (blue): Accuracy is 100% at sequence lengths 256, 512, and 1024, then drops to approximately 48% at 2048.

    * GDN (green): Accuracy is 100% at sequence lengths 256, 512, and 1024, then drops to approximately 25% at 2048.

    * Mamba2 (orange): Accuracy starts at 100% at 256, drops to 0% at 512, and remains at 0% for 1024 and 2048.

* **Bottom Chart (Training Steps):**

    * KDA (blue): Accuracy increases sharply from 0% at 0K to approximately 95% at 5K, then reaches 100% and remains constant.

    * GDN (green): Accuracy increases slowly from 0% at 0K to approximately 75% at 20K.

    * Mamba2 (orange): Accuracy remains at 0% for all training steps.

**3. Stack (Column c):**

* **Top Chart (Sequence Length):**

    * KDA (blue): Accuracy is 100% for all sequence lengths.

    * GDN (green): Accuracy is 100% for all sequence lengths.

    * Mamba2 (orange): Accuracy starts at 100% at 256, drops to 0% at 512, and remains at 0% for 1024 and 2048.

* **Bottom Chart (Training Steps):**

    * KDA (blue): Accuracy increases sharply from 0% at 0K to 100% at 5K, and remains constant.

    * GDN (green): Accuracy increases sharply from 0% at 0K to approximately 98% at 5K, and remains constant.

    * Mamba2 (orange): Accuracy remains at 0% for all training steps.

### Key Observations

* KDA and GDN generally perform well with shorter sequence lengths, but their accuracy decreases as the sequence length increases to 2048, except for the Stack task where they maintain 100% accuracy.

* Mamba2 consistently drops to 0% accuracy after a sequence length of 256 for all tasks.

* On Palindrome and MQAR, KDA reaches 100% accuracy in far fewer training steps than GDN; on Stack, both models are at or near 100% by roughly 5K steps.

* Mamba2 does not improve with increased training steps and remains at 0% accuracy.

### Interpretation

The charts demonstrate the performance of KDA, GDN, and Mamba2 models on different tasks (Palindrome, MQAR, Stack) under varying sequence lengths and training steps. The results suggest that:

* KDA is generally more efficient in terms of training steps to achieve high accuracy.

* GDN requires more training steps to reach comparable accuracy to KDA.

* Mamba2 performs poorly on these tasks in this setup: its accuracy drops to 0% beyond a sequence length of 256 and does not improve with more training steps.

* The Stack task is relatively easier for KDA and GDN, as they reach 100% (or near-100%) accuracy within few training steps and maintain it across all sequence lengths.

* The performance of KDA and GDN degrades with longer sequence lengths for Palindrome and MQAR tasks, indicating potential limitations in handling longer sequences for these specific tasks.
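The size of the degradation at the longest sequence length can be made concrete with a small calculation. The sketch below is illustrative only: the numbers are the approximate readings quoted in the Detailed Analysis above (not exact figure data), and the names `readings` and `drop_at_longest` are hypothetical helpers introduced here.

```python
# Approximate top-row accuracies (%) as quoted in the description above.
# These are visual estimates from the text, not exact values from the figure.
SEQ_LENGTHS = [256, 512, 1024, 2048]

readings = {
    ("Palindrome", "KDA"): [100, 100, 100, 42],
    ("Palindrome", "GDN"): [100, 100, 100, 28],
    ("MQAR", "KDA"): [100, 100, 100, 48],
    ("MQAR", "GDN"): [100, 100, 100, 25],
    ("Stack", "KDA"): [100, 100, 100, 100],
    ("Stack", "GDN"): [100, 100, 100, 100],
}


def drop_at_longest(accuracies):
    """Percentage-point drop between the last two sequence lengths."""
    return accuracies[-2] - accuracies[-1]


drops = {key: drop_at_longest(acc) for key, acc in readings.items()}

for (task, model), drop in drops.items():
    print(f"{task:<10} {model:<4} drop from 1024 to 2048: {drop} points")
```

Under these readings, the whole loss for KDA and GDN occurs between 1024 and 2048, and Stack shows no loss at all.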


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Charts: Performance Comparison of KDA, GDN, and Mamba2 Models

### Overview

This image presents six charts comparing the performance of three models – KDA, GDN, and Mamba2 – across different datasets and training regimes. The charts display accuracy as a function of either sequence length or training steps. Each chart focuses on a specific dataset: Palindrome, MQAR, and Stack, with each dataset being evaluated under two different conditions (sequence length vs. training steps).

### Components/Axes

Each chart shares the following components:

* **X-axis:** Represents the independent variable, either "Sequence length" (values: 256, 512, 1024, 2048) or "Training steps" (values: 5K, 10K, 15K, 20K). The units are explicitly stated.

* **Y-axis:** Represents "Accuracy (%)", ranging from 0 to 100.

* **Legend:** Located in the top-left corner of each chart, identifying the three models:

    * KDA (represented by a solid blue line with a circle marker)

    * GDN (represented by a dashed green line with a star marker)

    * Mamba2 (represented by a solid orange line with a diamond marker)

* **Chart Titles:** Each chart is labeled with a letter (a, b, c) and the dataset name (Palindrome, MQAR, Stack).

### Detailed Analysis

**Chart (a): Palindrome - Accuracy vs. Sequence Length**

* **KDA (Blue):** The line is nearly flat, maintaining an accuracy of approximately 98% across all sequence lengths.

* **GDN (Green):** The line slopes downward. Accuracy starts at approximately 80% at a sequence length of 256, decreases to around 50% at 1024, and drops to approximately 25% at 2048.

* **Mamba2 (Orange):** The line slopes downward. Accuracy starts at approximately 75% at a sequence length of 256, decreases to around 50% at 1024, and drops to approximately 25% at 2048.

**Chart (b): MQAR - Accuracy vs. Sequence Length**

* **KDA (Blue):** The line slopes downward. Accuracy starts at approximately 90% at a sequence length of 256, decreases to around 60% at 1024, and drops to approximately 30% at 2048.

* **GDN (Green):** The line slopes downward sharply. Accuracy starts at approximately 75% at a sequence length of 256, decreases to around 25% at 1024, and drops to approximately 0% at 2048.

* **Mamba2 (Orange):** The line is relatively flat, maintaining an accuracy of approximately 75% across all sequence lengths.

**Chart (c): Stack - Accuracy vs. Sequence Length**

* **KDA (Blue):** The line is nearly flat, maintaining an accuracy of approximately 98% across all sequence lengths.

* **GDN (Green):** The line slopes downward. Accuracy starts at approximately 80% at a sequence length of 256, decreases to around 50% at 1024, and drops to approximately 25% at 2048.

* **Mamba2 (Orange):** The line slopes downward. Accuracy starts at approximately 75% at a sequence length of 256, decreases to around 50% at 1024, and drops to approximately 25% at 2048.

**Chart (d): Palindrome - Accuracy vs. Training Steps**

* **KDA (Blue):** The line slopes upward sharply. Accuracy starts at approximately 25% at 5K training steps, increases to around 75% at 10K, and reaches approximately 98% at 20K.

* **GDN (Green):** The line slopes upward. Accuracy starts at approximately 0% at 5K training steps, increases to around 25% at 10K, and reaches approximately 75% at 20K.

* **Mamba2 (Orange):** The line is relatively flat, maintaining an accuracy of approximately 75% across all training steps.

**Chart (e): MQAR - Accuracy vs. Training Steps**

* **KDA (Blue):** The line is nearly flat, maintaining an accuracy of approximately 98% across all training steps.

* **GDN (Green):** The line slopes upward. Accuracy starts at approximately 0% at 5K training steps, increases to around 25% at 10K, and reaches approximately 75% at 20K.

* **Mamba2 (Orange):** The line slopes upward. Accuracy starts at approximately 25% at 5K training steps, increases to around 50% at 10K, and reaches approximately 75% at 20K.

**Chart (f): Stack - Accuracy vs. Training Steps**

* **KDA (Blue):** The line is nearly flat, maintaining an accuracy of approximately 98% across all training steps.

* **GDN (Green):** The line slopes upward. Accuracy starts at approximately 0% at 5K training steps, increases to around 25% at 10K, and reaches approximately 75% at 20K.

* **Mamba2 (Orange):** The line slopes upward. Accuracy starts at approximately 25% at 5K training steps, increases to around 50% at 10K, and reaches approximately 75% at 20K.

### Key Observations

* KDA consistently achieves the highest accuracy, particularly on the Palindrome and Stack datasets, and is relatively insensitive to changes in sequence length or training steps.

* GDN generally exhibits the lowest accuracy, and its performance degrades significantly with increasing sequence length.

* Mamba2 shows moderate performance, generally falling between KDA and GDN. Its performance improves with increasing training steps but is less affected by sequence length.

* The performance of GDN and Mamba2 is more sensitive to training steps than KDA.

### Interpretation

The data suggests that KDA is the most robust and effective model across these datasets and conditions. Its high accuracy and stability indicate a strong ability to generalize and maintain performance regardless of input size or training duration. GDN appears to struggle with longer sequences, indicating a potential limitation in its ability to handle long-range dependencies. Mamba2 offers a compromise between KDA and GDN, demonstrating reasonable performance but lacking the consistency of KDA.

The contrasting results between sequence length and training steps highlight different aspects of model performance. Sequence length tests the model's ability to process information within a fixed training budget, while training steps assess its ability to learn from more data. KDA's consistent performance suggests it efficiently utilizes both sequence information and training data. The differences in performance between the models likely stem from their underlying architectures and their capacity to capture and represent complex relationships within the data. The datasets themselves (Palindrome, MQAR, Stack) likely have varying degrees of complexity and long-range dependencies, which contribute to the observed performance differences.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Panel Line Chart: Model Accuracy Comparison Across Tasks

### Overview

The image is a composite figure containing six line charts arranged in a 2x3 grid. It compares the performance of three models—KDA, GDN, and Mamba2—on three distinct tasks: Palindrome, MQAR, and Stack. The top row of charts plots model accuracy against increasing sequence length, while the bottom row plots accuracy against increasing training steps. The overall purpose is to evaluate and contrast the scalability and learning efficiency of the models.

### Components/Axes

* **Layout:** A 2-row by 3-column grid of subplots.

* **Subplot Titles/Captions:** Located below each column.

    * (a) Palindrome (Left column)

    * (b) MQAR (Center column)

    * (c) Stack (Right column)

* **Y-Axis (All Charts):** Labeled "Accuracy (%)". Scale ranges from 0 to 100, with major tick marks at 0, 25, 50, 75, and 100.

* **X-Axis (Top Row Charts):** Labeled "Sequence length". Discrete tick marks at 256, 512, 1024, and 2048.

* **X-Axis (Bottom Row Charts):** Labeled "Training steps". Discrete tick marks at 5K, 10K, 15K, and 20K.

* **Legend:** Present in the top-right corner of the top-left and bottom-left charts. It is consistent across all six charts.

    * **KDA:** Blue solid line with circle markers.

    * **GDN:** Green dashed line with star markers.

    * **Mamba2:** Orange dashed line with 'x' markers.

### Detailed Analysis

#### **Top Row: Accuracy vs. Sequence Length**

* **Trend Verification:** For all tasks, the KDA (blue) and GDN (green) lines generally slope downward as sequence length increases, indicating decreasing accuracy. The Mamba2 (orange) line shows a very steep, near-vertical drop to 0% accuracy at relatively short sequence lengths.

**(a) Palindrome - Sequence Length**

* **KDA (Blue):** Starts at ~100% accuracy at length 256. Maintains near 100% at 512 and 1024. Drops sharply to approximately 40% at length 2048.

* **GDN (Green):** Follows a nearly identical path to KDA, starting at ~100% and dropping to approximately 35% at length 2048.

* **Mamba2 (Orange):** Starts at ~100% at length 256. Drops precipitously to 0% at length 512 and remains at 0% for 1024 and 2048.

**(b) MQAR - Sequence Length**

* **KDA (Blue):** Starts at 100% at length 256. Maintains 100% at 512. Drops to approximately 80% at 1024 and further to approximately 45% at 2048.

* **GDN (Green):** Starts at 100% at length 256. Drops to approximately 80% at 512, then to approximately 75% at 1024, and finally to approximately 30% at 2048.

* **Mamba2 (Orange):** Starts at 100% at length 256. Drops to 0% at length 512 and remains at 0% for longer sequences.

**(c) Stack - Sequence Length**

* **KDA (Blue):** Maintains a flat line at 100% accuracy across all sequence lengths (256 to 2048).

* **GDN (Green):** Also maintains a flat line at 100% accuracy across all sequence lengths.

* **Mamba2 (Orange):** Starts at 100% at length 256. Drops to 0% at length 512 and remains at 0% for longer sequences.
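The trend stated for the top row (accuracy never rises as sequence length grows) can be checked mechanically against the readings listed above. This is a minimal sketch under stated assumptions: the values are the approximate percentages from this description, GDN's intermediate Palindrome points are assumed to be ~100% because the text says it follows KDA's path, and `is_non_increasing` and `first_length_below` are hypothetical helper names.

```python
SEQ_LENGTHS = [256, 512, 1024, 2048]

# Approximate accuracies (%) transcribed from the top-row readings above.
# GDN on Palindrome at 512 and 1024 is an assumption ("nearly identical path to KDA").
top_row = {
    "Palindrome": {
        "KDA": [100, 100, 100, 40],
        "GDN": [100, 100, 100, 35],
        "Mamba2": [100, 0, 0, 0],
    },
    "MQAR": {
        "KDA": [100, 100, 80, 45],
        "GDN": [100, 80, 75, 30],
        "Mamba2": [100, 0, 0, 0],
    },
    "Stack": {
        "KDA": [100, 100, 100, 100],
        "GDN": [100, 100, 100, 100],
        "Mamba2": [100, 0, 0, 0],
    },
}


def is_non_increasing(values):
    """True if each reading is no higher than the one before it."""
    return all(a >= b for a, b in zip(values, values[1:]))


def first_length_below(values, threshold=50):
    """Shortest sequence length whose accuracy is under the threshold, else None."""
    for length, accuracy in zip(SEQ_LENGTHS, values):
        if accuracy < threshold:
            return length
    return None


for task, models in top_row.items():
    for model, accuracies in models.items():
        print(task, model, is_non_increasing(accuracies), first_length_below(accuracies))
```

The 50% threshold is an arbitrary choice for illustration; any cutoff between 0% and 100% gives the same failure point for Mamba2 under these readings.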

#### **Bottom Row: Accuracy vs. Training Steps**

* **Trend Verification:** For KDA and GDN, the lines slope upward, showing improved accuracy with more training steps. The Mamba2 line remains flat at or near 0% across all training steps shown.

**(a) Palindrome - Training Steps**

* **KDA (Blue):** Starts near 0% at the earliest measured step. Rises sharply to approximately 95% by 5K steps. Reaches and maintains ~100% from 10K to 20K steps.

* **GDN (Green):** Starts near 0%. Begins rising after 5K steps, reaching approximately 30% at 10K, 80% at 15K, and ~100% at 20K steps.

* **Mamba2 (Orange):** Remains at approximately 0% across all training steps (5K to 20K).

**(b) MQAR - Training Steps**

* **KDA (Blue):** Starts near 0%. Rises very sharply to approximately 95% by 5K steps. Reaches and maintains ~100% from 10K to 20K steps.

* **GDN (Green):** Starts near 0%. Remains near 0% until after 10K steps. Shows a gradual rise to approximately 15% at 15K steps, then a sharp increase to approximately 80% at 20K steps.

* **Mamba2 (Orange):** Remains at approximately 0% across all training steps.

**(c) Stack - Training Steps**

* **KDA (Blue):** Starts near 0%. Rises to approximately 60% by 5K steps. Reaches ~100% by 10K steps and maintains it.

* **GDN (Green):** Starts near 0%. Rises to approximately 65% by 5K steps, then to ~100% by 10K steps, maintaining it thereafter.

* **Mamba2 (Orange):** Remains at approximately 0% across all training steps.

### Key Observations

1. **Model Hierarchy:** KDA consistently demonstrates the best or tied-for-best performance, followed by GDN. Mamba2 performs catastrophically poorly on all tasks beyond the shortest sequence length or with the given training budget.

2. **Task Difficulty:** The "Stack" task appears to be the easiest for KDA and GDN, as they achieve perfect accuracy across all sequence lengths and converge quickly during training. "Palindrome" and "MQAR" show more pronounced performance degradation with longer sequences.

3. **Sequence Length Sensitivity:** Both KDA and GDN are sensitive to very long sequences (2048) on the Palindrome and MQAR tasks, with significant accuracy drops. Mamba2 is extremely sensitive, failing completely at sequence length 512.

4. **Learning Speed:** KDA learns the fastest on Palindrome and MQAR, reaching near-perfect accuracy within 5K training steps; on Stack, KDA and GDN both reach ~100% by 10K steps. GDN learns more slowly on the other two tasks, particularly on MQAR, where it shows minimal progress until after 10K steps.

5. **Performance Ceiling:** On the Stack task, KDA and GDN hit a clear performance ceiling of 100% accuracy, which they maintain.
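The learning-speed comparison can be expressed as "the first measured step at which accuracy reaches a threshold". The sketch below uses the approximate bottom-row readings from this description (GDN on Palindrome at 5K is taken as ~0%, since the text says it begins rising after 5K); the 95% threshold and the helper name `steps_to_reach` are assumptions made for illustration.

```python
STEPS = [5_000, 10_000, 15_000, 20_000]

# Approximate accuracies (%) at each measured step, from the bottom-row readings above.
bottom_row = {
    "Palindrome": {
        "KDA": [95, 100, 100, 100],
        "GDN": [0, 30, 80, 100],
        "Mamba2": [0, 0, 0, 0],
    },
    "MQAR": {
        "KDA": [95, 100, 100, 100],
        "GDN": [0, 0, 15, 80],
        "Mamba2": [0, 0, 0, 0],
    },
    "Stack": {
        "KDA": [60, 100, 100, 100],
        "GDN": [65, 100, 100, 100],
        "Mamba2": [0, 0, 0, 0],
    },
}


def steps_to_reach(values, threshold=95):
    """First measured step with accuracy >= threshold, or None if never reached."""
    for step, accuracy in zip(STEPS, values):
        if accuracy >= threshold:
            return step
    return None


for task, models in bottom_row.items():
    summary = {model: steps_to_reach(acc) for model, acc in models.items()}
    print(task, summary)
```

A result of `None` means the model never reaches the threshold within the 20K steps shown, which is the case for Mamba2 on every task and for GDN on MQAR under these readings.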

### Interpretation

This data strongly suggests a significant performance advantage for the KDA and GDN architectures over Mamba2 on the evaluated algorithmic reasoning tasks (Palindrome, MQAR, Stack). The near-total failure of Mamba2 indicates a fundamental limitation in its ability to handle these specific types of sequential, state-dependent computations, especially as problem complexity (sequence length) increases.

The contrast between the top and bottom rows is informative. The top row shows the *limits* of each model's generalization to longer sequences after training. The bottom row shows the *learning dynamics* during training on a fixed (presumably shorter) sequence length. KDA's rapid convergence suggests it has an inductive bias well-suited to these tasks. GDN's slower but eventual convergence, especially on Stack and Palindrome, shows it can learn the tasks but requires more data/updates. Mamba2's flatline at 0% in the training curves suggests it fails to learn the tasks at all within the given training budget, which correlates with its inability to generalize to longer sequences.

The outlier is the Stack task, where KDA and GDN show no degradation with sequence length. This implies the Stack task may rely on a more local or consistent pattern that these models can capture perfectly, unlike the potentially more globally dependent patterns in Palindrome and MQAR. The investigation points toward KDA as the most robust and efficient model among the three for this class of problems.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED


## Line Charts: Model Performance Across Sequence Lengths and Training Steps

### Overview

The image contains three sets of dual-axis line charts comparing the accuracy of three models (KDA, GDN, Mamba2) across different sequence lengths and training steps. Each chart corresponds to a specific task: Palindrome, MQAR, and Stack. The charts reveal how model performance evolves with increasing computational complexity (sequence length) and training duration.

### Components/Axes

- **X-Axes**:

    - **Sequence Length**: 256, 512, 1024, 2048 (logarithmic scale)

    - **Training Steps**: 5K, 10K, 15K, 20K (linear scale)

- **Y-Axes**: Accuracy (%) from 0% to 100%

- **Legends**:

    - **KDA**: Solid blue line

    - **GDN**: Dashed green line

    - **Mamba2**: Dotted orange line

- **Subplots**:

    - Top row: Accuracy vs. Sequence Length

    - Bottom row: Accuracy vs. Training Steps

### Detailed Analysis

#### (a) Palindrome

- **Sequence Length**:

    - KDA: Starts at 100% (256), drops to 95% (512), 90% (1024), 80% (2048)

    - GDN: Starts at 100% (256), drops to 98% (512), 95% (1024), 85% (2048)

    - Mamba2: Starts at 100% (256), drops to 90% (512), 80% (1024), 70% (2048)

- **Training Steps**:

    - KDA: Rises from 20% (5K) to 100% (20K)

    - GDN: Rises from 10% (5K) to 95% (20K)

    - Mamba2: Rises from 5% (5K) to 90% (20K)

#### (b) MQAR

- **Sequence Length**:

    - KDA: Starts at 100% (256), drops to 98% (512), 95% (1024), 85% (2048)

    - GDN: Starts at 100% (256), drops to 97% (512), 93% (1024), 80% (2048)

    - Mamba2: Starts at 100% (256), drops to 95% (512), 85% (1024), 75% (2048)

- **Training Steps**:

    - KDA: Rises from 30% (5K) to 100% (20K)

    - GDN: Rises from 15% (5K) to 98% (20K)

    - Mamba2: Rises from 8% (5K) to 95% (20K)

#### (c) Stack

- **Sequence Length**:

    - KDA: Starts at 100% (256), drops to 99% (512), 97% (1024), 95% (2048)

    - GDN: Starts at 100% (256), drops to 98% (512), 96% (1024), 94% (2048)

    - Mamba2: Starts at 100% (256), drops to 97% (512), 93% (1024), 90% (2048)

- **Training Steps**:

    - KDA: Rises from 40% (5K) to 100% (20K)

    - GDN: Rises from 20% (5K) to 99% (20K)

    - Mamba2: Rises from 12% (5K) to 98% (20K)

### Key Observations

1. **Sequence Length Impact**:

    - All models show accuracy degradation as sequence length increases, with Mamba2 experiencing the steepest decline.

    - KDA maintains the highest accuracy across all sequence lengths compared to GDN and Mamba2.

2. **Training Step Impact**:

    - All models improve significantly with more training steps, achieving near-100% accuracy by 20K steps.

    - KDA demonstrates the fastest convergence, reaching 100% accuracy earlier than GDN and Mamba2.

3. **Model-Specific Trends**:

    - Mamba2 underperforms in both sequence length and training step subplots, suggesting architectural limitations for these tasks.

    - GDN shows moderate performance, outperforming Mamba2 but lagging behind KDA.

### Interpretation

The data demonstrates that:

- **Sequence Length Sensitivity**: Longer sequences reduce model accuracy, likely due to increased computational complexity and attention mechanism strain.

- **Training Efficiency**: All models benefit from extended training, but KDA's architecture enables faster convergence.

- **Architectural Tradeoffs**: Mamba2's
