## Diagram: LLM Workflow

### Overview

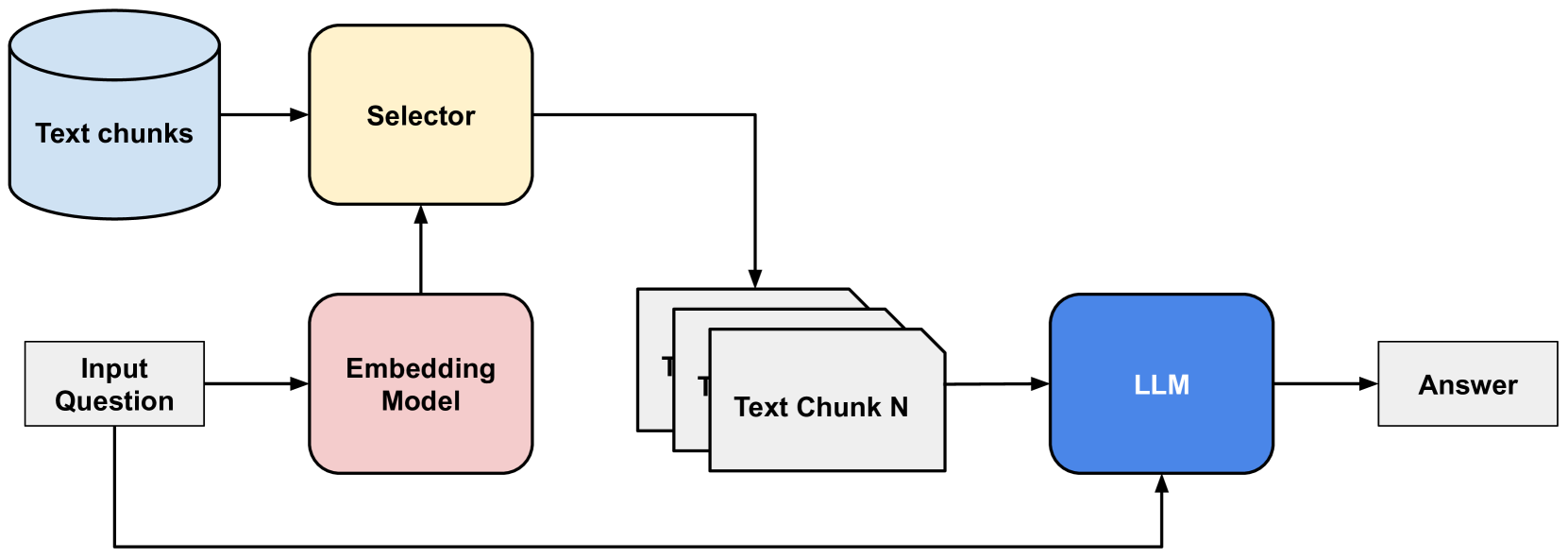

The diagram shows the workflow of a Large Language Model (LLM) system. Information flows from an input question through an embedding model and a selector, which draws on a collection of text chunks, and ends with an answer generated by the LLM.

### Components

* **Input Question:** A white rectangle on the left, representing the initial query.

* **Embedding Model:** A pink rounded rectangle, which processes the input question.

* **Text chunks:** A light blue cylinder, representing a collection of text data.

* **Selector:** A yellow rounded rectangle, which selects relevant text chunks.

* **Text Chunk N:** A stack of white rectangles, representing the selected text chunks.

* **LLM:** A blue rounded rectangle, representing the Large Language Model.

* **Answer:** A white rectangle on the right, representing the final output.

* Arrows indicate the flow of information between components.

### Detailed Analysis

1. **Input Question** flows into the **Embedding Model**.

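2. The **Embedding Model** passes its output, an embedding of the question, to the **Selector**.

3. The **Text chunks** store also feeds the **Selector**, supplying the candidate chunks to choose from.

4. The **Selector** picks the relevant chunks, shown in the diagram as the **Text Chunk N** stack.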

5. **Text Chunk N** is fed into the **LLM**.

6. The **Input Question** is also fed into the **LLM**.

7. The **LLM** generates an **Answer**.
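
The seven steps above can be sketched as a single function that wires the components together. This is a minimal illustration, not the system shown in the diagram: `run_pipeline`, `toy_embed`, `toy_select`, and `toy_llm` are hypothetical names, and the toy stand-ins exist only to make the data flow visible.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def run_pipeline(
    question: str,
    chunks: Sequence[str],
    embed: Callable[[str], Vector],
    select: Callable[[Vector, Sequence[str]], List[str]],
    llm: Callable[[str, List[str]], str],
) -> str:
    """Mirror the arrows in the diagram; every component is passed in."""
    query_vector = embed(question)           # steps 1-2: question -> embedding model -> selector
    selected = select(query_vector, chunks)  # steps 3-4: selector picks from the text chunks
    return llm(question, selected)           # steps 5-7: chunks + question -> LLM -> answer


# Toy stand-ins (assumptions for illustration only, not real models).
def toy_embed(text: str) -> Vector:
    # A fake two-dimensional "embedding": text length and vowel count.
    return [float(len(text)), float(sum(text.count(v) for v in "aeiou"))]


def toy_select(query_vector: Vector, chunks: Sequence[str]) -> List[str]:
    # Ignores the query vector and keeps the first two chunks.
    return list(chunks[:2])


def toy_llm(question: str, selected: List[str]) -> str:
    # Reports what it received instead of generating text.
    return f"Answer to {question!r} using {len(selected)} chunk(s)"


answer = run_pipeline(
    "What is RAG?", ["chunk A", "chunk B", "chunk C"], toy_embed, toy_select, toy_llm
)
print(answer)
```

Passing the components in as callables keeps the wiring independent of any particular embedding model, selector, or LLM client.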

### Key Observations

* The diagram illustrates a typical Retrieval-Augmented Generation (RAG) architecture.

* The Embedding Model and Selector components are crucial for retrieving relevant information from the text chunks.

* The LLM uses both the input question and the retrieved text chunks to generate the final answer.
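
The diagram does not say how the Selector ranks chunks. One common approach, assumed here, is to embed every chunk in advance with the same embedding model and keep the chunks whose vectors have the highest cosine similarity to the question vector. The names `cosine_similarity` and `select_top_k` and the parameter `k` are hypothetical.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def select_top_k(
    query_vector: Sequence[float],
    chunk_vectors: Sequence[Sequence[float]],
    chunks: Sequence[str],
    k: int = 2,
) -> List[str]:
    """Return the k chunks most similar to the query, best match first.

    Assumes chunk_vectors[i] is the precomputed embedding of chunks[i].
    """
    scored = [
        (cosine_similarity(query_vector, vector), chunk)
        for vector, chunk in zip(chunk_vectors, chunks)
    ]
    # Sort by score only, so ties keep their original order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:k]]


top = select_top_k(
    query_vector=[1.0, 0.0],
    chunk_vectors=[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
    chunks=["chunk a", "chunk b", "chunk c"],
    k=2,
)
print(top)
```

A linear scan like this is fine for a small chunk collection; larger collections typically use an approximate nearest-neighbour index instead.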

### Interpretation

The diagram depicts a system in which an LLM is augmented with external knowledge retrieved from a collection of text chunks. The input question is first processed by an embedding model, and a selector uses the result to choose the most pertinent chunks. Those chunks are passed to the LLM together with the original question, so the answer can draw on the retrieved text rather than only on what the model learned during training. In effect, the system pairs the LLM's reasoning capabilities with external knowledge, which helps most on questions whose answers depend on the content of the chunk collection.
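
The diagram shows the question and the selected chunks entering the LLM but not how they are combined. A minimal sketch, assuming they are concatenated into one text prompt, might look like the following. `build_prompt` and the prompt wording are illustrative assumptions, not taken from the diagram.

```python
from typing import Sequence


def build_prompt(question: str, chunks: Sequence[str]) -> str:
    """Combine the retrieved chunks and the original question into one prompt."""
    # Number the chunks so the model (and a reader) can tell them apart.
    context = "\n\n".join(
        f"[{index}] {chunk}" for index, chunk in enumerate(chunks, start=1)
    )
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_prompt(
    "What does the selector do?",
    ["The selector picks relevant chunks.", "Chunks are stored as text."],
)
print(prompt)
```

The resulting string would then be sent to whichever LLM the system uses; that call is omitted here because the diagram does not name a model or API.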