## Technical Document: Evaluation Guidelines for AI Model Mapping Descriptions

### Overview

The image displays a set of "Important guidelines" for evaluating an AI model's (specifically "GPT4") ability to recognize and describe patterns in input-to-output string mappings. The document outlines criteria for three distinct evaluation questions (Q1, Q2, Q3) and provides instructions for annotators. The text is presented in a bulleted list format with nested sub-bullets.

### Components/Axes

The document is structured as a single list with five main bullet points. The text is black on a white background. There are no charts, diagrams, or axes. The content is purely textual.

### Detailed Analysis / Content Details

The following is a precise transcription of the text content from the image.

**Important guidelines:**

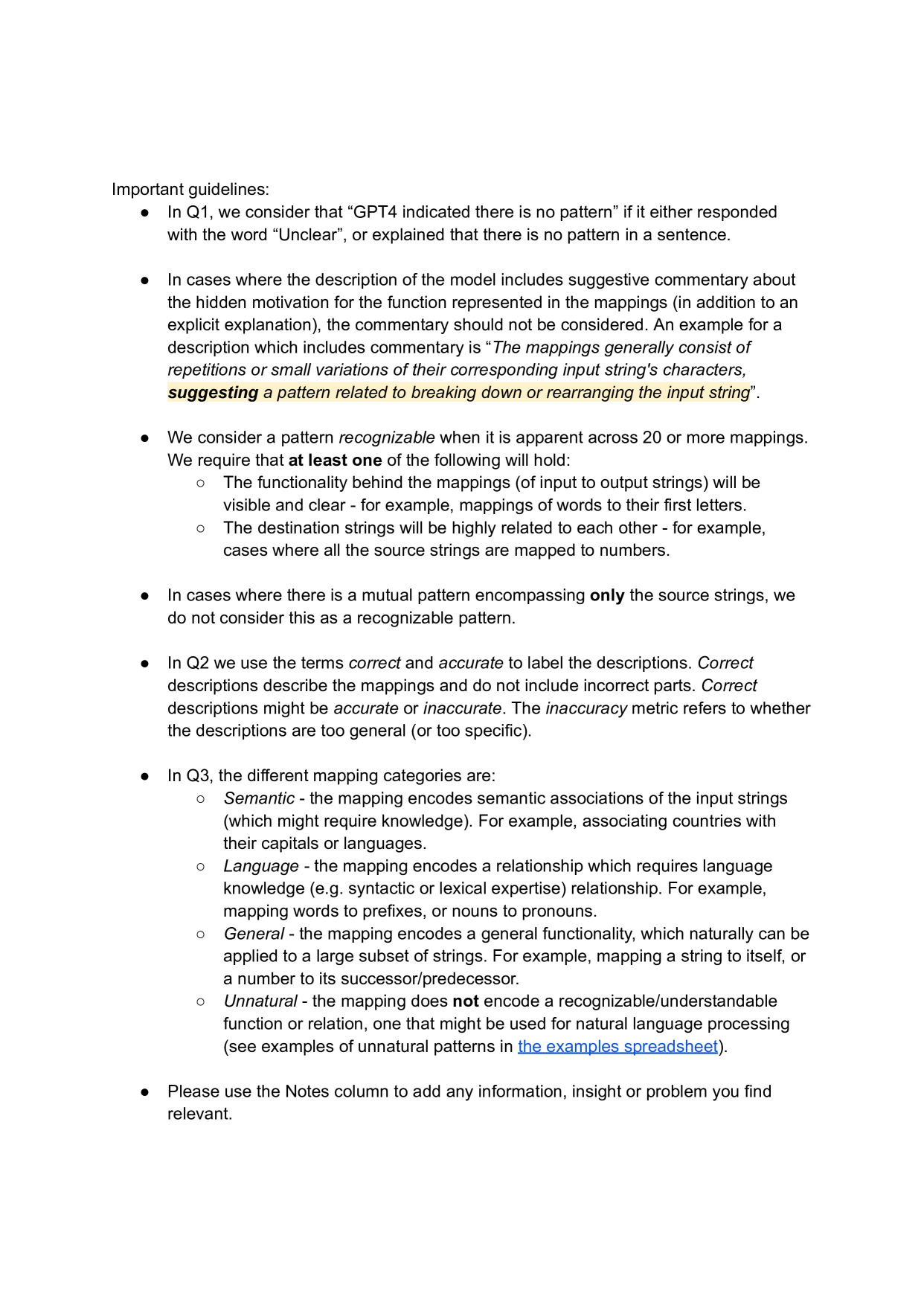

* In Q1, we consider that “GPT4 indicated there is no pattern” if it either responded with the word “Unclear”, or explained that there is no pattern in a sentence.

* In cases where the description of the model includes suggestive commentary about the hidden motivation for the function represented in the mappings (in addition to an explicit explanation), the commentary should not be considered. An example for a description which includes commentary is “*The mappings generally consist of repetitions or small variations of their corresponding input string's characters, **suggesting** a pattern related to breaking down or rearranging the input string*”.

* We consider a pattern *recognizable* when it is apparent across 20 or more mappings. We require that **at least one** of the following will hold:

* The functionality behind the mappings (of input to output strings) will be visible and clear - for example, mappings of words to their first letters.

* The destination strings will be highly related to each other - for example, cases where all the source strings are mapped to numbers.

* In cases where there is a mutual pattern encompassing **only** the source strings, we do not consider this as a recognizable pattern.

* In Q2 we use the terms *correct* and *accurate* to label the descriptions. *Correct* descriptions describe the mappings and do not include incorrect parts. *Correct* descriptions might be *accurate* or *inaccurate*. The *inaccuracy* metric refers to whether the descriptions are too general (or too specific).

* In Q3, the different mapping categories are:

* *Semantic* - the mapping encodes semantic associations of the input strings (which might require knowledge). For example, associating countries with their capitals or languages.

* *Language* - the mapping encodes a relationship which requires language knowledge (e.g. syntactic or lexical expertise) relationship. For example, mapping words to prefixes, or nouns to pronouns.

* *General* - the mapping encodes a general functionality, which naturally can be applied to a large subset of strings. For example, mapping a string to itself, or a number to its successor/predecessor.

* *Unnatural* - the mapping does **not** encode a recognizable/understandable function or relation, one that might be used for natural language processing (see examples of unnatural patterns in [the examples spreadsheet](the%20examples%20spreadsheet)).

* Please use the Notes column to add any information, insight or problem you find relevant.

### Key Observations

1. **Structured Evaluation Framework:** The guidelines define a clear, multi-faceted evaluation process (Q1, Q2, Q3) for assessing an AI's analytical output on mapping tasks.

2. **Specific Thresholds:** A pattern is only considered "recognizable" if it is consistent across a minimum of 20 mappings, establishing a quantitative benchmark.

3. **Distinction Between Correctness and Accuracy:** The document makes a nuanced separation between a description being factually correct (no errors) and being accurate (appropriately specific or general).

4. **Categorization of Mapping Logic:** Q3 provides a taxonomy (Semantic, Language, General, Unnatural) to classify the underlying logic of the mappings being analyzed.

5. **Exclusion of Speculative Commentary:** There is an explicit rule to ignore the model's speculative or "suggestive commentary" about hidden motivations, focusing only on the explicit explanation of the pattern.

6. **Source-Only Pattern Exclusion:** A pattern that exists only in the input (source) strings, without a corresponding relationship to the output (destination) strings, is explicitly disqualified.

### Interpretation

This document serves as a rubric or annotation guide for human evaluators tasked with assessing the performance of a large language model (GPT4) on a specific analytical task: identifying and describing patterns in string transformation mappings.

The guidelines are designed to ensure **consistent, objective, and granular evaluation**. They move beyond a simple "right/wrong" assessment by:

* Defining what constitutes a valid pattern (Q1).

* Separating the factual correctness of a description from its precision (Q2).

* Classifying the *type* of intelligence or knowledge required to discern the pattern (Q3).

The emphasis on excluding "suggestive commentary" and "source-only" patterns indicates a focus on evaluating the model's ability to deduce the *functional relationship* between input and output, not just its ability to describe the data superficially or generate plausible-sounding hypotheses. The requirement for patterns to be "apparent across 20 or more mappings" guards against overfitting to small, coincidental samples.

The linked "examples spreadsheet" for "Unnatural" patterns suggests this is part of a larger, active research or annotation project aimed at understanding the limits and capabilities of AI in logical and semantic reasoning tasks. The final instruction to use a "Notes column" implies this text is likely part of a larger spreadsheet or data annotation interface.