## Line Chart: CIFAR-100 Test Accuracy vs. Number of Classes

### Overview

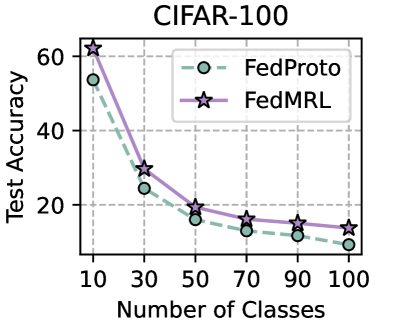

This line chart compares the test accuracy of two federated learning methods, FedProto and FedMRL, on the CIFAR-100 dataset as the number of classes increases. For both methods, accuracy falls steadily as the number of classes grows.

### Components/Axes

* **Title:** "CIFAR-100" (centered at the top).

* **Y-Axis:** Labeled "Test Accuracy". The scale runs from 0 to 60, with major tick marks at 20, 40, and 60.

* **X-Axis:** Labeled "Number of Classes". The scale shows discrete values: 10, 30, 50, 70, 90, 100.

* **Legend:** Located in the top-right quadrant of the chart area.

    * **FedProto:** Represented by a green dashed line with circular markers (○).

    * **FedMRL:** Represented by a purple solid line with star markers (☆).

* **Grid:** A light gray grid is present, aiding in value estimation.

### Detailed Analysis

**Data Series & Trends:**

1. **FedProto (Green, Dashed Line with Circles):**

    * **Trend:** The line slopes steeply downward from left to right, indicating a significant decrease in accuracy as the number of classes grows.

    * **Approximate Data Points:**

        * 10 Classes: ~55% accuracy

        * 30 Classes: ~25% accuracy

        * 50 Classes: ~15% accuracy

        * 70 Classes: ~12% accuracy

        * 90 Classes: ~10% accuracy

        * 100 Classes: ~8% accuracy

2. **FedMRL (Purple, Solid Line with Stars):**

    * **Trend:** Also slopes downward, but stays above FedProto at every measured point. After the initial drop between 10 and 30 classes, the decline flattens considerably.

    * **Approximate Data Points:**

        * 10 Classes: ~60% accuracy

        * 30 Classes: ~30% accuracy

        * 50 Classes: ~19% accuracy

        * 70 Classes: ~16% accuracy

        * 90 Classes: ~14% accuracy

        * 100 Classes: ~13% accuracy

**Spatial Grounding & Verification:**

* The legend is positioned in the top-right, clearly associating the green circle with "FedProto" and the purple star with "FedMRL".

* At each x-axis value (10, 30, 50, etc.), the purple star marker is positioned vertically higher than the corresponding green circle marker, confirming FedMRL's superior accuracy at each point.

* The vertical gap between the two lines stays roughly constant at about 4 to 5 percentage points across the whole range (based on the approximate data points above). It does not visibly narrow, and FedMRL remains above FedProto throughout.
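The gap between the two lines can be checked directly from the approximate data points listed above. The following is a minimal sketch; the values are visual estimates read off the chart rather than exact results, and the variable names are illustrative.

```python
# Approximate test accuracies (%) read off the chart; visual estimates, not exact values.
classes = [10, 30, 50, 70, 90, 100]
fedproto = [55, 25, 15, 12, 10, 8]
fedmrl = [60, 30, 19, 16, 14, 13]

# Absolute gap (FedMRL minus FedProto), in percentage points, at each class count.
gaps = {n: m - p for n, p, m in zip(classes, fedproto, fedmrl)}

for n, gap in gaps.items():
    print(f"{n:>3} classes: gap = {gap} points")

# FedMRL sits above FedProto at every measured point.
assert all(gap > 0 for gap in gaps.values())
```

With these estimates the gap is 4 to 5 points at every class count. Because the readings are approximate, differences of about one point are within reading error.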

### Key Observations

1. **Performance Degradation:** Both methods experience a sharp decline in test accuracy when moving from 10 to 30 classes, with the decline continuing at a slower rate thereafter.

2. **Consistent Superiority:** FedMRL outperforms FedProto at every data point shown on the chart.

3. **Stable Absolute Gap:** The absolute difference in accuracy between the two methods stays at roughly 4 to 5 percentage points from 10 to 100 classes rather than converging. Because both accuracies fall while the gap holds steady, FedMRL's relative advantage grows with the class count: about 9% over FedProto at 10 classes (~60 vs. ~55) and roughly 60% at 100 classes (~13 vs. ~8), based on the approximate data points.

4. **Low Final Accuracy:** Both methods achieve very low accuracy when classifying 100 classes (roughly 8% for FedProto and 13% for FedMRL), highlighting the difficulty of the task.
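To compare the methods in relative rather than absolute terms, the approximate data points can be turned into a percentage gain over FedProto. This is a sketch under the assumption that the visual estimates are close to the plotted values; `relative_advantage` is a hypothetical helper introduced only for illustration.

```python
# Approximate test accuracies (%) read off the chart; visual estimates, not exact values.
# Each entry maps a class count to (FedProto accuracy, FedMRL accuracy).
readings = {
    10: (55, 60),
    30: (25, 30),
    50: (15, 19),
    70: (12, 16),
    90: (10, 14),
    100: (8, 13),
}


def relative_advantage(fedproto_acc, fedmrl_acc):
    """Return FedMRL's gain as a percentage of FedProto's accuracy."""
    return 100.0 * (fedmrl_acc - fedproto_acc) / fedproto_acc


advantage = {n: round(relative_advantage(p, m), 1) for n, (p, m) in readings.items()}

for n, pct in advantage.items():
    print(f"{n:>3} classes: FedMRL is {pct}% above FedProto")
```

Under these estimates the relative gain rises from about 9% at 10 classes to about 62% at 100 classes, even though the absolute gap barely changes. Small reading errors have a large effect on the ratio at the low-accuracy end, so the later values should be treated as rough.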

### Interpretation

The chart illustrates a fundamental challenge in machine learning: scalability to a large number of categories. The steep initial drop suggests that difficulty does not grow linearly with each added class. Most of the accuracy loss occurs in the step from 10 to 30 classes, and each further class costs far less.
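The non-linear decline can be made concrete by computing the accuracy change per added class between consecutive measured points. The sketch below uses the approximate data points from the Detailed Analysis section; the numbers are visual estimates, and `per_class_slopes` is a hypothetical helper name.

```python
# Approximate test accuracies (%) read off the chart; visual estimates, not exact values.
classes = [10, 30, 50, 70, 90, 100]
accuracy = {
    "FedProto": [55, 25, 15, 12, 10, 8],
    "FedMRL": [60, 30, 19, 16, 14, 13],
}


def per_class_slopes(xs, ys):
    """Accuracy change (points) per added class between consecutive measurements."""
    points = list(zip(xs, ys))
    return [
        round((y1 - y0) / (x1 - x0), 2)
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    ]


slopes = {name: per_class_slopes(classes, ys) for name, ys in accuracy.items()}

for name, values in slopes.items():
    print(name, values)
```

For both methods the first interval (10 to 30 classes) loses about 1.5 points per added class, while every later interval loses about 0.5 points per class or less, which matches the flattening visible in the chart.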

FedMRL's consistent lead implies its underlying methodology (likely involving meta-learning or representation learning in a federated setting) provides more robust or generalizable features than FedProto's approach. By the approximate data points, this advantage holds at about 4 to 5 percentage points across all class counts, so it does not vanish as the task gets harder. Even so, at 100 classes both methods remain at low absolute accuracy (~13% and ~8%), which suggests that the inherent difficulty of the task, more than the choice between these two methods, dominates performance at that scale.

The data suggests that for practical applications on CIFAR-100-like data, FedMRL is the preferable method, but neither approach scales well to the full 100-class problem under the conditions tested. This could indicate a need for more data, more sophisticated models, or different federated learning strategies for high-class-count scenarios.