## Horizontal Bar Chart: LLM Model Performance Comparison

### Overview

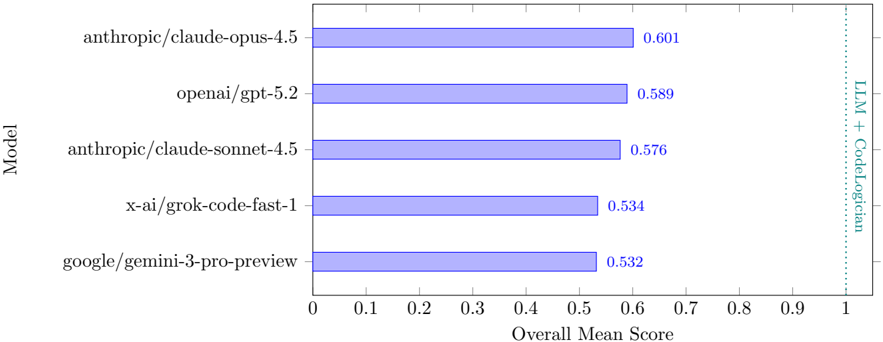

The image displays a horizontal bar chart comparing the performance of five different Large Language Models (LLMs) based on an "Overall Mean Score." The chart is oriented with models listed vertically on the y-axis and their corresponding scores on the x-axis. A vertical dashed line on the far right serves as a reference point.

### Components/Axes

* **Y-Axis (Vertical):** Labeled "Model." It lists five specific model identifiers.

* **X-Axis (Horizontal):** Labeled "Overall Mean Score." The scale runs from 0 to 1, with major tick marks every 0.1 (0, 0.1, 0.2, ..., 1.0).

* **Data Series:** Five horizontal bars, all in a uniform light blue/periwinkle color. Each bar's length corresponds to its score, and the exact numerical value is printed to the right of each bar.

* **Reference Line:** A vertical, dashed, teal-colored line is positioned at the x-axis value of 1.0. It is labeled vertically along its right side with the text "LLM + CodeLogician."
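The components listed above are enough to redraw an approximation of the figure. The following is a minimal sketch, assuming matplotlib 3.4 or later is installed; it uses the five labels and scores printed in the chart. The hex color, figure size, and output file name are guesses made for illustration and are not taken from the original figure.

```python
# Labels and scores as printed in the chart, top bar first.
SCORES = [
    ("anthropic/claude-opus-4.5", 0.601),
    ("openai/gpt-5.2", 0.589),
    ("anthropic/claude-sonnet-4.5", 0.576),
    ("x-ai/grok-code-fast-1", 0.534),
    ("google/gemini-3-pro-preview", 0.532),
]


def plot_scores(path="llm_scores.png"):
    """Draw the horizontal bar chart and save it to `path` (hypothetical file name)."""
    # Imported here so the data above can be used without matplotlib installed.
    import matplotlib
    matplotlib.use("Agg")  # render off-screen, no display needed
    import matplotlib.pyplot as plt

    labels = [name for name, _ in SCORES]
    values = [score for _, score in SCORES]

    fig, ax = plt.subplots(figsize=(8, 3.5))          # assumed size
    bars = ax.barh(labels, values, color="#9fa8da")   # approximate periwinkle
    ax.bar_label(bars, fmt="%.3f", padding=3)         # value to the right of each bar
    ax.invert_yaxis()                                 # highest score at the top

    ax.set_xlabel("Overall Mean Score")
    ax.set_ylabel("Model")
    ax.set_xlim(0, 1.05)
    ax.set_xticks([i / 10 for i in range(11)])        # 0, 0.1, ..., 1.0

    # Dashed reference line at 1.0 with its label running vertically.
    ax.axvline(1.0, linestyle="--", color="teal")
    ax.text(1.01, 0.5, "LLM + CodeLogician", rotation=90,
            va="center", color="teal", transform=ax.get_xaxis_transform())

    fig.tight_layout()
    fig.savefig(path)
    plt.close(fig)
    return path
```

Calling `plot_scores("llm_scores.png")` writes the figure to disk. The exact fonts, spacing, and colors of the original image will differ.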

### Detailed Analysis

The models are presented in descending order of their Overall Mean Score. The exact scores are as follows:

1. **Top Bar (Highest Score):**

   * **Model Label:** `anthropic/claude-opus-4.5`

   * **Score:** 0.601

   * **Visual Trend:** This is the longest bar, extending just past the 0.6 mark on the x-axis.

2. **Second Bar:**

   * **Model Label:** `openai/gpt-5.2`

   * **Score:** 0.589

   * **Visual Trend:** Slightly shorter than the top bar, ending between the 0.5 and 0.6 marks, closer to 0.6.

3. **Third Bar:**

   * **Model Label:** `anthropic/claude-sonnet-4.5`

   * **Score:** 0.576

   * **Visual Trend:** Marginally shorter than the second bar.

4. **Fourth Bar:**

   * **Model Label:** `x-ai/grok-code-fast-1`

   * **Score:** 0.534

   * **Visual Trend:** Noticeably shorter than the top three bars, ending just past the 0.5 mark.

5. **Bottom Bar (Lowest Score):**

   * **Model Label:** `google/gemini-3-pro-preview`

   * **Score:** 0.532

   * **Visual Trend:** The shortest bar, nearly identical in length to the fourth bar, with a difference of only 0.002.

### Key Observations

* **Performance Cluster:** The top three models (Claude Opus 4.5, GPT-5.2, Claude Sonnet 4.5) form a tight cluster, with scores from 0.576 to 0.601 (a spread of 0.025).

* **Performance Gap:** There is a distinct drop of 0.042 between the third-ranked model (0.576) and the fourth-ranked model (0.534), the largest gap between any two adjacent ranks.

* **Close Competition at the Bottom:** The bottom two models (Grok and Gemini) have nearly indistinguishable scores, separated by only 0.002.

* **Reference Point:** All five models score well below the reference line at 1.0, which is labeled "LLM + CodeLogician." The highest score (0.601) is about 60% of the value indicated by this reference line.
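The figures quoted in these observations can be rechecked directly from the printed scores. The snippet below is a small sketch of that arithmetic; the variable names are illustrative, and differences are rounded to three decimals to match the precision shown in the chart.

```python
# Scores as printed next to each bar in the chart.
scores = {
    "anthropic/claude-opus-4.5": 0.601,
    "openai/gpt-5.2": 0.589,
    "anthropic/claude-sonnet-4.5": 0.576,
    "x-ai/grok-code-fast-1": 0.534,
    "google/gemini-3-pro-preview": 0.532,
}
REFERENCE = 1.0  # x-position of the "LLM + CodeLogician" line

# Rank models from highest to lowest score.
ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Difference between each model and the next one down the ranking.
adjacent_gaps = [
    (upper[0], lower[0], round(upper[1] - lower[1], 3))
    for upper, lower in zip(ranked, ranked[1:])
]

top_three_spread = round(ranked[0][1] - ranked[2][1], 3)
best_vs_reference = ranked[0][1] / REFERENCE

for upper, lower, gap in adjacent_gaps:
    print(f"{upper} -> {lower}: {gap:.3f}")
print(f"top-three spread: {top_three_spread:.3f}")
print(f"best score as a share of the reference: {best_vs_reference:.1%}")
```

The adjacent gaps come out as 0.012, 0.013, 0.042, and 0.002, and the top score is 60.1% of the reference value.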

### Interpretation

This chart provides a snapshot of comparative performance among leading LLMs on a specific benchmark or evaluation metric, likely related to coding or logical reasoning given the "CodeLogician" reference.

* **What the Data Suggests:** On this particular task, Anthropic's Claude Opus 4.5 holds a slight performance advantage over its competitors, though its margin over OpenAI's GPT-5.2 is only 0.012. The ranking is unambiguous, but the differences between adjacent ranks are modest, ranging from 0.002 to 0.042.

* **Relationship of Elements:** The uniform color of the bars focuses attention on the length (score) as the sole differentiating factor. The vertical dashed line at 1.0 frames every model's standalone score as falling short of the level marked "LLM + CodeLogician," whether that level is a defined target or the capability of the combined system. Because the line coincides with the upper end of the x-axis, the scores appear to be measured on a scale where 1.0 represents a maximum or ideal performance level.

* **Notable Anomalies/Trends:** The most striking pattern is the split into two tiers: a top tier of three models scoring between 0.576 and 0.601, and a lower tier of two models scoring 0.534 and 0.532. The near-identical scores of the bottom two models suggest they may have hit a similar performance ceiling on this evaluation. Every model falls at least 0.399 short of the 1.0 reference line, indicating substantial room for improvement across the board for this specific capability.
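One simple way to make the two-tier reading concrete is to cut the ranking at its widest adjacent gap. This is only an illustrative heuristic, not a method attributed to the chart's authors, and the function name `split_at_largest_gap` is hypothetical.

```python
def split_at_largest_gap(ranked):
    """Split a descending list of (name, score) pairs at the widest adjacent gap."""
    if len(ranked) < 2:
        return list(ranked), []
    # gaps[i] is the drop from position i to position i + 1.
    gaps = [ranked[i][1] - ranked[i + 1][1] for i in range(len(ranked) - 1)]
    # Cut just after the position where the drop is largest.
    cut = max(range(len(gaps)), key=gaps.__getitem__) + 1
    return list(ranked[:cut]), list(ranked[cut:])


# Scores as printed in the chart, already in descending order.
ranked_scores = [
    ("anthropic/claude-opus-4.5", 0.601),
    ("openai/gpt-5.2", 0.589),
    ("anthropic/claude-sonnet-4.5", 0.576),
    ("x-ai/grok-code-fast-1", 0.534),
    ("google/gemini-3-pro-preview", 0.532),
]

top_tier, lower_tier = split_at_largest_gap(ranked_scores)
print("top tier:  ", [name for name, _ in top_tier])
print("lower tier:", [name for name, _ in lower_tier])
```

With these five scores the cut falls between the third and fourth models, which matches the tiers described above. With so few data points, the split is descriptive rather than statistically meaningful.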