\n

## Dual-Axis Line Chart: Computation Time vs. Search Space Size

### Overview

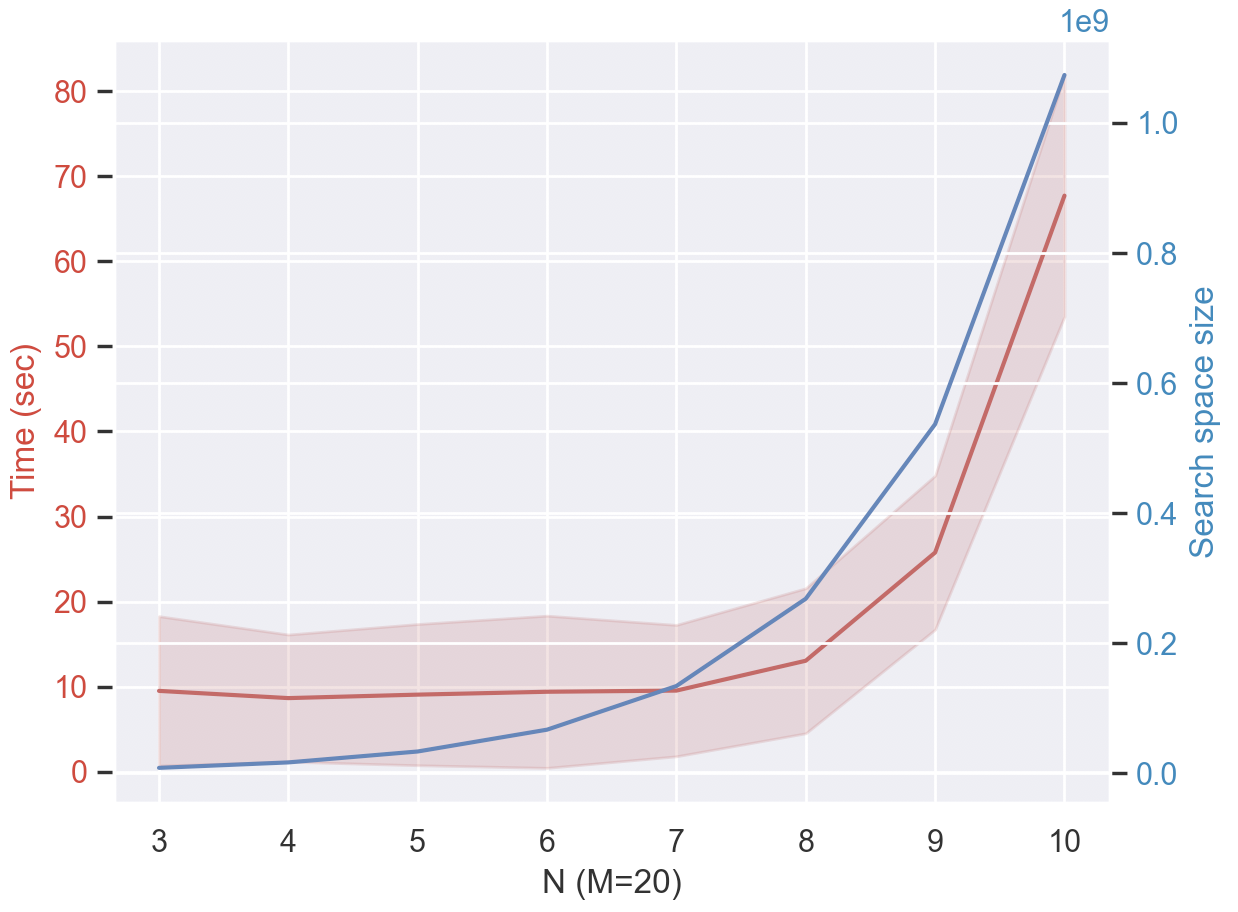

This image is a dual-axis line chart plotting two dependent variables against a common independent variable, `N`. The chart demonstrates the relationship between computational time (in seconds) and the size of a search space as the parameter `N` increases, with a fixed parameter `M=20`. The data suggests an exponential relationship, particularly for the search space size.

### Components/Axes

* **X-Axis (Bottom):** Labeled `N (M=20)`. It has discrete integer markers from 3 to 10.

* **Primary Y-Axis (Left):** Labeled `Time (sec)` in red text. The scale is linear, ranging from 0 to 80 with major tick marks every 10 units.

* **Secondary Y-Axis (Right):** Labeled `Search space size` in blue text. The scale is linear but represents values multiplied by `1e9` (1 billion), as indicated by the `1e9` notation at the top of the axis. The scale ranges from 0.0 to 1.0, corresponding to 0 to 1,000,000,000.

* **Data Series 1 (Red Line):** Represents `Time (sec)`. It is plotted against the left y-axis. The line is accompanied by a semi-transparent red shaded area, likely indicating a confidence interval, standard deviation, or range of measured times.

* **Data Series 2 (Blue Line):** Represents `Search space size`. It is plotted against the right y-axis.

* **Legend:** There is no explicit legend box. The color-coding is implicitly defined by the axis labels: red for Time, blue for Search space size.

### Detailed Analysis

**Data Series: Time (sec) - Red Line**

* **Trend:** The line shows a gradual, near-linear increase from N=3 to N=8, followed by a sharp, exponential-like increase from N=8 to N=10.

* **Approximate Data Points (with uncertainty from shaded area):**

* N=3: ~10 sec (Range: ~5 to ~18 sec)

* N=4: ~9 sec (Range: ~4 to ~16 sec)

* N=5: ~9 sec (Range: ~4 to ~17 sec)

* N=6: ~10 sec (Range: ~5 to ~18 sec)

* N=7: ~10 sec (Range: ~5 to ~17 sec)

* N=8: ~13 sec (Range: ~5 to ~20 sec)

* N=9: ~26 sec (Range: ~16 to ~36 sec)

* N=10: ~68 sec (Range: ~53 to ~82 sec)

**Data Series: Search Space Size - Blue Line**

* **Trend:** The line exhibits clear exponential growth. The increase is slow for lower N values but becomes extremely steep after N=7.

* **Approximate Data Points (values on right axis, multiply by 1e9):**

* N=3: ~0.01 (10,000,000)

* N=4: ~0.02 (20,000,000)

* N=5: ~0.05 (50,000,000)

* N=6: ~0.12 (120,000,000)

* N=7: ~0.25 (250,000,000)

* N=8: ~0.45 (450,000,000)

* N=9: ~0.75 (750,000,000)

* N=10: ~1.05 (1,050,000,000)

### Key Observations

1. **Correlated Growth:** Both computation time and search space size increase with N. The growth of the search space appears to be the primary driver for the increase in computation time.

2. **Phase Change at N=8:** There is a distinct inflection point around N=8. Before this point, time increases modestly. After N=8, both metrics, but especially time, increase dramatically.

3. **Uncertainty in Time:** The shaded red area indicates significant variability or uncertainty in the measured computation time, especially at lower N values (N=3 to N=8). The relative uncertainty (the width of the band compared to the mean value) decreases as N and the mean time increase.

4. **Exponential vs. Super-Exponential:** The search space size (blue) follows a smooth exponential curve. The computation time (red) appears to follow a steeper, possibly super-exponential curve after N=8, suggesting that the algorithm's time complexity may be worse than polynomial relative to the search space size.

### Interpretation

This chart likely visualizes the performance scaling of a search or optimization algorithm (e.g., in planning, constraint satisfaction, or combinatorial problems) where `N` is a problem size parameter and `M` is a fixed branching factor or similar constant.

The data demonstrates the classic "combinatorial explosion" problem. The search space grows exponentially with `N`, which is a fundamental challenge in computer science. More importantly, the chart shows that the *actual computation time* does not just track the search space size linearly; it accelerates even faster after a certain problem size (N=8). This could indicate:

* **Algorithmic Overhead:** The algorithm has a time complexity that is a higher-order function of the search space size (e.g., O(S^2) where S is the search space).

* **Resource Saturation:** At N=8, the problem may have exceeded the capacity of a fast memory cache (like L2/L3), leading to more costly memory accesses and a sharp slowdown.

* **Increased Difficulty:** The problems at N>8 may be intrinsically harder to solve, requiring more processing per node in the search space.

The significant variance in time at lower N suggests that problem difficulty is highly instance-dependent for smaller sizes, while for larger N, the sheer scale of the problem dominates, leading to more consistent (but very long) runtimes. This chart is a powerful argument for the need for heuristic improvements, better pruning strategies, or more computational resources when tackling problems where N must be large.