## Line Chart: Loss vs. Epochs for MRL and SMRL Methods

### Overview

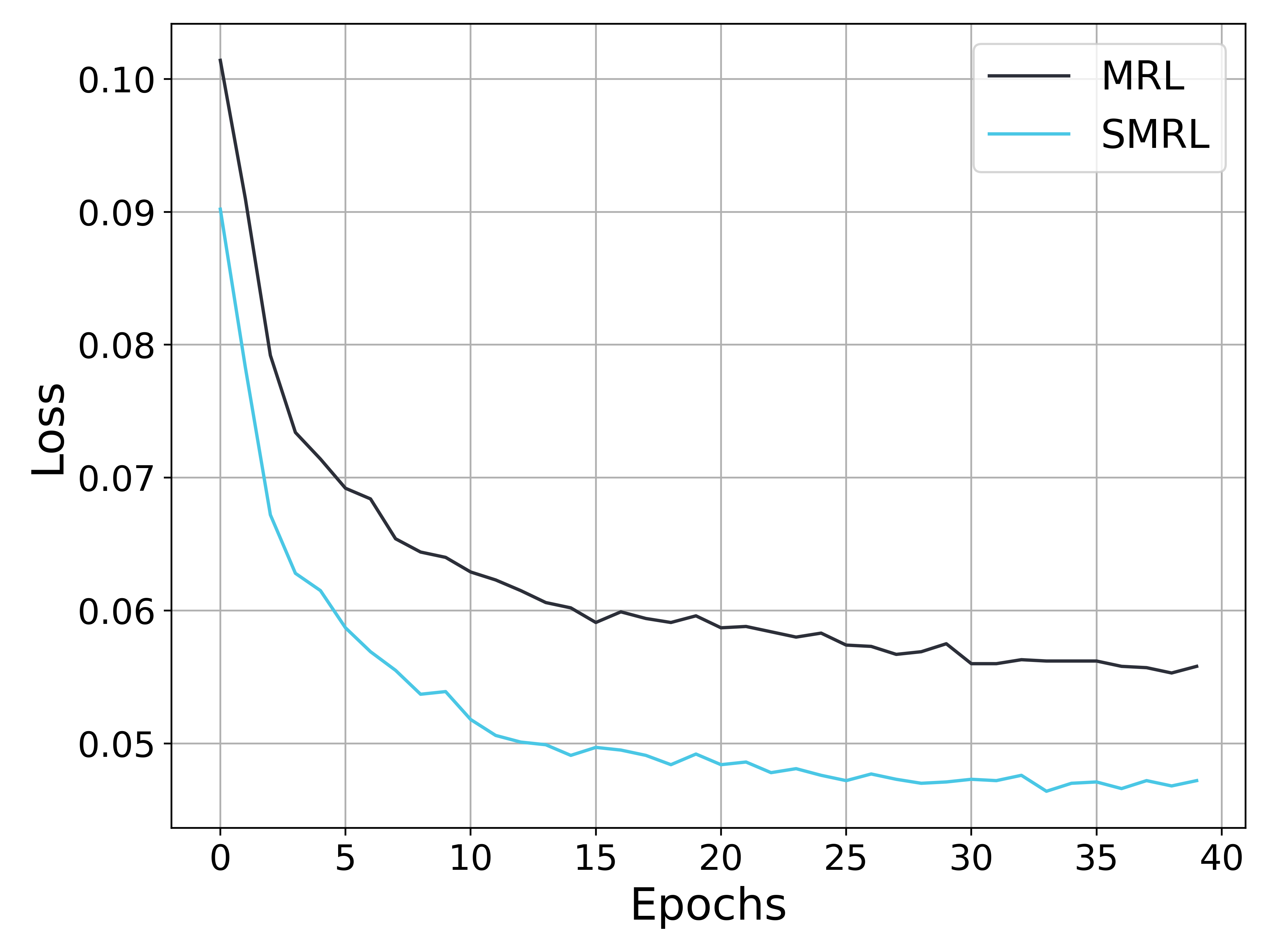

The image is a line chart comparing the loss of two methods, labeled "MRL" and "SMRL", over 40 training epochs. The learning curves show how the loss decreases as training progresses under each approach. The y-axis is labeled only "Loss", so the chart does not state whether this is training or validation loss.

### Components/Axes

* **Chart Type:** Line chart with two data series.
* **X-Axis:**
  * **Label:** "Epochs"
  * **Scale:** Linear, from 0 to 40.
  * **Major Tick Marks:** 0, 5, 10, 15, 20, 25, 30, 35, 40.
* **Y-Axis:**
  * **Label:** "Loss"
  * **Scale:** Linear, from approximately 0.045 to 0.105.
  * **Major Tick Marks:** 0.05, 0.06, 0.07, 0.08, 0.09, 0.10.
* **Legend:**
  * **Position:** Top-right corner of the chart area.
  * **Entries:**
    1. **MRL:** Represented by a dark gray (almost black) solid line.
    2. **SMRL:** Represented by a cyan (light blue) solid line.
* **Grid:** A light gray grid is present, aligned with the major tick marks on both axes.

### Detailed Analysis

**Data Series 1: MRL (Dark Gray Line)**

* **Trend:** Loss falls steeply at first, then declines gradually and begins to plateau after approximately epoch 20.

* **Approximate Data Points:**
  * Epoch 0: Loss ≈ 0.101
  * Epoch 5: Loss ≈ 0.073
  * Epoch 10: Loss ≈ 0.063
  * Epoch 20: Loss ≈ 0.059
  * Epoch 30: Loss ≈ 0.056
  * Epoch 40: Loss ≈ 0.056 (final value)

**Data Series 2: SMRL (Cyan Line)**

* **Trend:** As with MRL, loss drops sharply at first and then decreases more slowly. The SMRL curve stays below the MRL curve for the entire training period shown.

* **Approximate Data Points:**
  * Epoch 0: Loss ≈ 0.090
  * Epoch 5: Loss ≈ 0.059
  * Epoch 10: Loss ≈ 0.052
  * Epoch 20: Loss ≈ 0.049
  * Epoch 30: Loss ≈ 0.048
  * Epoch 40: Loss ≈ 0.047 (final value)

### Key Observations

1. **Performance Gap:** SMRL has a lower loss than MRL at every epoch shown. The gap is already present at epoch 0 (≈0.011), is widest around epoch 5 (≈0.014), and then narrows only slightly, holding at roughly 0.008 to 0.010 from epoch 20 onward.

2. **Convergence Behavior:** Both curves follow a typical convergence pattern: rapid improvement (steep descent) over the first 5 to 10 epochs, followed by diminishing returns as the curves flatten (gradual descent toward a plateau).

3. **Final Values:** By epoch 40, the loss for SMRL (~0.047) is approximately 16% lower than the loss for MRL (~0.056).

4. **Stability:** Both lines show minor fluctuations ("noise") after epoch 20, but neither curve rises appreciably and the overall downward trend is clear.
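The observations above rest on the approximate readings listed under Detailed Analysis. A minimal Python sketch (standard library only) can recompute them from those readings. The function names are hypothetical helpers for illustration, and the inputs are values read off the chart rather than logged losses, so every result is approximate.

```python
# Approximate points read off the chart (not logged training values).
EPOCHS = [0, 5, 10, 20, 30, 40]
LOSS = {
    "MRL": [0.101, 0.073, 0.063, 0.059, 0.056, 0.056],
    "SMRL": [0.090, 0.059, 0.052, 0.049, 0.048, 0.047],
}


def gaps(baseline, candidate):
    """Absolute gap (baseline - candidate) at each sampled epoch."""
    return [b - c for b, c in zip(baseline, candidate)]


def decrease_per_epoch(epochs, losses):
    """Average loss reduction per epoch between consecutive sampled points."""
    return [
        (l0 - l1) / (e1 - e0)
        for e0, e1, l0, l1 in zip(epochs, epochs[1:], losses, losses[1:])
    ]


def relative_reduction(baseline, candidate):
    """Fraction by which `candidate` is lower than `baseline`."""
    return (baseline - candidate) / baseline


gap = gaps(LOSS["MRL"], LOSS["SMRL"])
rates = {name: decrease_per_epoch(EPOCHS, ys) for name, ys in LOSS.items()}
final_rel = relative_reduction(LOSS["MRL"][-1], LOSS["SMRL"][-1])

# Observation 1: SMRL is lower at every sampled epoch.
print("gap by epoch:", {e: round(g, 3) for e, g in zip(EPOCHS, gap)})
print("SMRL lower everywhere:", all(g > 0 for g in gap))
# Observation 2: the per-epoch improvement shrinks from interval to interval.
for name, r in rates.items():
    print(name, "loss drop per epoch:", [round(x, 4) for x in r])
# Observation 3: relative reduction at epoch 40.
print(f"final relative reduction: {final_rel:.1%}")
```

On these readings, MRL's average drop falls from about 0.0056 per epoch over epochs 0 to 5 to essentially zero over epochs 30 to 40, and the epoch-40 reduction comes out at about 16.1%. Because the inputs are read from gridlines, that last figure is sensitive: if each reading is only good to about ±0.001 (an assumption), the relative reduction could plausibly lie anywhere from roughly 13% to 19%.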

### Interpretation

This chart provides a direct performance comparison between two training methodologies, MRL and SMRL. Because SMRL reaches consistently lower loss values throughout training, the data suggest that the **SMRL method is more effective** for the given task, at least as measured by this loss; a lower loss does not by itself guarantee better performance on held-out data or downstream metrics.

The x-axis (Epochs) measures training progress in full passes over the training data, and the y-axis (Loss) is the error or cost function being minimized. The downward slope of both lines indicates that both models are learning. What distinguishes SMRL is its **efficiency and final performance**: it is lower early in training and remains lower at the end.

The most notable feature is not a single outlier point but the **persistent performance gap**. Assuming both curves measure the same loss on the same data, this points to a systematic difference in how the two methods optimize or represent the problem: SMRL starts lower (≈0.090 vs. ≈0.101 at epoch 0), drops slightly faster through epoch 5, and keeps its advantage thereafter. The flattening of both curves suggests that further significant gains beyond epoch 40 would likely require changes to the training regimen (e.g., learning-rate adjustment) or the model architecture, since the current optimization process appears to be approaching its limit for both methods.
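The plateau claim can be made slightly more concrete with a simple rule: compare the loss at the end of training with the loss a fixed number of epochs earlier, and call the curve plateaued if the relative improvement falls below a threshold. The sketch below is a hypothetical heuristic in the same spirit as patience/threshold-based learning-rate schedulers (for example PyTorch's `ReduceLROnPlateau`), not that scheduler's actual logic. The `has_plateaued` helper, the 10-epoch window, and the 5% threshold are all illustrative assumptions, applied to the approximate chart readings.

```python
from typing import Dict

# Approximate chart readings (epoch -> loss), not logged values.
MRL = {0: 0.101, 5: 0.073, 10: 0.063, 20: 0.059, 30: 0.056, 40: 0.056}
SMRL = {0: 0.090, 5: 0.059, 10: 0.052, 20: 0.049, 30: 0.048, 40: 0.047}


def has_plateaued(curve: Dict[int, float], window: int = 10,
                  min_rel_improvement: float = 0.05) -> bool:
    """Return True if the loss fell by less than `min_rel_improvement`
    (as a fraction) over the final `window` epochs.

    Hypothetical rule of thumb; assumes epoch `last - window` was sampled.
    """
    last = max(curve)
    earlier = curve[last - window]
    improvement = (earlier - curve[last]) / earlier
    return improvement < min_rel_improvement


for name, curve in (("MRL", MRL), ("SMRL", SMRL)):
    print(name, "plateaued by epoch 40:", has_plateaued(curve))

# The same rule applied to the first 10 epochs only does not flag a plateau.
early_mrl = {e: v for e, v in MRL.items() if e <= 10}
print("MRL plateaued by epoch 10:", has_plateaued(early_mrl))
```

With the assumed 5% threshold, both curves count as plateaued by epoch 40 (MRL improves by 0% and SMRL by about 2% over epochs 30 to 40). With a stricter 1% threshold the rule flags only MRL, so the verdict depends on the threshold chosen.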