## Flowchart: Task Planning and Agent Optimization Workflow

### Overview

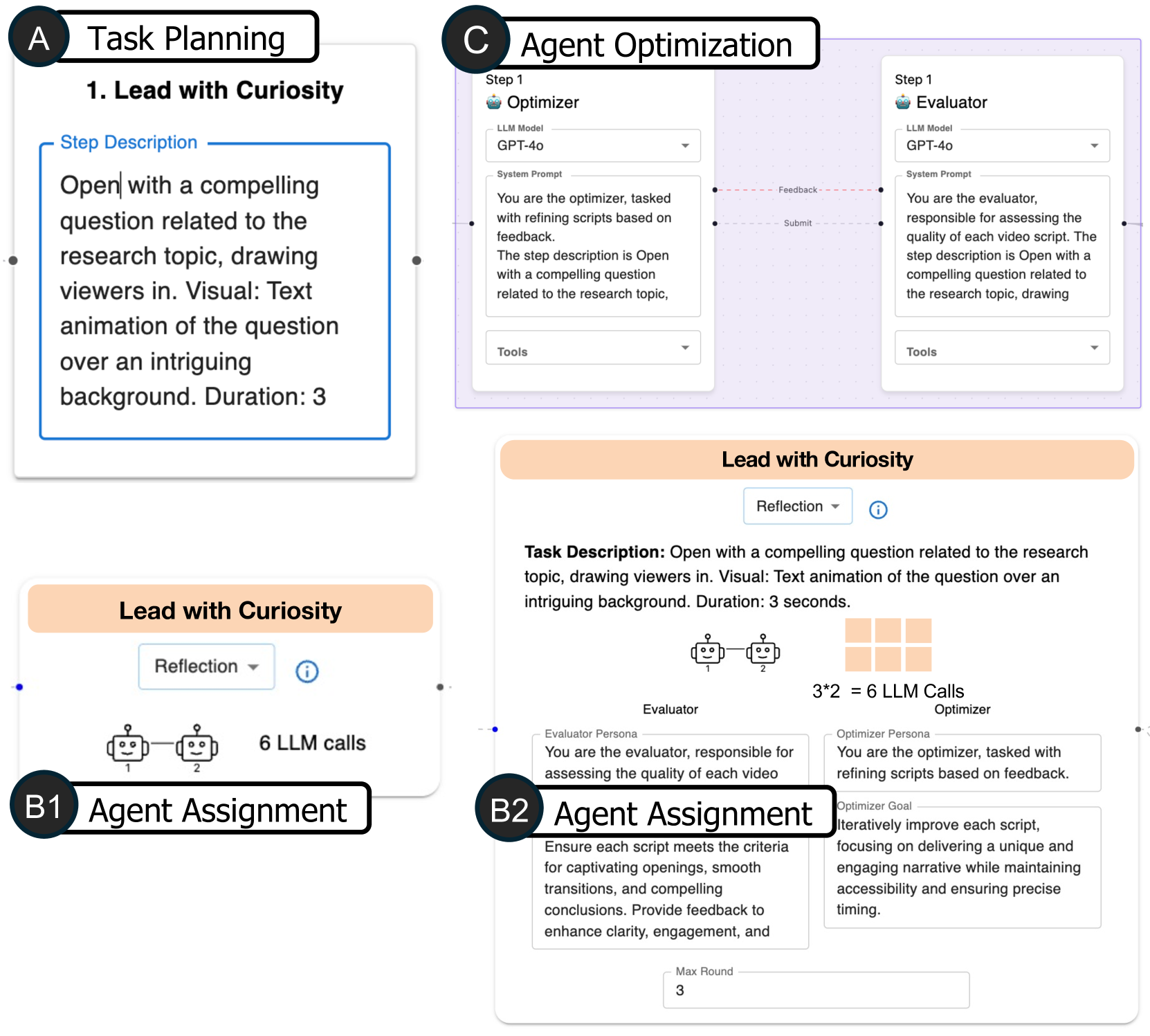

The image depicts a multi-stage workflow for creating and optimizing video scripts with AI agents. It covers task planning, agent assignment, and iterative optimization. Key components include a curiosity-driven opening, separate evaluator and optimizer roles, and a feedback loop between them.

### Components

1. **Task Planning (Section A)**

   - **Step 1: Lead with Curiosity**

     - **Step Description**: Open with a compelling question related to the research topic, drawing viewers in.

     - **Visual**: Text animation of the question over an intriguing background.

     - **Duration**: 3 seconds.

     - **Reflection**: Dropdown menu labeled "Reflection" with an info icon.

2. **Agent Assignment (Sections B1 and B2)**

   - **B1: Agent Assignment**

     - **6 LLM Calls**: Visual representation of two interconnected agents (labeled 1 and 2) with a total of 6 LLM calls.

   - **B2: Agent Assignment**

     - **Evaluator Persona**: Responsible for assessing script quality (captivating openings, smooth transitions, compelling conclusions). Provides feedback to enhance clarity and engagement.

     - **Optimizer Persona**: Iteratively improves scripts, focusing on unique narratives, accessibility, and precise timing.

     - **Max Round**: 3 iterations.

3. **Agent Optimization (Section C)**

   - **Step 1: Optimizer**

     - **LLM Model**: GPT-4o.

     - **System Prompt**: "You are the optimizer, tasked with refining scripts based on feedback."

   - **Step 1: Evaluator**

     - **LLM Model**: GPT-4o.

     - **System Prompt**: "You are the evaluator, responsible for assessing the quality of each video script."

   - **Feedback Loop**: Dashed red lines connect Optimizer and Evaluator, indicating iterative refinement.
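
The agent settings in Sections B2 and C can be captured as a small, immutable configuration object. This is a minimal Python sketch: the class and field names (`AgentSpec`, `WorkflowConfig`, `criteria`) are hypothetical, and only the roles, criteria, model label, system prompts, and round limit are taken from the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """One agent persona. Field names are hypothetical; values follow the diagram."""
    role: str
    model: str
    system_prompt: str
    criteria: tuple[str, ...]


@dataclass(frozen=True)
class WorkflowConfig:
    """Evaluator/optimizer pair plus the round limit set in Section B2."""
    evaluator: AgentSpec
    optimizer: AgentSpec
    max_rounds: int = 3  # "Max Round: 3 iterations"

    def __post_init__(self) -> None:
        # A loop with zero rounds would never revise the script.
        if self.max_rounds < 1:
            raise ValueError("max_rounds must be at least 1")


CONFIG = WorkflowConfig(
    evaluator=AgentSpec(
        role="Evaluator",
        model="GPT-4o",
        system_prompt="You are the evaluator, responsible for assessing the quality of each video script.",
        criteria=("captivating openings", "smooth transitions", "compelling conclusions"),
    ),
    optimizer=AgentSpec(
        role="Optimizer",
        model="GPT-4o",
        system_prompt="You are the optimizer, tasked with refining scripts based on feedback.",
        criteria=("unique narratives", "accessibility", "precise timing"),
    ),
)
```

Freezing the dataclasses keeps the configuration fixed once a run starts, so the round limit or a persona's prompt cannot be changed partway through the loop.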

### Detailed Analysis

- **Task Planning**: The initial step emphasizes engaging viewers with a curiosity-driven question, supported by a 3-second text animation.

- **Agent Roles**:

  - **Evaluator**: Focuses on script quality criteria (e.g., openings, transitions, conclusions).

  - **Optimizer**: Prioritizes narrative uniqueness, accessibility, and timing precision.

- **Feedback Mechanism**: The dashed lines in Section C suggest a cyclical process where the Evaluator’s feedback informs the Optimizer’s revisions.

- **LLM Usage**: Both the Optimizer and the Evaluator are configured to use GPT-4o.
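
The Optimizer/Evaluator feedback loop described above can be sketched in Python. This is a minimal sketch, not the tool's implementation: `optimize_script`, `LoopResult`, and the `(system_prompt, user_message) -> str` callable signature are hypothetical stand-ins for whatever client actually calls GPT-4o. The diagram also does not say which agent acts first in a round; this sketch runs the Optimizer first.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical LLM interface: (system_prompt, user_message) -> response text.
LLMFn = Callable[[str, str], str]

OPTIMIZER_PROMPT = "You are the optimizer, tasked with refining scripts based on feedback."
EVALUATOR_PROMPT = "You are the evaluator, responsible for assessing the quality of each video script."


@dataclass
class LoopResult:
    script: str
    feedback_history: list[str] = field(default_factory=list)
    llm_calls: int = 0


def optimize_script(draft: str, optimizer: LLMFn, evaluator: LLMFn,
                    max_rounds: int = 3) -> LoopResult:
    """Run up to max_rounds of optimize -> evaluate, feeding each critique into the next revision."""
    result = LoopResult(script=draft)
    feedback = ""  # no critique exists before the first round
    for _ in range(max_rounds):
        # Optimizer revises the current script using the latest feedback.
        result.script = optimizer(
            OPTIMIZER_PROMPT, f"Script:\n{result.script}\n\nFeedback:\n{feedback}"
        )
        result.llm_calls += 1
        # Evaluator critiques the revision; its output drives the next round.
        feedback = evaluator(EVALUATOR_PROMPT, f"Script:\n{result.script}")
        result.llm_calls += 1
        result.feedback_history.append(feedback)
    return result
```

Passing the model calls in as plain functions lets the loop be exercised with stubs before wiring it to a real API. As written, the loop always runs the full round limit; a check that stops once the Evaluator signals approval would be a possible extension, but the diagram does not show one.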

### Key Observations

- **Iterative Process**: The workflow relies on repeated refinement, capped at 3 rounds, to balance creativity and technical precision.

- **Resource Allocation**: B1 reports a total of 6 LLM calls across the two agents, which indicates the computational budget of the workflow.

- **Dual-Agent System**: Separation of evaluator and optimizer roles mirrors human creative workflows (e.g., writer-editor dynamics).
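
One plausible reading of the 6 LLM calls in B1, which the diagram does not state explicitly, is that each of the two agents is called once per round for the full 3-round maximum:

$$
N_{\text{calls}} = n_{\text{agents}} \times R_{\max} = 2 \times 3 = 6
$$

If the loop were allowed to stop early, this figure would be an upper bound rather than a fixed count.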

### Interpretation

This workflow illustrates a human-AI collaboration model for content creation: the task plan and agent settings appear to be configured through the interface, while the two agents carry out the revisions. The "Lead with Curiosity" step applies a psychological engagement tactic, while the agent assignments mirror specialized human roles (e.g., editors, directors). The feedback loop between Optimizer and Evaluator is designed to refine scripts over successive iterations against both artistic and technical criteria. The 3-second animation duration and the 6 LLM calls reflect constraints on on-screen time and computational budget, respectively.

**Notable Patterns**:

- The Optimizer’s goal to "deliver a unique and engaging narrative" while maintaining accessibility suggests a possible tension between novelty and accessibility.

- The Evaluator’s focus on "smooth transitions" implies technical polish is prioritized alongside content quality.

- The 3-round limit may reflect practical limits on revision cycles before finalizing a script.

**Underlying Implications**:

- The diagram assumes GPT-4o can act on nuanced feedback; how well it does so likely depends on the quality of the system prompts.

- Separating the evaluator and optimizer roles keeps each prompt focused on a single task, but every round then needs at least two sequential LLM calls, which adds latency to the feedback loop.

- The emphasis on captivating openings and "compelling conclusions" is consistent with the serial-position effect (primacy and recency), under which material at the beginning and end of a sequence tends to be remembered best.
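
The latency concern raised above can be made concrete under two assumptions the diagram does not state: the Optimizer and Evaluator calls run sequentially, and each round includes one call to each agent. The wall-clock time of the loop is then roughly the sum of the individual call latencies over the rounds actually run:

$$
T_{\text{loop}} \approx \sum_{r=1}^{R} \left( t^{(r)}_{\text{opt}} + t^{(r)}_{\text{eval}} \right), \qquad R \le R_{\max} = 3
$$

Because each revision depends on the previous critique, these calls cannot be parallelized for a single script, so the round limit also bounds end-to-end latency.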