## Bar Chart: NMSE Comparison Across Methods (ID vs. OOD)

### Overview

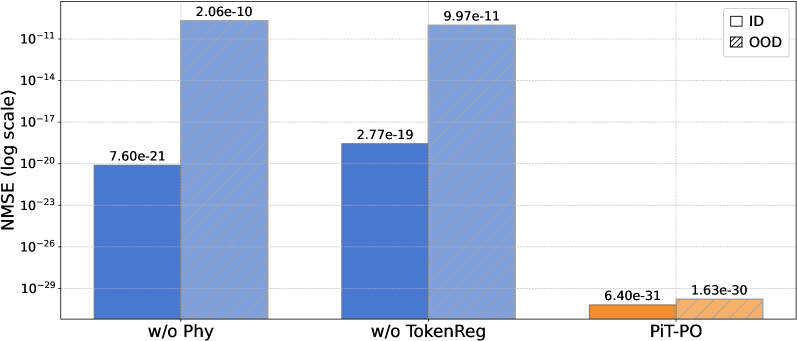

This grouped bar chart compares Normalized Mean Squared Error (NMSE), plotted on a logarithmic scale, across three model variants. Each variant has two bars: one for in-distribution (ID) data and one for out-of-distribution (OOD) data. The first two variants (`w/o Phy` and `w/o TokenReg`) show a gap of many orders of magnitude between ID and OOD error, while the third (`PiT-PO`) stays near `10^-30` in both settings.
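The chart does not state how NMSE is normalized. As a point of reference, here is a minimal sketch that assumes one common convention: the sum of squared errors divided by the sum of squared deviations of the targets from their mean. The function name `nmse` and the sample data are illustrative and do not come from the source.

```python
from statistics import fmean


def nmse(y_true, y_pred):
    """Squared error normalized by the spread of the targets.

    Assumed convention (not confirmed by the chart):
    sum((y - y_hat)^2) / sum((y - mean(y))^2).
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_y = fmean(y_true)
    squared_error = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    squared_spread = sum((t - mean_y) ** 2 for t in y_true)
    if squared_spread == 0:
        raise ValueError("targets are constant; NMSE is undefined")
    return squared_error / squared_spread


targets = [1.0, 2.0, 3.0, 4.0]
print(nmse(targets, targets))    # perfect predictions
print(nmse(targets, [2.5] * 4))  # always predicting the target mean
```

Under this convention a perfect model scores 0 and a model that always predicts the target mean scores 1, which is why values near `10^-30` indicate an almost exact fit.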

### Components/Axes

* **Chart Type:** Grouped bar chart.

* **Y-Axis:**

    * **Label:** `NMSE (log scale)`

    * **Scale:** Logarithmic, with labeled ticks from `10^-29` to `10^-11`. The smallest bar labels (`6.40e-31`, `1.63e-30`) and the largest (`2.06e-10`) fall outside this tick range, so the axis limits presumably extend past the outermost labeled ticks.

    * **Major Tick Marks:** `10^-29`, `10^-26`, `10^-23`, `10^-20`, `10^-17`, `10^-14`, `10^-11`.

* **X-Axis (Categories):** Three distinct methods/conditions:

    1. `w/o Phy`

    2. `w/o TokenReg`

    3. `PiT-PO`

* **Legend:** Located in the top-right corner.

    * `ID`: Represented by solid-colored bars.

    * `OOD`: Represented by hatched (diagonal lines) bars.

* **Bar Colors:** The first two method groups (`w/o Phy`, `w/o TokenReg`) use blue bars. The final group (`PiT-PO`) uses orange bars, likely to highlight it as the primary or proposed method.

### Detailed Analysis

The chart presents the following exact data points, read from the labels atop each bar:

| Method | Data Type | NMSE (Scientific Notation) | Decimal Equivalent (digits grouped in threes) |
| :----------- | :-------- | :------------------------- | :-------------------------------------------- |
| **w/o Phy** | ID | `7.60e-21` | 0.000 000 000 000 000 000 007 60 |
| **w/o Phy** | OOD | `2.06e-10` | 0.000 000 000 206 |
| **w/o TokenReg** | ID | `2.77e-19` | 0.000 000 000 000 000 000 277 |
| **w/o TokenReg** | OOD | `9.97e-11` | 0.000 000 000 099 7 |
| **PiT-PO** | ID | `6.40e-31` | 0.000 000 000 000 000 000 000 000 000 000 640 |
| **PiT-PO** | OOD | `1.63e-30` | 0.000 000 000 000 000 000 000 000 000 001 63 |
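Long runs of zeros are easy to miscount by hand. One way to check the decimal column is to expand each bar label with Python's standard `decimal` module, which avoids binary floating-point rounding. The helper name `to_fixed` is illustrative.

```python
from decimal import Decimal


def to_fixed(sci: str) -> str:
    """Expand a scientific-notation string into plain fixed-point digits."""
    return format(Decimal(sci), "f")


# Bar labels as read from the chart.
labels = ["7.60e-21", "2.06e-10", "2.77e-19", "9.97e-11", "6.40e-31", "1.63e-30"]

for label in labels:
    expanded = to_fixed(label)
    # Leading zeros after the decimal point, before the first nonzero digit.
    fraction = expanded.split(".")[1]
    zeros = len(fraction) - len(fraction.lstrip("0"))
    print(f"{label} -> {expanded} ({zeros} leading zeros)")
```

A value written as `a.bc e-N` has `N - 1` zeros between the decimal point and its first significant digit, so `6.40e-31` should show 30 and `2.06e-10` should show 9.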

**Visual Trend Verification:**

1. **For `w/o Phy`:** The OOD bar (hatched blue) is far taller than the ID bar (solid blue). NMSE rises from `7.60e-21` to `2.06e-10`, an increase of about 10.4 orders of magnitude on out-of-distribution data.

2. **For `w/o TokenReg`:** The same pattern holds. NMSE rises from `2.77e-19` (ID) to `9.97e-11` (OOD), about 8.6 orders of magnitude. Compared with `w/o Phy`, the OOD error is about half as large, but the ID error is about 36 times larger.

3. **For `PiT-PO`:** Both bars are extremely short, sitting at the bottom of the chart near `10^-30`. The OOD bar is slightly taller than the ID bar (a factor of about 2.5 in NMSE), which is negligible next to the gaps for the other two groups. The color shift to orange visually sets this method apart.
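The layout described above can be approximated with matplotlib. This is a rough sketch, not the original plotting code: the bar width, color names, hatch pattern, axis limits, and output file name are all assumptions, and only the six NMSE values come from the bar labels.

```python
# NMSE values copied from the bar labels in the table above.
NMSE = {
    "w/o Phy": {"ID": 7.60e-21, "OOD": 2.06e-10},
    "w/o TokenReg": {"ID": 2.77e-19, "OOD": 9.97e-11},
    "PiT-PO": {"ID": 6.40e-31, "OOD": 1.63e-30},
}
BAR_WIDTH = 0.35  # assumed; not measured from the figure


def bar_layout(n_groups, width=BAR_WIDTH):
    """Return group centers and the x positions of the ID and OOD bars."""
    centers = list(range(n_groups))
    id_x = [c - width / 2 for c in centers]
    ood_x = [c + width / 2 for c in centers]
    return centers, id_x, ood_x


def plot_nmse(path="nmse_comparison.png"):
    """Draw the grouped bar chart and save it to `path` (hypothetical name)."""
    # matplotlib is a third-party dependency, imported here so the
    # layout helper above stays usable without it.
    import matplotlib
    matplotlib.use("Agg")  # render off-screen, no display needed
    import matplotlib.pyplot as plt

    methods = list(NMSE)
    centers, id_x, ood_x = bar_layout(len(methods))
    colors = ["tab:blue", "tab:blue", "tab:orange"]  # assumed shades

    fig, ax = plt.subplots(figsize=(6, 4))
    ax.bar(id_x, [NMSE[m]["ID"] for m in methods],
           width=BAR_WIDTH, color=colors, label="ID")
    ax.bar(ood_x, [NMSE[m]["OOD"] for m in methods],
           width=BAR_WIDTH, color=colors, hatch="//",
           edgecolor="black", label="OOD")
    ax.set_yscale("log")
    ax.set_ylim(1e-32, 1e-8)  # assumed limits, wide enough for every bar
    ax.set_ylabel("NMSE (log scale)")
    ax.set_xticks(centers)
    ax.set_xticklabels(methods)
    ax.legend(loc="upper right")
    fig.savefig(path, dpi=150)
    plt.close(fig)
    return path
```

Calling `plot_nmse()` writes the image to disk. The explicit `set_ylim` matters here because the smallest values sit below the lowest labeled tick of the original chart.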

### Key Observations

1. **Large OOD Degradation:** The first two methods (`w/o Phy` and `w/o TokenReg`) degrade sharply on out-of-distribution data. Their OOD NMSE is **roughly 8.5 to 10.5 orders of magnitude higher** than their ID NMSE (about 10.4 for `w/o Phy` and about 8.6 for `w/o TokenReg`). In absolute terms, their OOD NMSE is still on the order of `10^-10`.

2. **PiT-PO Superiority:** The `PiT-PO` method achieves an NMSE about **10 to 12 orders of magnitude lower** than the other methods on ID data and about **20 orders of magnitude lower** on OOD data.

3. **Robustness of PiT-PO:** `PiT-PO` still shows a higher error on OOD data (`1.63e-30` vs. `6.40e-31`), but the ratio is only about 2.5, or roughly 0.4 orders of magnitude. This suggests the method generalizes well to the OOD data tested here.

4. **Log Scale Necessity:** A log scale is needed to show all data points on one axis, since the values span about 20.5 orders of magnitude (from `6.40e-31` to `2.06e-10`).
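The order-of-magnitude figures in this list can be recomputed from the bar labels as base-10 logarithms of ratios. The sketch below uses only the six values read from the chart; the helper name `orders_of_magnitude` is illustrative.

```python
import math

# NMSE values read from the bar labels.
NMSE = {
    "w/o Phy": {"ID": 7.60e-21, "OOD": 2.06e-10},
    "w/o TokenReg": {"ID": 2.77e-19, "OOD": 9.97e-11},
    "PiT-PO": {"ID": 6.40e-31, "OOD": 1.63e-30},
}


def orders_of_magnitude(larger, smaller):
    """Number of powers of ten separating two positive values."""
    return math.log10(larger / smaller)


# ID-to-OOD gap within each method.
ood_gap = {
    method: orders_of_magnitude(values["OOD"], values["ID"])
    for method, values in NMSE.items()
}

# Advantage of PiT-PO over each other method, per data split.
advantage = {
    (method, split): orders_of_magnitude(NMSE[method][split], NMSE["PiT-PO"][split])
    for method in ("w/o Phy", "w/o TokenReg")
    for split in ("ID", "OOD")
}

# Full range covered by the chart, smallest to largest value.
all_values = [v for values in NMSE.values() for v in values.values()]
total_span = orders_of_magnitude(max(all_values), min(all_values))

for method, gap in ood_gap.items():
    print(f"{method}: OOD is {gap:.2f} orders of magnitude above ID")
for (method, split), gain in advantage.items():
    print(f"PiT-PO vs {method} ({split}): {gain:.2f} orders of magnitude lower")
print(f"Total span: {total_span:.2f} orders of magnitude")
```

The ID-to-OOD gaps come out at about 10.4 (`w/o Phy`), 8.6 (`w/o TokenReg`), and 0.4 (`PiT-PO`), and the total span at about 20.5.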

### Interpretation

This chart provides strong empirical evidence for the effectiveness of the `PiT-PO` method. The data suggests that:

* **The Problem:** The `w/o Phy` and `w/o TokenReg` variants are brittle. (The "w/o" labels suggest they are ablations of `PiT-PO` with one component removed, though the chart itself does not say so.) They fit data similar to their training distribution (ID) almost exactly, but their error grows by many orders of magnitude on novel or shifted data (OOD). This is a classic sign of a fit that does not generalize beyond the training distribution.

* **The Solution:** `PiT-PO` appears to address this brittleness. Its NMSE of around `10^-30` indicates a near-exact fit. More importantly, the small difference between its ID and OOD errors (a factor of about 2.5) points to **strong robustness and generalization**: accuracy is maintained when the data distribution changes.

* **Practical Implication:** In real-world applications where data is rarely stationary, a model like `PiT-PO` would be more reliable than the alternatives shown. The chart likely comes from a research paper arguing that the full `PiT-PO` method is more robust than variants lacking individual components.