## Line Charts: Model Accuracy Comparison Across Training and Evaluation Sets

### Overview

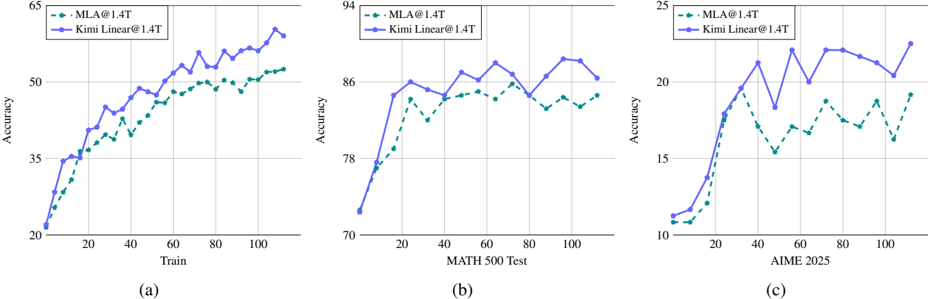

The image contains three line charts, labeled (a), (b), and (c), arranged horizontally. Each chart compares two models, "MLA@1.4T" and "Kimi Linear@1.4T," in a different evaluation context. Every chart plots "Accuracy" (y-axis) against an x-axis that runs from 0 to 100; the x-axis label names the context ("Train", "MATH 500 Test", "AIME 2025"). Both models improve over the run. After starting at roughly the same accuracy, "Kimi Linear@1.4T" stays above "MLA@1.4T" in all three charts.

### Components/Axes

* **Legend:** Located in the top-left corner of each chart.

    * `MLA@1.4T`: Represented by a teal, dashed line with circular markers.

    * `Kimi Linear@1.4T`: Represented by a purple, solid line with circular markers.

* **Chart (a):**

    * **Title/Label:** (a) [Bottom-left]

    * **X-axis:** Label: "Train". Scale: 0 to 100, with major ticks at 20, 40, 60, 80, 100.

    * **Y-axis:** Label: "Accuracy". Scale: 20 to 65, with major ticks at 20, 35, 50, 65.

* **Chart (b):**

    * **Title/Label:** (b) [Bottom-left]

    * **X-axis:** Label: "MATH 500 Test". Scale: 0 to 100, with major ticks at 20, 40, 60, 80, 100.

    * **Y-axis:** Label: "Accuracy". Scale: 70 to 94, with major ticks at 70, 78, 86, 94.

* **Chart (c):**

    * **Title/Label:** (c) [Bottom-left]

    * **X-axis:** Label: "AIME 2025". Scale: 0 to 100, with major ticks at 20, 40, 60, 80, 100.

    * **Y-axis:** Label: "Accuracy". Scale: 10 to 25, with major ticks at 10, 15, 20, 25.
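For readers who want to estimate values from the charts, the y-axis tick lists above can be turned into a small lookup. The sketch below is illustrative only: `Y_TICKS`, `tick_step`, and `value_at` are hypothetical names, and it assumes each y-axis is linear between its lowest and highest tick, which the figure does not state explicitly.

```python
# Y-axis major ticks as listed above, keyed by chart label.
Y_TICKS = {
    "a": [20, 35, 50, 65],
    "b": [70, 78, 86, 94],
    "c": [10, 15, 20, 25],
}


def tick_step(ticks):
    """Return the spacing between adjacent ticks, checking that it is uniform."""
    steps = {b - a for a, b in zip(ticks, ticks[1:])}
    if len(steps) != 1:
        raise ValueError(f"ticks are not evenly spaced: {ticks}")
    return steps.pop()


def value_at(fraction, ticks):
    """Map a vertical position to an accuracy value.

    `fraction` is 0.0 at the lowest tick and 1.0 at the highest tick.
    Assumes a linear axis (an assumption, not confirmed by the figure).
    """
    low, high = ticks[0], ticks[-1]
    return low + fraction * (high - low)


for chart, ticks in Y_TICKS.items():
    print(chart, "step:", tick_step(ticks), "midpoint:", value_at(0.5, ticks))
```

The tick spacing differs per chart (15, 8, and 5 points), so the same visual distance between two lines corresponds to a different accuracy gap in each chart.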

### Detailed Analysis

**Chart (a) - Training Progress:**

* **Trend Verification:** Both lines show a strong, generally upward trend from left to right, indicating learning over training steps. The purple line (Kimi Linear) maintains a consistent lead above the teal line (MLA).

* **Data Points (Approximate):**

    * **Start (x≈0):** Both models begin near 20% accuracy.

    * **Mid-point (x≈50):** MLA ≈ 45%, Kimi Linear ≈ 50%.

    * **End (x≈100):** MLA ≈ 50%, Kimi Linear ≈ 60%.

**Chart (b) - Performance on MATH 500 Test:**

* **Trend Verification:** Both lines show an initial sharp rise followed by a more volatile, plateau-like trend with fluctuations. The purple line (Kimi Linear) is consistently positioned above the teal line (MLA).

* **Data Points (Approximate):**

    * **Start (x≈0):** Both models begin near 72% accuracy.

    * **Peak (Kimi Linear, x≈70):** ≈ 88%.

    * **Valley (MLA, x≈80):** ≈ 82%.

    * **End (x≈100):** MLA ≈ 84%, Kimi Linear ≈ 87%.

**Chart (c) - Performance on AIME 2025:**

* **Trend Verification:** Both lines show a steep initial climb followed by significant volatility. The purple line (Kimi Linear) maintains a clear and widening lead over the teal line (MLA) after the initial phase.

* **Data Points (Approximate):**

    * **Start (x≈0):** Both models begin near 11% accuracy.

    * **First Peak (Kimi Linear, x≈30):** ≈ 22%.

    * **Valley (MLA, x≈50):** ≈ 15%.

    * **End (x≈100):** MLA ≈ 19%, Kimi Linear ≈ 23%.

### Key Observations

1. **Consistent Superiority:** The two models start at roughly the same accuracy (x≈0) in every chart. After that initial phase, the "Kimi Linear@1.4T" model (purple line) stays above the "MLA@1.4T" model (teal line) across all three charts.

2. **Performance Hierarchy:** The absolute accuracy values differ dramatically by task: highest on MATH 500 Test (70-90% range), moderate during training (20-60% range), and lowest on AIME 2025 (10-25% range). This suggests AIME 2025 is the most challenging evaluation set.

3. **Volatility:** Performance on the specific test sets (charts b and c) is more volatile (jagged lines) than the smoother learning curve during training (chart a).

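4. **Gap Analysis:** Based on the approximate end-of-run readings (x≈100), the absolute gap between the two models is largest in training (chart a, ≈10 points), followed by AIME 2025 (chart c, ≈4 points) and MATH 500 Test (chart b, ≈3 points). Relative to the MLA score, the gaps in charts (a) and (c) are similar (≈20% and ≈21%) and much larger than in chart (b) (≈4%). Given how coarse these readings are, they only weakly support the idea that the Kimi Linear architecture has a greater advantage on harder problems.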
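One way to check the gap between the two models is to compute it directly from the approximate end-of-run readings (x≈100) listed under Detailed Analysis. The sketch below reports both the absolute gap in points and the gap relative to the MLA score. `END_READINGS` and `gaps` are hypothetical names, and the inputs are visual estimates, so the results are indicative rather than exact.

```python
# Approximate end-of-run readings (x≈100) from the Detailed Analysis section.
# Tuples are (MLA accuracy, Kimi Linear accuracy) in percent.
END_READINGS = {
    "a (Train)": (50, 60),
    "b (MATH 500 Test)": (84, 87),
    "c (AIME 2025)": (19, 23),
}


def gaps(mla, kimi):
    """Return (absolute gap in points, gap relative to the MLA score)."""
    absolute = kimi - mla
    relative = absolute / mla
    return absolute, relative


for chart, (mla, kimi) in END_READINGS.items():
    absolute, relative = gaps(mla, kimi)
    print(f"{chart}: +{absolute} points, {relative:.1%} relative")

# a (Train): +10 points, 20.0% relative
# b (MATH 500 Test): +3 points, 3.6% relative
# c (AIME 2025): +4 points, 21.1% relative
```

Reporting both measures matters here because the three charts sit at very different accuracy levels: a 4-point gap at ≈19% is proportionally much larger than a 3-point gap at ≈84%.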

### Interpretation

This set of charts compares two large-scale models. The "@1.4T" suffix is not explained in the figure; it most likely denotes 1.4 trillion training tokens rather than a parameter count. In these charts, the "Kimi Linear" architecture yields higher accuracy than the "MLA" architecture after the initial phase, under what appear to be the same training and evaluation conditions.

The three charts cover different difficulty levels rather than a steady progression: accuracy is highest on "MATH 500 Test" (b), intermediate on the training set (a), and lowest on "AIME 2025" (c). Both models learn steadily during training (a), and the two benchmark charts show how that learning carries over to test problems. The "MATH 500 Test" (b) shows high but volatile scores, indicating a benchmark that both models largely handle. The "AIME 2025" (c) results, with much lower absolute accuracy, point to a significantly harder problem domain, likely requiring advanced mathematical reasoning.

The key takeaway is that Kimi Linear leads in all three charts, including on the hardest benchmark (AIME 2025), where the end-of-run readings are ≈23% versus ≈19% for MLA. This is consistent with the architectural differences between Kimi Linear and MLA conferring a benefit for complex reasoning or generalization, but the charts alone do not establish the cause. The volatility in test scores also implies that single-point evaluations may be unreliable; the consistent ordering across multiple points is the more robust finding.
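The point about relying on multiple readings rather than a single one can be sketched with the paired values listed under Detailed Analysis. The code below counts, per chart, how often Kimi Linear is ahead of, tied with, or behind MLA. `PAIRED_READINGS` and `ordering_summary` are hypothetical names; only x positions where both models have a listed value are included, and "both begin near N%" is treated as a tie, which is a simplification.

```python
# Paired approximate readings from the Detailed Analysis section, as
# (x position, MLA accuracy, Kimi Linear accuracy) in percent.
# Starting values are treated as equal because the text says both
# models "begin near" the same accuracy (a simplifying assumption).
PAIRED_READINGS = {
    "a": [(0, 20, 20), (50, 45, 50), (100, 50, 60)],
    "b": [(0, 72, 72), (100, 84, 87)],
    "c": [(0, 11, 11), (100, 19, 23)],
}


def ordering_summary(readings):
    """Count readings where Kimi Linear is ahead of, tied with, or behind MLA."""
    summary = {"ahead": 0, "tied": 0, "behind": 0}
    for _x, mla, kimi in readings:
        if kimi > mla:
            summary["ahead"] += 1
        elif kimi == mla:
            summary["tied"] += 1
        else:
            summary["behind"] += 1
    return summary


for chart, readings in PAIRED_READINGS.items():
    print(chart, ordering_summary(readings))
```

With so few paired readings per chart, this is only a sanity check on the ordering; a fuller comparison would need the underlying per-checkpoint values, which the figure description does not provide.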