
## Code Block: Python Function Definitions

### Overview

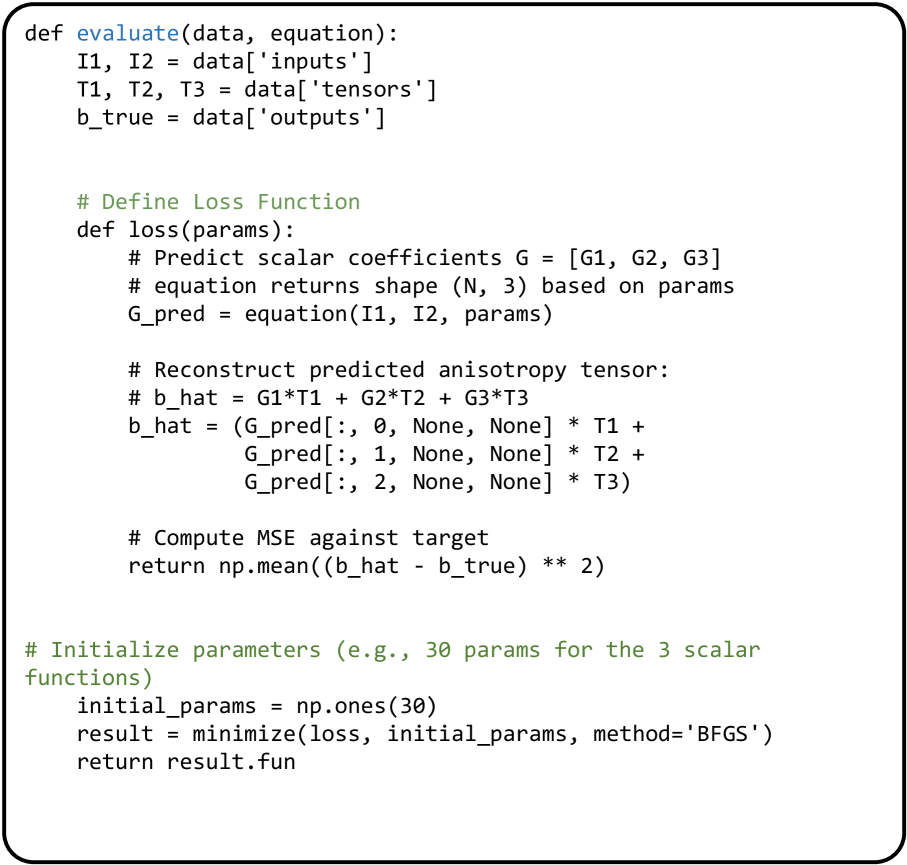

The image contains a block of Python code defining functions related to evaluating data and minimizing a loss function, likely within a machine learning or scientific computing context. The code appears to be focused on reconstructing an anisotropy tensor based on input tensors and scalar coefficients.

### Components/Axes

The code consists of function definitions with comments explaining the purpose of each section. There are no axes or charts in this image. The code uses the `numpy` library (indicated by `np.mean` and `np.ones`).

### Detailed Analysis or Content Details

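The code defines two functions: `evaluate` and `loss`. Because `loss` uses `equation` and the arrays unpacked in `evaluate`, it is presumably defined inside `evaluate` as a closure.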

**`evaluate(data, equation)` function:**

- Takes `data` and `equation` as input.

- Extracts `I1`, `I2` from `data['inputs']`.

- Extracts `T1`, `T2`, `T3` from `data['tensors']`.

- Extracts `b_true` from `data['outputs']`.
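The unpacking step can be sketched as follows. The image description does not give the array shapes, so the layout here is an assumption: `inputs` as (N, 2), `tensors` as (N, 3, 3, 3) with the second axis indexing `T1`, `T2`, `T3`, and `outputs` as (N, 3, 3). The helper name `unpack` is hypothetical.

```python
import numpy as np

def unpack(data):
    """Split the data dictionary into the arrays named in the description.

    Assumed (hypothetical) layout:
      data['inputs']  -> (N, 2)        columns are I1, I2
      data['tensors'] -> (N, 3, 3, 3)  axis 1 selects T1, T2, T3
      data['outputs'] -> (N, 3, 3)     target anisotropy tensor b_true
    """
    I1, I2 = data['inputs'][:, 0], data['inputs'][:, 1]
    T1, T2, T3 = (data['tensors'][:, k] for k in range(3))
    b_true = data['outputs']
    return I1, I2, T1, T2, T3, b_true
```

If the real data stores each tensor under its own key or in a different axis order, only this step would need to change.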

**`loss(params)` function:**

- Takes `params` as input.

- Predicts the scalar coefficients `G = [G1, G2, G3]` by calling `equation` with `params`. The result, `G_pred`, has shape (N, 3): one row of three coefficients per sample.

- Reconstructs the predicted anisotropy tensor `b_hat` using the formula `b_hat = G1*T1 + G2*T2 + G3*T3`. The code implements this by indexing the columns of `G_pred` and using each column to weight the corresponding tensor.

- Computes the Mean Squared Error (MSE) between the predicted tensor `b_hat` and the target tensor `b_true`.

- Returns the MSE.
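A minimal sketch of the reconstruction and error computation described above, assuming each `T_k` has shape (N, 3, 3) and `G_pred` has shape (N, 3). The function names `reconstruct` and `mse` are hypothetical and chosen for illustration; the original code may inline these steps.

```python
import numpy as np

def reconstruct(G_pred, T1, T2, T3):
    """b_hat = G1*T1 + G2*T2 + G3*T3, evaluated per sample.

    G_pred[:, k, None, None] reshapes column k from (N,) to (N, 1, 1)
    so it broadcasts against the (N, 3, 3) tensor it weights.
    """
    return (G_pred[:, 0, None, None] * T1
            + G_pred[:, 1, None, None] * T2
            + G_pred[:, 2, None, None] * T3)

def mse(b_hat, b_true):
    """Mean squared error over all samples and tensor components."""
    return np.mean((b_hat - b_true) ** 2)
```

The broadcasting form avoids an explicit loop over samples; an equivalent `np.einsum` formulation would also work if the three tensors were stacked in one array.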

**Initialization and Minimization:**

- Initializes parameters with `np.ones(30)`, creating a numpy array of 30 ones.

- Uses the `minimize` function (likely from `scipy.optimize`) to minimize the `loss` function, starting with the `initial_params` and using the BFGS method.

- Returns `result.fun`, the minimized loss value, rather than the fitted parameters (`result.x`).
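Putting the pieces together, the whole `evaluate` routine might look like the sketch below. Several details are assumptions rather than facts from the image: the array shapes, the call signature `equation(I1, I2, params)`, and the nesting of `loss` inside `evaluate`. `linear_equation` and `make_synthetic_data` are hypothetical helpers added only so the example runs end to end.

```python
import numpy as np
from scipy.optimize import minimize

def evaluate(data, equation):
    # Assumed layout: inputs (N, 2), tensors (N, 3, 3, 3), outputs (N, 3, 3).
    I1, I2 = data['inputs'][:, 0], data['inputs'][:, 1]
    T1, T2, T3 = (data['tensors'][:, k] for k in range(3))
    b_true = data['outputs']

    def loss(params):
        # Assumed signature; the description only says the result is (N, 3).
        G_pred = equation(I1, I2, params)
        b_hat = (G_pred[:, 0, None, None] * T1
                 + G_pred[:, 1, None, None] * T2
                 + G_pred[:, 2, None, None] * T3)
        return np.mean((b_hat - b_true) ** 2)

    initial_params = np.ones(30)
    result = minimize(loss, initial_params, method='BFGS')
    return result.fun

def linear_equation(I1, I2, params):
    # Hypothetical candidate: each G_k is linear in I1 and I2.
    # Only the first 9 of the 30 parameters are used.
    cols = [params[3 * k] + params[3 * k + 1] * I1 + params[3 * k + 2] * I2
            for k in range(3)]
    return np.stack(cols, axis=1)

def make_synthetic_data(n, seed=0):
    # Hypothetical data generated from known parameters, for testing only.
    rng = np.random.default_rng(seed)
    inputs = rng.uniform(-1.0, 1.0, size=(n, 2))
    tensors = rng.normal(size=(n, 3, 3, 3))
    true_params = np.linspace(-1.0, 1.0, 30)
    G = linear_equation(inputs[:, 0], inputs[:, 1], true_params)
    outputs = np.einsum('nk,nkij->nij', G, tensors)
    return {'inputs': inputs, 'tensors': tensors, 'outputs': outputs}

if __name__ == '__main__':
    data = make_synthetic_data(50)
    print('final loss:', evaluate(data, linear_equation))
```

Because the synthetic targets are generated by the same equation family, the final loss should be close to zero here; with real data it measures how well a candidate equation can fit the target tensor.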

### Key Observations

- The code is well-commented, explaining the purpose of each step.

- The `equation` function is passed as an argument to `evaluate`, suggesting a flexible framework where different equations can be used.

- The loss function is based on the Mean Squared Error, a common metric for regression problems.

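- The use of `BFGS`, a gradient-based quasi-Newton method, suggests that the objective is expected to be smooth in `params`. If no gradient is supplied to `minimize`, SciPy approximates it by finite differences.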

- The code assumes the existence of `data` dictionary with keys 'inputs', 'tensors', and 'outputs'.

### Interpretation

The code implements a process for estimating parameters that reconstruct an anisotropy tensor from input tensors. The `loss` function quantifies the difference between the reconstructed tensor and a target tensor, and the `minimize` function finds the parameters that minimize this difference. This suggests a model fitting or parameter estimation task, potentially in materials science, image processing, or other fields where anisotropy is important. The use of numpy and scipy indicates a numerical computation focus. The code is designed to be modular, with the equation being passed as a parameter, allowing for different models to be tested.