## Diagram: Neural Network Architecture

### Overview

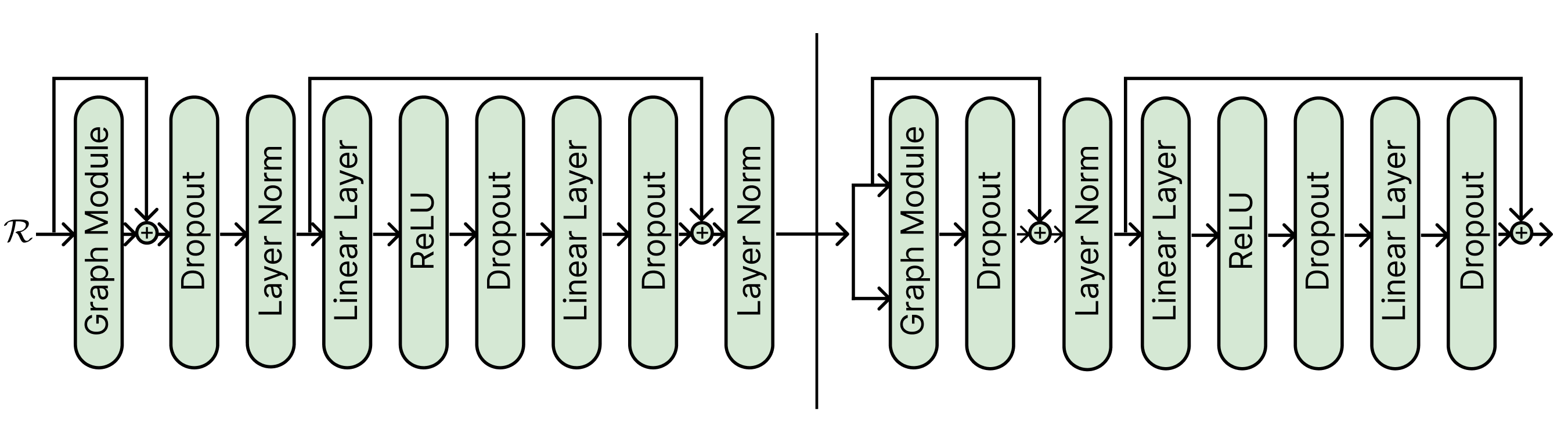

The image depicts a neural network architecture consisting of two identical modules connected in sequence. Each module contains a graph module, three dropout layers, two layer normalization layers, two linear layers, and one ReLU activation. Each module also has a skip connection that adds the output of the graph module to the output of a later dropout layer.

### Components

The diagram consists of the following components, arranged sequentially within each module:

* **R**: Input to the first module.

* **Graph Module**: A processing block, likely involving graph neural network operations.

* **Dropout**: A regularization layer that randomly sets a fraction of its input units to 0 at each training step and passes its input through unchanged at inference time.

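* **Layer Norm**: Layer normalization, which normalizes the features of each sample to zero mean and unit variance, typically followed by a learned scale and shift.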

* **Linear Layer**: A fully connected layer.

* **ReLU**: Rectified Linear Unit activation function.

* **"+"**: Addition operation, indicating a skip connection.

* **Arrows**: Indicate the direction of data flow.
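The components listed above can be sketched as small functions on plain Python lists. This is a minimal illustration, not the implementation behind the diagram: the function names are hypothetical, dropout is assumed to be the common "inverted" variant that rescales the surviving units, and layer normalization omits the learned scale and shift. The Graph Module is left out because the diagram does not specify its internals.

```python
import math
import random


def relu(x):
    """Rectified Linear Unit: negative entries become 0."""
    return [max(0.0, v) for v in x]


def layer_norm(x, eps=1e-5):
    """Normalize one feature vector to zero mean and unit variance (no learned scale/shift)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def dropout(x, p, training, rng):
    """Inverted dropout (an assumption): zero each entry with probability p, rescale the rest."""
    if not training or p == 0.0:
        return list(x)
    keep = 1.0 - p
    return [v / keep if rng.random() < keep else 0.0 for v in x]


def linear(x, weight, bias):
    """Fully connected layer: weight holds one row per output feature."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def residual_add(a, b):
    """The "+" node: element-wise sum of two equally sized vectors."""
    return [u + v for u, v in zip(a, b)]


print(relu([1.0, -2.0, 3.0]))  # [1.0, 0.0, 3.0]
```

Because the "+" node sums element-wise, both of its inputs must have the same number of features.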

### Detailed Analysis

The diagram can be broken down into two identical modules, separated by a vertical line. Each module has the following structure:

1. **Input:** The first module receives input 'R'. The second module receives the output of the first module.

2. **Graph Module:** The input is processed by a Graph Module.

3. **Skip Connection:** The output of the Graph Module also branches off along a skip path and is added back at the addition node (step 11).

4. **Dropout:** A dropout layer follows the Graph Module.

5. **Layer Norm:** A layer normalization layer follows the dropout layer.

6. **Linear Layer:** A linear layer follows the layer normalization layer.

7. **ReLU:** A ReLU activation function follows the linear layer.

8. **Dropout:** A dropout layer follows the ReLU activation function.

9. **Linear Layer:** A linear layer follows the dropout layer.

10. **Dropout:** A dropout layer follows the linear layer.

11. **Addition:** The output of the preceding dropout layer (step 10) is added to the output of the Graph Module, which arrives through the skip connection.

12. **Layer Norm:** A layer normalization layer follows the addition.

The data flow is generally sequential, with the exception of the skip connection that adds the output of the Graph Module to the output of a Dropout layer.
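The step list above can be traced in code. The following is a rough sketch under several assumptions, none of which come from the diagram: `module_forward`, `mean_neighbours`, and the `params` dictionary are hypothetical names, the Graph Module is replaced by a simple neighbour-averaging stand-in, layer normalization has no learned parameters, and the addition is assumed to take the output of the dropout in step 10, as the step order implies.

```python
import math
import random


def layer_norm(x, eps=1e-5):
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def dropout(x, p, rng):
    # rng is None in evaluation mode, where dropout is the identity.
    if rng is None or p == 0.0:
        return list(x)
    keep = 1.0 - p
    return [v / keep if rng.random() < keep else 0.0 for v in x]


def linear(x, weight, bias):
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def mean_neighbours(nodes, adjacency):
    """Hypothetical stand-in for the Graph Module: average each node with its neighbours."""
    out = []
    for i, feats in enumerate(nodes):
        group = [feats] + [nodes[j] for j in adjacency[i]]
        out.append([sum(col) / len(group) for col in zip(*group)])
    return out


def module_forward(nodes, adjacency, params, p=0.1, rng=None, graph_module=mean_neighbours):
    """One module, following steps 2-12 of the list above."""
    graph_out = graph_module(nodes, adjacency)         # step 2
    result = []
    for g in graph_out:
        h = dropout(g, p, rng)                         # step 4
        h = layer_norm(h)                              # step 5
        h = linear(h, params["w1"], params["b1"])      # step 6
        h = [max(0.0, v) for v in h]                   # step 7
        h = dropout(h, p, rng)                         # step 8
        h = linear(h, params["w2"], params["b2"])      # step 9
        h = dropout(h, p, rng)                         # step 10
        h = [a + b for a, b in zip(g, h)]              # steps 3 and 11: skip connection
        result.append(layer_norm(h))                   # step 12
    return result


# Toy usage: 3 nodes on a path graph, 4 features each, hidden width 8.
rng = random.Random(0)
dim, hidden = 4, 8
params = {
    "w1": [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in range(hidden)],
    "b1": [0.0] * hidden,
    "w2": [[rng.uniform(-0.5, 0.5) for _ in range(hidden)] for _ in range(dim)],
    "b2": [0.0] * dim,
}
nodes = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(3)]
adjacency = {0: [1], 1: [0, 2], 2: [1]}
out = module_forward(nodes, adjacency, params)  # evaluation mode: dropout disabled
```

Note that the second linear layer maps back to the Graph Module's output width. The element-wise addition in step 11 requires this, and it also lets the second module consume the first module's output without any reshaping.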

### Key Observations

* The architecture is modular, with two identical modules connected in series.

* Skip connections give information and gradients a direct path around the intermediate layers, which can improve gradient propagation.

* Dropout layers are used for regularization.

* Layer normalization is used to stabilize training.

* ReLU activation functions introduce non-linearity.
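The gradient-propagation point can be made concrete with a short derivation. The symbols are introduced here for illustration and do not appear in the diagram: $h$ is the output of the Graph Module, $F$ is the chain of layers between the Graph Module and the addition node, and $s$ is the sum that feeds the final layer normalization.

$$
s = h + F(h), \qquad \frac{\partial s}{\partial h} = I + \frac{\partial F}{\partial h}
$$

The identity term $I$ means that a gradient arriving at $s$ reaches $h$ directly, even when $\partial F / \partial h$ is small.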

### Interpretation

The diagram illustrates a neural network architecture that is likely designed for graph-structured data, given the Graph Module at the start of each module. The modular design suggests that the architecture can be scaled by adding more modules. The skip connections are a key feature: they let each module pass the Graph Module output forward alongside its transformed version. The use of dropout and layer normalization indicates a focus on generalization and training stability. Repeating the same sequence of layers in both modules suggests hierarchical feature extraction, with the second module refining the features produced by the first.
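The scaling idea can be illustrated with a small composition helper. This is only a sketch: `stack` is a hypothetical name, and the two toy functions stand in for real modules, which would each hold their own Graph Module and layer weights. The diagram does not indicate whether the two modules share weights.

```python
from functools import reduce


def stack(modules):
    """Compose modules so that each one consumes the previous module's output."""
    return lambda x: reduce(lambda h, module: module(h), modules, x)


# Toy stand-ins for the two modules in the diagram.
double = lambda h: [2.0 * v for v in h]
shift = lambda h: [v + 1.0 for v in h]

two_modules = stack([double, shift])
print(two_modules([1.0, 2.0]))  # [3.0, 5.0]
```

Adding a third module would amount to appending one more entry to the list, provided each module preserves the feature width expected by the next.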