## Diagram: Neuro-Symbolic AI Architectures

### Overview

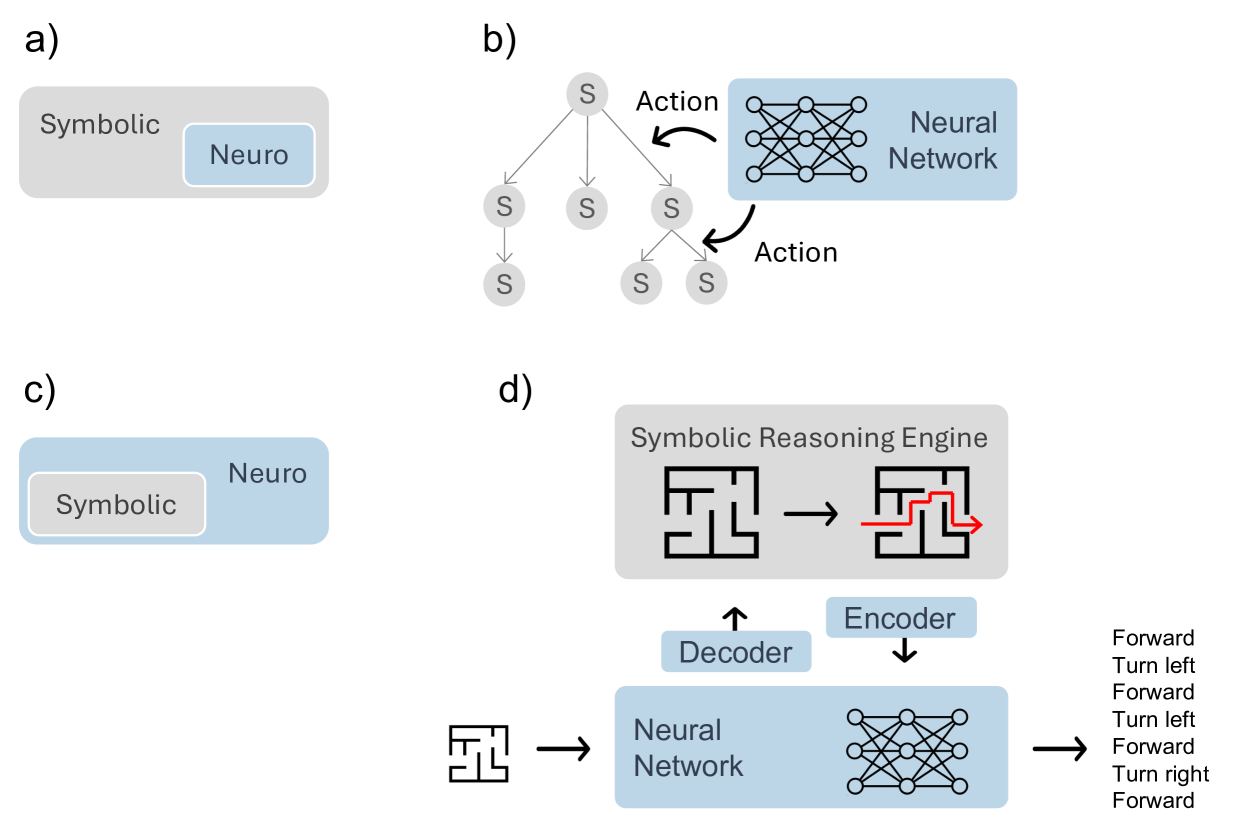

The image displays four distinct conceptual diagrams (labeled a, b, c, d) illustrating different architectural paradigms for integrating symbolic reasoning and neural networks. The diagrams use a consistent color scheme: gray represents symbolic components, and light blue represents neural components. The overall theme is the structural relationship between these two AI approaches.

### Components/Axes

The image is divided into four quadrants, each containing a labeled sub-diagram:

* **a)** Top-left: A nested box diagram.

* **b)** Top-right: A tree structure connected to a neural network.

* **c)** Bottom-left: A nested box diagram with the nesting of (a) reversed.

* **d)** Bottom-right: A more complex system flow involving mazes, a reasoning engine, and a neural network with an encoder-decoder structure.

**Labels and Text Elements:**

* **a)** Outer gray box: "Symbolic". Inner blue box: "Neuro".

* **b)** Tree nodes: "S". Arrows labeled: "Action". Blue box: "Neural Network".

* **c)** Outer blue box: "Neuro". Inner gray box: "Symbolic".

* **d)** Top gray box: "Symbolic Reasoning Engine". Blue boxes: "Encoder", "Decoder", "Neural Network". Text list on far right: "Forward", "Turn left", "Forward", "Turn left", "Forward", "Turn right", "Forward".

### Detailed Analysis

**Diagram a) - Neuro within Symbolic:**

* **Structure:** A large gray rectangle labeled "Symbolic" contains a smaller, nested blue rectangle labeled "Neuro".

* **Relationship:** This visually represents a system where a neural network component operates *within* or is subservient to a broader symbolic reasoning framework. The symbolic system is the primary container.
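
The containment in (a) can be made concrete with a small sketch. The figure gives no implementation detail, so everything here is an assumption for illustration: the rule set, the fact name `door_locked`, the fixed weights, and the function names `neural_score` and `symbolic_controller` are all hypothetical. The point is only the control flow: explicit rules make the decision, and the neural component is consulted as one learned predicate inside them.

```python
import math

def neural_score(features, weights=(1.5, -2.0), bias=0.25):
    """Stand-in for the inner "Neuro" box: one logistic unit with made-up weights."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def symbolic_controller(facts, features, threshold=0.5):
    """Stand-in for the outer "Symbolic" box: explicit rules make the decision."""
    # Rule 1: a hard symbolic constraint overrides the neural component.
    if "door_locked" in facts:
        return "wait"
    # Rule 2: the neural score is used as a learned predicate inside a rule.
    if neural_score(features) >= threshold:
        return "enter"
    return "search"

print(symbolic_controller({"door_locked"}, (1.0, 0.0)))  # wait
print(symbolic_controller(set(), (1.0, 0.0)))            # enter
print(symbolic_controller(set(), (0.0, 1.0)))            # search
```

In this arrangement the neural output never acts on its own; it only matters where a rule chooses to ask for it.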

**Diagram b) - Symbolic Tree with Neural Action Selection:**

* **Structure:** A tree graph on the left has a root node "S" branching into three child nodes "S", with one of those further branching into two leaf nodes "S". Arrows labeled "Action" point from two of the symbolic ("S") nodes toward a blue box on the right labeled "Neural Network", which contains a standard multilayer perceptron icon.

* **Relationship:** This suggests a hybrid system in which a symbolic search or planning process (the tree) enumerates candidate states ("S"), and a neural network evaluates those candidates or selects the next "Action" to take within the tree.
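
One way to read (b) is as a tree search guided by a learned scorer. The sketch below assumes that reading; the figure does not specify the search procedure. The node names, the score table, and the functions `neural_value` and `select_path` are hypothetical, and a lookup table stands in for the neural network. The tree shape mirrors the description above: a root with three children, one of which has two leaves.

```python
# Hypothetical state tree: a root, three children, and two leaves under one child.
TREE = {
    "S0": ["S1", "S2", "S3"],
    "S2": ["S4", "S5"],
}

# Stand-in for the "Neural Network" box: made-up scores instead of a learned model.
SCORES = {"S1": 0.2, "S2": 0.7, "S3": 0.1, "S4": 0.4, "S5": 0.9}

def neural_value(state):
    """Return the (made-up) score the scorer assigns to a state."""
    return SCORES.get(state, 0.0)

def select_path(root):
    """Greedy descent: the tree proposes child states, the scorer picks one."""
    path = [root]
    node = root
    while TREE.get(node):
        node = max(TREE[node], key=neural_value)
        path.append(node)
    return path

print(select_path("S0"))  # ['S0', 'S2', 'S5']
```

Greedy descent is the simplest possible choice here; a system of this kind could equally use the scores to prioritize a broader search.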

**Diagram c) - Symbolic within Neuro:**

* **Structure:** The inverse of diagram (a). A large blue rectangle labeled "Neuro" contains a smaller, nested gray rectangle labeled "Symbolic".

* **Relationship:** This represents a system where symbolic reasoning modules are embedded *within* a dominant neural network architecture. The neural system is the primary container, potentially using symbolic logic as a specialized subroutine.
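
A minimal sketch of the reversed containment in (c), under stated assumptions: the outer flow is "neural" only in the sense that it reads off the highest-scoring label from score dictionaries (a stand-in for a network's output layer), and the inner symbolic module is exact integer addition. The task and the names `argmax_label`, `symbolic_add`, and `neuro_pipeline` are hypothetical and do not come from the figure.

```python
def argmax_label(scores):
    """Pick the label with the highest score (stand-in for a network readout)."""
    return max(scores, key=scores.get)

def symbolic_add(a, b):
    """Inner "Symbolic" box: an exact rule rather than a learned approximation."""
    return a + b

def neuro_pipeline(digit_scores_a, digit_scores_b):
    """Outer "Neuro" box: perceive two digits, then delegate the arithmetic."""
    a = argmax_label(digit_scores_a)
    b = argmax_label(digit_scores_b)
    return symbolic_add(a, b)

print(neuro_pipeline({3: 0.8, 8: 0.2}, {4: 0.6, 9: 0.4}))  # 7
```

Here the neural side owns the overall flow and calls the symbolic module as a subroutine for the one step that must be exact.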

**Diagram d) - Integrated Neuro-Symbolic System for Navigation:**

* **Structure:** This is a multi-stage pipeline.

1. **Input:** A maze icon (left) feeds into a blue "Neural Network" box.

2. **Neural Processing:** An "Encoder" (down arrow) and a "Decoder" (up arrow) connect the neural network to the "Symbolic Reasoning Engine" above it.

3. **Symbolic Reasoning:** The "Decoder" output goes to a gray "Symbolic Reasoning Engine" box. This box contains two maze icons: the first is a raw maze, and the second shows the same maze with a red path solving it (arrow indicating solution direction).

4. **Output:** The "Encoder" receives input from the symbolic engine. The final output from the neural network is a sequence of text commands: "Forward", "Turn left", "Forward", "Turn left", "Forward", "Turn right", "Forward".

* **Relationship:** This illustrates a full neuro-symbolic pipeline for a task like maze navigation. The neural network likely processes the raw maze input (perception), the symbolic engine reasons about the solution path (planning/logic), and the neural network then translates this symbolic plan into executable action commands (control).
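
The perception, planning, and control stages described above can be sketched end to end. This is an illustration under assumptions, not the system in the figure: text parsing stands in for neural perception, breadth-first search stands in for the unspecified reasoning engine, and the maze, the starting heading, and the names `perceive`, `plan`, and `to_commands` are all hypothetical. The maze is invented, so the output does not reproduce the command list shown in the figure.

```python
from collections import deque

# Headings in clockwise order as (row_delta, col_delta): north, east, south, west.
HEADINGS = [(-1, 0), (0, 1), (1, 0), (0, -1)]

MAZE = """
#####
#..##
##..#
#####
"""

def perceive(maze_text):
    """Stand-in for neural perception: turn raw text into a grid of cells."""
    return [list(row) for row in maze_text.strip().splitlines()]

def plan(grid, start, goal):
    """Stand-in for the symbolic engine: breadth-first search over open cells."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for dr, dc in HEADINGS:
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < len(grid) and 0 <= nc < len(grid[nr])
            if inside and grid[nr][nc] != "#" and (nr, nc) not in parents:
                parents[(nr, nc)] = cell
                queue.append((nr, nc))
    return None  # no route between start and goal

def to_commands(path, heading=1):
    """Stand-in for the output stage: turn a cell path into movement commands."""
    commands = []
    for (r0, c0), (r1, c1) in zip(path, path[1:]):
        target = HEADINGS.index((r1 - r0, c1 - c0))
        turn = (target - heading) % 4
        if turn == 1:
            commands.append("Turn right")
        elif turn == 3:
            commands.append("Turn left")
        elif turn == 2:
            commands += ["Turn right", "Turn right"]
        heading = target
        commands.append("Forward")
    return commands

path = plan(perceive(MAZE), start=(1, 1), goal=(2, 3))
print(to_commands(path))
# ['Forward', 'Turn right', 'Forward', 'Turn left', 'Forward']
```

Keeping the three stages as separate functions mirrors the separation in the diagram: only `plan` needs to know about search, and only `to_commands` needs to know about headings.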

### Key Observations

1. **Architectural Dichotomy:** Diagrams (a) and (c) present a fundamental dichotomy in integration: does the symbolic system host the neural component, or is it a guest inside the neural system?

2. **Functional Separation:** Diagram (b) shows a clear functional separation: symbolic search generates options, and the neural network evaluates or selects among them.

3. **Complex Pipeline:** Diagram (d) is the most detailed, showing a complete perception-reasoning-action loop. It explicitly includes an encoder-decoder interface between the neural and symbolic components, suggesting a translation of representations between the two domains.

4. **Consistent Visual Language:** The use of gray for symbolic and blue for neural is maintained throughout, allowing for easy comparison of the structural relationships.

### Interpretation

These diagrams collectively map the design space for neuro-symbolic AI systems. They move from simple conceptual relationships (a, c) to a more functional view (b) and finally to a detailed, task-specific example (d).

* **What it demonstrates:** The image suggests that there is no single way to combine neural and symbolic AI, and that the choice of architecture depends on the problem. For tasks requiring structured search and explicit reasoning (like planning in a maze, as in d), a tight integration in which a symbolic engine handles the core logic and neural networks handle perception and translation may be appropriate. For other tasks, one paradigm might simply host the other as a module.

* **Relationships:** The progression runs from abstract containment models (a, c) to an interactive model (b) and finally to an integrated pipeline (d). The "Action" arrows in (b) and the encoder/decoder in (d) highlight a central challenge, *interface design*: how these two fundamentally different computational paradigms communicate.

* **Notable Pattern:** The most detailed diagram (d) is applied to a classic AI problem (navigation), suggesting that neuro-symbolic approaches are well suited to tasks that require both pattern recognition (seeing the maze) and logical deduction (finding the path). The explicit text output ("Forward", "Turn left"...) underscores the goal of generating interpretable, high-level commands from low-level data.