## Diagram: Federated Learning Process

### Overview

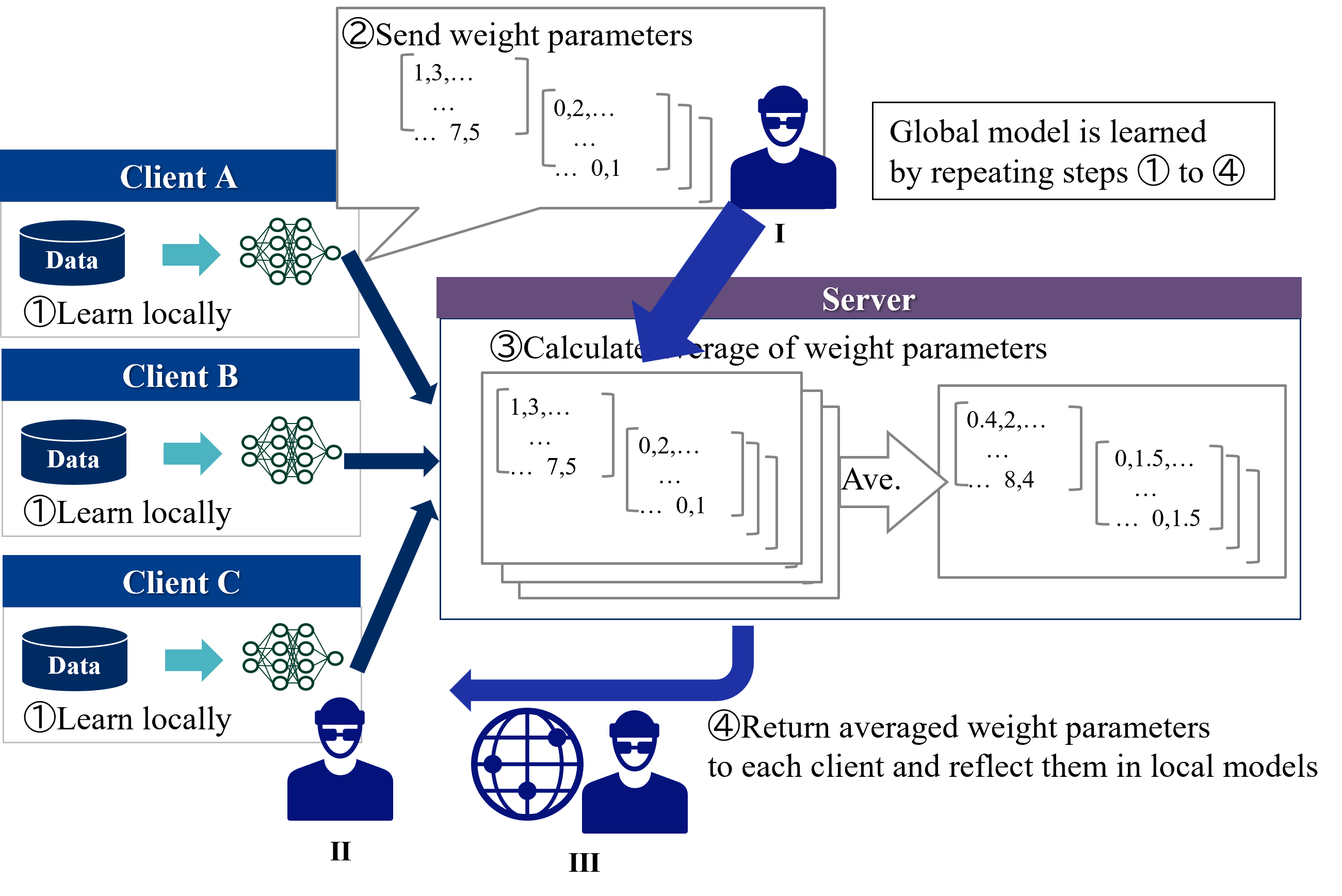

The image illustrates the federated learning process, where multiple clients train a model locally and then send weight parameters to a central server. The server averages these parameters to create a global model, which is then sent back to the clients. This process repeats iteratively.
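The averaging step can be written compactly. As a sketch, assume $K$ participating clients, a round index $t$, and $w_k^{(t)}$ for the weight parameters that client $k$ sends in round $t$. This notation is introduced here for illustration and does not appear in the diagram. The plain, unweighted average described above is then:

$$
w^{(t+1)} = \frac{1}{K} \sum_{k=1}^{K} w_k^{(t)}
$$

With the three clients shown (A, B, and C), $K = 3$. The diagram shows only a simple average. Many federated averaging implementations instead weight each client by its number of local samples, which the figure does not indicate.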

### Components

* **Clients:** Client A, Client B, Client C. Each client has its own data and trains a local model.

  * Each client block contains the client name, a data icon labeled "Data", and the text "①Learn locally".

* **Server:** The central server aggregates the weight parameters from the clients.

  * The server block is labeled "Server".

* **Data Flow:** Arrows show weight parameters moving between the clients and the server. The data itself stays on each client.

* **Icons:**

  * Data: Represents the data stored at each client.

  * Neural Network: Represents the local model trained at each client.

  * Person Icon: Represents the client or server.

  * Globe Icon: Represents the global model.

* **Steps:** The process is broken down into four steps, labeled ① to ④.

### Detailed Analysis

1. **Client-Side Learning (①Learn locally):**

   * Each client (A, B, and C) has a "Data" store and a neural network.

   * The text "①Learn locally" indicates that each client trains a model using its local data.

2. **Sending Weight Parameters (②Send weight parameters):**

   * Client A sends weight parameters to the server.

   * The weight parameters are represented as matrices with values like "1,3,...", "7,5", "0,2,...", and "0,1".

   * A person icon labeled "I" is present near the server.

3. **Server-Side Aggregation (③Calculate average of weight parameters):**

   * The server calculates the average of the weight parameters received from the clients.

   * The text "③Calculate average of weight parameters" describes this step.

   * The averaging process is represented by an arrow labeled "Ave.".

   * The averaged weight parameters are represented as matrices with values like "0.4,2,...", "8,4", "0,1.5,...", and "0,1.5".

4. **Returning Averaged Weight Parameters (④Return averaged weight parameters):**

   * The server returns the averaged weight parameters to each client.

   * The text "④Return averaged weight parameters to each client and reflect them in local models" describes this step.

   * A globe icon and a person icon labeled "III" are present, representing the global model being distributed.

5. **Iteration:**

   * The text "Global model is learned by repeating steps ① to ④" indicates that the process is iterative.

6. **Client Icons:**

   * A person icon labeled "II" is present near the clients.
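Steps ① to ④ can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the method behind the diagram. The local model is a toy linear model `y = w*x + b` rather than a neural network, the data is synthetic, and the names `local_train`, `average_weights`, `federated_round`, and `make_client_data` are hypothetical helpers introduced here.

```python
import random


def local_train(weights, data, lr=0.05, epochs=5):
    """Step 1: fit y = w*x + b on one client's own data with gradient descent."""
    w, b = weights
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += 2 * err * x / len(data)
            grad_b += 2 * err / len(data)
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]


def average_weights(client_weights):
    """Step 3: element-wise, unweighted mean of the clients' weight vectors."""
    n = len(client_weights)
    size = len(client_weights[0])
    return [sum(ws[i] for ws in client_weights) / n for i in range(size)]


def federated_round(global_weights, client_datasets):
    """One pass through steps 1 to 4."""
    # Steps 1 and 2: each client starts from the current global weights,
    # trains locally, and sends back only its weights (never its data).
    updates = [local_train(list(global_weights), data) for data in client_datasets]
    # Step 3: the server averages the received weights.
    # Step 4: the returned average becomes every client's starting point next round.
    return average_weights(updates)


def make_client_data(seed, n=20):
    """Synthetic, noise-free samples of y = 2x + 1 (an assumption for this demo)."""
    rng = random.Random(seed)
    return [(x, 2.0 * x + 1.0) for x in (rng.uniform(-1, 1) for _ in range(n))]


# Three clients, mirroring Client A, B, and C in the diagram.
clients = {name: make_client_data(seed) for seed, name in enumerate(["A", "B", "C"])}

global_weights = [0.0, 0.0]
for _ in range(200):  # "repeating steps 1 to 4"
    global_weights = federated_round(global_weights, list(clients.values()))

print(global_weights)  # should end up close to w = 2, b = 1
```

Only the weight lists cross the client/server boundary in `federated_round`. Each client's `data` is read solely inside `local_train`, which matches the flow shown by the arrows.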

### Key Observations

* The diagram illustrates a standard federated learning setup with multiple clients and a central server.

* The process involves local training, parameter aggregation, and global model distribution.

* The iterative nature of the process is emphasized.

### Interpretation

The diagram provides a high-level overview of the federated learning process. It highlights the key steps involved in training a global model without the server directly accessing the clients' data. The process is iterative: the global model improves over successive rounds as the clients continue to train on their local data and send updated weight parameters. Because only weight parameters are exchanged, the server can aggregate what each client has learned while the raw data stays on the client.