## Diagram: Federated Learning Process

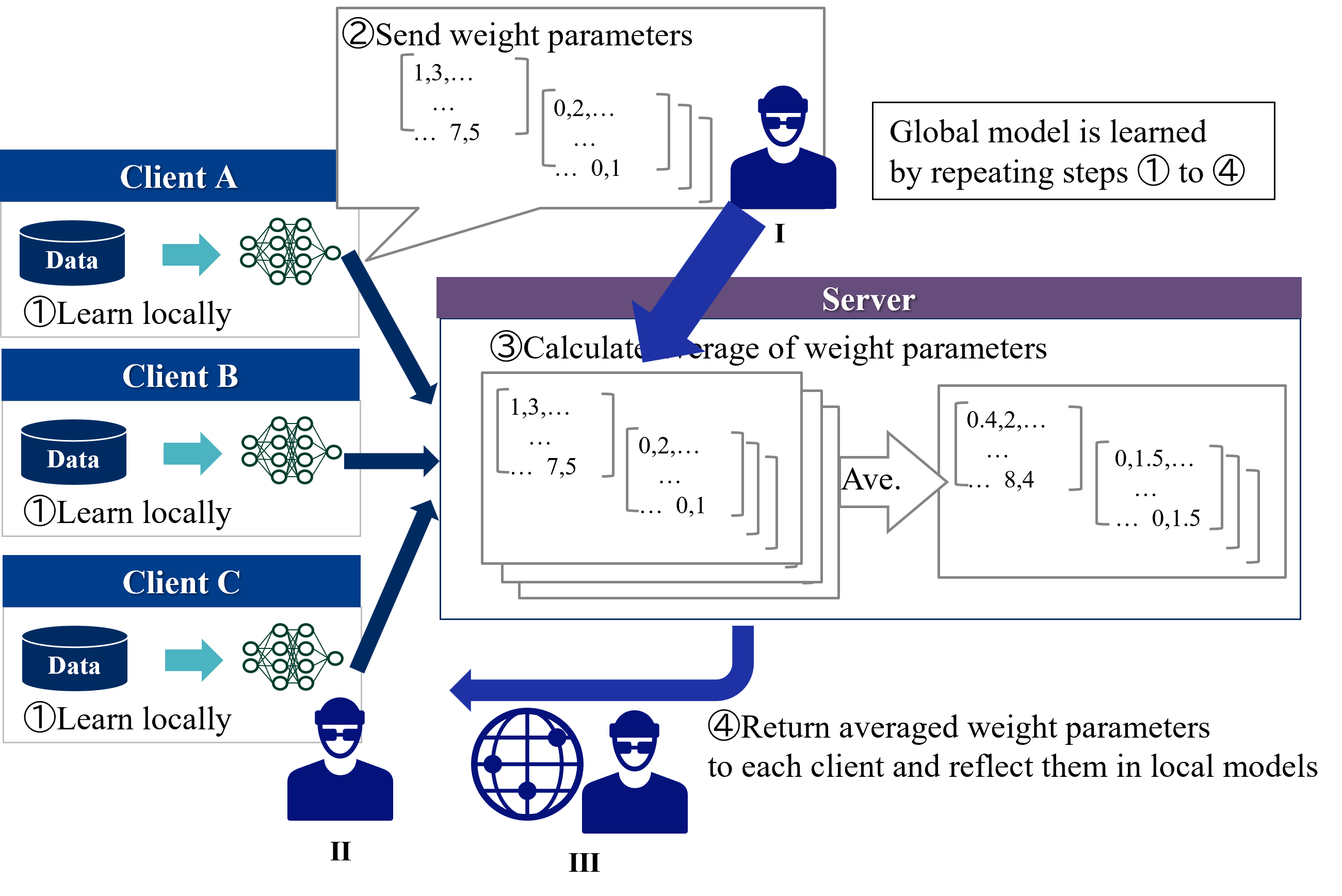

### Overview

The image is a technical diagram illustrating the four-step cycle of a federated learning system. It shows how multiple clients (Client A, Client B, Client C) collaboratively train a shared global model without centralizing their local data. The cycle consists of local training, sending weight parameters to a server, averaging them there, and returning the averaged parameters to every client.

### Components

The diagram is organized into three main spatial regions:

1. **Left Column (Clients):** Three vertically stacked client blocks (Client A, Client B, Client C). Each block contains:

* A blue cylinder labeled **"Data"**.

* A teal arrow pointing right.

* A green neural network icon.

* The text **"①Learn locally"**.

2. **Center (Server):** A large central block labeled **"Server"** in a purple header. It contains:

* The text **"③Calculate average of weight parameters"**.

* A stack of three matrices on the left, representing received parameters.

* A large arrow labeled **"Ave."** pointing right.

* A single matrix on the right, representing the averaged result.

3. **Right Side & Flow Arrows:**

* A text box in the top-right states: **"Global model is learned by repeating steps ① to ④"**.

* A large blue arrow labeled **"②Send weight parameters"** points from the clients up to the server. A callout bubble from this arrow shows example matrix values: `[1,3,... ... 7,5]` and `[0,2,... ... 0,1]`.

* A large blue arrow labeled **"④Return averaged weight parameters to each client and reflect them in local models"** points from the server back to the clients.

* Three stylized human icons with Roman numerals:

    * **I** (top-right, near the step ② arrow).

    * **II** (bottom-left, below Client C).

    * **III** (bottom-center, next to a globe icon).

### Detailed Analysis

The process flow is explicitly numbered:

* **Step ① (Learn locally):** Each client (A, B, C) uses its local **"Data"** to train its own neural network model. The data does not leave the client.

* **Step ② (Send weight parameters):** Each client sends its locally updated model parameters (weights) to the central **Server**. The diagram provides example parameter values in matrix notation: `[1,3,... ... 7,5]` and `[0,2,... ... 0,1]`.

* **Step ③ (Calculate average of weight parameters):** The **Server** collects the parameter sets from all clients. It then computes an average (indicated by **"Ave."**) of these parameters. The output is a single, averaged parameter set shown as `[0.4,2,... ... 8,4]` and `[0,1.5,... ... 0,1.5]`.

* **Step ④ (Return averaged weight parameters...):** The server sends the newly computed global model parameters back to all clients. Each client then updates its local model with these averaged parameters.

* **Iteration:** The text box confirms this is a cyclic process: **"Global model is learned by repeating steps ① to ④"**.
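
The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the system the diagram depicts: the two-weight linear model, the function names `local_train`, `average_weights`, and `run_round`, the learning rate, and the three small datasets are all hypothetical and chosen only to keep the example short.

```python
# Minimal federated-averaging sketch (illustrative; not taken from the diagram).
# Hypothetical setup: each client fits y = w0 + w1 * x on its own private data.

def local_train(weights, data, lr=0.05, epochs=20):
    """Step 1: update a copy of the weights using only this client's data."""
    w0, w1 = weights
    n = len(data)
    for _ in range(epochs):
        g0 = g1 = 0.0
        for x, y in data:
            err = (w0 + w1 * x) - y
            g0 += err
            g1 += err * x
        w0 -= lr * g0 / n
        w1 -= lr * g1 / n
    return [w0, w1]

def average_weights(client_weights):
    """Step 3: element-wise mean of the weight vectors received from clients."""
    k = len(client_weights)
    return [sum(ws) / k for ws in zip(*client_weights)]

def run_round(global_weights, client_datasets):
    # Steps 1-2: each client trains locally and sends back only its weights.
    received = [local_train(global_weights, data) for data in client_datasets]
    # Step 3: the server averages the received weights.
    # Step 4: the average is what every client starts from in the next round.
    return average_weights(received)

# Made-up private datasets for Client A, B, and C, all lying on y = 1 + 2x.
client_datasets = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0)],
    [(-1.0, -1.0), (0.5, 2.0)],
]

global_weights = [0.0, 0.0]
for _ in range(100):  # "repeating steps 1 to 4"
    global_weights = run_round(global_weights, client_datasets)
# global_weights ends up close to [1.0, 2.0], the line all three datasets share.
```

Note that `run_round` never touches another client's `(x, y)` pairs on the server side: only the two-element weight lists cross the client boundary, which mirrors the separation the diagram draws between the **"Data"** cylinders and the **Server**.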

### Key Observations

1. **Data Privacy:** The core principle is visually emphasized: raw **"Data"** remains within each client's cylinder. Only model parameters (weights) are transmitted.

2. **Centralized Aggregation:** The **Server** acts solely as an aggregator. It does not possess or train on raw data; it only computes the average of received parameters.

3. **Homogeneous Model Architecture:** The identical neural network icons for all clients and the server's averaging operation imply all clients are training the same model architecture.

4. **Parameter Example Discrepancy:** The example parameters sent by clients (`[1,3,...]`) differ from the averaged result (`[0.4,2,...]`), illustrating that aggregation changes the values. The callout shows only sample values, so the averaged result cannot be recomputed from the inputs shown and is best read as illustrative.

5. **Human/System Icons:** The diagram does not explain the icons labeled **I**, **II**, and **III**. They may represent different roles or system components (for example, **I**: Client Device, **II**: Local User, **III**: Global Coordinator/Network), but this reading is a guess.
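
The server's averaging operation can be written compactly. As a sketch, assume $K$ clients (three in the diagram) and let $W_k$ denote the weight matrix sent by client $k$; these symbols are introduced here for illustration and do not appear in the image.

$$
\bar{W} = \frac{1}{K} \sum_{k=1}^{K} W_k,
\qquad
\bar{W}_{ij} = \frac{1}{K} \sum_{k=1}^{K} (W_k)_{ij}
$$

The diagram's label describes a plain average. Some federated averaging schemes instead weight each client by the size of its local dataset; nothing in the image indicates whether that is done here.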

### Interpretation

This diagram succinctly explains the federated learning paradigm, a machine learning approach for training on distributed data while keeping that data private.

* **What it demonstrates:** It shows a decentralized training workflow in which clients improve a shared model collaboratively. The "global model" evolves through repeated rounds of parameter averaging, learning patterns from diverse, isolated datasets while the data stays where it is.

* **Relationships:** The clients are peers contributing to a common goal. The server is a facilitator, not a data holder. The arrows define a clear, closed-loop communication protocol.

* **Notable Implications:**

    * **Privacy-Preserving:** The primary benefit is keeping sensitive data on local devices.

    * **Communication Efficiency:** Only model updates (which can be compressed) are sent, not raw data.

    * **Iterative Convergence:** The note about repeating steps highlights that the global model improves gradually over many cycles.

    * **Assumption of Homogeneity:** The diagram assumes all clients can train the same model structure, which may not hold in more complex, real-world scenarios with heterogeneous devices.
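
The homogeneity assumption can be made concrete: element-wise averaging is only defined when every client sends parameters of the same shape. The sketch below is a hypothetical illustration of that constraint; the names `shape_of` and `average_matrices` and the example values are assumptions made for this example, not anything shown in the diagram.

```python
def shape_of(matrix):
    """Describe a list-of-lists matrix by the length of each of its rows."""
    return tuple(len(row) for row in matrix)

def average_matrices(matrices):
    """Element-wise mean of client matrices; rejects mismatched shapes."""
    shapes = {shape_of(m) for m in matrices}
    if len(shapes) != 1:
        raise ValueError(f"cannot average mismatched shapes: {sorted(shapes)}")
    k = len(matrices)
    # zip(*matrices) pairs up corresponding rows; zip(*rows) pairs up elements.
    return [[sum(vals) / k for vals in zip(*rows)] for rows in zip(*matrices)]

# Three clients with the same (hypothetical) 2x2 layer: averaging works.
same_architecture = [
    [[1, 3], [0, 2]],
    [[2, 1], [0, 1]],
    [[0, 2], [3, 0]],
]
averaged = average_matrices(same_architecture)

# A client with a different layer width cannot take part in a plain average.
try:
    average_matrices([[[1, 2]], [[1, 2, 3]]])
    mismatch_rejected = False
except ValueError:
    mismatch_rejected = True
```

Supporting heterogeneous devices would need something beyond this plain average, which is outside what the diagram covers.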

The diagram serves as a high-level conceptual map for understanding how federated learning systems operate, emphasizing the sequence of operations and the flow of information (parameters) rather than specific algorithms or mathematical details.