## Diagram: Recurrent Neural Network Cell Structure

### Overview

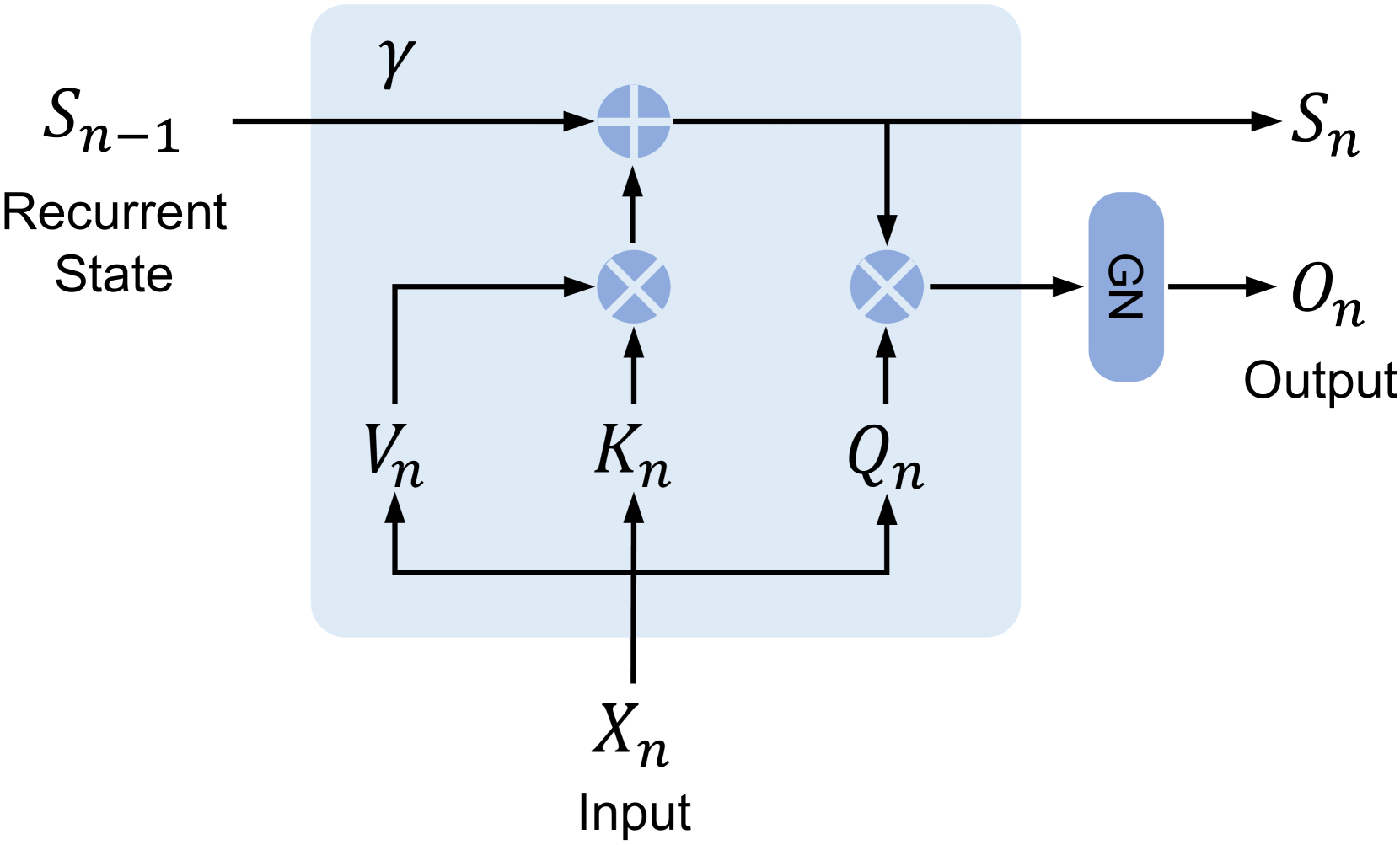

This diagram illustrates a computational cell within a recurrent neural network (RNN). It depicts the flow of information and the operations performed at a specific time step 'n', taking into account the previous state 'S_{n-1}' and the current input 'X_n'. The cell produces a new recurrent state 'S_n' and an output 'O_n'.

### Components/Axes

The diagram does not feature traditional axes or legends as it is a schematic representation of a computational process. The key components and their labels are:

* **S_{n-1}**: Represents the recurrent state from the previous time step (n-1). It is shown as an input on the left side of the diagram.

* **Recurrent State**: A textual label accompanying 'S_{n-1}', clarifying its meaning.

* **X_n**: Represents the input at the current time step 'n'. It is shown as an input at the bottom of the diagram.

* **Input**: A textual label accompanying 'X_n', clarifying its meaning.

* **V_n**: The value vector, derived from 'X_n' (following the usual query/key/value naming). It enters the multiplication (⊗) with 'K_n'.

* **K_n**: The key vector, derived from 'X_n'. Its product with 'V_n' is the input-dependent term added to the recurrent state.

* **Q_n**: The query vector, derived from 'X_n'. It multiplies the recurrent state (⊗) to produce the read-out that becomes the output.

* **γ (gamma)**: A decay factor applied to the recurrent state 'S_{n-1}'. The scaled state 'γ·S_{n-1}' is fed into the addition operation (⊕).

* **⊕ (Plus Symbol within a Circle)**: Represents an addition operation.

* **⊗ (Cross Symbol within a Circle)**: Represents a multiplication operation.

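* **GN**: Most likely GroupNorm (group normalization), the normalization used in the RetNet architecture that this diagram resembles. It takes the product of 'Q_n' and the recurrent state and produces the output 'O_n'.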

* **S_n**: Represents the new recurrent state at the current time step 'n'. It is an output of the cell.

* **O_n**: Represents the output at the current time step 'n'. It is an output of the cell.

* **Output**: A textual label accompanying 'O_n', clarifying its meaning.

The entire cell's computation is enclosed within a light blue shaded region.
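The diagram leaves open how 'X_n' becomes 'Q_n', 'K_n' and 'V_n'. A common choice in attention-style models is three learned linear projections, and the sketch below assumes exactly that. The weight matrices `w_q`, `w_k`, `w_v` and the sizes `d_model`, `d_k`, `d_v` are hypothetical and not taken from the diagram.

```python
import numpy as np


def project_input(x_n, w_q, w_k, w_v):
    """Map the current input X_n to (Q_n, K_n, V_n) via linear projections.

    The projection weights are hypothetical; the diagram does not specify
    how X_n is transformed.
    """
    q_n = x_n @ w_q  # query, shape (d_k,)
    k_n = x_n @ w_k  # key, shape (d_k,)
    v_n = x_n @ w_v  # value, shape (d_v,)
    return q_n, k_n, v_n


rng = np.random.default_rng(0)
d_model, d_k, d_v = 8, 4, 6  # illustrative sizes only
x_n = rng.standard_normal(d_model)
w_q = rng.standard_normal((d_model, d_k))
w_k = rng.standard_normal((d_model, d_k))
w_v = rng.standard_normal((d_model, d_v))
q_n, k_n, v_n = project_input(x_n, w_q, w_k, w_v)
print(q_n.shape, k_n.shape, v_n.shape)  # (4,) (4,) (6,)
```

Query and key share the dimension `d_k` so that they can later be combined with the same state; the value dimension `d_v` may differ.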

### Detailed Analysis or Content Details

The diagram illustrates the following computational flow:

1. **Input Processing**: The input 'X_n' is processed to generate the intermediate variables 'V_n', 'K_n', and 'Q_n'. The diagram does not specify this transformation; in attention-style architectures it is typically a learned linear projection of 'X_n'.

2. **State Update Calculation**:

* The previous recurrent state 'S_{n-1}' is scaled by the decay factor 'γ'.

* 'K_n' and 'V_n' are multiplied (⊗ operation). If the state is a matrix, this is the outer product 'K_n^T V_n'.

* The scaled state 'γ·S_{n-1}' is added to the 'K_n'-'V_n' product (⊕ operation), giving 'S_n = γ·S_{n-1} + K_n^T V_n'.

3. **Output Calculation**:

* 'Q_n' is multiplied (⊗ operation) with the updated recurrent state 'S_n' produced by the addition.

* This product is then passed through the "GN" block (most likely group normalization).

* The output of the "GN" component is the final output 'O_n'.

4. **New State Generation**: The result from the addition operation (from step 2) directly becomes the new recurrent state 'S_n'.
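Assuming that 'K_n' and 'V_n' are row vectors combined by an outer product, that 'Q_n' reads out the updated state, and that GN denotes group normalization (as in the recurrent form of retention in RetNet, which this diagram closely matches), the steps above can be written compactly as:

```latex
\begin{aligned}
S_n &= \gamma\, S_{n-1} + K_n^{\top} V_n, && S_n \in \mathbb{R}^{d_k \times d_v},\\
O_n &= \operatorname{GN}\!\left(Q_n S_n\right), && O_n \in \mathbb{R}^{1 \times d_v},
\end{aligned}
```

where Q_n, K_n ∈ ℝ^{1×d_k} and V_n ∈ ℝ^{1×d_v}. The dimension names d_k and d_v are illustrative; the diagram does not give them.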

### Key Observations

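* The diagram represents a modular computational unit. Its structure (a decayed state, updated by a key-value product and read out by a query) closely matches the recurrent form of the retention mechanism in Retentive Networks (RetNet), rather than a Gated Recurrent Unit (GRU) or Long Short-Term Memory (LSTM) cell, which rely on input-dependent sigmoid gates instead.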

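* '⊕' is an element-wise sum of two equally shaped states. The meaning of '⊗' depends on its operands: for row vectors 'K_n' (dimension d_k) and 'V_n' (dimension d_v), 'K_n^T V_n' is an outer product yielding a d_k × d_v matrix state, while multiplying 'Q_n' by that matrix is a vector-matrix product yielding a d_v-dimensional vector.

* The "GN" block processes the read-out before it becomes 'O_n'. If GN denotes group normalization, as in RetNet, it rescales the read-out rather than gating it.

* The diagram separates the state update ('γ', 'K_n', 'V_n', ⊕) from the read-out path ('Q_n', GN) that produces the observable output 'O_n'. The read-out uses the state but does not modify it.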
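A minimal NumPy sketch of one time step makes the shape observations concrete. It assumes that ⊗ on 'K_n' and 'V_n' is an outer product, that 'Q_n' multiplies the updated state, and that GN is a simplified group normalization without learned scale and shift. `recurrent_step` and `group_norm` are hypothetical helper names, and the sizes are arbitrary.

```python
import numpy as np


def group_norm(x, num_groups=1, eps=1e-6):
    """Simplified group normalization of a 1-D vector (no learned scale/shift)."""
    groups = x.reshape(num_groups, -1)
    mean = groups.mean(axis=1, keepdims=True)
    var = groups.var(axis=1, keepdims=True)
    return ((groups - mean) / np.sqrt(var + eps)).reshape(x.shape)


def recurrent_step(s_prev, q_n, k_n, v_n, gamma):
    """One time step of the cell; returns (S_n, O_n)."""
    s_n = gamma * s_prev + np.outer(k_n, v_n)  # decay old state, add K_n^T V_n
    o_n = group_norm(q_n @ s_n)                # read out with Q_n, then normalize
    return s_n, o_n


d_k, d_v = 4, 6  # illustrative sizes only
rng = np.random.default_rng(1)
s_prev = np.zeros((d_k, d_v))  # empty state before the first step
q_n = rng.standard_normal(d_k)
k_n = rng.standard_normal(d_k)
v_n = rng.standard_normal(d_v)
s_n, o_n = recurrent_step(s_prev, q_n, k_n, v_n, gamma=0.9)
print(s_n.shape, o_n.shape)  # (4, 6) (6,)
```

Because `s_prev` is all zeros here, `s_n` equals `np.outer(k_n, v_n)`. Feeding `s_n` back in as `s_prev` on the next call continues the recurrence, while `o_n` is only read out and never fed back.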

### Interpretation

This diagram outlines a specific type of recurrent neural network cell that processes sequential data. The structure suggests a mechanism for selectively updating and propagating information through time.

* **Information Flow and Memory**: The recurrent state 'S_{n-1}' acts as a form of memory, carrying information from previous time steps. 'γ' determines how much of the past state is retained at each step, while 'K_n' and 'V_n' determine what the current input writes into the state. The addition combines the decayed past state with this newly computed input term, so after unrolling the recurrence, the contribution of an input from k steps earlier is scaled by γ^k.

* **Gating Mechanisms**: The multiplications play a role similar to the gates in GRU or LSTM cells, which control the flow of information and help the network learn long-term dependencies by mitigating vanishing gradients. Multiplying 'S_{n-1}' by 'γ' before the addition acts as a form of forgetting: with 0 < γ < 1, older contributions shrink geometrically. Unlike LSTM gates, 'γ' is not drawn as derived from 'X_n', so this forgetting rate does not depend on the current input. The multiplication with 'Q_n' selects what is read from the internal state, and "GN" then normalizes that read-out to give 'O_n'.

* **Purpose**: Such a cell is designed to learn patterns in sequential data, where the output at any time step depends not only on the current input but also on the history of inputs. The specific arrangement of operations determines which temporal dependencies the cell can model. For instance, 'γ' acts as a data-independent forget gate; in RetNet each head uses its own fixed decay rate rather than a learned or input-dependent value. If 'V_n', 'K_n', and 'Q_n' are learned transformations of 'X_n', then 'K_n' and 'V_n' control what is written into the state and 'Q_n' controls what is read out, roles loosely analogous to input and output gates. The "GN" block most likely normalizes the final read-out.
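The memory interpretation can be checked numerically. Unrolling 'S_n = γ·S_{n-1} + K_n^T V_n' from a zero initial state gives 'S_n = Σ_{m ≤ n} γ^{n-m} K_m^T V_m', so each past input is weighted by a power of 'γ'. The sketch below runs the recurrence and compares it against this closed form; the helper names, the random data, and the value γ = 0.8 are arbitrary choices for illustration, and the outer-product reading of ⊗ is an assumption.

```python
import numpy as np


def run_recurrence(keys, values, gamma):
    """Apply S_n = gamma * S_{n-1} + K_n^T V_n over a sequence, starting from zeros."""
    state = np.zeros((keys.shape[1], values.shape[1]))
    states = []
    for k_n, v_n in zip(keys, values):
        state = gamma * state + np.outer(k_n, v_n)
        states.append(state)
    return states


def unrolled_state(keys, values, gamma, n):
    """Closed form S_n = sum over m <= n of gamma**(n - m) * K_m^T V_m (0-indexed)."""
    return sum(gamma ** (n - m) * np.outer(keys[m], values[m]) for m in range(n + 1))


rng = np.random.default_rng(2)
seq_len, d_k, d_v, gamma = 5, 3, 4, 0.8
keys = rng.standard_normal((seq_len, d_k))
values = rng.standard_normal((seq_len, d_v))
states = run_recurrence(keys, values, gamma)
print(np.allclose(states[-1], unrolled_state(keys, values, gamma, seq_len - 1)))  # True
```

With γ = 0.8, a contribution written 10 steps earlier is scaled by 0.8^10 ≈ 0.107, which is how a constant 'γ' acts as a steady forgetting rate.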

In essence, this diagram provides a blueprint for a sophisticated computational unit capable of processing sequential information by maintaining an internal state and dynamically modulating the influence of past information and current inputs on both the state update and the output.