## Diagram: Simple Neural Network Model and Memory Allocation

### Overview

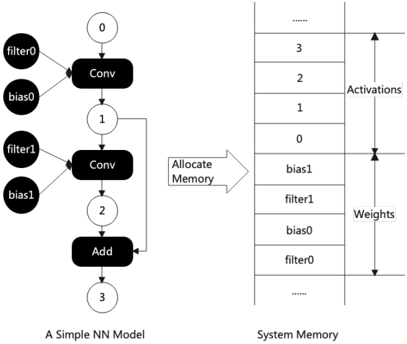

The diagram shows a simple neural network (NN) model and the memory allocated for it. The left side depicts the model's architecture: two convolutional layers and an addition layer, each convolution with its own filter and bias. The right side represents system memory and shows where activations and weights are stored. An arrow labeled "Allocate Memory" points from the NN model to system memory and denotes the allocation step.

### Components

* **Left Side: A Simple NN Model**

* Nodes: Represented by circles numbered 0 to 3. Each node is an activation tensor, and the same numbers reappear in the "Activations" section of system memory.

* Layers: Convolutional layers ("Conv") and an addition layer ("Add") are represented by black rectangles.

* Inputs: "filter0", "bias0", "filter1", "bias1" are represented by black circles.

* Connections: Arrows indicate the flow of data between layers and inputs.

* **Right Side: System Memory**

* Memory Blocks: Represented by stacked rectangles, each containing a value or label.

* Activations: Labeled section of memory containing values 0, 1, 2, 3, and "...".

* Weights: Labeled section of memory containing "filter0", "bias0", "filter1", "bias1", and "...".

* Allocation Arrow: An arrow pointing from the NN model to the system memory, labeled "Allocate Memory".

### Detailed Analysis

* **NN Model Architecture:**

* Node 0: Input to the first convolutional layer ("Conv").

* Inputs to the first "Conv" layer are "filter0" and "bias0".

* Node 1: Output of the first "Conv" layer, input to the second "Conv" layer.

* Inputs to the second "Conv" layer are "filter1" and "bias1".

* Node 2: Output of the second "Conv" layer, input to the "Add" layer.

* The output of the first "Conv" layer (Node 1) is also a second input to the "Add" layer, bypassing the second "Conv" layer (a skip connection).

* Node 3: Output of the "Add" layer.

* **Memory Allocation:**

* The "Allocate Memory" arrow indicates that the NN model's parameters and intermediate results are stored in the system memory.

* The system memory is divided into two sections: "Activations" and "Weights".

* "Activations" holds the tensors that flow through the model: the input (0), the intermediate results (1, 2), and the output (3).

* "Weights" store the model's parameters ("filter0", "bias0", "filter1", "bias1").

* The order of storage in the "Weights" section is "filter0", "bias0", "filter1", "bias1" from bottom to top.
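The allocation described above can be sketched in Python. This is a minimal illustration, not the allocator of any particular runtime: the `Op` class, the `allocate` function, and every byte size in `SIZES` are hypothetical, since the diagram gives no tensor shapes. Each region keeps its own running offset, so the sketch makes no claim about where the two regions sit relative to each other.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Op:
    kind: str        # "Conv" or "Add"
    inputs: tuple    # ids of the activation tensors the op reads
    weights: tuple   # names of the constant inputs (filters, biases)
    output: int      # id of the activation tensor the op writes


# The graph as described: 0 -> Conv -> 1 -> Conv -> 2, then Add(1, 2) -> 3.
MODEL = [
    Op("Conv", (0,), ("filter0", "bias0"), 1),
    Op("Conv", (1,), ("filter1", "bias1"), 2),
    Op("Add", (1, 2), (), 3),
]

# Hypothetical sizes in bytes; the diagram does not specify any.
SIZES = {
    0: 64, 1: 64, 2: 64, 3: 64,
    "filter0": 36, "bias0": 4,
    "filter1": 36, "bias1": 4,
}


def allocate(model, sizes):
    """Assign each tensor an offset inside its own region.

    Returns two dicts, one per region, mapping a tensor id or weight
    name to its byte offset from the start of that region.
    """
    activations, weights = {}, {}
    act_offset = weight_offset = 0
    for op in model:
        for tensor in (*op.inputs, op.output):
            if tensor not in activations:
                activations[tensor] = act_offset
                act_offset += sizes[tensor]
        for name in op.weights:
            if name not in weights:
                weights[name] = weight_offset
                weight_offset += sizes[name]
    return activations, weights


activations, weights = allocate(MODEL, SIZES)
print(activations)
print(weights)
```

Under these assumed sizes, every activation and every weight receives a distinct, non-overlapping slot, and the weights come out in the order "filter0", "bias0", "filter1", "bias1".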

### Key Observations

* The diagram illustrates a feedforward neural network with two convolutional layers followed by an addition layer.

* The memory allocation scheme shows a clear separation between activations and weights.

* The second edge out of Node 1, which bypasses the second "Conv" layer and enters the "Add" layer directly, is a skip (residual-style) connection. It points forward, so the graph stays acyclic and is not recurrent.
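One way to tell a skip connection from a recurrent one is to check whether every operation reads only tensors that were produced earlier in the execution order. The sketch below runs that check on the graph as described and also records the last operation that reads each activation. The names `OPS`, `is_feedforward`, and `last_use` are hypothetical, and the code assumes that tensor 0 is the graph input and that the operations are listed in execution order.

```python
# (op kind, ids of activations read, id of activation written), in execution order.
OPS = [
    ("Conv", (0,), 1),
    ("Conv", (1,), 2),
    ("Add", (1, 2), 3),
]


def is_feedforward(ops, graph_inputs=(0,)):
    """Return True if every op reads only tensors that already exist."""
    available = set(graph_inputs)
    for _kind, inputs, output in ops:
        if not set(inputs) <= available:
            return False  # reads a tensor that is produced later: a cycle
        available.add(output)
    return True


def last_use(ops):
    """Map each activation id to the index of the last op that reads it."""
    last = {}
    for step, (_kind, inputs, _output) in enumerate(ops):
        for tensor in inputs:
            last[tensor] = step
    return last


print(is_feedforward(OPS))  # True
print(last_use(OPS))        # {0: 0, 1: 2, 2: 2}
```

Tensor 1 is last read at step 2, the "Add", so an allocator that reuses buffers would have to keep it alive while the second "Conv" runs. The diagram itself shows one slot per activation and does not indicate any reuse.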

### Interpretation

The diagram gives a simplified view of how a neural network model is mapped onto memory. The "Allocate Memory" arrow marks the step that assigns memory to the model's parameters (weights) and to its input, intermediate, and output tensors (activations). Keeping activations and weights in separate regions is common practice in neural network implementations. The skip connection from Node 1 into the "Add" layer makes the model slightly more than a plain chain of layers, in the style of a residual connection, but the model remains feedforward: nothing in the diagram indicates recurrence. Overall, the diagram ties the model's architecture to its memory footprint, which matters for efficient implementation and deployment.