## Diagram: Convolutional Neural Network Architecture Transformation

### Overview

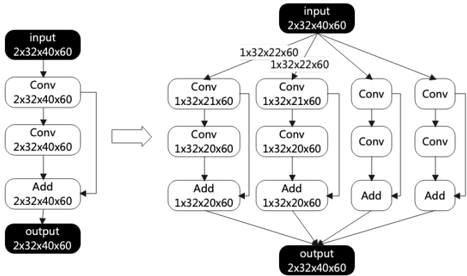

The image depicts a transformation in a convolutional neural network (CNN) architecture. The left side shows a simple sequential structure, while the right side illustrates a more complex, branched architecture, possibly representing an Inception-like module. The diagram uses blocks to represent layers and arrows to indicate the flow of data.

### Components/Axes

* **Input (Left):** Black rounded rectangle labeled "input" with dimensions "2x32x40x60".

* **Conv (Left):** White rounded rectangle labeled "Conv" with dimensions "2x32x40x60". This appears twice in sequence.

* **Add (Left):** White rounded rectangle labeled "Add" with dimensions "2x32x40x60".

* **Output (Left):** Black rounded rectangle labeled "output" with dimensions "2x32x40x60".

* **Arrow:** A right-pointing arrow indicates the transformation from the left architecture to the right architecture.

* **Input (Right):** Black rounded rectangle labeled "input" with dimensions "2x32x40x60".

* **Conv (Right):** White rounded rectangles labeled "Conv" with varying dimensions: "1x32x21x60" (appears twice), and other unlabeled "Conv" blocks.

* **Add (Right):** White rounded rectangles labeled "Add" with dimensions "1x32x20x60" (appears twice), and other unlabeled "Add" blocks.

* **Output (Right):** Black rounded rectangle labeled "output" with dimensions "2x32x40x60".

* **Branching Connections (Right):** The right side shows the input splitting into multiple parallel convolutional paths, whose results are then combined via addition operations before producing the final output.

* **Dimension Labels:** Dimensions are indicated above the connections between the input and the first layer of Conv blocks on the right side: "1x32x22x60" (appears twice).

### Detailed Analysis

**Left Side (Sequential Architecture):**

1. **Input:** The input layer has dimensions 2x32x40x60.

2. **Conv Layer 1:** A convolutional layer with dimensions 2x32x40x60 follows the input.

3. **Conv Layer 2:** Another convolutional layer with dimensions 2x32x40x60 follows the first convolutional layer.

4. **Add Layer:** An addition layer with dimensions 2x32x40x60 takes the output of the second convolutional layer as one operand; its other operand is not identified in this description.

5. **Output:** The output layer has dimensions 2x32x40x60.
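
The shape bookkeeping for the sequential path can be checked with a few lines of arithmetic. The sketch below is only an illustration: the diagram does not state kernel size, stride, or padding, so the 3x3 kernel, stride 1, and padding 1 used here are assumptions chosen because they keep 2x32x40x60 unchanged, and the helper names `conv_out_size` and `conv_out_shape` are hypothetical.

```python
def conv_out_size(size, kernel, stride=1, pad_before=0, pad_after=0):
    """Output length along one spatial axis of a convolution."""
    return (size + pad_before + pad_after - kernel) // stride + 1


def conv_out_shape(shape, out_channels, kernel=3, stride=1, pad=1):
    """Output shape (N, C, H, W) of a 2D convolution with symmetric padding.

    kernel=3, stride=1, pad=1 are ASSUMED values, not read from the diagram.
    """
    n, _, h, w = shape
    return (
        n,
        out_channels,
        conv_out_size(h, kernel, stride, pad, pad),
        conv_out_size(w, kernel, stride, pad, pad),
    )


input_shape = (2, 32, 40, 60)                              # "input" block
conv1_shape = conv_out_shape(input_shape, out_channels=32)
conv2_shape = conv_out_shape(conv1_shape, out_channels=32)
add_shape = conv2_shape                                    # elementwise Add keeps the shape

for name, shape in [("input", input_shape), ("Conv 1", conv1_shape),
                    ("Conv 2", conv2_shape), ("Add", add_shape)]:
    print(f"{name:7s} {'x'.join(map(str, shape))}")
```

Under these assumed hyperparameters every block reports 2x32x40x60, matching the labels on the left side of the diagram.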

**Right Side (Branched Architecture):**

1. **Input:** The input layer has dimensions 2x32x40x60.

2. **Branching:** The input splits into multiple paths. Two paths are explicitly labeled with dimensions "1x32x22x60".

3. **Convolutional Paths:**

    * Path 1: Conv (1x32x21x60) -> Conv (1x32x20x60) -> Add (1x32x20x60)

    * Path 2: Conv (1x32x21x60) -> Conv (1x32x20x60) -> Add (1x32x20x60)

    * Path 3: Conv -> Conv -> Add

    * Path 4: Conv -> Conv -> Add

4. **Addition Layers:** The outputs of the convolutional paths are combined using addition layers.

5. **Output:** The output layer has dimensions 2x32x40x60.
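
The labeled heights along Paths 1 and 2 (22, then 21, then 20) can be reproduced with simple convolution arithmetic. The following is a sketch under stated assumptions, not a reading of the diagram: it assumes a 3x3 kernel, stride 1, one row of padding on only one side of the height axis, and symmetric padding of 1 on the width axis. None of these values appear in the diagram, and `one_sided_conv_shape` is a hypothetical helper.

```python
def conv_out_size(size, kernel, stride=1, pad_before=0, pad_after=0):
    """Output length along one spatial axis of a convolution."""
    return (size + pad_before + pad_after - kernel) // stride + 1


def one_sided_conv_shape(shape, kernel=3):
    """Shape after a conv that pads height on one side only (ASSUMPTION).

    Width is padded symmetrically so it stays at 60, as in the labels.
    """
    n, c, h, w = shape
    new_h = conv_out_size(h, kernel, pad_before=1, pad_after=0)
    new_w = conv_out_size(w, kernel, pad_before=1, pad_after=1)
    return (n, c, new_h, new_w)


branch_input = (1, 32, 22, 60)                   # label on the branch connection
conv1_shape = one_sided_conv_shape(branch_input)
conv2_shape = one_sided_conv_shape(conv1_shape)
add_shape = conv2_shape                          # elementwise Add keeps the shape

heights = [s[2] for s in (branch_input, conv1_shape, conv2_shape, add_shape)]
print(heights)
```

Other hyperparameter choices could produce the same labels; this only shows that one ordinary configuration is consistent with them.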

### Key Observations

* The diagram illustrates a transformation from a simple sequential CNN architecture to a more complex branched architecture.

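* The branched architecture on the right side resembles an Inception module in that the input is processed through multiple parallel convolutional paths. The two labeled paths carry identical dimensions, however, so the diagram itself does not show that the paths use different filter sizes.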

* On the right side, the dimensions change as the data flows through each path (height 22 -> 21 -> 20), which is consistent with convolutions that each trim one row. No pooling block appears among the listed components.

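* The addition layers likely serve to combine intermediate results within each path. An elementwise addition preserves shape, so recovering the 2x32x40x60 output from 1x32x20x60 results would also require a concatenation-style merge, which the description does not identify explicitly.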
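
Addition and concatenation behave differently with respect to shape, and that difference matters when reading the output label. The sketch below works on shape tuples only. The helpers `add_result_shape` and `concat_result_shape` are hypothetical, and the choice to merge along height (axis 2) and then batch (axis 0) is an assumption made so that the element counts line up; the diagram does not specify how the paths are merged.

```python
from math import prod


def add_result_shape(a, b):
    """Elementwise addition (no broadcasting): both shapes must match."""
    if a != b:
        raise ValueError(f"shape mismatch: {a} vs {b}")
    return a


def concat_result_shape(shapes, axis):
    """Concatenation: all axes must match except `axis`, which is summed."""
    first = shapes[0]
    for s in shapes[1:]:
        same_rank = len(s) == len(first)
        if not same_rank or any(
            s[i] != first[i] for i in range(len(first)) if i != axis
        ):
            raise ValueError(f"incompatible shapes: {first} vs {s}")
    total = sum(s[axis] for s in shapes)
    return first[:axis] + (total,) + first[axis + 1:]


tile = (1, 32, 20, 60)      # labeled result of each path
target = (2, 32, 40, 60)    # labeled "output" block

summed = add_result_shape(tile, tile)                                   # stays 1x32x20x60
stacked_height = concat_result_shape([tile, tile], axis=2)              # 1x32x40x60
merged = concat_result_shape([stacked_height, stacked_height], axis=0)  # 2x32x40x60

tiles_needed = prod(target) // prod(tile)
print(summed, merged, tiles_needed)
```

Four tiles of 1x32x20x60 hold exactly as many elements as one 2x32x40x60 tensor, which matches the four paths listed for the right side.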

### Interpretation

The diagram shows a sequential structure being replaced by a branched one, a restructuring that is common in CNN design. If the right side is an Inception-like module, its parallel paths would let the network learn features at multiple scales, which can benefit tasks such as image classification and object detection. The transformation suggests an optimization or evolution of the network architecture. The change in dimensions from 2x32x40x60 to 1x32x22x60 affects both the batch dimension (2 to 1) and the height (40 to 22); filter size and stride alone would not change the batch dimension, so the input appears to be sliced before it enters the branches. The subsequent height changes within each path (22 to 21 to 20) reflect the convolutional layers. The addition layers likely combine feature maps within the paths, and the path results must then be merged to restore the 2x32x40x60 output.