## Bar Chart: Model Accuracy Across Academic Disciplines

### Overview

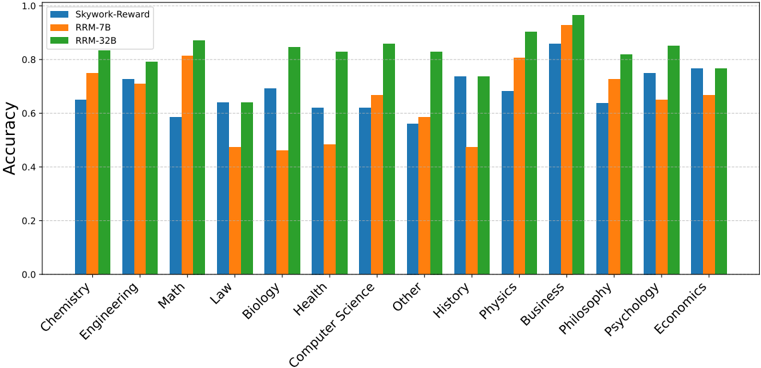

The chart compares the accuracy of three AI models (Skywork-Reward, RRM-7B, RRM-32B) across 14 academic disciplines. Accuracy is measured on a scale from 0.0 to 1.0, with higher values indicating better performance. Grouped bars show how the three models differ within each discipline.

### Components/Axes

- **X-axis**: Academic disciplines (Chemistry, Engineering, Math, Law, Biology, Health, Computer Science, Other, History, Physics, Business, Philosophy, Psychology, Economics)

- **Y-axis**: Accuracy (0.0 to 1.0 in increments of 0.2)

- **Legend**:
  - Blue: Skywork-Reward
  - Orange: RRM-7B
  - Green: RRM-32B

- **Bar Structure**: Three bars per discipline (one per model), grouped by discipline
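The grouped layout described above can be sketched as a small position calculation. This is a minimal illustration, not the code that produced the figure: the function name `grouped_bar_positions` and the `group_width` of 0.8 are assumptions chosen for the example.

```python
def grouped_bar_positions(n_groups, n_series, group_width=0.8):
    """Compute x-centers for a grouped bar chart.

    Group g is centered at x = g. Each group holds n_series bars that
    together span group_width, so neighboring groups do not touch.
    Returns (bar_width, positions), where positions[s][g] is the
    x-center of series s inside group g.
    """
    bar_width = group_width / n_series
    positions = []
    for s in range(n_series):
        # Shift each series left or right of the group center.
        offset = (s - (n_series - 1) / 2) * bar_width
        positions.append([g + offset for g in range(n_groups)])
    return bar_width, positions


# 14 disciplines, 3 models (hypothetical layout parameters).
bar_width, positions = grouped_bar_positions(n_groups=14, n_series=3)
```

With three series, the middle series sits on the group center and the other two sit one bar width to either side. The resulting centers and width could be passed to a plotting call such as matplotlib's `Axes.bar(x, height, width)`.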

### Detailed Analysis

All values are approximate readings from the chart.

| Discipline | Skywork-Reward (blue) | RRM-7B (orange) | RRM-32B (green) |
|---|---|---|---|
| Chemistry | ~0.65 | ~0.75 | ~0.85 |
| Engineering | ~0.72 | ~0.70 | ~0.80 |
| Math | ~0.58 | ~0.82 | ~0.88 |
| Law | ~0.64 | ~0.48 | ~0.65 |
| Biology | ~0.68 | ~0.46 | ~0.85 |
| Health | ~0.62 | ~0.49 | ~0.83 |
| Computer Science | ~0.62 | ~0.67 | ~0.84 |
| Other | ~0.56 | ~0.59 | ~0.83 |
| History | ~0.74 | ~0.47 | ~0.74 |
| Physics | ~0.68 | ~0.80 | ~0.90 |
| Business | ~0.85 | ~0.92 | ~0.95 |
| Philosophy | ~0.64 | ~0.73 | ~0.82 |
| Psychology | ~0.75 | ~0.66 | ~0.83 |
| Economics | ~0.77 | ~0.68 | ~0.77 |

### Key Observations

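- **RRM-32B (green)** scores highest or ties for highest in every discipline. By the estimated values it leads outright in 12 of 14 disciplines and ties Skywork-Reward in History (~0.74) and Economics (~0.77). Its strongest scores are in Business (~0.95), Physics (~0.90), and Math (~0.88).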

- **RRM-7B (orange)** is the least consistent model. Relative to Skywork-Reward, it trails in Law (-0.16), Biology (-0.22), Health (-0.13), and History (-0.27), but leads in Math (+0.24) and in Business (+0.07), where it reaches its highest score (~0.92).

- **Skywork-Reward (blue)** shows mid-range performance across disciplines (~0.56 to ~0.85), with its highest scores in Business (~0.85), Economics (~0.77), and Psychology (~0.75).

- **Economics** is an outlier in that Skywork-Reward matches RRM-32B (both ~0.77) while RRM-7B trails at ~0.68. History shows the same pattern, with a tie at ~0.74 and RRM-7B at ~0.47.
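The pairwise comparisons in these observations can be rechecked from the per-discipline estimates. The sketch below hard-codes the approximate values read off the chart, so its output is only as precise as those readings. The names `ACCURACY`, `gap_7b_vs_skywork`, and `rrm32b_record` are hypothetical helpers introduced for illustration.

```python
# Approximate accuracies read off the chart, per discipline:
# (Skywork-Reward, RRM-7B, RRM-32B). These are visual estimates.
ACCURACY = {
    "Chemistry": (0.65, 0.75, 0.85),
    "Engineering": (0.72, 0.70, 0.80),
    "Math": (0.58, 0.82, 0.88),
    "Law": (0.64, 0.48, 0.65),
    "Biology": (0.68, 0.46, 0.85),
    "Health": (0.62, 0.49, 0.83),
    "Computer Science": (0.62, 0.67, 0.84),
    "Other": (0.56, 0.59, 0.83),
    "History": (0.74, 0.47, 0.74),
    "Physics": (0.68, 0.80, 0.90),
    "Business": (0.85, 0.92, 0.95),
    "Philosophy": (0.64, 0.73, 0.82),
    "Psychology": (0.75, 0.66, 0.83),
    "Economics": (0.77, 0.68, 0.77),
}


def gap_7b_vs_skywork(scores):
    """RRM-7B accuracy minus Skywork-Reward accuracy, per discipline."""
    return {d: round(r7 - sky, 2) for d, (sky, r7, _) in scores.items()}


def rrm32b_record(scores):
    """Split disciplines into outright leads and ties for RRM-32B."""
    leads, ties = [], []
    for d, (sky, r7, r32) in scores.items():
        best_other = max(sky, r7)
        if r32 > best_other:
            leads.append(d)
        elif r32 == best_other:
            ties.append(d)
    return leads, ties


gaps = gap_7b_vs_skywork(ACCURACY)
leads, ties = rrm32b_record(ACCURACY)

# Four largest deficits of RRM-7B relative to Skywork-Reward.
print(sorted(gaps.items(), key=lambda kv: kv[1])[:4])
# Outright leads and ties for RRM-32B.
print(len(leads), ties)
```

Because the inputs are estimates, small gaps such as the 0.01 margin in Law should not be treated as meaningful.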

### Interpretation

The data suggests RRM-32B is the most robust model across academic domains: it is never beaten in any discipline and is strongest in Business and in quantitative fields (Math, Physics). RRM-7B's performance varies dramatically by discipline, from ~0.46 in Biology to ~0.92 in Business, indicating potential specialization gaps. Skywork-Reward maintains steadier mid-tier performance (~0.56 to ~0.85), suggesting balanced but less specialized capabilities. The Business discipline shows the highest accuracy for all three models, possibly reflecting the availability of high-quality training data in this field. The Economics outlier, where Skywork-Reward matches RRM-32B, may indicate unique challenges or data characteristics in that domain.
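One way to put rough numbers on "robust" and "varies dramatically" is to compare each model's mean accuracy and its spread across disciplines. The sketch below uses the approximate chart readings in discipline order (Chemistry through Economics). The summary statistics chosen here (mean, min, max, range) are an assumption for illustration and are not taken from the figure.

```python
from statistics import mean

# Approximate chart readings, one value per discipline, in the order
# Chemistry, Engineering, Math, Law, Biology, Health, Computer Science,
# Other, History, Physics, Business, Philosophy, Psychology, Economics.
SCORES = {
    "Skywork-Reward": [0.65, 0.72, 0.58, 0.64, 0.68, 0.62, 0.62,
                       0.56, 0.74, 0.68, 0.85, 0.64, 0.75, 0.77],
    "RRM-7B": [0.75, 0.70, 0.82, 0.48, 0.46, 0.49, 0.67,
               0.59, 0.47, 0.80, 0.92, 0.73, 0.66, 0.68],
    "RRM-32B": [0.85, 0.80, 0.88, 0.65, 0.85, 0.83, 0.84,
                0.83, 0.74, 0.90, 0.95, 0.82, 0.83, 0.77],
}


def summarize(values):
    """Mean, extremes, and range of one model's per-discipline scores."""
    return {
        "mean": round(mean(values), 3),
        "min": min(values),
        "max": max(values),
        "range": round(max(values) - min(values), 2),
    }


summary = {model: summarize(values) for model, values in SCORES.items()}
for model, stats in summary.items():
    print(model, stats)
```

On these estimates RRM-32B has the highest mean (about 0.82), while RRM-7B has the widest range (0.46, compared with roughly 0.30 for the other two models).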