## Line Graph: Training Loss vs. Steps and Tokens

### Overview

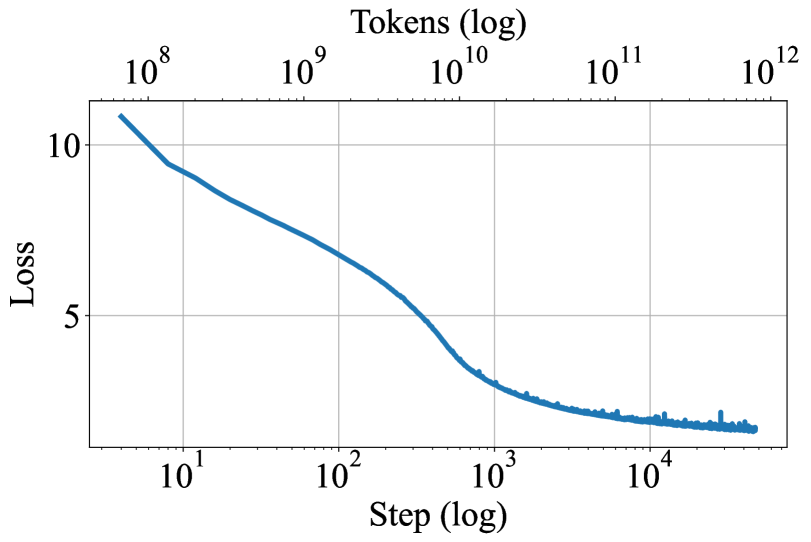

The image displays a line graph plotting a model's training loss against the number of training steps and processed tokens, both on logarithmic scales. The graph shows a clear, decreasing trend in loss as training progresses, with the rate of decrease slowing significantly in later stages.

### Components/Axes

* **Chart Type:** Single-series line graph.

* **Title/Top Axis Label:** "Tokens (log)" - positioned at the top center of the chart.

* **X-Axis (Bottom):** Labeled "Step (log)". It is a logarithmic scale with major tick marks and labels at `10^1`, `10^2`, `10^3`, and `10^4`.

* **X-Axis (Top):** A secondary logarithmic axis labeled "Tokens (log)" with major tick marks and labels at `10^8`, `10^9`, `10^10`, `10^11`, and `10^12`. This axis is aligned with the bottom "Step" axis, indicating a direct relationship between steps and tokens processed.

* **Y-Axis:** Labeled "Loss". It is a linear scale with major tick marks and labels at `5` and `10`. The axis extends slightly below 5 and above 10.

* **Data Series:** A single, solid blue line representing the loss value.

* **Grid:** A light gray grid is present, with vertical lines corresponding to the major x-axis ticks and horizontal lines at y=5 and y=10.

### Detailed Analysis

The blue line demonstrates a consistent downward trend from left to right.

* **Initial Phase (Steps ~5 to 100):** The line begins at a loss value slightly above 10 (approx. 10.5) at a step count just below `10^1`. It descends steeply and relatively smoothly. At step `10^2`, the loss is approximately 7.

* **Middle Phase (Steps ~100 to 1,000):** The descent continues but begins to shallow. The line passes through a loss of approximately 5 at a step count between `10^2` and `10^3` (roughly at step 300-400). At step `10^3`, the loss is approximately 3.5.

* **Late Phase (Steps >1,000):** The curve flattens considerably, showing diminishing returns. The line becomes noticeably noisier, with small, frequent upward spikes. By step `10^4`, the loss has decreased to approximately 2.5. The line continues with a very gradual downward slope and persistent noise until the end of the plotted data, which is slightly beyond step `10^4`.

**Trend Verification:** The visual trend is a classic "learning curve": a rapid initial improvement (steep negative slope) that gradually plateaus (slope approaches zero). The increasing noise in the later phase is also a common characteristic.
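The diminishing returns described above can be put into rough numbers. The following is a minimal sketch that uses only the approximate values read off the graph in this section (loss of about 7, 3.5, and 2.5 at steps `10^2`, `10^3`, and `10^4`). These are eyeballed readings, not exact data, and the helper name `loss_drop_per_decade` is purely illustrative.

```python
import math

# Approximate (step, loss) readings taken from the description above.
# These are eyeballed values, not exact data points.
readings = [(10**2, 7.0), (10**3, 3.5), (10**4, 2.5)]


def loss_drop_per_decade(points):
    """Return the loss decrease per tenfold increase in steps
    for each adjacent pair of (step, loss) points."""
    drops = []
    for (s0, l0), (s1, l1) in zip(points, points[1:]):
        decades = math.log10(s1 / s0)  # horizontal distance on the log axis
        drops.append((l0 - l1) / decades)
    return drops


drops = loss_drop_per_decade(readings)
print(drops)  # [3.5, 1.0]
```

With these readings, the decade from step `10^2` to `10^3` removes about 3.5 units of loss, while the next decade removes only about 1.0, which matches the visual flattening.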

### Key Observations

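1. **Semi-Log Scaling:** The step and token axes are logarithmic, but the loss axis is linear, so this is a semi-log plot rather than a log-log plot. A pure power law would appear as a straight line only on log-log axes. The smooth, decelerating decline seen here is consistent with power-law-like decay toward a floor, but the functional form cannot be confirmed from the plot alone.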

2. **Dual X-Axes:** The alignment of "Step" and "Tokens" implies a fixed or average number of tokens per step. For example, step `10^3` aligns with approximately `10^10` tokens, suggesting ~10 million tokens per step in that region.

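3. **Noise Onset:** The transition from a smooth curve to a noisy line occurs around step `10^3` (loss ~3.5). This could indicate a change in training dynamics, such as a shift in learning rate or the introduction of regularization. It could also be largely a display effect: each decade on the logarithmic x-axis packs in roughly ten times as many steps as the previous one, and the per-step improvement shrinks, so ordinary step-to-step variance becomes more visible.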

4. **Plateau Level:** The loss appears to be approaching an asymptote somewhere between 2 and 2.5. This suggests a possible loss floor under the current training configuration, although the curve is still declining slowly at the end of the plotted range.
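The tokens-per-step arithmetic in observation 2 can be checked directly. This sketch assumes a constant ratio between tokens and steps, anchored at the one alignment point read from the graph (step `10^3` at roughly `10^10` tokens). The function names are hypothetical, and the real ratio may vary over training.

```python
# Alignment point read from the graph (approximate): step 10^3 ~ 10^10 tokens.
STEP_AT_REF = 10**3
TOKENS_AT_REF = 10**10

# Assumption: the number of tokens per step is constant throughout training.
tokens_per_step = TOKENS_AT_REF // STEP_AT_REF


def step_to_tokens(step):
    """Map a step count to cumulative tokens under the constant-ratio assumption."""
    return step * tokens_per_step


def tokens_to_step(tokens):
    """Inverse mapping: cumulative tokens back to a step count."""
    return tokens / tokens_per_step


print(tokens_per_step)          # 10000000, i.e. ~10 million tokens per step
print(step_to_tokens(10**1))    # 100000000 (10^8)
print(step_to_tokens(10**4))    # 100000000000 (10^11)
print(tokens_to_step(10**12))   # 100000.0 (step 10^5)
```

If the ratio really is constant, the bottom ticks `10^1` through `10^4` line up with `10^8` through `10^11` tokens, and the `10^12` tick would correspond to step `10^5`.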

### Interpretation

This graph is a fundamental diagnostic tool for machine learning model training. It visually answers the question: "Is the model learning, and how efficiently?"

* **What it demonstrates:** The model is successfully learning, as evidenced by the consistent reduction in loss (a measure of error) over time. The steep initial drop indicates the model is quickly learning the most obvious patterns in the data.

* **Relationship between elements:** The dual x-axes explicitly link computational effort (steps) to data exposure (tokens). The flattening curve illustrates the principle of diminishing returns in training: each additional order of magnitude in steps/tokens yields a progressively smaller improvement in loss.

* **Notable implications:** The persistent noise in the late stage suggests that step-to-step variance has become comparable to the per-step improvement. Learning rate decay is a common way to reduce this noise when finalizing a model. Training loss alone cannot reveal overfitting; that would require a validation loss curve, which this graph does not show. The flattening suggests that training for more steps with the same hyperparameters would yield only small further gains, although on a logarithmic x-axis a flat-looking tail can still hide a slow decline. A change in strategy (e.g., model architecture, data quality, or optimization algorithm) may be needed to break through the apparent loss floor.
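As a rough illustration of where the apparent loss floor might sit, the sketch below fits the form `L(s) = floor + A * s**(-alpha)` through the three approximate readings from this section (loss of about 7, 3.5, and 2.5 at steps `10^2`, `10^3`, and `10^4`). The functional form is an assumption, not something the graph establishes, and three eyeballed points determine three parameters exactly, so the result carries no measure of fit quality. The function name is hypothetical.

```python
import math


def fit_power_law_with_floor(l1, l2, l3, s1, ratio=10.0):
    """Solve L(s) = floor + A * s**(-alpha) exactly through three points
    at steps s1, s1*ratio, and s1*ratio**2 (equally spaced on a log axis)."""
    d12 = l1 - l2  # loss drop over the first interval
    d23 = l2 - l3  # loss drop over the second interval
    # Successive drops shrink by a factor of ratio**(-alpha).
    alpha = math.log(d12 / d23) / math.log(ratio)
    # d12 = A * s1**(-alpha) * (1 - ratio**(-alpha)), so solve for the decaying term at s1.
    decaying_term_at_s1 = d12 / (1.0 - d23 / d12)
    floor = l1 - decaying_term_at_s1
    amplitude = decaying_term_at_s1 * s1**alpha
    return floor, amplitude, alpha


# Approximate readings from the graph description (not exact data).
floor, amplitude, alpha = fit_power_law_with_floor(7.0, 3.5, 2.5, s1=10**2)
print(round(floor, 3), round(alpha, 3))  # 2.1 0.544
```

Under these assumptions the estimated floor is about 2.1, which falls inside the 2 to 2.5 range noted in the observations. Small changes in the eyeballed readings would shift this estimate noticeably, so it should be treated as an order-of-magnitude check only.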

**Language:** All text in the image is in English.